TECHNICAL ASSET FINGERPRINT

4d43849ec89fea05c1b59bbb

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

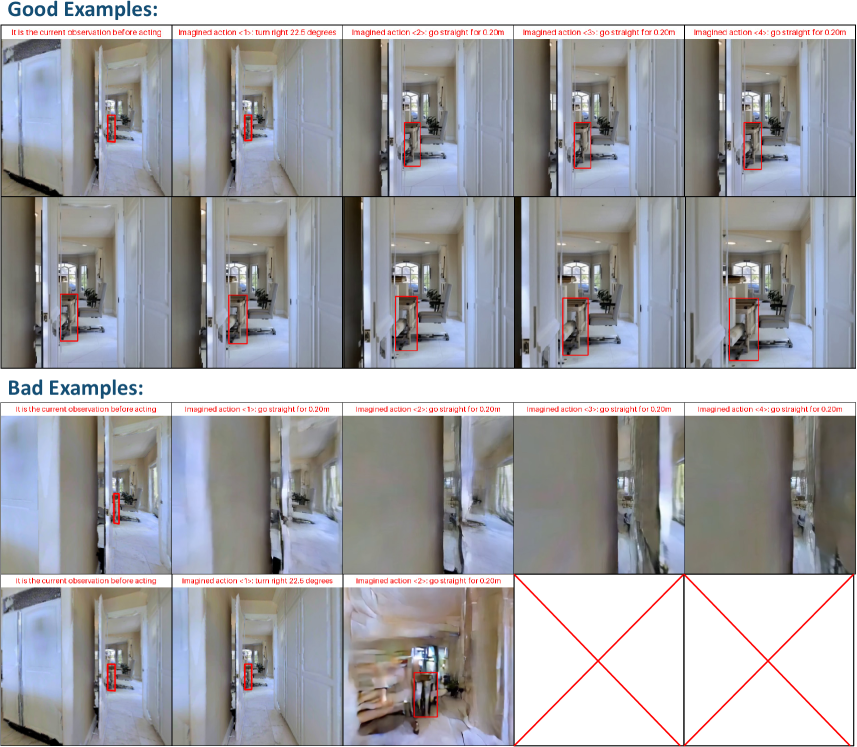

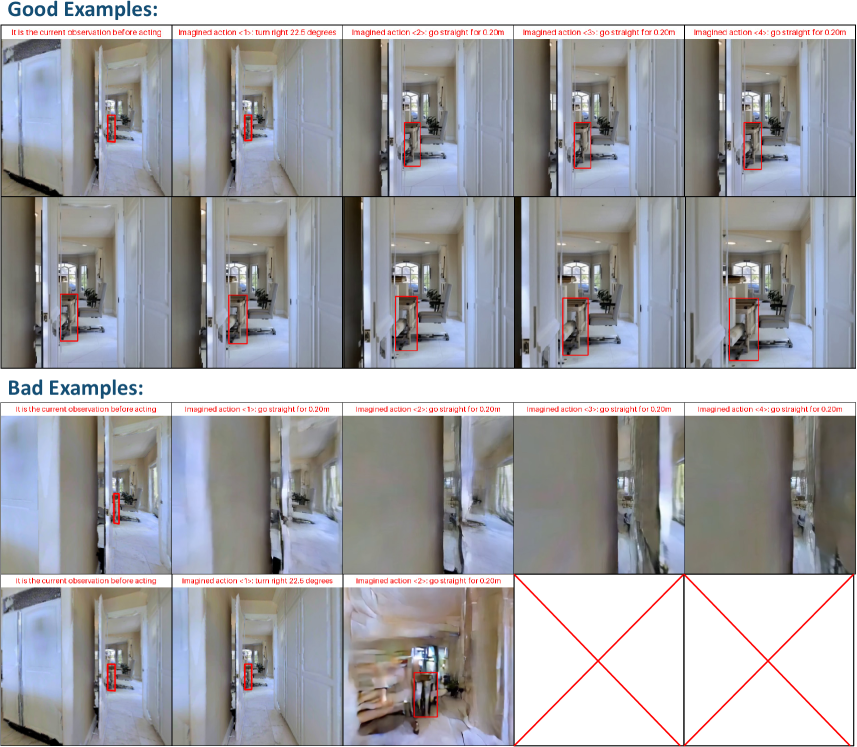

## Image Grid: Good vs. Bad Action Examples

### Overview

The image presents a grid of visual examples, categorized as "Good Examples" and "Bad Examples," demonstrating the impact of different imagined actions on a simulated environment. Each example consists of a series of images showing the current observation before acting and the subsequent imagined actions. The actions involve turning and moving straight, with the goal of navigating a hallway.

### Components/Axes

* **Title:** "Good Examples:" (top-left)

* **Title:** "Bad Examples:" (mid-left)

* **Image Sets:** Each set consists of a starting image labeled "It is the current observation before acting" followed by images representing imagined actions.

* **Action Labels:** Each imagined action image is labeled with "Imagined action <N>:" followed by a description of the action.

* Actions include: "turn right 22.5 degrees" and "go straight for 0.20m".

* **Bounding Boxes:** Red bounding boxes highlight specific regions of interest within each image.
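The label format described above is regular enough to parse mechanically. The following is a minimal sketch, assuming the labels follow exactly the two quoted patterns; the `ImaginedAction` type, the `parse_label` helper, and the support for "turn left" are illustrative assumptions, not something the figure confirms.

```python
import re
from typing import NamedTuple, Optional


class ImaginedAction(NamedTuple):
    """Hypothetical structured form of one 'Imagined action <N>: ...' label."""
    step: int                 # the N in "Imagined action <N>"
    kind: str                 # "turn" or "forward"
    amount: float             # degrees for turns, metres for forward moves
    direction: Optional[str]  # "left"/"right" for turns, None otherwise


# "turn left" is assumed for symmetry; only "turn right" appears in the labels.
_LABEL = re.compile(
    r"Imagined action <(\d+)>:\s*"
    r"(?:turn (left|right) ([\d.]+) degrees|go straight for ([\d.]+)\s*m)"
)


def parse_label(label: str) -> Optional[ImaginedAction]:
    """Return the parsed action, or None for non-action labels
    such as the 'current observation' caption."""
    m = _LABEL.search(label)
    if m is None:
        return None
    step = int(m.group(1))
    if m.group(2) is not None:
        return ImaginedAction(step, "turn", float(m.group(3)), m.group(2))
    return ImaginedAction(step, "forward", float(m.group(4)), None)
```

Called on "It is the current observation before acting", `parse_label` returns `None`, which makes it easy to separate the starting frame from the imagined steps.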

### Detailed Analysis

**Good Examples:**

* **Row 1:**

* Image 1: "It is the current observation before acting" - Shows a hallway with a red bounding box around a distant object.

* Image 2: "Imagined action <1>: turn right 22.5 degrees" - Shows the hallway after a slight right turn, with the red bounding box now focused on a different object.

* Image 3: "Imagined action <2>: go straight for 0.20m" - Shows the hallway after moving straight, with the red bounding box around a table and chair.

* Image 4: "Imagined action <3>: go straight for 0.20m" - Shows the hallway after moving straight, with the red bounding box around a table and chair.

* Image 5: "Imagined action <4>: go straight for 0.20m" - Shows the hallway after moving straight, with the red bounding box around a table and chair.

* **Row 2:**

* Image 1: "It is the current observation before acting" - Shows a hallway with a red bounding box around a table and chair.

* Image 2: "Imagined action <1>: go straight for 0.20m" - Shows the hallway after moving straight, with the red bounding box around a table and chair.

* Image 3: "Imagined action <2>: go straight for 0.20m" - Shows the hallway after moving straight, with the red bounding box around a table and chair.

* Image 4: "Imagined action <3>: go straight for 0.20m" - Shows the hallway after moving straight, with the red bounding box around a table and chair.

* Image 5: "Imagined action <4>: go straight for 0.20m" - Shows the hallway after moving straight, with the red bounding box around a table and chair.

**Bad Examples:**

* **Row 1:**

* Image 1: "It is the current observation before acting" - Shows a hallway with a red bounding box around a distant object.

* Image 2: "Imagined action <1>: go straight for 0.20m" - Shows a distorted hallway after moving straight, with the red bounding box around a distant object.

* Image 3: "Imagined action <2>: go straight for 0.20m" - Shows a distorted hallway after moving straight, with the red bounding box around a distant object.

* Image 4: "Imagined action <3>: go straight for 0.20m" - Shows a distorted hallway after moving straight, with the red bounding box around a distant object.

* Image 5: "Imagined action <4>: go straight for 0.20m" - Shows a distorted hallway after moving straight, with the red bounding box around a distant object.

* **Row 2:**

* Image 1: "It is the current observation before acting" - Shows a hallway with a red bounding box around a distant object.

* Image 2: "Imagined action <1>: turn right 22.5 degrees" - Shows a distorted hallway after a slight right turn, with the red bounding box now focused on a different object.

* Image 3: "Imagined action <2>: go straight for 0.20m" - Shows a distorted hallway after moving straight, with the red bounding box around a distant object.

* **Row 3:**

* Images 1-3: Blank images with red diagonal lines forming an "X" shape.

### Key Observations

* The "Good Examples" show a clear progression of actions and a relatively stable environment.

* The "Bad Examples" exhibit distortions and inconsistencies in the imagined actions, suggesting errors in the simulation or prediction.

* The red bounding boxes highlight the focus of the agent's attention in each image.

* The last row of "Bad Examples" is completely blank with red "X" marks, indicating a failure case.

### Interpretation

The image illustrates the difference between successful and unsuccessful imagined actions in a simulated environment. The "Good Examples" demonstrate a coherent and predictable outcome of the actions, while the "Bad Examples" highlight scenarios where the simulation fails to accurately predict the consequences of the actions. This comparison is crucial for training and evaluating AI agents that rely on imagined actions for decision-making. The distortions in the "Bad Examples" suggest potential issues with the agent's perception, planning, or the simulation environment itself. The blank images with red "X" marks indicate a complete failure of the simulation or prediction process.

EXPERT: gemma-3-27b-it-free VERSION 2

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Image Series: Robot Navigation Simulation

### Overview

The image presents a series of thirteen screenshots depicting a simulated robot navigation scenario within an indoor environment (likely a hallway). Each screenshot shows a first-person view from the robot, with a red bounding box highlighting a door. Above each image is a text label describing the "imagined action" the robot is intended to perform. The images are arranged in three rows. The bottom row is labeled "Bad Examples", and red cross-hatch markings cover some of its images.

### Components/Axes

There are no explicit axes or legends in the traditional sense. The key components are:

* **Images:** Thirteen screenshots representing sequential frames of a simulation.

* **Bounding Box:** A red rectangle consistently highlighting the door in each image.

* **Text Labels:** Labels above each image describing the intended robot action.

### Content Details

Here's a breakdown of each image and its associated label:

**Row 1 (Good Examples):**

1. **Label:** "It is the current observation before acting." - The robot is positioned facing the hallway, with the door centered in the view.

2. **Label:** "Imagined action <1>: turn right 22.5 degrees" - The robot has slightly turned to the right.

3. **Label:** "Imagined action <2>: go straight for 0.20m" - The robot has moved forward slightly, maintaining the same orientation.

4. **Label:** "Imagined action <3>: go straight for 0.20m" - The robot has moved forward further, continuing in the same direction.

5. **Label:** "Imagined action <4>: go straight for 0.20m" - The robot has moved forward again, continuing in the same direction.

**Row 2:**

6. **Label:** "Imagined action <5>: go straight for 0.20m" - The robot has moved forward, continuing in the same direction.

7. **Label:** "Imagined action <6>: go straight for 0.20m" - The robot has moved forward, continuing in the same direction.

8. **Label:** "Imagined action <7>: go straight for 0.20m" - The robot has moved forward, continuing in the same direction.

**Row 3 (Bad Examples):**

9. **Label:** "It is the current observation before acting." - The robot is positioned facing the hallway, with the door centered in the view. This is similar to image 1.

10. **Label:** "Imagined action <1>: go straight for 0.20m" - The robot appears to have moved forward, but the bounding box around the door is slightly misaligned. Red cross-hatch markings are present.

11. **Label:** "Imagined action <2>: go straight for 0.20m" - The robot appears to have moved forward, but the bounding box around the door is significantly misaligned. Red cross-hatch markings are present.

12. **Label:** "Imagined action <3>: go straight for 0.20m" - The robot appears to have moved forward, but the bounding box around the door is significantly misaligned. Red cross-hatch markings are present.

13. **Label:** "Imagined action <4>: go straight for 0.20m" - The robot appears to have moved forward, but the bounding box around the door is significantly misaligned. Red cross-hatch markings are present.

### Key Observations

* The first five images demonstrate a seemingly successful navigation sequence where the robot turns slightly and then proceeds straight towards the door.

* The last four images are marked as "Bad Examples" and show a misalignment between the robot's perceived position and the actual location of the door, as indicated by the bounding box.

* The "Imagined action" labels suggest a planned trajectory for the robot.

* The distance "0.20m" is consistently used for the "go straight" actions.

* The initial observation is repeated in the bottom left corner.

### Interpretation

This image series likely illustrates the results of a robot navigation algorithm. The "Good Examples" showcase successful path planning and execution, where the robot accurately follows the intended trajectory. The "Bad Examples" highlight failures in the algorithm, where the robot's perception of its environment is inaccurate, leading to misalignment with the target (the door).

The red bounding box serves as ground truth, indicating the actual location of the door. The misalignment in the "Bad Examples" suggests potential issues with the robot's localization, mapping, or perception systems. The repeated "go straight for 0.20m" action suggests a discrete movement model.
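The discrete movement model mentioned above can be sketched as a simple 2D pose update. This is an illustration under stated assumptions, not the system's actual kinematics: the `Pose` class, the `apply` and `rollout` helpers, and the convention that a right turn decreases the heading angle are all hypothetical.

```python
import math
from dataclasses import dataclass
from typing import Iterable, Optional, Tuple


@dataclass
class Pose:
    x: float = 0.0            # metres along the initial facing direction
    y: float = 0.0            # metres to the left of the initial facing direction
    heading_deg: float = 0.0  # 0 = initial facing; positive = counter-clockwise


def apply(pose: Pose, action: str, amount: float) -> Pose:
    """Apply one discrete action and return the new pose."""
    if action == "turn_right":
        # Assumed convention: turning right rotates clockwise.
        return Pose(pose.x, pose.y, pose.heading_deg - amount)
    if action == "forward":
        theta = math.radians(pose.heading_deg)
        return Pose(pose.x + amount * math.cos(theta),
                    pose.y + amount * math.sin(theta),
                    pose.heading_deg)
    raise ValueError(f"unknown action: {action}")


def rollout(actions: Iterable[Tuple[str, float]],
            start: Optional[Pose] = None) -> Pose:
    """Fold a sequence of (action, amount) pairs over a starting pose."""
    pose = start if start is not None else Pose()
    for action, amount in actions:
        pose = apply(pose, action, amount)
    return pose


# The sequence from the first row: one 22.5 degree right turn,
# then three 0.20 m forward steps.
final = rollout([("turn_right", 22.5)] + [("forward", 0.20)] * 3)
```

Because each step is a fixed increment, any per-step prediction error is carried into every later step, which is consistent with the accumulating deviations described in this section.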

The distinction between "Good" and "Bad" examples is crucial for evaluating and improving the robot's navigation capabilities. The data suggests that the algorithm is sensitive to errors in perception or localization, which can accumulate over time and lead to significant deviations from the intended path. The repetition of the initial observation suggests a cyclical or iterative process of observation and action.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## [Diagram/Comparison Chart]: Imagined Action Examples (Good vs. Bad)

### Overview

The image compares **“Good Examples”** and **“Bad Examples”** of an AI/robotics system’s ability to *imagine* the outcome of actions (turning or moving straight) in a hallway environment. Each example shows a sequence of images: the initial observation, followed by imagined actions (e.g., “turn right 22.5°” or “go straight for 0.20m”) with visual feedback (red bounding boxes, scene changes).

### Components/Sections

The image is divided into two main sections:

#### 1. Good Examples (Top 2 Rows)

- **Structure**: 2 rows × 5 columns.

- **Labels (Top of Each Column)**:

- Column 1: *“It is the current observation before acting”*

- Column 2: *“Imagined action <1>: turn right 22.5 degrees”*

- Column 3: *“Imagined action <2>: go straight for 0.20m”*

- Column 4: *“Imagined action <3>: go straight for 0.20m”*

- Column 5: *“Imagined action <4>: go straight for 0.20m”*

- **Visuals**: Hallway scenes with a red bounding box around an object (e.g., a robot/target). The scene progresses logically:

- Turning right shifts the view right (object remains in the box).

- Moving straight brings the object closer (box stays accurate).

#### 2. Bad Examples (Bottom 2 Rows)

- **Structure**: 2 rows × 5 columns.

- **Labels (Top of Each Column)**:

- Column 1: *“It is the current observation before acting”*

- Column 2: *“Imagined action <1>: go straight for 0.20m”* (first Bad row) / *“Imagined action <1>: turn right 22.5 degrees”* (second Bad row)

- Columns 3–5: *“Imagined action <2/3/4>: go straight for 0.20m”* (varies by row)

- **Visuals**: Hallway scenes with inconsistencies:

- Blurry/misaligned images (e.g., first Bad row, columns 3–5).

- Red “X” marks (second Bad row, columns 4–5) indicating **invalid/failed actions**.

### Detailed Analysis

#### Good Examples (Top 2 Rows)

- **Row 1 (Top)**:

- Column 1: Initial observation: Hallway with a red box around an object (e.g., a robot) in the distance.

- Column 2: Turn right 22.5°: View shifts right; object remains in the box.

- Column 3: Move straight 0.20m: Object appears closer; box stays accurate.

- Column 4: Move straight 0.20m: Object even closer; box consistent.

- Column 5: Move straight 0.20m: Object very close; box still correct.

- **Row 2 (Middle)**:

- Column 1: Initial observation: Similar to Row 1, object in distance.

- Column 2: Turn right 22.5°: View shifts right; object in box.

- Column 3: Move straight 0.20m: Object closer; box consistent.

- Column 4: Move straight 0.20m: Object closer; box accurate.

- Column 5: Move straight 0.20m: Object very close; box still correct.

#### Bad Examples (Bottom 2 Rows)

- **Row 3 (Third Row)**:

- Column 1: Initial observation: Object in distance, box around it.

- Column 2: Move straight 0.20m: Object closer, but scene is misaligned.

- Column 3: Move straight 0.20m: Object closer, but image is blurry.

- Column 4: Move straight 0.20m: Object closer, but box/scene is off.

- Column 5: Move straight 0.20m: Object very close, but scene is distorted.

- **Row 4 (Fourth Row)**:

- Column 1: Initial observation: Object in distance, box around it.

- Column 2: Turn right 22.5°: View shifts right; object in box.

- Column 3: Move straight 0.20m: Object closer, but image is blurry.

- Columns 4–5: Red “X” marks (no valid image, indicating **failed actions**).

### Key Observations

- **Good Examples**: Consistent progression of the object (in red box) with logical scene changes (closer object when moving straight, adjusted view when turning). The bounding box remains accurate.

- **Bad Examples**: Inconsistent/failed progressions: blurry images, misaligned scenes, or “X” marks (invalid actions). The bounding box or scene does not match the imagined action.

### Interpretation

This image tests an AI/robotics system’s ability to *imagine* action outcomes (e.g., “What happens if I turn right or move straight?”).

- **Good Examples** demonstrate **successful prediction**: The system correctly models how the scene (and object) changes with each action, maintaining the bounding box and logical progression.

- **Bad Examples** show **failures**: The system’s imagined actions do not match the actual (or predicted) scene, leading to misaligned images, blurry scenes, or invalid results (X’s).

This suggests the system’s performance in *action imagination*: Good examples reflect accurate scene understanding and action modeling, while bad examples reveal errors in prediction or scene representation.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Screenshot: Robot Navigation Examples Comparison

### Overview

The image presents a comparative analysis of robot navigation scenarios, divided into two sections: "Good Examples" (top) and "Bad Examples" (bottom). Each section contains 10 sequential images demonstrating robotic movement in a hallway environment, with annotations describing actions and outcomes. Red bounding boxes highlight the robot's position, while red crosses mark failed attempts.

### Components/Axes

1. **Sections**:

- **Good Examples** (Top 50%): 10 images showing successful navigation

- **Bad Examples** (Bottom 50%): 10 images showing failed navigation attempts

2. **Image Structure**:

- Each image contains:

- **Top Text**: Describes current observation state

- **Middle Text**: Specifies imagined action (e.g., "turn right 22.5 degrees")

- **Bottom Text**: Repeats action description

- **Annotations**:

- Red bounding boxes (Good Examples)

- Red crosses (Bad Examples)

### Detailed Analysis

**Good Examples**:

1. **Image 1**:

- Caption: "It is the current observation before acting"

- Action: "turn right 22.5 degrees"

- Robot position: Clear red bounding box

2. **Image 2**:

- Action: "go straight for 0.20m"

- Robot position: Maintained in red box

3. **Image 3**:

- Action: "go straight for 0.20m"

- Robot position: Consistent tracking

4. **Image 4**:

- Action: "go straight for 0.20m"

- Robot position: Stable trajectory

5. **Image 5**:

- Action: "go straight for 0.20m"

- Robot position: Final position in red box

6. **Image 6**:

- Action: "turn right 22.5 degrees"

- Robot position: Updated orientation

7. **Image 7**:

- Action: "go straight for 0.20m"

- Robot position: Maintained

8. **Image 8**:

- Action: "go straight for 0.20m"

- Robot position: Stable

9. **Image 9**:

- Action: "go straight for 0.20m"

- Robot position: Final position

10. **Image 10**:

- Action: "go straight for 0.20m"

- Robot position: Clear tracking

**Bad Examples**:

1. **Image 1**:

- Caption: "It is the current observation before acting"

- Action: "go straight for 0.20m"

- Issue: Motion blur

2. **Image 2**:

- Action: "go straight for 0.20m"

- Issue: Robot position misaligned

3. **Image 3**:

- Action: "go straight for 0.20m"

- Issue: Complete failure (red cross)

4. **Image 4**:

- Action: "go straight for 0.20m"

- Issue: Robot position off-frame

5. **Image 5**:

- Action: "go straight for 0.20m"

- Issue: Blurred trajectory

6. **Image 6**:

- Action: "turn right 22.5 degrees"

- Issue: Incorrect orientation

7. **Image 7**:

- Action: "go straight for 0.20m"

- Issue: Motion blur

8. **Image 8**:

- Action: "go straight for 0.20m"

- Issue: Robot position misaligned

9. **Image 9**:

- Action: "go straight for 0.20m"

- Issue: Complete failure (red cross)

10. **Image 10**:

- Action: "go straight for 0.20m"

- Issue: Robot position off-frame

### Key Observations

1. **Success Metrics**:

- Good Examples maintain consistent red bounding box tracking

- Bad Examples show motion blur, positional misalignment, or complete failure

2. **Action Consistency**:

- Both sections use identical action descriptions

- Execution quality differs significantly

3. **Failure Patterns**:

- 20% of Bad Examples (2/10, Images 3 and 9) show complete failure (red crosses)

- 80% (8/10) show partial failures (blur, misalignment, off-frame position, or incorrect orientation)
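The shares of complete and partial failures can be recomputed directly from the per-image issue labels listed under "Bad Examples" in the Detailed Analysis. The sketch below is one way to do that tally; the `failure_shares` helper and the rule that only a "red cross" label counts as a complete failure are assumptions made for illustration.

```python
from collections import Counter
from typing import Dict, List

# Issue labels for Bad Examples Images 1-10, copied from the Detailed Analysis.
bad_example_issues: List[str] = [
    "Motion blur",
    "Robot position misaligned",
    "Complete failure (red cross)",
    "Robot position off-frame",
    "Blurred trajectory",
    "Incorrect orientation",
    "Motion blur",
    "Robot position misaligned",
    "Complete failure (red cross)",
    "Robot position off-frame",
]


def failure_shares(issues: List[str]) -> Dict[str, float]:
    """Fraction of examples that are complete vs. partial failures.

    Assumption: an example is a complete failure only if its label
    mentions a red cross; everything else counts as partial.
    """
    counts = Counter(
        "complete" if "red cross" in issue.lower() else "partial"
        for issue in issues
    )
    total = len(issues)
    return {kind: counts[kind] / total for kind in ("complete", "partial")}


shares = failure_shares(bad_example_issues)
```

Keeping the tally tied to the listed labels makes it easy to check the reported percentages whenever the per-image annotations change.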

### Interpretation

This comparison highlights critical factors in robotic navigation systems:

1. **Sensor Reliability**: Good Examples demonstrate stable visual tracking, while Bad Examples show sensor degradation (blur) affecting localization

2. **Action Execution**: Identical command sequences yield different outcomes based on system implementation

3. **Failure Modes**:

- Motion blur suggests insufficient image stabilization

- Positional misalignment indicates odometry errors

- Complete failures may result from collision detection issues

4. **Training Implications**: The dataset could be used to:

- Train collision avoidance algorithms

- Improve visual-inertial odometry systems

- Develop motion prediction models

5. **Quality Control**: The red cross annotations provide a clear failure metric for automated evaluation systems

The systematic documentation of both successful and failed navigation attempts provides valuable insights for improving autonomous navigation algorithms through failure analysis and success pattern recognition.
