## Diagram: Collaborative LLM-Human System Architecture

### Overview

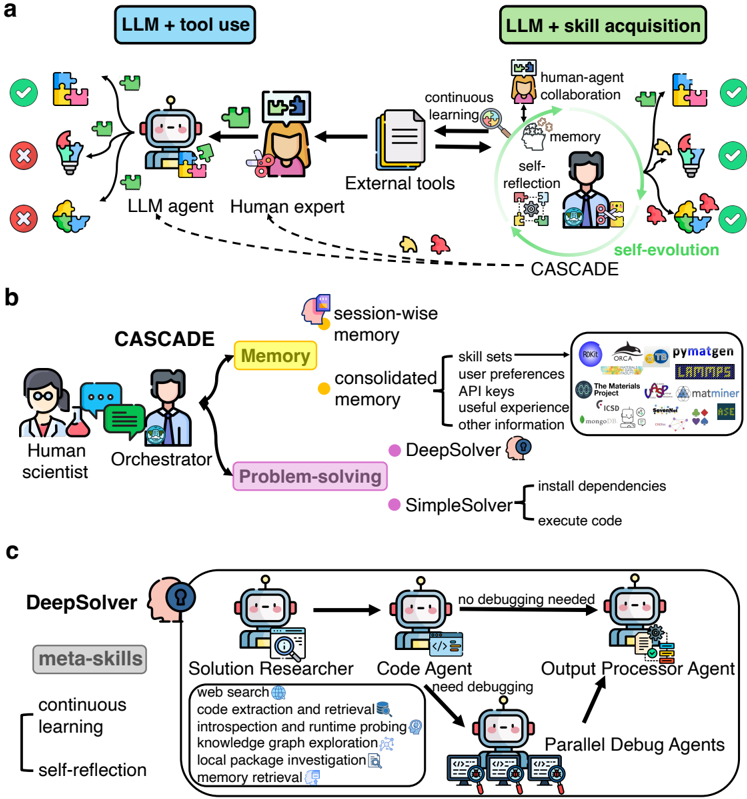

The diagram illustrates a multi-stage collaborative system integrating Large Language Models (LLMs) with human expertise and external tools. It is divided into three sections:

1. **LLM + Tool Use** (a): Focuses on initial problem-solving with human oversight.

2. **CASCADE** (b): A memory-driven framework for skill acquisition and problem-solving.

3. **DeepSolver** (c): A specialized system for code-related tasks with meta-skills.

### Components/Axes

#### Section a: LLM + Tool Use

- **Key Elements**:

  - **LLM Agent**: Depicted as a robot with puzzle pieces, connected to a human expert via dashed lines.

  - **Human Expert**: Illustrated as a figure interacting with the LLM agent.

  - **External Tools**: Represented by icons (e.g., lightbulb, magnifying glass).

- **Flow**:

  - Arrows indicate interactions between the LLM agent, the human expert, and external tools.

  - Checkmarks (✓) and crosses (✗) denote successful and failed outcomes, respectively.

- **Labels**:

  - "LLM + tool use" (top-left), "LLM + skill acquisition" (top-right).

  - "External tools," "continuous learning," "memory," "self-reflection," "self-evolution."

#### Section b: CASCADE

- **Key Elements**:

  - **Human Scientist**: Collaborates with an **Orchestrator** (another human figure).

  - **Memory**: Central node with subcategories:

    - Session-wise memory

    - Consolidated memory

    - Skill sets, API keys, user preferences, useful experience, other information.

  - **Problem-Solving**:

    - **DeepSolver**: Uses deep learning and self-reflection.

    - **SimpleSolver**: Executes code directly.

- **Legend**:

  - Yellow (memory), blue (tools), pink (problem-solving), purple (DeepSolver), green (SimpleSolver).
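The diagram separates session-wise memory from consolidated memory but does not show how entries move between them. The following is a minimal Python sketch, assuming that session memory is cleared at the end of each session and that only selected entries (for example, skills or user preferences) are promoted to consolidated memory; the `Memory` class and its methods are hypothetical illustrations, not CASCADE's actual interface.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, Iterable, Optional


@dataclass
class Memory:
    """Hypothetical two-tier memory: a per-session store plus a long-term store."""

    session: Dict[str, Any] = field(default_factory=dict)       # cleared after each session
    consolidated: Dict[str, Any] = field(default_factory=dict)  # persists across sessions

    def remember(self, key: str, value: Any) -> None:
        # New information is first written to session-wise memory.
        self.session[key] = value

    def recall(self, key: str) -> Optional[Any]:
        # Prefer the current session's value, then fall back to consolidated memory.
        if key in self.session:
            return self.session[key]
        return self.consolidated.get(key)

    def end_session(self, keep: Iterable[str]) -> None:
        # Promote the selected entries to consolidated memory, then discard the rest.
        for key in keep:
            if key in self.session:
                self.consolidated[key] = self.session[key]
        self.session.clear()


mem = Memory()
mem.remember("skill:load_csv", "def load_csv(path): ...")
mem.remember("scratch:row_count", 1204)
mem.end_session(keep=["skill:load_csv"])
print(mem.recall("skill:load_csv"))     # def load_csv(path): ...
print(mem.recall("scratch:row_count"))  # None
```

Keeping the two tiers separate means a skill learned in one session can be recalled in the next, while scratch values do not accumulate.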

#### Section c: DeepSolver

- **Key Elements**:

  - **Meta-Skills**: Continuous learning, self-reflection.

  - **Agents**:

    - **Solution Researcher**: Performs web search, code extraction, runtime probing.

    - **Code Agent**: Handles code execution.

    - **Output Processor Agent**: Processes results.

    - **Parallel Debug Agents**: Debug code without human intervention.

- **Flow**:

  - Arrows show progression from problem-solving to debugging.

- **Legend**:

  - Robot icons with labels (e.g., "no debugging needed").
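The agents in panel (c) can be read as stages that pass a shared task state along. The Python sketch below assumes a simple sequential hand-off from the Solution Researcher to the Code Agent to the Output Processor Agent; the `TaskState` fields and stage functions are hypothetical placeholders. The researcher does not actually search the web, and the Code Agent runs a fixed snippet where a real system would run generated code in a sandbox.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class TaskState:
    task: str
    notes: List[str] = field(default_factory=list)               # research findings
    code: str = ""                                                 # code written for the task
    raw_output: Dict[str, object] = field(default_factory=dict)   # variables left by execution
    result: str = ""                                               # final, processed answer


def solution_researcher(state: TaskState) -> TaskState:
    # Placeholder for web search, code extraction and runtime probing.
    state.notes.append(f"looked up approaches for: {state.task}")
    return state


def code_agent(state: TaskState) -> TaskState:
    # Placeholder: a fixed snippet stands in for generated code,
    # and exec() stands in for a sandboxed runtime.
    state.code = "answer = sum(range(10))"
    namespace: Dict[str, object] = {}
    exec(state.code, namespace)
    state.raw_output = {k: v for k, v in namespace.items() if not k.startswith("__")}
    return state


def output_processor_agent(state: TaskState) -> TaskState:
    # Turn raw execution output into a readable result.
    state.result = f"answer = {state.raw_output.get('answer')}"
    return state


Stage = Callable[[TaskState], TaskState]


def run_pipeline(task: str, stages: List[Stage]) -> TaskState:
    # Each stage reads and updates the same state, in order.
    state = TaskState(task)
    for stage in stages:
        state = stage(state)
    return state


state = run_pipeline(
    "sum the integers 0 to 9",
    [solution_researcher, code_agent, output_processor_agent],
)
print(state.result)  # answer = 45
```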

### Detailed Analysis

#### Section a: LLM + Tool Use

- **Flow**:

  - LLM agent and human expert collaborate using external tools.

  - Successful outcomes (✓) are linked to effective tool use and human oversight.

  - Failed outcomes (✗) occur when tools or LLM capabilities are insufficient.
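The success and failure markers in panel (a) can be modeled by recording the outcome of each tool call. The following is a minimal Python sketch; the `ToolUsingAgent` class, its tool registry, and the rule that any failed call is escalated to the human expert are assumptions for illustration, not the system's documented behavior.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class ToolOutcome:
    tool: str
    succeeded: bool  # True corresponds to a checkmark, False to a cross
    detail: str


@dataclass
class ToolUsingAgent:
    # Hypothetical registry mapping tool names to callables.
    tools: Dict[str, Callable[[str], str]]
    history: List[ToolOutcome] = field(default_factory=list)

    def call(self, tool_name: str, query: str) -> ToolOutcome:
        tool = self.tools.get(tool_name)
        if tool is None:
            # The agent lacks the required tool: a failure case.
            outcome = ToolOutcome(tool_name, False, "tool not available")
        else:
            try:
                outcome = ToolOutcome(tool_name, True, tool(query))
            except Exception as exc:  # the tool itself raised an error
                outcome = ToolOutcome(tool_name, False, f"error: {exc}")
        self.history.append(outcome)
        return outcome

    def needs_human_review(self) -> bool:
        # Assumed policy: escalate to the human expert if any call failed.
        return any(not o.succeeded for o in self.history)


agent = ToolUsingAgent(tools={"search": lambda q: f"results for {q!r}"})
print(agent.call("search", "enzyme kinetics").succeeded)    # True
print(agent.call("simulate", "enzyme kinetics").succeeded)  # False
print(agent.needs_human_review())                           # True
```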

#### Section b: CASCADE

- **Memory Hierarchy**:

  - Session-wise memory is transient, while consolidated memory retains long-term data.

  - Skill sets and API keys are stored in memory for reuse.

- **Problem-Solving**:

  - DeepSolver uses self-reflection and deep learning for complex tasks.

  - SimpleSolver executes code directly for simpler tasks.
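The diagram does not specify how the Orchestrator chooses between SimpleSolver and DeepSolver. One plausible policy, sketched below in Python purely as an assumption, is to attempt the cheaper SimpleSolver first and escalate to DeepSolver only when it fails; the `orchestrate` function and the toy solvers are hypothetical.

```python
from typing import Callable, Optional, Tuple

# A solver maps a task description to a result, or returns None if it cannot solve it.
Solver = Callable[[str], Optional[str]]


def orchestrate(task: str, simple_solver: Solver, deep_solver: Solver) -> Tuple[str, Optional[str]]:
    """Hypothetical routing: try the direct solver first, escalate on failure."""
    result = simple_solver(task)
    if result is not None:
        return "SimpleSolver", result
    # The direct attempt failed, so hand the task to the heavier multi-agent solver.
    return "DeepSolver", deep_solver(task)


def toy_simple_solver(task: str) -> Optional[str]:
    # Only handles tasks it recognizes; everything else is reported as unsolved.
    known = {"add 40 and 2": "42"}
    return known.get(task)


def toy_deep_solver(task: str) -> Optional[str]:
    return f"researched and debugged solution for {task!r}"


print(orchestrate("add 40 and 2", toy_simple_solver, toy_deep_solver))
# ('SimpleSolver', '42')
print(orchestrate("fit a custom model", toy_simple_solver, toy_deep_solver))
# ('DeepSolver', "researched and debugged solution for 'fit a custom model'")
```

A system could instead classify task complexity up front; the try-then-escalate version is shown only because it needs no separate classifier.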

#### Section c: DeepSolver

- **Meta-Skills**:

  - Continuous learning enables adaptation to new tasks.

  - Self-reflection improves debugging efficiency.

- **Agent Roles**:

  - Solution Researcher gathers data and identifies issues.

  - Parallel Debug Agents automate debugging, reducing human intervention.
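The Parallel Debug Agents can be approximated by running several independent repair attempts concurrently and keeping the first patch that executes without error. The Python sketch below is illustrative only: each debug agent is a hypothetical function that proposes a patched version of the failing code, `concurrent.futures.ThreadPoolExecutor` stands in for whatever parallelism the real system uses, and `exec()` stands in for a sandboxed runtime.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List, Optional

# A debug agent receives the failing code and its error message and proposes a patch.
DebugAgent = Callable[[str, str], str]


def run_code(code: str) -> Optional[str]:
    """Execute a snippet; return None on success, otherwise the error message.
    exec() is for illustration only; untrusted code should run in a sandbox."""
    try:
        exec(code, {})
        return None
    except Exception as exc:
        return f"{type(exc).__name__}: {exc}"


def parallel_debug(code: str, agents: List[DebugAgent]) -> Optional[str]:
    error = run_code(code)
    if error is None:
        return code  # corresponds to the "no debugging needed" case

    def attempt(agent: DebugAgent) -> Optional[str]:
        # Each agent patches independently; keep the patch only if it now runs.
        patch = agent(code, error)
        return patch if run_code(patch) is None else None

    with ThreadPoolExecutor(max_workers=max(1, len(agents))) as pool:
        # map() yields results in agent order, so the first successful agent
        # in the list wins, not necessarily the fastest one.
        for patch in pool.map(attempt, agents):
            if patch is not None:
                return patch
    return None  # every attempt failed; escalate (e.g. to a human)


buggy = "total = 10 / 0"
agents: List[DebugAgent] = [
    lambda code, err: code,                        # an agent that changes nothing
    lambda code, err: code.replace("/ 0", "/ 2"),  # an agent that fixes the divisor
]
print(parallel_debug(buggy, agents))             # total = 10 / 2
print(parallel_debug("total = 10 / 5", agents))  # total = 10 / 5
```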

### Key Observations

1. **Human-LLM Collaboration**: Human experts guide LLM agents, ensuring alignment with real-world needs.

2. **Memory as a Bridge**: Consolidated memory enables skill reuse across sessions.

3. **Automation in Debugging**: Parallel Debug Agents minimize manual debugging, improving efficiency.

4. **Modular Design**: Each section (a, b, c) represents a specialized subsystem with distinct workflows.

### Interpretation

The diagram emphasizes a **symbiotic relationship** between LLMs and humans, where memory and meta-skills enhance problem-solving.

- **CASCADE** (b) acts as a bridge between raw data (session-wise memory) and actionable solutions (DeepSolver/SimpleSolver).

- **DeepSolver** (c) specializes in code tasks, leveraging automation to reduce human workload.

- **Checkmarks/Crosses** (a) highlight the importance of human oversight in tool use.

**Notable Trends**:

- Memory consolidation (b) is critical for long-term skill retention.

- Parallel debugging (c) suggests a shift toward autonomous systems in technical workflows.

**Anomalies**:

- No explicit data points or numerical values are provided, limiting quantitative analysis.

- The absence of failure cases in sections b and c suggests an idealized workflow.

**Conclusion**:

This architecture demonstrates how LLMs can evolve from tool users to autonomous problem-solvers through human collaboration, memory integration, and meta-skill development.