TECHNICAL ASSET FINGERPRINT

4e1c370231aaa7f3f17c3215


EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

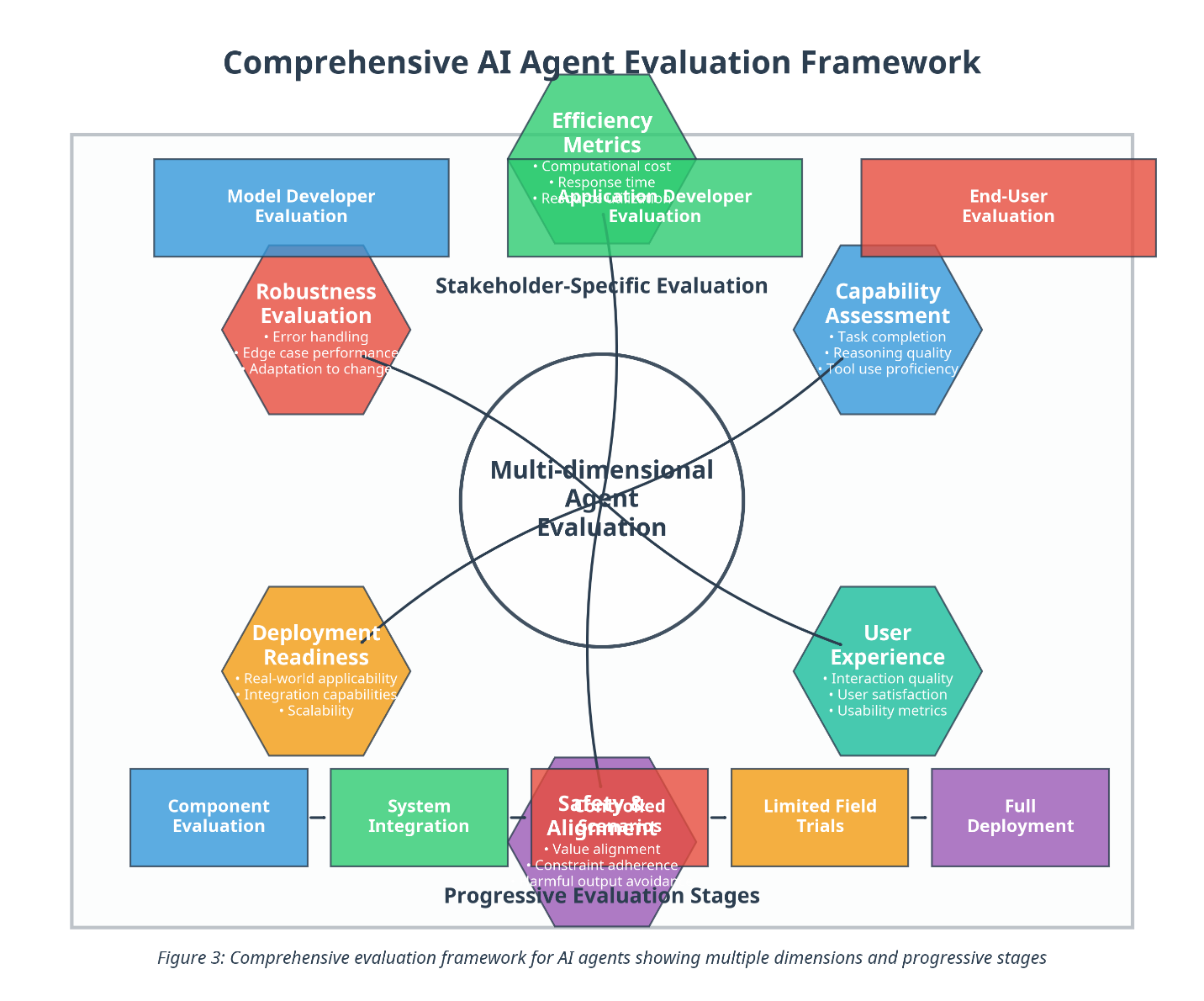

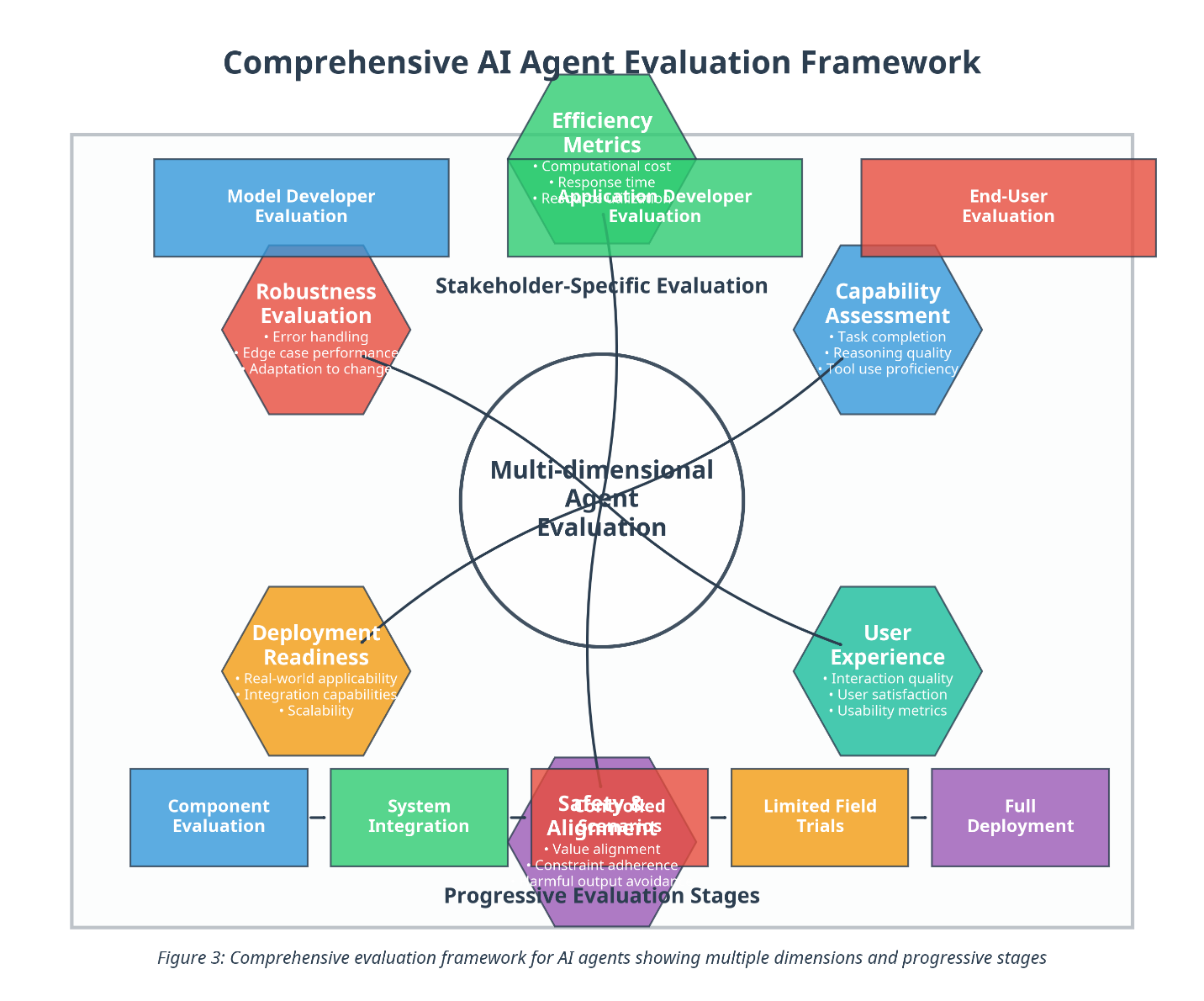

## Diagram: Comprehensive AI Agent Evaluation Framework

### Overview

The image is a diagram illustrating a comprehensive evaluation framework for AI agents. It depicts a multi-dimensional evaluation process with stakeholder-specific evaluations and progressive evaluation stages. The diagram uses shapes and lines to connect different evaluation aspects, showing their relationships and flow.

### Components/Axes

* **Title:** Comprehensive AI Agent Evaluation Framework

* **Central Node:** Multi-dimensional Agent Evaluation (represented by a circle)

* **Stakeholder-Specific Evaluation:** This is a general category encompassing several specific evaluations.

* **Progressive Evaluation Stages:** This is a general category encompassing several specific stages.

* **Evaluation Categories (arranged around the central node):**

    * Model Developer Evaluation (Blue Rectangle)

    * Efficiency Metrics (Green Pentagon)

        * Computational cost

        * Response time

        * Application Developer Evaluation

    * End-User Evaluation (Red Rectangle)

    * Robustness Evaluation (Red Hexagon)

        * Error handling

        * Edge case performance

        * Adaptation to change

    * Capability Assessment (Blue Hexagon)

        * Task completion

        * Reasoning quality

        * Tool use proficiency

    * User Experience (Teal Hexagon)

        * Interaction quality

        * User satisfaction

        * Usability metrics

    * Deployment Readiness (Orange Hexagon)

        * Real-world applicability

        * Integration capabilities

        * Scalability

    * Component Evaluation (Blue Rectangle)

    * System Integration (Green Rectangle)

    * Safety/Biased Alignments (Red Hexagon)

        * Value alignment

        * Constraint adherence

        * Harmful output avoidance

    * Limited Field Trials (Orange Rectangle)

    * Full Deployment (Purple Rectangle)

* **Connectors:** Lines connecting the central "Multi-dimensional Agent Evaluation" node to the surrounding evaluation categories.

* **Caption:** Figure 3: Comprehensive evaluation framework for AI agents showing multiple dimensions and progressive stages

### Detailed Analysis

* **Model Developer Evaluation:** Located at the top-left, represented by a blue rectangle.

* **Efficiency Metrics:** Located at the top-center, represented by a green pentagon. It includes "Computational cost", "Response time", and "Application Developer Evaluation".

* **End-User Evaluation:** Located at the top-right, represented by a red rectangle.

* **Robustness Evaluation:** Located to the left of the center, represented by a red hexagon. It includes "Error handling", "Edge case performance", and "Adaptation to change".

* **Capability Assessment:** Located to the right of the center, represented by a blue hexagon. It includes "Task completion", "Reasoning quality", and "Tool use proficiency".

* **User Experience:** Located at the bottom-right, represented by a teal hexagon. It includes "Interaction quality", "User satisfaction", and "Usability metrics".

* **Deployment Readiness:** Located at the bottom-left, represented by an orange hexagon. It includes "Real-world applicability", "Integration capabilities", and "Scalability".

* **Component Evaluation:** Located at the bottom-left, represented by a blue rectangle.

* **System Integration:** Located at the bottom-center-left, represented by a green rectangle.

* **Safety/Biased Alignments:** Located at the bottom-center, represented by a red hexagon. It includes "Value alignment", "Constraint adherence", and "Harmful output avoidance".

* **Limited Field Trials:** Located at the bottom-center-right, represented by an orange rectangle.

* **Full Deployment:** Located at the bottom-right, represented by a purple rectangle.

### Key Observations

* The diagram presents a structured approach to evaluating AI agents, considering multiple dimensions and stages.

* The central node emphasizes the multi-dimensional nature of the evaluation.

* The surrounding categories cover various aspects, from model development to real-world deployment.

* The use of different shapes and colors helps to visually distinguish the categories.

* The diagram suggests a flow from component evaluation and system integration to limited field trials and full deployment, representing progressive stages.

### Interpretation

The diagram illustrates a holistic framework for evaluating AI agents, emphasizing the importance of considering various factors throughout the development and deployment lifecycle. The framework highlights the need for stakeholder-specific evaluations, ensuring that the AI agent meets the needs and expectations of different users and developers. The progressive evaluation stages suggest a phased approach to deployment, allowing for continuous monitoring and improvement. The framework's multi-dimensional nature underscores the complexity of evaluating AI agents and the need for a comprehensive approach that considers both technical and ethical aspects.


EXPERT: gemini-3-pro VERSION 1

RUNTIME: nugit/gemini/gemini-3-pro-preview

INTEL_VERIFIED

## Diagram: Comprehensive AI Agent Evaluation Framework

### Overview

This image presents a conceptual diagram titled "Comprehensive AI Agent Evaluation Framework." It illustrates a holistic approach to evaluating AI agents, organized around a central core of "Multi-dimensional Agent Evaluation." The framework connects this central core to six specific evaluation dimensions (hexagons) and frames these within broader contexts of "Stakeholder-Specific Evaluation" (top) and "Progressive Evaluation Stages" (bottom).

### Components & Structure

#### 1. Central Core

* **Shape:** Circle

* **Text:** "Multi-dimensional Agent Evaluation"

* **Function:** Acts as the hub, connecting to six surrounding hexagonal nodes via dark lines.

#### 2. Surrounding Evaluation Dimensions (Hexagons)

Six hexagons surround the central core, each representing a specific metric or assessment area. They are connected to the center by lines. Starting from the top and moving clockwise:

* **Top (Green Hexagon):**

    * **Title:** Efficiency Metrics

    * **Bullet Points:**

        * Computational cost

        * Response time

        * Resource utilization

* **Top-Right (Blue Hexagon):**

    * **Title:** Capability Assessment

    * **Bullet Points:**

        * Task completion

        * Reasoning quality

        * Tool use proficiency

* **Bottom-Right (Teal/Green Hexagon):**

    * **Title:** User Experience

    * **Bullet Points:**

        * Interaction quality

        * User satisfaction

        * Usability metrics

* **Bottom (Purple/Red Hexagon):**

    * **Title:** Safety & Alignment

    * **Bullet Points:**

        * Value alignment

        * Constraint adherence

        * Harmful output avoidance

* **Bottom-Left (Yellow/Orange Hexagon):**

    * **Title:** Deployment Readiness

    * **Bullet Points:**

        * Real-world applicability

        * Integration capabilities

        * Scalability

* **Top-Left (Red/Pink Hexagon):**

    * **Title:** Robustness Evaluation

    * **Bullet Points:**

        * Error handling

        * Edge case performance

        * Adaptation to change
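The six hexagons and their bullet points can be written down as a plain mapping, which is often a convenient starting point for an evaluation checklist. The sketch below is illustrative only: the names `DIMENSIONS`, `criteria_for`, and `all_criteria` are hypothetical, and the labels are copied from the hexagons as listed above.

```python
from __future__ import annotations

# Hypothetical encoding of the six hexagons. The labels come from the
# diagram description; the data structure itself is an assumption.
DIMENSIONS: dict[str, list[str]] = {
    "Efficiency Metrics": ["Computational cost", "Response time", "Resource utilization"],
    "Capability Assessment": ["Task completion", "Reasoning quality", "Tool use proficiency"],
    "User Experience": ["Interaction quality", "User satisfaction", "Usability metrics"],
    "Safety & Alignment": ["Value alignment", "Constraint adherence", "Harmful output avoidance"],
    "Deployment Readiness": ["Real-world applicability", "Integration capabilities", "Scalability"],
    "Robustness Evaluation": ["Error handling", "Edge case performance", "Adaptation to change"],
}


def criteria_for(dimension: str) -> list[str]:
    """Return a copy of the criteria listed under one dimension."""
    return list(DIMENSIONS[dimension])


def all_criteria() -> list[tuple[str, str]]:
    """Flatten the mapping into (dimension, criterion) pairs."""
    return [(dim, crit) for dim, crits in DIMENSIONS.items() for crit in crits]
```

Flattening into pairs makes it easy to iterate over every criterion when building a checklist or a results table.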

#### 3. Stakeholder-Specific Evaluation (Top Layer)

Three rectangular boxes are positioned at the top, categorized under the label "Stakeholder-Specific Evaluation." Note: The middle box is partially covered by the top hexagon.

* **Left Box (Blue):** "Model Developer Evaluation"

* **Center Box (Green):** "Application Developer Evaluation" (Note: This box is visually layered behind the "Efficiency Metrics" hexagon).

* **Right Box (Red):** "End-User Evaluation"

#### 4. Progressive Evaluation Stages (Bottom Layer)

Five rectangular boxes are arranged horizontally at the bottom, connected by short dashes, representing a timeline or process flow. This section is labeled "Progressive Evaluation Stages."

* **Stage 1 (Blue):** "Component Evaluation"

* **Stage 2 (Green):** "System Integration"

* **Stage 3 (Red):** "Controlled Experiments" (Note: This text is partially obscured by the "Safety & Alignment" hexagon, but "Controlled" and "Experiments" are discernible).

* **Stage 4 (Yellow/Orange):** "Limited Field Trials"

* **Stage 5 (Purple):** "Full Deployment"

#### 5. Footer Caption

* **Text:** "Figure 3: Comprehensive evaluation framework for AI agents showing multiple dimensions and progressive stages"

### Detailed Analysis of Relationships

* **Centrality:** The "Multi-dimensional Agent Evaluation" is the unifying concept. It implies that a proper evaluation cannot rely on a single metric but must balance all six surrounding dimensions.

* **Stakeholder Mapping:** The top layer suggests different stakeholders care about different aspects, though specific mapping lines are not drawn.

    * *Model Developers* likely focus on Robustness and Capability.

    * *Application Developers* likely focus on Efficiency and Deployment Readiness.

    * *End-Users* likely focus on User Experience and Safety.

* **Process Flow:** The bottom layer indicates a linear progression. You start with evaluating components, move to integration, then experiments, trials, and finally full deployment. The central evaluation dimensions presumably apply at every stage of this progression.
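The linear progression described under Process Flow could be modeled as an ordered enumeration. This is a minimal sketch, not part of the diagram: `Stage` and `next_stage` are hypothetical names, and the third stage uses the "Controlled Experiments" label as read in this description.

```python
from enum import IntEnum
from typing import Optional


class Stage(IntEnum):
    """Progressive evaluation stages, ordered left to right in the diagram."""
    COMPONENT_EVALUATION = 1
    SYSTEM_INTEGRATION = 2
    CONTROLLED_EXPERIMENTS = 3  # label as read in this description
    LIMITED_FIELD_TRIALS = 4
    FULL_DEPLOYMENT = 5


def next_stage(stage: Stage) -> Optional[Stage]:
    """Return the stage that follows, or None after Full Deployment."""
    if stage is Stage.FULL_DEPLOYMENT:
        return None
    return Stage(stage + 1)
```

Using an integer-backed enum keeps the ordering explicit, so comparisons such as `Stage.SYSTEM_INTEGRATION < Stage.FULL_DEPLOYMENT` work directly.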

### Key Observations & Anomalies

* **Visual Overlap:** There is significant visual overlap in the center-top and center-bottom areas.

    * The "Efficiency Metrics" hexagon overlaps the "Application Developer Evaluation" box.

    * The "Safety & Alignment" hexagon overlaps the "Controlled Experiments" box.

    * This design choice visually integrates the specific metrics with the stakeholders and stages, but it slightly obscures the text.

* **Color Coding:** The colors of the bottom "Progressive Evaluation Stages" loosely correspond to the colors of the hexagons above them, suggesting a thematic link:

    * **Blue:** Component Evaluation <-> Model Developer / Capability Assessment

    * **Green:** System Integration <-> Efficiency Metrics

    * **Red:** Controlled Experiments <-> Safety & Alignment / Robustness

    * **Yellow:** Limited Field Trials <-> Deployment Readiness

    * **Purple:** Full Deployment (matches the purple hue of the Safety hexagon border/fill).

### Interpretation

This framework proposes that evaluating AI agents is a complex, multi-faceted problem that must be viewed through three distinct lenses simultaneously:

1. **The "What" (Dimensions):** The six hexagons define *what* is being measured. It moves beyond simple accuracy (Capability) to include how the system behaves under stress (Robustness), how much it costs (Efficiency), how safe it is (Safety), how easy it is to use (User Experience), and how ready it is for the real world (Deployment Readiness).

2. **The "Who" (Stakeholders):** The top layer acknowledges that evaluation is subjective based on the user's role. A developer cares about code robustness; an end-user cares about the experience.

3. **The "When" (Stages):** The bottom layer places evaluation in a temporal context. You don't evaluate everything at once; you move from unit testing (Component) to real-world usage (Full Deployment).

**Conclusion:** The diagram argues against narrow benchmarks. A high "Capability" score is insufficient if the agent fails "Safety" checks or has poor "Efficiency." Successful AI agent deployment requires passing criteria in all six hexagonal dimensions across all five progressive stages.
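The conclusion's "all six dimensions across all five stages" reading can be expressed as a simple gate. The sketch below is an assumption-heavy illustration: the score format (a nested dict of values in the range 0 to 1), the `0.7` threshold, and the function names `failing_checks` and `ready_for_deployment` are all hypothetical and do not come from the diagram.

```python
from __future__ import annotations

DIMENSIONS = (
    "Efficiency Metrics", "Capability Assessment", "User Experience",
    "Safety & Alignment", "Deployment Readiness", "Robustness Evaluation",
)
STAGES = (
    "Component Evaluation", "System Integration", "Controlled Experiments",
    "Limited Field Trials", "Full Deployment",
)


def failing_checks(
    scores: dict[str, dict[str, float]], threshold: float = 0.7
) -> list[tuple[str, str]]:
    """List every (stage, dimension) pair that is missing or below threshold.

    `scores[stage][dimension]` is assumed to be a float in [0, 1];
    a missing entry is treated as a failure rather than skipped.
    """
    failures = []
    for stage in STAGES:
        for dim in DIMENSIONS:
            score = scores.get(stage, {}).get(dim)
            if score is None or score < threshold:
                failures.append((stage, dim))
    return failures


def ready_for_deployment(
    scores: dict[str, dict[str, float]], threshold: float = 0.7
) -> bool:
    """True only if no stage/dimension pair fails."""
    return not failing_checks(scores, threshold)
```

Treating missing scores as failures mirrors the point that a high score in one dimension does not compensate for an unevaluated or failed one.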


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Comprehensive AI Agent Evaluation Framework

### Overview

The image presents a diagram illustrating a comprehensive framework for evaluating AI agents. The framework is visualized as a series of overlapping circles representing different evaluation dimensions and stages, centered around "Multi-dimensional Agent Evaluation." The diagram emphasizes a stakeholder-specific approach and progressive evaluation stages, from component evaluation to full deployment.

### Components/Axes

The diagram is structured around the following key components:

* **Central Concept:** Multi-dimensional Agent Evaluation (located in the center of the diagram, within a black circle).

* **Stakeholder-Specific Evaluations (Outer Circles):**

    * Model Developer Evaluation (Top-left, purple)

    * End-User Evaluation (Top-right, teal)

    * Robustness Evaluation (Left, orange)

    * Capability Assessment (Right, yellow)

    * Deployment Readiness (Bottom-left, dark blue)

    * User Experience (Bottom-right, light blue)

    * Safety & Alignment (Bottom-center, red)

* **Progressive Evaluation Stages (Bottom Row):**

    * Component Evaluation (Dark purple)

    * System Integration (Dark blue)

    * Limited Field Trials (Red)

    * Full Deployment (Teal)

* **Text within Circles:** Each evaluation circle contains bullet points listing specific evaluation criteria.

* **Title:** Comprehensive AI Agent Evaluation Framework (Top, black text)

* **Caption:** Figure 3: Comprehensive evaluation framework for AI agents showing multiple dimensions and progressive stages (Bottom, black text)

### Detailed Analysis

Let's break down the content within each evaluation circle:

* **Model Developer Evaluation (Purple):**

    * Efficiency Metrics

    * Computational cost

    * Response time

    * Reapplication Developer Evaluation

* **End-User Evaluation (Teal):**

    * (No visible text)

* **Robustness Evaluation (Orange):**

    * Error handling

    * Edge case performance

    * Adaptation to change

* **Capability Assessment (Yellow):**

    * Task completion

    * Reasoning quality

    * Tool use proficiency

* **Deployment Readiness (Dark Blue):**

    * Real-world applicability

    * Integration capabilities

    * Scalability

* **User Experience (Light Blue):**

    * Interaction quality

    * User satisfaction

    * Usability metrics

* **Safety & Alignment (Red):**

    * Value alignment

    * Constraint adherence

    * Harmful output avoidance

The Progressive Evaluation Stages are simply labeled as such, without further detail.

### Key Observations

* The diagram emphasizes the interconnectedness of different evaluation aspects. The overlapping circles suggest that a comprehensive evaluation requires considering multiple dimensions simultaneously.

* The "Multi-dimensional Agent Evaluation" at the center highlights the holistic nature of the framework.

* The progressive stages indicate a phased approach to evaluation, starting with individual components and culminating in real-world deployment.

* The color coding helps to visually distinguish between different evaluation areas.

* The text within the circles provides specific criteria for each evaluation dimension.

### Interpretation

This diagram presents a conceptual framework for evaluating AI agents, moving beyond simple performance metrics to encompass a broader range of considerations. The framework acknowledges that AI evaluation is not a one-size-fits-all process and must be tailored to different stakeholders (developers, end-users, etc.). The progressive stages suggest a risk-mitigation strategy, where AI agents are thoroughly tested and validated before being deployed in real-world scenarios.

The overlapping circles indicate that these evaluation dimensions are not mutually exclusive. For example, robustness (orange) and safety & alignment (red) are likely to be closely related, as an agent that is not robust may also be prone to generating harmful outputs. Similarly, user experience (light blue) and end-user evaluation (teal) are interconnected, as usability metrics are a key component of end-user satisfaction.

The inclusion of "Reapplication Developer Evaluation" within the "Efficiency Metrics" circle is somewhat ambiguous and could benefit from further clarification. It suggests that the framework considers the effort required to adapt or re-apply the AI agent to new tasks or contexts.

Overall, the diagram provides a valuable high-level overview of a comprehensive AI agent evaluation process. It serves as a useful starting point for developing more detailed evaluation plans and checklists.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Comprehensive AI Agent Evaluation Framework

### Overview

The image displays a conceptual diagram titled "Comprehensive AI Agent Evaluation Framework." It illustrates a multi-faceted approach to evaluating AI agents, organized around a central core with radiating dimensions and two horizontal layers representing stakeholder perspectives and deployment stages. The diagram uses color-coded shapes (hexagons and rectangles) connected by lines to show relationships.

### Components/Axes

The diagram is structured into three main areas:

1. **Central Core:** A large circle labeled **"Multi-dimensional Agent Evaluation"**. This is the focal point from which all evaluation dimensions radiate.

2. **Radiating Evaluation Dimensions (Hexagons):** Six hexagons surround the core, each representing a key evaluation dimension. They are connected to the core by lines.

    * **Top-Left (Red):** **Robustness Evaluation**

        * Sub-points: Error handling, Edge case performance, Adaptation to change.

    * **Top-Center (Green):** **Efficiency Metrics**

        * Sub-points: Computational cost, Response time, Resource utilization.

        * *Note: The text "Application Developer Evaluation" overlaps this hexagon.*

    * **Top-Right (Blue):** **Capability Assessment**

        * Sub-points: Task completion, Reasoning quality, Tool use proficiency.

    * **Bottom-Right (Teal):** **User Experience**

        * Sub-points: Interaction quality, User satisfaction, Usability metrics.

    * **Bottom-Center (Red):** **Safety & Alignment**

        * Sub-points: Value alignment, Constraint adherence, Harmful output avoidance.

        * *Note: The text "Safety & Alignment" overlaps the sub-points.*

    * **Bottom-Left (Orange):** **Deployment Readiness**

        * Sub-points: Real-world applicability, Integration capabilities, Scalability.

3. **Horizontal Layers (Rectangles):** Two rows of colored rectangles frame the central diagram.

    * **Top Row - Stakeholder-Specific Evaluation:**

        * **Left (Blue):** Model Developer Evaluation

        * **Center (Green):** Application Developer Evaluation

        * **Right (Red):** End-User Evaluation

    * **Bottom Row - Progressive Evaluation Stages:**

        * **Left (Blue):** Component Evaluation

        * **Center-Left (Green):** System Integration

        * **Center (Red):** Safety & Alignment

        * **Center-Right (Orange):** Limited Field Trials

        * **Right (Purple):** Full Deployment

**Figure Caption (Bottom):** "Figure 3: Comprehensive evaluation framework for AI agents showing multiple dimensions and progressive stages"

### Detailed Analysis

The diagram presents a structured taxonomy for AI agent evaluation. The central "Multi-dimensional Agent Evaluation" is the unifying concept. The six radiating hexagons define the *what*—the specific areas to evaluate (Robustness, Efficiency, Capability, User Experience, Safety & Alignment, Deployment Readiness).

The two horizontal rows define the *who* and *when*:

* The **Stakeholder-Specific Evaluation** row indicates that different parties (Model Developers, Application Developers, End-Users) have distinct evaluation priorities. The color-coding suggests a loose association: Blue (Model Developer) connects to Capability Assessment and Component Evaluation; Green (Application Developer) connects to Efficiency Metrics and System Integration; Red (End-User) connects to User Experience and Safety & Alignment.

* The **Progressive Evaluation Stages** row outlines a sequential process from initial component testing to full deployment, implying that evaluation is not a single event but a continuous process integrated into the development lifecycle.
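The loose color-based association between stakeholders and dimensions described above could be used to produce per-stakeholder views of one shared set of results. The following is a rough sketch under that assumption: `STAKEHOLDER_FOCUS` and `report_for` are hypothetical names, and the mapping reflects only the association suggested in this description, not lines drawn in the diagram.

```python
from __future__ import annotations

# Hypothetical mapping derived from the color association described above.
STAKEHOLDER_FOCUS: dict[str, list[str]] = {
    "Model Developer": ["Capability Assessment"],
    "Application Developer": ["Efficiency Metrics"],
    "End-User": ["User Experience", "Safety & Alignment"],
}


def report_for(stakeholder: str, scores: dict[str, float]) -> dict[str, float]:
    """Filter a {dimension: score} dict down to one stakeholder's dimensions.

    Dimensions without a recorded score are omitted from the result.
    """
    wanted = STAKEHOLDER_FOCUS[stakeholder]
    return {dim: scores[dim] for dim in wanted if dim in scores}
```

A shared score table with filtered views keeps one source of results while letting each party read only the dimensions it prioritizes.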

### Key Observations

1. **Interconnectedness:** Lines connect the central core to all six evaluation dimension hexagons, emphasizing that a comprehensive assessment requires looking at all these areas together.

2. **Stakeholder Overlap:** The "Application Developer Evaluation" label physically overlaps the "Efficiency Metrics" hexagon, and "Safety & Alignment" overlaps its own sub-points. This visual crowding may indicate these are particularly critical or complex intersections within the framework.

3. **Color-Coding Logic:** Colors are used thematically. Blue is associated with foundational/technical aspects (Model Developer, Component Evaluation, Capability). Green is linked to application and system-level concerns (Application Developer, System Integration, Efficiency). Red is tied to critical, user-facing, or safety concerns (End-User, Safety & Alignment, Robustness). Orange and Purple are used for later-stage, practical deployment phases.

4. **Non-Linear Flow:** While the bottom row suggests a linear progression (Component -> System -> Safety -> Trials -> Deployment), the radiating hexagons imply that dimensions like Robustness, Efficiency, and User Experience must be evaluated continuously throughout these stages.

### Interpretation

This framework argues that evaluating an AI agent is a complex, multi-variable problem that cannot be captured by a single metric. It proposes a holistic model that must account for:

* **Multiple Perspectives:** What matters to a model trainer (raw capability) differs from what matters to an end-user (interaction quality) or a product manager (deployment readiness and cost).

* **Multiple Phases:** Evaluation is not a gate at the end but an integral activity from the earliest component design through to post-deployment monitoring.

* **Inherent Tensions:** The framework visually balances competing priorities. For example, the pursuit of high **Capability** (top-right) might conflict with **Efficiency** (top-center) or **Safety & Alignment** (bottom-center). The diagram doesn't resolve these tensions but provides a map to identify and manage them.

The inclusion of **Safety & Alignment** as a core dimension connected to both the central evaluation concept and the "End-User" stakeholder highlights its critical role in responsible AI deployment. Similarly, placing **Deployment Readiness** as a first-class dimension signals that technical performance alone is insufficient; practical integration and scalability are paramount for real-world impact.

In essence, this diagram serves as a checklist and a conceptual map for teams building and deploying AI agents, ensuring they consider the full spectrum of technical, human, and operational factors necessary for success.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: Comprehensive AI Agent Evaluation Framework

### Overview

The diagram illustrates a multi-dimensional framework for evaluating AI agents, emphasizing both stakeholder-specific criteria and progressive evaluation stages. It uses a central hub ("Multi-dimensional Agent Evaluation") connected to hexagonal and rectangular components representing evaluation dimensions and stages.

### Components/Axes

#### Central Hub

- **Multi-dimensional Agent Evaluation** (central circle)

    - Connected to six hexagonal components (Stakeholder-Specific Evaluation) and five rectangular components (Progressive Evaluation Stages).

#### Stakeholder-Specific Evaluation (Hexagons)

1. **Efficiency Metrics** (green)

    - Computational cost, response time, application deployment.

2. **Capability Assessment** (blue)

    - Task completion, reasoning quality, tool use proficiency.

3. **User Experience** (teal)

    - Interaction quality, user satisfaction, usability metrics.

4. **Deployment Readiness** (orange)

    - Real-world applicability, integration capabilities, scalability.

5. **Robustness Evaluation** (red)

    - Error handling, edge case performance, adaptation to change.

6. **Model Developer Evaluation** (blue)

    - Accuracy, bias detection, explainability.

#### Progressive Evaluation Stages (Rectangles)

1. **Component Evaluation** (blue)

    - Unit testing, code quality, documentation.

2. **System Integration** (green)

    - API compatibility, interoperability, security.

3. **Safety & Alignment** (purple)

    - Value alignment, constraint adherence, harmful output avoidance.

4. **Limited Field Trials** (orange)

    - Performance metrics, user feedback, error rates.

5. **Full Deployment** (purple)

    - Monitoring, maintenance, scalability.

### Detailed Analysis

- **Color Coding**:

    - Blue: Model Developer Evaluation, Component Evaluation.

    - Green: Efficiency Metrics, System Integration.

    - Red: Robustness Evaluation.

    - Orange: Deployment Readiness, Limited Field Trials.

    - Purple: Safety & Alignment, Full Deployment.

    - Teal: User Experience.

- **Flow and Relationships**:

    - The central hub connects all evaluation dimensions, suggesting interdependence.

    - Progressive stages flow linearly from Component Evaluation (left) to Full Deployment (right), indicating a phased approach.

### Key Observations

1. **Interconnected Dimensions**: Stakeholder-specific evaluations (e.g., User Experience, Robustness) are equally weighted, emphasizing holistic assessment.

2. **Staged Progression**: Evaluation begins with technical components (e.g., code quality) and advances to real-world deployment, ensuring iterative refinement.

3. **Balanced Focus**: Combines technical metrics (e.g., computational cost) with ethical considerations (e.g., value alignment).

### Interpretation

The framework prioritizes **comprehensiveness** by integrating technical, ethical, and user-centric criteria. The progressive stages ensure AI agents are rigorously tested at every development phase, from code quality to real-world performance. The hexagonal components highlight the need for **multi-stakeholder input**, balancing developer rigor (e.g., bias detection) with end-user satisfaction (e.g., usability metrics). This structure mitigates risks of biased or unsafe deployments by enforcing alignment checks and field trials before full-scale implementation.

*Note: No numerical data or trends are present; the diagram focuses on categorical relationships and evaluation priorities.*
