EXPERT: gemini-3-pro VERSION 1

RUNTIME: nugit/gemini/gemini-3-pro-preview


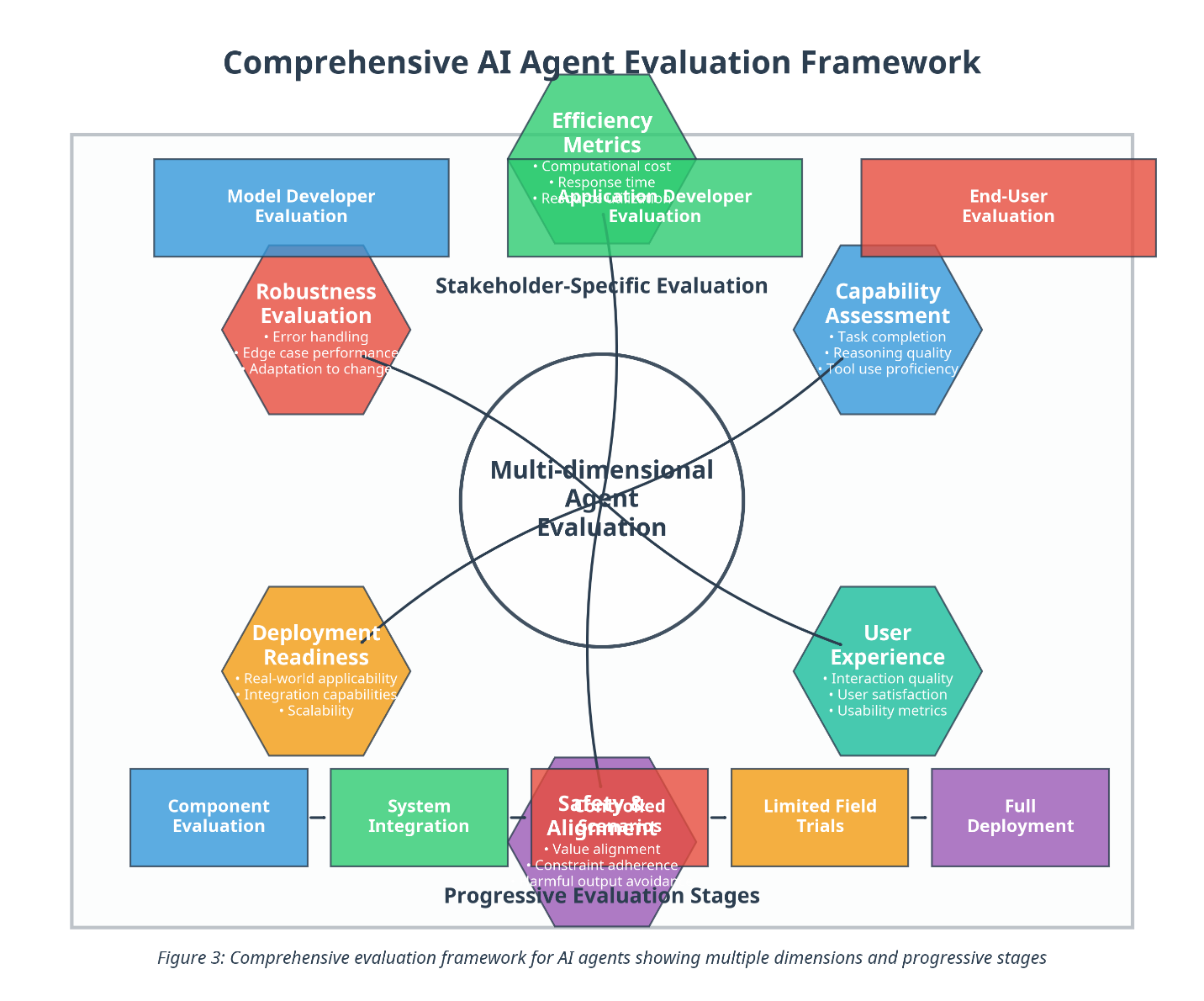

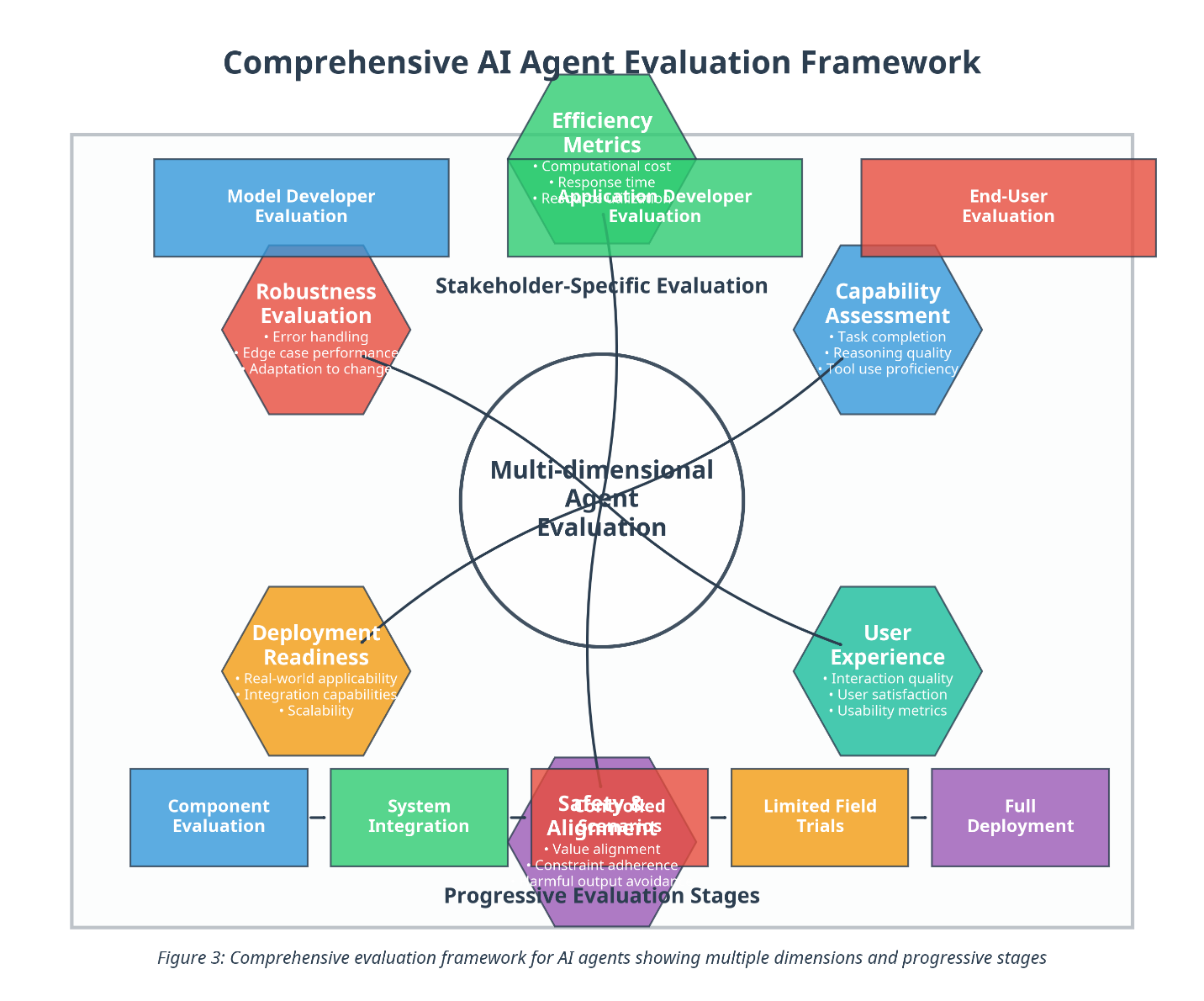

## Diagram: Comprehensive AI Agent Evaluation Framework

### Overview

This image presents a conceptual diagram titled "Comprehensive AI Agent Evaluation Framework." It illustrates a holistic approach to evaluating AI agents, organized around a central core of "Multi-dimensional Agent Evaluation." The framework connects this central core to six specific evaluation dimensions (hexagons) and frames these within broader contexts of "Stakeholder-Specific Evaluation" (top) and "Progressive Evaluation Stages" (bottom).

### Components & Structure

#### 1. Central Core

* **Shape:** Circle

* **Text:** "Multi-dimensional Agent Evaluation"

* **Function:** Acts as the hub, connecting to six surrounding hexagonal nodes via dark lines.

#### 2. Surrounding Evaluation Dimensions (Hexagons)

Six hexagons surround the central core, each representing a specific metric or assessment area. They are connected to the center by lines. Starting from the top and moving clockwise:

* **Top (Green Hexagon):**
    * **Title:** Efficiency Metrics
    * **Bullet Points:**
        * Computational cost
        * Response time
        * Resource utilization

* **Top-Right (Blue Hexagon):**
    * **Title:** Capability Assessment
    * **Bullet Points:**
        * Task completion
        * Reasoning quality
        * Tool use proficiency

* **Bottom-Right (Teal/Green Hexagon):**
    * **Title:** User Experience
    * **Bullet Points:**
        * Interaction quality
        * User satisfaction
        * Usability metrics

* **Bottom (Purple/Red Hexagon):**
    * **Title:** Safety & Alignment
    * **Bullet Points:**
        * Value alignment
        * Constraint adherence
        * Harmful output avoidance

* **Bottom-Left (Yellow/Orange Hexagon):**
    * **Title:** Deployment Readiness
    * **Bullet Points:**
        * Real-world applicability
        * Integration capabilities
        * Scalability

* **Top-Left (Red/Pink Hexagon):**
    * **Title:** Robustness Evaluation
    * **Bullet Points:**
        * Error handling
        * Edge case performance
        * Adaptation to change
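
The six dimensions and their sub-criteria can be captured as a small data structure, which makes it easy to turn the diagram into an evaluation checklist. The following is a minimal sketch: the dimension names and criteria are transcribed from the diagram, while the `Dimension` class and the `criteria_checklist` helper are hypothetical names introduced here for illustration only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Dimension:
    """One hexagon of the diagram: a title plus its listed criteria."""
    name: str
    criteria: tuple


# Transcribed from the diagram, clockwise from the top hexagon.
DIMENSIONS = (
    Dimension("Efficiency Metrics",
              ("Computational cost", "Response time", "Resource utilization")),
    Dimension("Capability Assessment",
              ("Task completion", "Reasoning quality", "Tool use proficiency")),
    Dimension("User Experience",
              ("Interaction quality", "User satisfaction", "Usability metrics")),
    Dimension("Safety & Alignment",
              ("Value alignment", "Constraint adherence", "Harmful output avoidance")),
    Dimension("Deployment Readiness",
              ("Real-world applicability", "Integration capabilities", "Scalability")),
    Dimension("Robustness Evaluation",
              ("Error handling", "Edge case performance", "Adaptation to change")),
)


def criteria_checklist(dimensions=DIMENSIONS):
    """Flatten the dimensions into (dimension name, criterion) pairs."""
    return [(d.name, c) for d in dimensions for c in d.criteria]


if __name__ == "__main__":
    for dimension_name, criterion in criteria_checklist():
        print(f"{dimension_name}: {criterion}")
```

A flat checklist like this is one possible starting point for assigning a measurement to each criterion; the diagram itself does not prescribe how any criterion is scored.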

#### 3. Stakeholder-Specific Evaluation (Top Layer)

Three rectangular boxes are positioned at the top, grouped under the label "Stakeholder-Specific Evaluation." Note: The middle box is partially covered by the top hexagon.

* **Left Box (Blue):** "Model Developer Evaluation"

* **Center Box (Green):** "Application Developer Evaluation" (Note: This box is visually layered behind the "Efficiency Metrics" hexagon).

* **Right Box (Red):** "End-User Evaluation"

#### 4. Progressive Evaluation Stages (Bottom Layer)

Five rectangular boxes are arranged horizontally at the bottom, connected by short dashes, representing a timeline or process flow. This section is labeled "Progressive Evaluation Stages."

* **Stage 1 (Blue):** "Component Evaluation"

* **Stage 2 (Green):** "System Integration"

* **Stage 3 (Red):** "Controlled Experiments" (Note: This text is partially obscured by the "Safety & Alignment" hexagon, but "Controlled" and "Experiments" are discernible).

* **Stage 4 (Yellow/Orange):** "Limited Field Trials"

* **Stage 5 (Purple):** "Full Deployment"

#### 5. Footer Caption

* **Text:** "Figure 3: Comprehensive evaluation framework for AI agents showing multiple dimensions and progressive stages"

### Detailed Analysis of Relationships

* **Centrality:** The "Multi-dimensional Agent Evaluation" core is the unifying concept. Its position implies that a proper evaluation cannot rely on a single metric but must balance all six surrounding dimensions.

* **Stakeholder Mapping:** The top layer suggests that different stakeholders care about different aspects. The diagram draws no explicit lines between stakeholders and dimensions, so the pairings below are inferences:
    * *Model Developers* likely focus on Robustness and Capability.
    * *Application Developers* likely focus on Efficiency and Deployment Readiness.
    * *End-Users* likely focus on User Experience and Safety.

* **Process Flow:** The bottom layer indicates a linear progression. You start with evaluating components, move to integration, then experiments, trials, and finally full deployment. The central evaluation dimensions presumably apply at every stage of this progression.
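
The linear progression described above can be sketched as an ordered enumeration with a simple advance rule. The stage names come from the diagram; the `Stage` enum, the `next_stage` function, and the rule "hold at the current stage until its evaluation passes" are assumptions made for illustration, since the diagram shows only the ordering.

```python
from enum import IntEnum
from typing import Optional


class Stage(IntEnum):
    """The five progressive evaluation stages, in diagram order."""
    COMPONENT_EVALUATION = 1
    SYSTEM_INTEGRATION = 2
    CONTROLLED_EXPERIMENTS = 3
    LIMITED_FIELD_TRIALS = 4
    FULL_DEPLOYMENT = 5


def next_stage(current: Stage, passed: bool) -> Optional[Stage]:
    """Return the stage to run next.

    Assumed rule: stay at the current stage until its evaluation passes,
    then advance by exactly one stage. Returns None once the final stage
    has passed, because there is nothing left to advance to.
    """
    if not passed:
        return current
    if current is Stage.FULL_DEPLOYMENT:
        return None
    return Stage(current + 1)


if __name__ == "__main__":
    stage = Stage.COMPONENT_EVALUATION
    while stage is not None:
        print(stage.name)
        stage = next_stage(stage, passed=True)
```

Using an ordered enum keeps the "no skipping stages" property explicit: the only way to reach a later stage is through the one before it.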

### Key Observations & Anomalies

* **Visual Overlap:** There is significant visual overlap in the center-top and center-bottom areas.
    * The "Efficiency Metrics" hexagon overlaps the "Application Developer Evaluation" box.
    * The "Safety & Alignment" hexagon overlaps the "Controlled Experiments" box.
    * This design choice visually integrates the specific metrics with the stakeholders and stages, but it slightly obscures the text.

* **Color Coding:** The colors of the bottom "Progressive Evaluation Stages" loosely correspond to the colors of the hexagons and stakeholder boxes above them, suggesting a thematic link:
    * **Blue:** Component Evaluation <-> Model Developer / Capability Assessment
    * **Green:** System Integration <-> Efficiency Metrics
    * **Red:** Controlled Experiments <-> Safety & Alignment / Robustness
    * **Yellow:** Limited Field Trials <-> Deployment Readiness
    * **Purple:** Full Deployment <-> Safety & Alignment (matches the purple hue of the hexagon's border/fill)

### Interpretation

This framework proposes that evaluating AI agents is a complex, multi-faceted problem that must be viewed through three distinct lenses simultaneously:

1. **The "What" (Dimensions):** The six hexagons define *what* is being measured. The framework moves beyond simple accuracy (Capability) to include how the system behaves under stress (Robustness), how much it costs (Efficiency), how safe it is (Safety), how easy it is to use (User Experience), and how ready it is for the real world (Deployment Readiness).

2. **The "Who" (Stakeholders):** The top layer acknowledges that evaluation priorities depend on the stakeholder's role. A developer cares about robustness; an end-user cares about the experience.

3. **The "When" (Stages):** The bottom layer places evaluation in a temporal context. You don't evaluate everything at once; you move from unit testing (Component Evaluation) to real-world usage (Full Deployment).

**Conclusion:** The diagram argues against narrow benchmarks. A high "Capability" score is insufficient if the agent fails "Safety" checks or has poor "Efficiency." On this reading, successful AI agent deployment requires meeting criteria in all six hexagonal dimensions at each of the five progressive stages.
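
To make the conclusion concrete, the sketch below treats evaluation as a gate in which every dimension must clear its own threshold, so that a strong score in one dimension cannot compensate for a weak score in another. The scores, the uniform 0.7 threshold, and the function names are all hypothetical; the diagram does not define any scoring scale or pass criteria.

```python
# Dimension keys are shorthand for the six hexagons in the diagram.
DIMENSIONS = (
    "capability",
    "robustness",
    "efficiency",
    "safety",
    "user_experience",
    "deployment_readiness",
)


def failing_dimensions(scores, thresholds):
    """Return the dimensions whose score is missing or below its threshold."""
    return [d for d in DIMENSIONS if scores.get(d, 0.0) < thresholds[d]]


def passes_gate(scores, thresholds):
    """An agent passes only if no dimension fails (no averaging across dimensions)."""
    return not failing_dimensions(scores, thresholds)


if __name__ == "__main__":
    # Hypothetical values on an assumed 0-to-1 scale.
    thresholds = {d: 0.7 for d in DIMENSIONS}
    scores = {
        "capability": 0.95,
        "robustness": 0.80,
        "efficiency": 0.75,
        "safety": 0.60,
        "user_experience": 0.90,
        "deployment_readiness": 0.80,
    }
    print(failing_dimensions(scores, thresholds))  # ['safety']
    print(passes_gate(scores, thresholds))         # False
```

The example agent has the highest capability score of any dimension yet still fails the gate because of safety, which mirrors the argument against relying on a single benchmark. In a staged rollout, the same check could be repeated at each stage with stage-specific thresholds.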
