## Diagram: Comprehensive AI Agent Evaluation Framework

### Overview

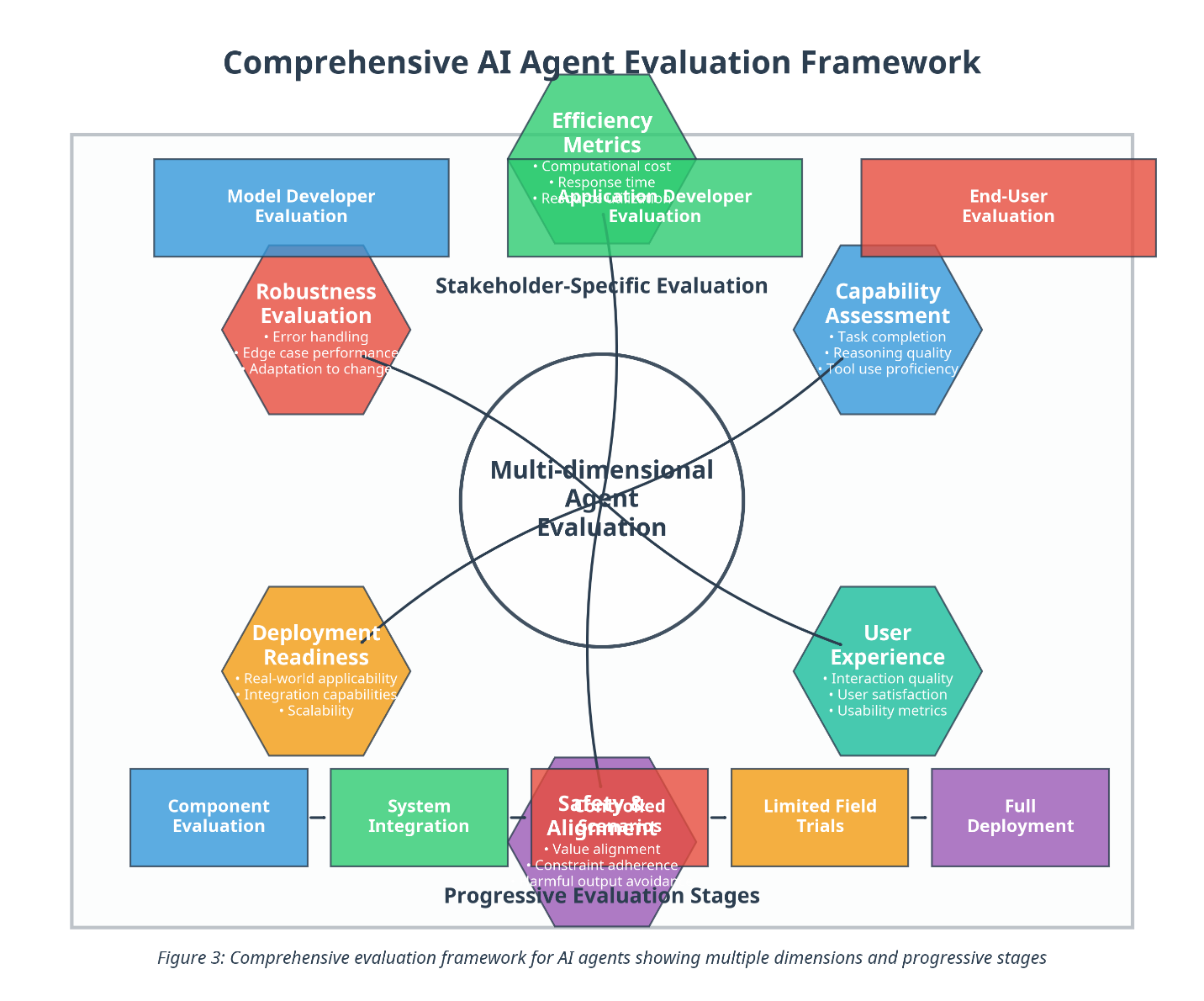

The diagram illustrates a comprehensive framework for evaluating AI agents. Overlapping circles representing evaluation dimensions and stages are centered on "Multi-dimensional Agent Evaluation." The diagram emphasizes a stakeholder-specific approach and progressive evaluation stages, from component evaluation to full deployment.

### Components/Axes

The diagram is structured around the following key components:

* **Central Concept:** Multi-dimensional Agent Evaluation (located in the center of the diagram, within a black circle).

* **Stakeholder-Specific Evaluations (Outer Circles):**

    * Model Developer Evaluation (Top-left, purple)

    * End-User Evaluation (Top-right, teal)

    * Robustness Evaluation (Left, orange)

    * Capability Assessment (Right, yellow)

    * Deployment Readiness (Bottom-left, dark blue)

    * User Experience (Bottom-right, light blue)

    * Safety & Alignment (Bottom-center, red)

* **Progressive Evaluation Stages (Bottom Row):**

    * Component Evaluation (Dark purple)

    * System Integration (Dark blue)

    * Limited Field Trials (Red)

    * Full Deployment (Teal)

* **Text within Circles:** Most evaluation circles contain bullet points listing specific evaluation criteria; the End-User Evaluation circle shows no visible text.

* **Title:** Comprehensive AI Agent Evaluation Framework (Top, black text)

* **Caption:** Figure 3: Comprehensive evaluation framework for AI agents showing multiple dimensions and progressive stages (Bottom, black text)

### Detailed Analysis

The content within each evaluation circle is as follows:

* **Model Developer Evaluation (Purple):**

    * Efficiency Metrics

    * Computational cost

    * Response time

    * Reapplication Developer Evaluation

* **End-User Evaluation (Teal):**

    * (No visible text)

* **Robustness Evaluation (Orange):**

    * Error handling

    * Edge case performance

    * Adaptation to change

* **Capability Assessment (Yellow):**

    * Task completion

    * Reasoning quality

    * Tool use proficiency

* **Deployment Readiness (Dark Blue):**

    * Real-world applicability

    * Integration capabilities

    * Scalability

* **User Experience (Light Blue):**

    * Interaction quality

    * User satisfaction

    * Usability metrics

* **Safety & Alignment (Red):**

    * Value alignment

    * Constraint adherence

    * Harmful output avoidance

The Progressive Evaluation Stages are simply labeled as such, without further detail.
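The transcription above can also be captured as plain data, which makes gaps such as the empty End-User Evaluation circle easy to spot. The following is a minimal sketch: the criteria strings are copied from the list above, while the names `FRAMEWORK`, `STAGES`, and `dimensions_without_criteria` are hypothetical and chosen only for illustration.

```python
# Criteria as transcribed from the diagram description; an empty list means
# no text was visible in that circle. All variable and function names here
# are illustrative assumptions, not part of the diagram.
FRAMEWORK = {
    "Model Developer Evaluation": [
        "Efficiency Metrics",
        "Computational cost",
        "Response time",
        "Reapplication Developer Evaluation",  # label kept as transcribed; meaning unclear
    ],
    "End-User Evaluation": [],
    "Robustness Evaluation": [
        "Error handling",
        "Edge case performance",
        "Adaptation to change",
    ],
    "Capability Assessment": [
        "Task completion",
        "Reasoning quality",
        "Tool use proficiency",
    ],
    "Deployment Readiness": [
        "Real-world applicability",
        "Integration capabilities",
        "Scalability",
    ],
    "User Experience": [
        "Interaction quality",
        "User satisfaction",
        "Usability metrics",
    ],
    "Safety & Alignment": [
        "Value alignment",
        "Constraint adherence",
        "Harmful output avoidance",
    ],
}

# Progressive stages in the order given by the diagram's bottom row.
STAGES = [
    "Component Evaluation",
    "System Integration",
    "Limited Field Trials",
    "Full Deployment",
]


def dimensions_without_criteria(framework):
    """Return the names of dimensions whose criteria list is empty."""
    return [name for name, criteria in framework.items() if not criteria]


if __name__ == "__main__":
    print(dimensions_without_criteria(FRAMEWORK))
```

Keeping the labels as uninterpreted strings avoids reading more into the diagram than it shows; any grouping of criteria (for example, treating computational cost as a sub-item of Efficiency Metrics) would be an additional assumption.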

### Key Observations

* The diagram emphasizes the interconnectedness of different evaluation aspects. The overlapping circles suggest that a comprehensive evaluation requires considering multiple dimensions simultaneously.

* The "Multi-dimensional Agent Evaluation" at the center highlights the holistic nature of the framework.

* The progressive stages indicate a phased approach to evaluation, starting with individual components and culminating in real-world deployment.

* The color coding helps to visually distinguish between different evaluation areas.

* The text within the circles provides specific criteria for each evaluation dimension.

### Interpretation

This diagram presents a conceptual framework for evaluating AI agents, moving beyond simple performance metrics to encompass a broader range of considerations. The framework acknowledges that AI evaluation is not a one-size-fits-all process and must be tailored to different stakeholders (developers, end-users, etc.). The progressive stages suggest a risk-mitigation strategy, where AI agents are thoroughly tested and validated before being deployed in real-world scenarios.
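The phased, risk-mitigating reading of the stages can be sketched as a simple gate: an agent advances to the next stage only if it passed every earlier one. This is an interpretation rather than something the diagram specifies; the function name `furthest_stage_passed` and the boolean pass/fail representation are assumptions made for illustration.

```python
from typing import Dict, Optional

# Stage order taken from the diagram's bottom row.
EVALUATION_STAGES = [
    "Component Evaluation",
    "System Integration",
    "Limited Field Trials",
    "Full Deployment",
]


def furthest_stage_passed(passed: Dict[str, bool]) -> Optional[str]:
    """Return the last stage passed in sequence.

    Stops at the first stage that failed or has no recorded result, so a
    later pass cannot compensate for an earlier failure. Returns None if
    the first stage was not passed.
    """
    reached = None
    for stage in EVALUATION_STAGES:
        if not passed.get(stage, False):
            break
        reached = stage
    return reached


if __name__ == "__main__":
    # Hypothetical results: the field trial failed, so evaluation stops
    # at System Integration regardless of any later result.
    results = {
        "Component Evaluation": True,
        "System Integration": True,
        "Limited Field Trials": False,
        "Full Deployment": True,
    }
    print(furthest_stage_passed(results))
```

In practice each stage would likely aggregate many checks across the evaluation dimensions; the single boolean per stage is a deliberate simplification.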

The overlapping circles indicate that these evaluation dimensions are not mutually exclusive. For example, robustness (orange) and safety & alignment (red) are likely to be closely related, as an agent that is not robust may also be prone to generating harmful outputs. Similarly, user experience (light blue) and end-user evaluation (teal) are interconnected, as usability metrics are a key component of end-user satisfaction.

The label "Reapplication Developer Evaluation," which appears in the Model Developer Evaluation circle alongside "Efficiency Metrics," is ambiguous and could benefit from further clarification. One possible reading is that the framework considers the effort required to adapt or re-apply the AI agent to new tasks or contexts.

Overall, the diagram provides a valuable high-level overview of a comprehensive AI agent evaluation process. It serves as a useful starting point for developing more detailed evaluation plans and checklists.