## Diagram: Comprehensive AI Agent Evaluation Framework

### Overview

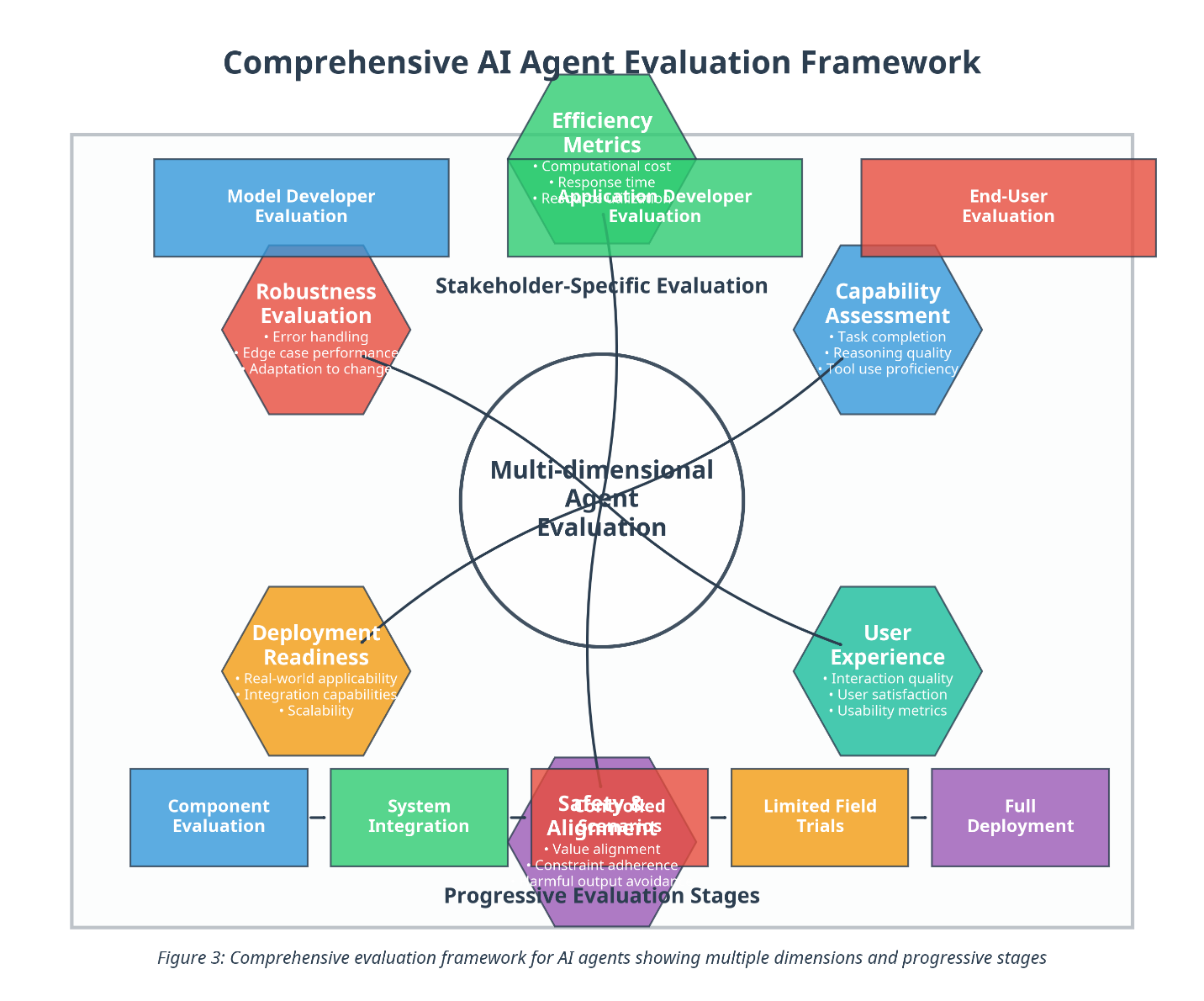

The diagram illustrates a multi-dimensional framework for evaluating AI agents, emphasizing both stakeholder-specific criteria and progressive evaluation stages. It uses a central hub ("Multi-dimensional Agent Evaluation") connected to hexagonal and rectangular components representing evaluation dimensions and stages.

### Components/Axes

#### Central Hub

- **Multi-dimensional Agent Evaluation** (central circle)

  - Connected to six hexagonal components (Stakeholder-Specific Evaluation) and five rectangular components (Progressive Evaluation Stages).

#### Stakeholder-Specific Evaluation (Hexagons)

1. **Efficiency Metrics** (green)

   - Computational cost, response time, application deployment.

2. **Capability Assessment** (blue)

   - Task completion, reasoning quality, tool use proficiency.

3. **User Experience** (teal)

   - Interaction quality, user satisfaction, usability metrics.

4. **Deployment Readiness** (orange)

   - Real-world applicability, integration capabilities, scalability.

5. **Robustness Evaluation** (red)

   - Error handling, edge case performance, adaptation to change.

6. **Model Developer Evaluation** (blue)

   - Accuracy, bias detection, explainability.

#### Progressive Evaluation Stages (Rectangles)

1. **Component Evaluation** (blue)

   - Unit testing, code quality, documentation.

2. **System Integration** (green)

   - API compatibility, interoperability, security.

3. **Safety & Alignment** (purple)

   - Value alignment, constraint adherence, harmful output avoidance.

4. **Limited Field Trials** (orange)

   - Performance metrics, user feedback, error rates.

5. **Full Deployment** (purple)

   - Monitoring, maintenance, scalability.
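The eleven components listed above can be captured as a small registry, which makes the taxonomy easy to query or validate. This is a minimal sketch: the `Kind` enum, the `Component` dataclass, and the `names_by_color` helper are illustrative names, not part of the diagram; only the component names, colors, and criteria come from the description.

```python
from collections import defaultdict
from dataclasses import dataclass
from enum import Enum
from typing import Dict, List, Tuple


class Kind(Enum):
    """Shape used in the diagram for each group of components."""
    STAKEHOLDER_DIMENSION = "hexagon"
    PROGRESSIVE_STAGE = "rectangle"


@dataclass(frozen=True)
class Component:
    name: str
    kind: Kind
    color: str
    criteria: Tuple[str, ...]


H, R = Kind.STAKEHOLDER_DIMENSION, Kind.PROGRESSIVE_STAGE

# Names, colors, and criteria are transcribed from the lists above.
COMPONENTS: Tuple[Component, ...] = (
    Component("Efficiency Metrics", H, "green",
              ("computational cost", "response time", "application deployment")),
    Component("Capability Assessment", H, "blue",
              ("task completion", "reasoning quality", "tool use proficiency")),
    Component("User Experience", H, "teal",
              ("interaction quality", "user satisfaction", "usability metrics")),
    Component("Deployment Readiness", H, "orange",
              ("real-world applicability", "integration capabilities", "scalability")),
    Component("Robustness Evaluation", H, "red",
              ("error handling", "edge case performance", "adaptation to change")),
    Component("Model Developer Evaluation", H, "blue",
              ("accuracy", "bias detection", "explainability")),
    Component("Component Evaluation", R, "blue",
              ("unit testing", "code quality", "documentation")),
    Component("System Integration", R, "green",
              ("API compatibility", "interoperability", "security")),
    Component("Safety & Alignment", R, "purple",
              ("value alignment", "constraint adherence", "harmful output avoidance")),
    Component("Limited Field Trials", R, "orange",
              ("performance metrics", "user feedback", "error rates")),
    Component("Full Deployment", R, "purple",
              ("monitoring", "maintenance", "scalability")),
)


def names_by_color(components: Tuple[Component, ...] = COMPONENTS) -> Dict[str, List[str]]:
    """Group component names by their diagram color, preserving list order."""
    grouped: Dict[str, List[str]] = defaultdict(list)
    for component in components:
        grouped[component.color].append(component.name)
    return dict(grouped)
```

Grouping with `names_by_color()` places three components under blue, because Capability Assessment is labeled blue alongside Model Developer Evaluation and Component Evaluation.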

### Detailed Analysis

- **Color Coding**:

  - Blue: Capability Assessment, Model Developer Evaluation, Component Evaluation.

  - Green: Efficiency Metrics, System Integration.

  - Red: Robustness Evaluation.

  - Orange: Deployment Readiness, Limited Field Trials.

  - Purple: Safety & Alignment, Full Deployment.

  - Teal: User Experience.

- **Flow and Relationships**:

  - The central hub connects all evaluation dimensions, suggesting interdependence.

  - Progressive stages flow linearly from Component Evaluation (left) to Full Deployment (right), indicating a phased approach.
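The left-to-right flow of the stages can be sketched as a sequence of gates. The diagram shows only the order of the stages; the rule that a failed or missing check blocks every later stage is an assumption made for this sketch, and `run_stages` and the `checks` mapping are hypothetical names.

```python
from typing import Callable, Dict, List, Optional, Tuple

# Stage order as drawn in the diagram, left to right.
STAGES: List[str] = [
    "Component Evaluation",
    "System Integration",
    "Safety & Alignment",
    "Limited Field Trials",
    "Full Deployment",
]


def run_stages(
    checks: Dict[str, Callable[[], bool]],
) -> Tuple[List[str], Optional[str]]:
    """Run stage checks in order and stop at the first one that fails.

    Returns the stages that passed and the name of the blocking stage,
    or None if every stage passed. A stage with no registered check is
    treated as blocking (an assumption of this sketch, not of the diagram).
    """
    passed: List[str] = []
    for stage in STAGES:
        check = checks.get(stage)
        if check is None or not check():
            return passed, stage
        passed.append(stage)
    return passed, None
```

For example, if the Safety & Alignment check returns `False`, `run_stages` reports the first two stages as passed and names Safety & Alignment as the blocking stage, so neither field trials nor full deployment is attempted.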

### Key Observations

1. **Interconnected Dimensions**: Stakeholder-specific evaluations (e.g., User Experience, Robustness) are presented without any ranking among them, which suggests they are meant to carry equal weight in a holistic assessment.

2. **Staged Progression**: Evaluation begins with technical components (e.g., code quality) and advances stage by stage to real-world deployment, so each stage builds on the one before it.

3. **Balanced Focus**: Combines technical metrics (e.g., computational cost) with ethical considerations (e.g., value alignment).
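If the six stakeholder dimensions are read as carrying equal weight, as observation 1 suggests, an overall score reduces to an unweighted mean. The sketch below assumes each dimension has already been scored on a 0 to 1 scale; the diagram defines no scale and no aggregation rule, so both the scale and the `overall_score` function are illustrative.

```python
from statistics import mean
from typing import Dict

# The six hexagonal dimensions named in the diagram.
DIMENSIONS = (
    "Efficiency Metrics",
    "Capability Assessment",
    "User Experience",
    "Deployment Readiness",
    "Robustness Evaluation",
    "Model Developer Evaluation",
)


def overall_score(scores: Dict[str, float]) -> float:
    """Return the unweighted mean of the six dimension scores.

    Raises ValueError if a dimension is missing or a score falls outside
    the assumed 0 to 1 range, so that no dimension is silently skipped.
    """
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"missing scores for: {', '.join(missing)}")
    for dimension in DIMENSIONS:
        if not 0.0 <= scores[dimension] <= 1.0:
            raise ValueError(f"score for {dimension} must be between 0 and 1")
    return mean(scores[d] for d in DIMENSIONS)
```

Requiring a score for every dimension keeps the aggregate from hiding a dimension that was never evaluated, which matches the holistic reading of the diagram.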

### Interpretation

The framework prioritizes **comprehensiveness** by integrating technical, ethical, and user-centric criteria. The progressive stages are intended to test AI agents at each development phase, from code quality to real-world performance. The hexagonal components highlight the need for **multi-stakeholder input**, balancing developer rigor (e.g., bias detection) with end-user satisfaction (e.g., usability metrics). By placing alignment checks and field trials before full deployment, the structure aims to reduce the risk of biased or unsafe deployments.

*Note: No numerical data or trends are present; the diagram focuses on categorical relationships and evaluation priorities.*