## [Diagram]: Factuality-Enhanced LLM Training Pipeline (3-Step Process)

### Overview

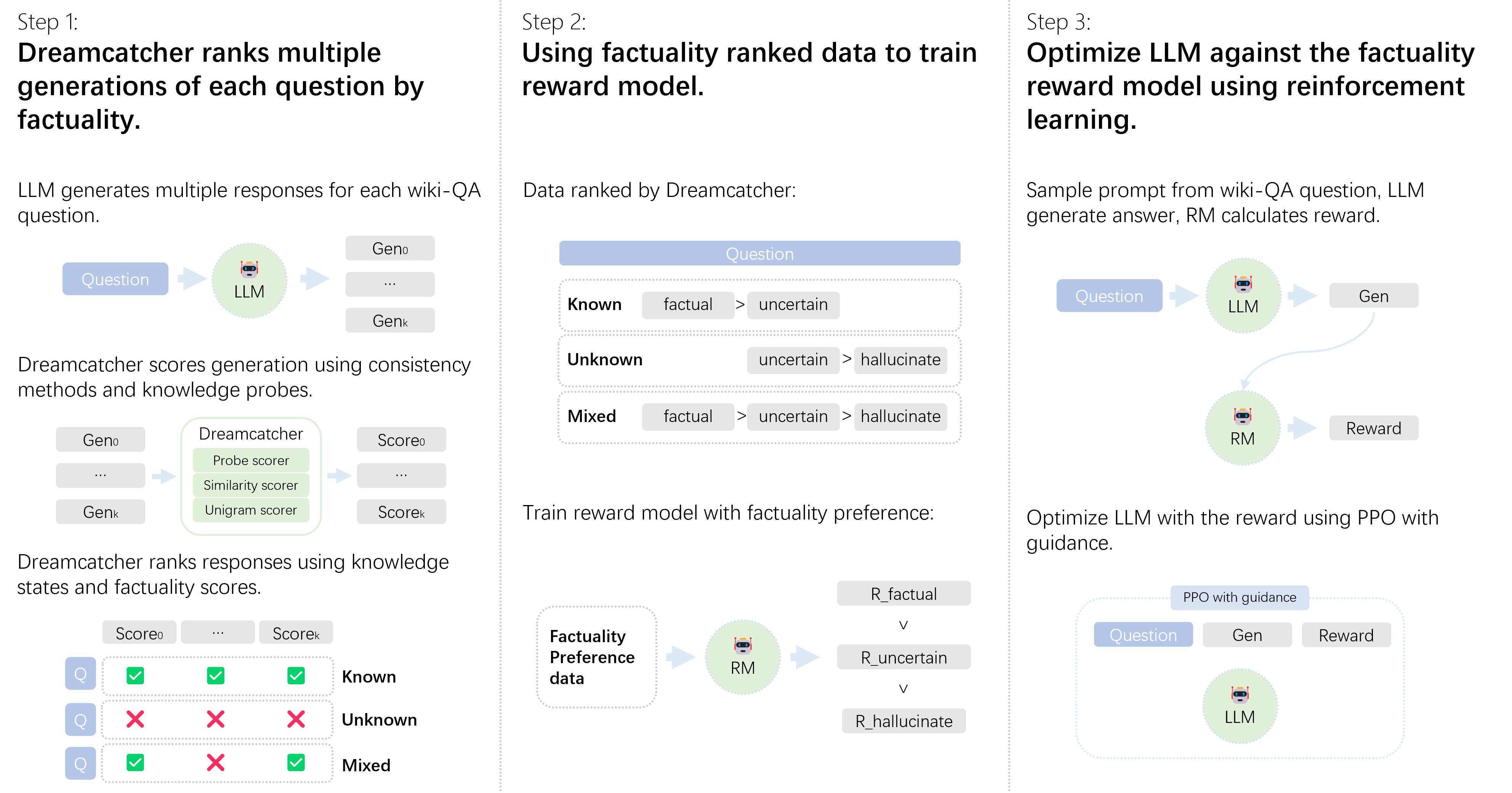

The image is a technical diagram illustrating a three-step pipeline for improving the factuality of a Large Language Model (LLM). The pipeline uses **Dreamcatcher** (a factuality-ranking system), a **Reward Model (RM)**, and reinforcement learning with **Proximal Policy Optimization (PPO)**. Each step is visually segmented with text descriptions, icons, and flow arrows.

### Components/Steps (Spatial Layout: Left → Middle → Right)

The diagram is divided into three vertical sections (Step 1, Step 2, Step 3) with text, icons, and flow arrows:

#### Step 1 (Left): *“Dreamcatcher ranks multiple generations of each question by factuality.”*

- **Sub-Components:**
  1. *“LLM generates multiple responses for each wiki-QA question.”*
     - Visual: `Question` (blue box) → `LLM` (green icon) → `Gen₀`, `...`, `Genₖ` (gray boxes representing the multiple responses).
  2. *“Dreamcatcher scores generation using consistency methods and knowledge probes.”*
     - Visual: `Gen₀`, `...`, `Genₖ` → `Dreamcatcher` (green box containing “Probe scorer,” “Similarity scorer,” and “Unigram scorer”) → `Score₀`, `...`, `Scoreₖ` (gray boxes representing factuality scores).
  3. *“Dreamcatcher ranks responses using knowledge states and factuality scores.”*
     - Visual: Three rows (each labeled “Q”) of checkmarks (✓) and crosses (×), one row per knowledge state:
       - `Known`: all ✓ (factual).
       - `Unknown`: all × (hallucinated).
       - `Mixed`: ✓, ×, ✓ (a mix of factual and hallucinated).
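
The knowledge-state assignment in item 3 can be sketched as follows. This is a minimal illustration, not Dreamcatcher's implementation: it assumes each generation's scores have already been combined into one number, and that a hypothetical cutoff (`FACTUAL_THRESHOLD`) separates factual (✓) from hallucinated (×) generations. The function names are also hypothetical.

```python
from typing import List, Sequence

# Hypothetical cutoff (not from the diagram): a generation whose combined
# factuality score reaches this value is treated as factual (✓), otherwise
# as hallucinated (×).
FACTUAL_THRESHOLD = 0.5


def label_generations(scores: Sequence[float],
                      threshold: float = FACTUAL_THRESHOLD) -> List[bool]:
    """Map Score_0..Score_k to factual (True) / hallucinated (False) labels."""
    return [score >= threshold for score in scores]


def knowledge_state(labels: Sequence[bool]) -> str:
    """Assign a question its knowledge state from per-generation labels."""
    if len(labels) == 0:
        raise ValueError("at least one generation is required")
    if all(labels):
        return "Known"    # every sampled generation is factual
    if not any(labels):
        return "Unknown"  # every sampled generation is hallucinated
    return "Mixed"        # factual and hallucinated generations coexist


if __name__ == "__main__":
    # Toy score rows mirroring the diagram's Known / Unknown / Mixed rows.
    for row in ([0.9, 0.8, 0.7], [0.1, 0.2, 0.3], [0.9, 0.2, 0.8]):
        labels = label_generations(row)
        print(row, labels, knowledge_state(labels))
```

The state depends only on whether all, none, or some of a question's samples are judged factual, which matches the three rows in the diagram.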

#### Step 2 (Middle): *“Using factuality ranked data to train reward model.”*

- **Sub-Components:**
  1. *“Data ranked by Dreamcatcher:”*
     - Visual: `Question` (blue box) → three categories with factuality preferences:
       - `Known`: `factual > uncertain`
       - `Unknown`: `uncertain > hallucinate`
       - `Mixed`: `factual > uncertain > hallucinate`
  2. *“Train reward model with factuality preference:”*
     - Visual: `Factuality Preference data` (gray box) → `RM` (green icon) → `R_factual`, `R_uncertain`, `R_hallucinate` (gray boxes separated by “v” for *versus*, indicating pairwise reward comparisons).
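
A minimal sketch of how the Step 2 preferences could become reward-model training pairs. It assumes a standard pairwise (Bradley-Terry) loss of the kind commonly used for RLHF reward models; the diagram shows only the orderings. Expanding `factual > uncertain > hallucinate` into all three ordered pairs is an assumption, and `PREFERENCES`, `preference_pairs`, and `pairwise_loss` are hypothetical names.

```python
import math
from typing import Dict, List, Tuple

# Preference orderings per knowledge state, transcribed from the diagram
# (best first).
PREFERENCES: Dict[str, List[str]] = {
    "Known": ["factual", "uncertain"],
    "Unknown": ["uncertain", "hallucinate"],
    "Mixed": ["factual", "uncertain", "hallucinate"],
}


def preference_pairs(state: str) -> List[Tuple[str, str]]:
    """Expand an ordering a > b > c into (chosen, rejected) pairs.

    Including the transitive pair (a, c) is an assumption of this sketch.
    """
    order = PREFERENCES[state]
    return [(order[i], order[j])
            for i in range(len(order))
            for j in range(i + 1, len(order))]


def pairwise_loss(r_chosen: float, r_rejected: float) -> float:
    """Assumed Bradley-Terry loss: -log(sigmoid(r_chosen - r_rejected))."""
    margin = r_chosen - r_rejected
    # Numerically stable softplus(-margin), which equals -log(sigmoid(margin)).
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


if __name__ == "__main__":
    # Toy RM outputs for one question's three response types.
    rewards = {"factual": 1.0, "uncertain": 0.0, "hallucinate": -1.0}
    for state in PREFERENCES:
        pairs = preference_pairs(state)
        loss = sum(pairwise_loss(rewards[c], rewards[r]) for c, r in pairs)
        print(state, pairs, round(loss, 4))
```

With these toy rewards, every chosen response outscores its rejected partner, so each pairwise loss is below log 2 (about 0.693), the loss for a tied pair. Minimizing the loss pushes `R_factual`, `R_uncertain`, and `R_hallucinate` apart in the preferred order.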

#### Step 3 (Right): *“Optimize LLM against the factuality reward model using reinforcement learning.”*

- **Sub-Components:**
  1. *“Sample prompt from wiki-QA question, LLM generate answer, RM calculates reward.”*
     - Visual: `Question` (blue box) → `LLM` (green icon) → `Gen` (gray box); `RM` (green icon) → `Reward` (gray box).
  2. *“Optimize LLM with the reward using PPO with guidance.”*
     - Visual: `PPO with guidance` (blue box) → `Question`, `Gen`, `Reward` (gray boxes) → `LLM` (green icon).
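
Step 3's PPO update can be illustrated with the standard clipped surrogate objective of Proximal Policy Optimization. This sketch works on sequence-level log-probabilities and precomputed advantages (for example, the RM reward minus a baseline). The diagram's "with guidance" component is not specified, so it is not modeled here, and `ppo_clip_loss` is a hypothetical helper name.

```python
import math
from typing import Sequence


def ppo_clip_loss(logp_new: Sequence[float],
                  logp_old: Sequence[float],
                  advantages: Sequence[float],
                  clip_eps: float = 0.2) -> float:
    """Negative PPO clipped surrogate objective, averaged over samples.

    logp_new / logp_old: log-probabilities of each sampled answer (Gen) under
    the current policy and under the policy that generated it.
    advantages: e.g. RM reward minus a baseline (assumed precomputed).
    """
    n = len(advantages)
    if n == 0 or len(logp_new) != n or len(logp_old) != n:
        raise ValueError("inputs must be non-empty and of equal length")
    total = 0.0
    for lp_new, lp_old, adv in zip(logp_new, logp_old, advantages):
        ratio = math.exp(lp_new - lp_old)  # pi_new(answer) / pi_old(answer)
        clipped = min(max(ratio, 1.0 - clip_eps), 1.0 + clip_eps)
        # Take the pessimistic (smaller) of the unclipped and clipped terms.
        total += min(ratio * adv, clipped * adv)
    return -total / n  # minimizing this maximizes the surrogate objective


if __name__ == "__main__":
    # One answer whose probability doubled under the new policy, with a
    # positive advantage: the ratio 2.0 is clipped to 1.2.
    print(ppo_clip_loss([math.log(2.0)], [0.0], [1.0]))
```

Clipping limits how far a single update can move the policy toward answers the RM rewards, which is the main reason PPO is a common choice for this kind of fine-tuning.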

### Detailed Analysis (Content Details)

- **Step 1 (Ranking):**
  - The LLM generates multiple responses (`Gen₀` to `Genₖ`) for each wiki-QA question.
  - Dreamcatcher scores the responses with three scorers, the *Probe scorer*, *Similarity scorer*, and *Unigram scorer*, producing `Score₀` to `Scoreₖ`.
  - Each question is then assigned one of three knowledge states according to whether its responses are judged factual:
    - `Known`: all responses factual (✓, ✓, ✓).
    - `Unknown`: all responses hallucinated (×, ×, ×).
    - `Mixed`: some responses factual and some hallucinated (✓, ×, ✓).

- **Step 2 (Training Reward Model):**
  - Ranked data from Step 1 is used to train a Reward Model (RM) with factuality preferences:
    - `Known`: `factual > uncertain`
    - `Unknown`: `uncertain > hallucinate`
    - `Mixed`: `factual > uncertain > hallucinate`
  - The RM learns to assign rewards (`R_factual`, `R_uncertain`, `R_hallucinate`) based on these preferences.

- **Step 3 (Optimizing LLM):**
  - For a sampled wiki-QA question, the LLM generates an answer (`Gen`), and the RM calculates a reward for it.
  - The LLM is optimized using **PPO (Proximal Policy Optimization)** with guidance, aligning its outputs with the RM’s factuality preferences.
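
The diagram does not show how the RM's score enters the PPO update. A common RLHF practice, assumed here rather than confirmed by the diagram, is to subtract a KL penalty that keeps the policy close to a frozen reference model. `shaped_reward` and the coefficient `beta` are hypothetical; this sketch only computes the shaped scalar reward for one answer.

```python
from typing import Sequence


def shaped_reward(rm_score: float,
                  logp_policy: Sequence[float],
                  logp_ref: Sequence[float],
                  beta: float = 0.1) -> float:
    """RM reward minus an assumed KL penalty toward a frozen reference model.

    The KL term is the usual sample estimate: the sum over generated tokens of
    log pi(token) - log pi_ref(token). beta is a hypothetical coefficient.
    """
    if len(logp_policy) != len(logp_ref):
        raise ValueError("per-token log-prob lists must align")
    kl_estimate = sum(p - r for p, r in zip(logp_policy, logp_ref))
    return rm_score - beta * kl_estimate


if __name__ == "__main__":
    # Identical policies incur no penalty; a policy that is more confident
    # than the reference on its own tokens is penalized.
    print(shaped_reward(1.0, [-1.0, -2.0], [-1.0, -2.0]))
    print(shaped_reward(1.0, [-0.5], [-1.0]))
```

A larger `beta` trades reward maximization for staying closer to the original model's behavior.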

### Key Observations

- **Sequential Flow:** The process is linear: *Ranking (Step 1) → Training RM (Step 2) → Optimizing LLM (Step 3)*.

- **Multi-Scorer Ranking:** Dreamcatcher combines three scorers, a knowledge-probe signal (probe scorer) and two consistency-based signals (similarity and unigram scorers), rather than relying on a single factuality signal.

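- **Factuality Hierarchy:** All three categories’ preferences are consistent with the single ordering `factual > uncertain > hallucinate`. “Known” questions contribute only `factual > uncertain`, “Unknown” questions only `uncertain > hallucinate`, and “Mixed” questions the full chain.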

- **RL for Optimization:** PPO (a reinforcement learning algorithm) is used to iteratively improve the LLM’s factuality.
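
To make the multi-scorer idea concrete, here is a sketch that merges the three scorer outputs into one factuality score per generation and ranks the generations. The diagram does not say how Dreamcatcher combines its scorers; the weighted average, the equal `WEIGHTS`, and the helper names are hypothetical.

```python
from typing import Dict, List, Sequence

# Hypothetical equal weights; the diagram does not specify how the probe,
# similarity, and unigram scorers are combined.
WEIGHTS: Dict[str, float] = {"probe": 1.0, "similarity": 1.0, "unigram": 1.0}


def combine_scores(per_scorer: Dict[str, Sequence[float]],
                   weights: Dict[str, float] = WEIGHTS) -> List[float]:
    """Weighted average of every scorer's score for each generation."""
    lengths = {len(scores) for scores in per_scorer.values()}
    if len(lengths) != 1:
        raise ValueError("every scorer must score the same generations")
    n = lengths.pop()
    total_weight = sum(weights[name] for name in per_scorer)
    return [sum(weights[name] * scores[i] for name, scores in per_scorer.items())
            / total_weight
            for i in range(n)]


def rank_generations(combined: Sequence[float]) -> List[int]:
    """Indices of generations ordered from most to least factual."""
    return sorted(range(len(combined)), key=lambda i: combined[i], reverse=True)


if __name__ == "__main__":
    scores = {
        "probe": [0.9, 0.2, 0.6],
        "similarity": [0.8, 0.3, 0.5],
        "unigram": [0.7, 0.1, 0.4],
    }
    combined = combine_scores(scores)
    print(combined, rank_generations(combined))
```

Averaging lets one weak signal be offset by the others; any learned or tuned weighting would replace the equal weights assumed here.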

### Interpretation

This pipeline addresses the challenge of **hallucinations** in LLMs by:

1. **Ranking Responses:** Dreamcatcher evaluates multiple LLM outputs per question to separate factual from hallucinated responses and to determine the model’s knowledge state for that question.

2. **Training a Reward Model:** The RM learns to distinguish between factuality levels, providing a signal for optimization.

3. **Optimizing the LLM:** Using PPO, the LLM is fine-tuned to generate responses that align with the RM’s factuality preferences, with the goal of reducing hallucinations and improving accuracy.

This approach combines ranking, reward modeling, and reinforcement learning to improve the LLM’s ability to produce factually correct answers, with the aim of making it more reliable for tasks such as question answering.