## Flowchart: Misinformation Lifecycle and Detection System

### Overview

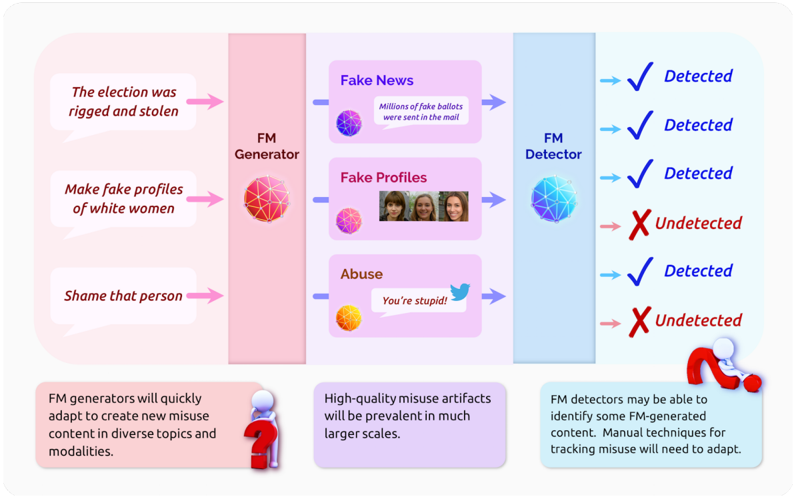

The diagram illustrates the lifecycle of misinformation generation and detection, showing how fake content is created, propagated, and identified. Components are color-coded by stage (pink for the generator, purple for the generated content, blue for the detector). Arrows indicate the direction of flow, and check and cross marks indicate detection status.

### Components

- **FM Generator** (pink): Source of misinformation creation

- **Fake News** (purple): Misinformation content type

- **Fake Profiles** (purple): Synthetic identity creation

- **Abuse** (purple): Harassment tactics

- **FM Detector** (blue): Detection system

- **Detection Status**:

  - ✅ Detected (blue checkmark)

  - ❌ Undetected (red X)

- **Text Boxes**: Additional contextual information

### Detailed Analysis

1. **FM Generator** (pink):

   - Inputs:

     - "The election was rigged and stolen"

     - "Make fake profiles of white women"

     - "Shame that person"

   - Outputs:

     - Fake News (e.g., "Millions of fake ballots were sent in the mail")

     - Fake Profiles (visual representation of 3 women)

     - Abuse (e.g., "You're stupid!" with Twitter bird icon)

2. **FM Detector** (blue):

   - Detection outcomes:

     - ✅ Detected:

       - Fake News (ballots)

       - Fake Profiles (women)

       - Abuse (Twitter message)

     - ❌ Undetected:

       - Fake News (ballots)

       - Abuse (Twitter message)

3. **Text Boxes**:

   - Bottom-left (pink): "FM generators will quickly adapt to create new misuse content in diverse topics and modalities."

   - Bottom-center (purple): "High-quality misuse artifacts will be prevalent in much larger scales."

   - Bottom-right (blue): "FM detectors may be able to identify some FM-generated content. Manual techniques for tracking misuse will need to adapt."

### Key Observations

- **Detection Paradox**:

  - 2 of the 3 content types show mixed detection status: Fake News and Abuse each appear once as detected and once as undetected

  - Fake Profiles appear only as detected

- **Adaptability Challenge**:

  - Generators are explicitly stated to "quickly adapt" to new topics and modalities

  - Detectors are said to identify only "some" FM-generated content, and manual techniques for tracking misuse "will need to adapt"

- **Scale Concern**:

  - High-quality misuse artifacts are expected to be prevalent at "much larger scales"

### Interpretation

This diagram depicts an arms race between misinformation generators and detection systems. FM detectors show only partial success: 3 of the 5 depicted outcomes (60%) are marked as detected. Combined with the explicit statement that generators will adapt quickly, this suggests an escalating threat. Fake Profiles appear only as detected, while the text-based content (Fake News and Abuse) appears as both detected and undetected. This may hint that text is harder to classify reliably than synthetic profile images, although the diagram is illustrative and reports no measured detection rates.
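The 60% figure can be checked by tallying the outcomes listed under Detailed Analysis. The following is a minimal sketch: the `OUTCOMES` list and the `detection_summary` helper are hypothetical names introduced here for illustration, and the counts describe icons drawn in the diagram, not measured detector performance.

```python
from collections import Counter

# Outcomes transcribed from the "Detailed Analysis" list.
# The (content_type, was_detected) layout is an illustrative assumption.
OUTCOMES = [
    ("Fake News", True),
    ("Fake Profiles", True),
    ("Abuse", True),
    ("Fake News", False),
    ("Abuse", False),
]


def detection_summary(outcomes):
    """Return (per-type detected fraction, overall detected fraction)."""
    detected = Counter()
    total = Counter()
    for content_type, was_detected in outcomes:
        total[content_type] += 1
        if was_detected:
            detected[content_type] += 1
    per_type = {t: detected[t] / total[t] for t in total}
    overall = sum(detected.values()) / sum(total.values())
    return per_type, overall


per_type, overall = detection_summary(OUTCOMES)
for content_type, rate in per_type.items():
    print(f"{content_type}: {rate:.0%}")
print(f"Overall: {overall:.0%}")
```

Under these assumptions, Fake News and Abuse each come out at 50%, Fake Profiles at 100%, and the overall share at 60%.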

The bottom text boxes emphasize that detection methods must keep evolving, including the manual techniques used to track misuse. This suggests that purely automated systems may be insufficient against rapidly changing misinformation tactics. The mixed detection outcomes also point to possible weaknesses in content classification, particularly for text-based misinformation, which can be reworded to evade detection.

The Twitter bird icon in the abuse example suggests social media as a primary vector for misinformation propagation, while the "fake ballots" example points to electoral interference as a key concern. The race- and gender-specific mention of "white women" in the profile creation prompt hints at targeted demographic manipulation strategies.