## System Architecture Diagram: Retrieval-Augmented Generation (RAG) Pipeline

### Overview

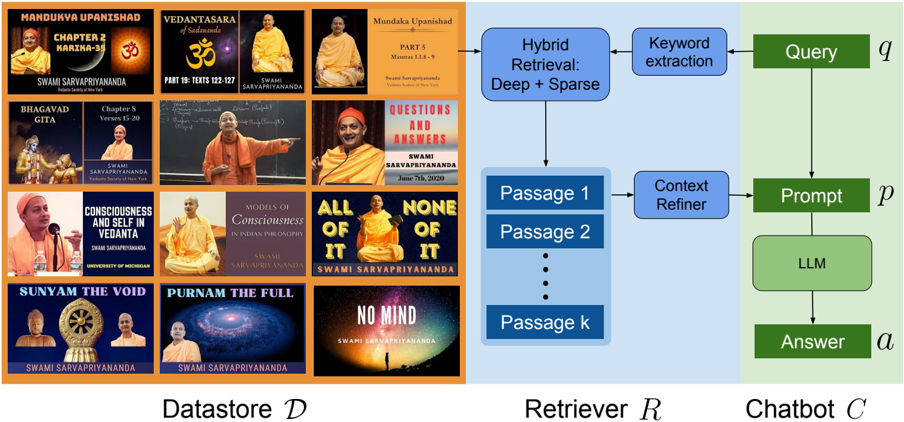

The image is a technical diagram illustrating a three-part system architecture for a retrieval-augmented generation (RAG) chatbot. The system is divided into three distinct, color-coded sections: a **Datastore (D)** on the left (orange background), a **Retriever (R)** in the center (light blue background), and a **Chatbot (C)** on the right (light green background). The diagram shows how content from the knowledge base is retrieved, refined, and combined with a user query to produce a generated answer.

### Components

The diagram is segmented into three vertical panels:

1. **Left Panel - Datastore (D):**

* **Label:** "Datastore D" (bottom center).

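* **Content:** A grid of rectangular thumbnails in four rows, resembling book covers or video thumbnails. Each contains text and an image of a person (presumably Swami Sarvapriyananda). The transcription below lists 13 entries (the last row has four), so the grid is not a uniform 4x3 layout of 12.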

* **Text on Thumbnails (Transcribed):**

* Row 1, Col 1: "MANDUKYA UPANISHAD", "CHAPTER 2 MANTRA-7-8", "SWAMI SARVAPRIYANANDA"

* Row 1, Col 2: "VEDANTASARA", "PART 10: TEXTS 122-127", "SWAMI SARVAPRIYANANDA"

* Row 1, Col 3: "Mundaka Upanishad", "PART 5", "Mantras 1.1.1 - 9", "SWAMI SARVAPRIYANANDA"

* Row 2, Col 1: "BHAGAVAD GITA", "Chapter 8 Verses 15-20", "SWAMI SARVAPRIYANANDA"

* Row 2, Col 2: (Image of a person teaching, text is less clear but appears to be a lecture title)

* Row 2, Col 3: "QUESTIONS AND ANSWERS", "SWAMI SARVAPRIYANANDA", "June 19, 2023"

* Row 3, Col 1: (Image of a person speaking, text is a name/title)

* Row 3, Col 2: "CONSCIOUSNESS AND SELF IN VEDANTA", "SWAMI SARVAPRIYANANDA", "UNIVERSITY OF MICHIGAN"

* Row 3, Col 3: "MODELS OF CONSCIOUSNESS IN INDIAN PHILOSOPHY", "SWAMI SARVAPRIYANANDA"

* Row 4, Col 1: "SUNYAM THE VOID", "SWAMI SARVAPRIYANANDA"

* Row 4, Col 2: "PURNAM THE FULL", "SWAMI SARVAPRIYANANDA"

* Row 4, Col 3: "ALL OF IT", "NONE OF IT", "SWAMI SARVAPRIYANANDA"

* Row 4, Col 4 (Bottom Right): "NO MIND", "SWAMI SARVAPRIYANANDA"

2. **Center Panel - Retriever (R):**

* **Label:** "Retriever R" (bottom center).

* **Flowchart Components (Top to Bottom):**

* A blue box labeled "Hybrid Retrieval: Deep + Sparse".

* An arrow points from this box to a vertical stack of blue boxes labeled "Passage 1", "Passage 2", "..." (ellipsis), and "Passage k".

* An arrow points from the "Passage" stack to a blue box labeled "Context Refiner".

* **Connection:** An arrow originates from the Datastore panel and points into the "Hybrid Retrieval" box.

3. **Right Panel - Chatbot (C):**

* **Label:** "Chatbot C" (bottom center).

* **Flowchart Components (Top to Bottom):**

* A green box labeled "Query" with a variable `q` to its right.

* An arrow points from "Query" down to a green box labeled "Prompt" with a variable `p` to its right.

* An arrow points from "Prompt" down to a green box labeled "LLM".

* An arrow points from "LLM" down to a green box labeled "Answer" with a variable `a` to its right.

* **Connection:** An arrow originates from the "Context Refiner" box in the Retriever panel and points into the "Prompt" box in the Chatbot panel.

### Detailed Analysis

The diagram explicitly maps the data flow for a RAG system:

1. **Input:** A user's "Query" (`q`).

2. **Retrieval Process:** The "Retriever" module applies "Hybrid Retrieval: Deep + Sparse" to the "Datastore" (`D`), which contains a collection of documents (represented by the thumbnails), and extracts the top `k` relevant text chunks ("Passage 1" through "Passage k"). The diagram draws no arrow from "Query" to the Retriever, so the dependence of retrieval on `q` is implied rather than shown.

3. **Context Processing:** The retrieved passages are processed by a "Context Refiner" module.

4. **Generation Process:** The refined context is combined with the original query to form a "Prompt" (`p`). This prompt is fed into a Large Language Model ("LLM").

5. **Output:** The LLM generates an "Answer" (`a`).
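
The five steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the system's actual implementation: the names `Passage`, `retrieve`, `refine`, `build_prompt`, and `answer` are hypothetical, the retriever is a toy word-overlap ranker standing in for hybrid retrieval, and the LLM is passed in as a plain callable.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Passage:
    source: str  # hypothetical field, e.g. a lecture title from the datastore D
    text: str


def retrieve(query: str, datastore: List[Passage], k: int) -> List[Passage]:
    """Toy stand-in for the Retriever R: rank passages by shared lowercase words."""
    q_terms = set(query.lower().split())
    ranked = sorted(
        datastore,
        key=lambda p: len(q_terms & set(p.text.lower().split())),
        reverse=True,
    )
    return ranked[:k]


def refine(passages: List[Passage]) -> str:
    """Toy stand-in for the Context Refiner: tag each passage with its source."""
    return "\n".join(f"[{p.source}] {p.text}" for p in passages)


def build_prompt(query: str, context: str) -> str:
    """Combine the refined context with the query q to form the prompt p."""
    return f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"


def answer(
    query: str,
    datastore: List[Passage],
    llm: Callable[[str], str],
    k: int = 2,
) -> str:
    """Run the pipeline q -> passages -> refined context -> p -> a."""
    passages = retrieve(query, datastore, k)
    context = refine(passages)
    prompt = build_prompt(query, context)
    return llm(prompt)
```

Passing the LLM as a callable keeps the sketch independent of any particular model API; any function that maps a prompt string to an answer string can be plugged in.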

### Key Observations

* **Datastore Specificity:** The datastore is not generic; it is populated with content attributed to "Swami Sarvapriyananda," covering the Mandukya Upanishad, the Bhagavad Gita, and Vedantic philosophy more broadly. This suggests a domain-specific chatbot.

* **Hybrid Retrieval:** The "Deep + Sparse" label suggests a search strategy that combines dense, embedding-based (semantic) retrieval with sparse, keyword-based retrieval. The diagram does not specify which models are used or how the two result sets are merged.

* **Modular Design:** The architecture is clearly modular, separating knowledge storage (Datastore), information retrieval (Retriever), and response generation (Chatbot).

* **Variable Notation:** The use of mathematical variables (`q`, `p`, `a`) for Query, Prompt, and Answer suggests a formal, technical description of the process.
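
The diagram does not say how the deep and sparse results are combined. One common option, shown here purely as an assumption, is reciprocal rank fusion (RRF), which merges ranked lists by summing `1 / (c + rank)` for each passage. The function name, the passage ids, and the constant `c = 60` are illustrative choices, not details taken from the figure.

```python
from collections import defaultdict
from typing import Dict, List


def reciprocal_rank_fusion(rankings: List[List[str]], c: int = 60) -> List[str]:
    """Fuse several ranked lists of passage ids into one ranking.

    Each list contributes 1 / (c + rank) to a passage's score, with ranks
    starting at 1. Passages missing from a list contribute nothing for it.
    """
    scores: Dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, passage_id in enumerate(ranking, start=1):
            scores[passage_id] += 1.0 / (c + rank)
    # Highest fused score first; ties are broken by id for determinism.
    return sorted(scores, key=lambda pid: (-scores[pid], pid))


# Hypothetical outputs of a dense (deep) and a sparse retriever.
dense_ranking = ["p2", "p1", "p3"]
sparse_ranking = ["p1", "p4", "p2"]
fused = reciprocal_rank_fusion([dense_ranking, sparse_ranking])
top_k = fused[:2]  # the passages that would be handed to the Context Refiner
```

RRF uses only rank positions, so it sidesteps the problem that dense similarity scores and sparse keyword scores are not on a comparable scale.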

### Interpretation

This diagram illustrates the architecture of a **domain-specific, retrieval-augmented generative AI system**. Its purpose is to answer questions from a curated knowledge base of spiritual and philosophical lectures and texts.

* **How Elements Relate:** The Datastore is the foundational knowledge source. The Retriever acts as the intelligent bridge, finding relevant information from that source based on a user's query. The Chatbot is the interface and reasoning engine, using the retrieved context to generate a coherent, grounded answer via an LLM.

* **Significance:** The design addresses a key limitation of standalone LLMs (lack of specific, up-to-date, or proprietary knowledge) by grounding the generation process in a curated external datastore. The diagram does not describe what the "Context Refiner" does internally; it presumably filters, condenses, or reformats the retrieved passages before they enter the prompt.

* **Notable Pattern:** The entire pipeline is a linear, feed-forward process from query to answer, with the critical side-input of retrieved context injected at the "Prompt" stage. The specialization of the datastore implies the chatbot's expertise is narrowly defined by the content it has been fed.
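
Because the figure leaves the "Context Refiner" unspecified, the following is only one plausible reading of that step: drop duplicate passages and stop once a word budget is reached. The function name `refine_context` and the `max_words` parameter are hypothetical.

```python
from typing import List


def refine_context(passages: List[str], max_words: int = 50) -> str:
    """Deduplicate passages and keep whole passages until the budget is hit."""
    seen = set()
    kept: List[str] = []
    used = 0
    for text in passages:
        # Normalize case and whitespace so near-identical copies are caught.
        normalized = " ".join(text.lower().split())
        if normalized in seen:
            continue
        seen.add(normalized)
        words = text.split()
        if used + len(words) > max_words:
            break  # keep passages intact rather than cutting one mid-sentence
        kept.append(" ".join(words))
        used += len(words)
    return "\n".join(kept)
```

Passages are assumed to arrive in relevance order, so stopping at the budget discards the least relevant material first.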