# Technical Diagram Analysis: Prompting and Reasoning Strategies

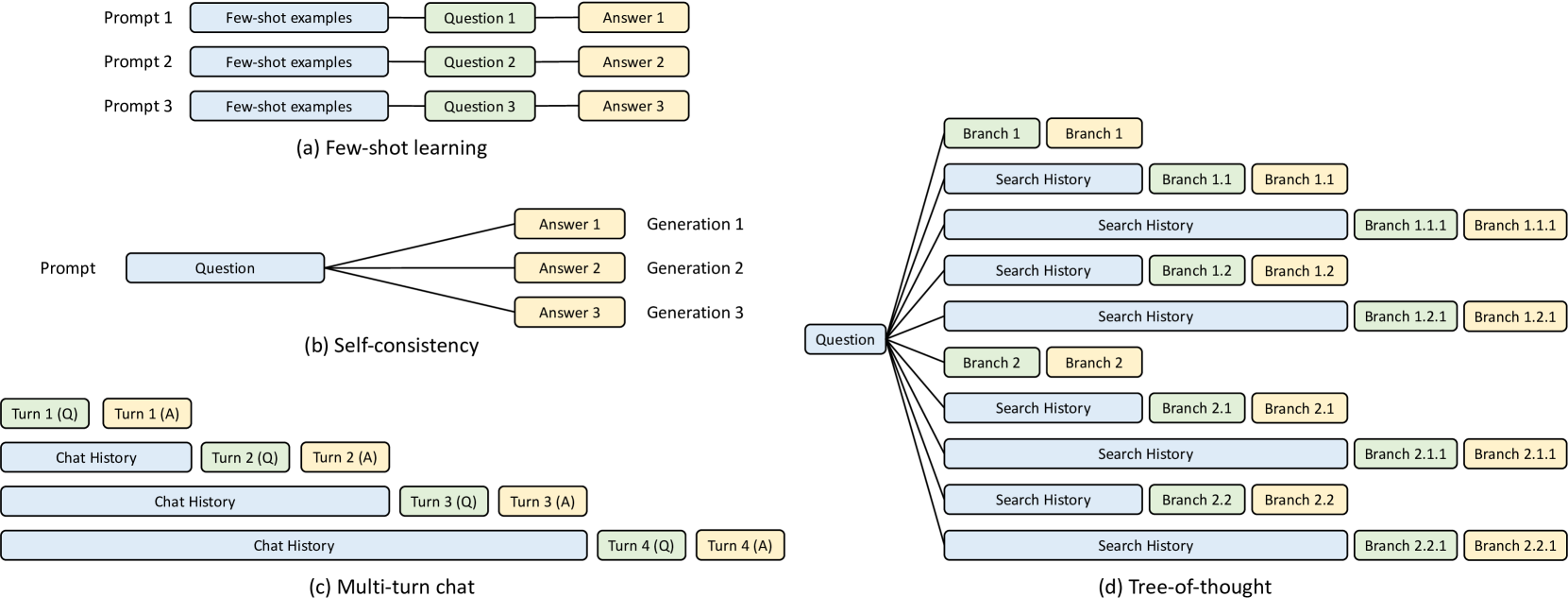

This image illustrates four approaches to structuring prompts and organizing Large Language Model (LLM) outputs. The diagram is divided into four quadrants, labeled (a) through (d).

## Color Coding Legend

Across all diagrams, a consistent color scheme is used to denote functional components:

* **Light Blue:** Context, Examples, or History (Input data).

* **Light Green:** The specific Question or Branching logic.

* **Light Yellow:** The generated Answer or Result.

---

## (a) Few-shot learning

This section shows a linear, repeated structure: each prompt is independent and places the same few-shot examples before its own question.

* **Structure:** Three parallel horizontal tracks labeled **Prompt 1**, **Prompt 2**, and **Prompt 3**.

* **Flow:** Each track follows a sequential flow: `[Few-shot examples]` $\rightarrow$ `[Question X]` $\rightarrow$ `[Answer X]`.

* **Components:**

    * **Prompt 1:** Few-shot examples $\rightarrow$ Question 1 $\rightarrow$ Answer 1

    * **Prompt 2:** Few-shot examples $\rightarrow$ Question 2 $\rightarrow$ Answer 2

    * **Prompt 3:** Few-shot examples $\rightarrow$ Question 3 $\rightarrow$ Answer 3
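The three tracks above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed format: the `Q:`/`A:` layout, the helper name `build_few_shot_prompt`, and the arithmetic examples are all assumptions made for the sketch.

```python
def build_few_shot_prompt(examples, question):
    """Place the shared worked examples before a new question."""
    shots = "\n\n".join(f"Q: {q}\nA: {a}" for q, a in examples)
    # The trailing "A:" leaves the answer slot open for the model.
    return f"{shots}\n\nQ: {question}\nA:"

# Hypothetical examples and questions, used only for illustration.
examples = [("2 + 2?", "4"), ("3 + 5?", "8")]
questions = ["1 + 6?", "9 + 4?", "7 + 7?"]

# One independent prompt per question; the example block is repeated in each.
prompts = [build_few_shot_prompt(examples, q) for q in questions]
```

Each entry in `prompts` corresponds to one track in the diagram: the same example block, followed by a different question.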

---

## (b) Self-consistency

This section illustrates a one-to-many relationship: a single prompt produces multiple independent outputs, which are then compared to find a consensus.

* **Structure:** A single input branching into three parallel outputs.

* **Flow:** A central **Prompt** containing a `[Question]` branches out to three separate generations.

* **Components:**

    * **Generation 1:** Answer 1

    * **Generation 2:** Answer 2

    * **Generation 3:** Answer 3
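One way to sketch this pattern is shown below. The diagram does not say how the consensus is reached, so majority voting is an assumption here, as are the function name `self_consistent_answer` and the stand-in `fake_generate`, which replaces a real model call with canned answers.

```python
from collections import Counter

def self_consistent_answer(prompt, generate, n=3):
    """Send the same prompt n times and keep the most common answer."""
    answers = [generate(prompt) for _ in range(n)]
    # Assumption: consensus is a simple majority vote over the answers.
    winner, votes = Counter(answers).most_common(1)[0]
    return winner, votes, answers

# Hypothetical stand-in for a sampled model call: returns canned answers.
_canned = iter(["42", "41", "42"])

def fake_generate(prompt):
    return next(_canned)

winner, votes, answers = self_consistent_answer("Question", fake_generate)
# winner is "42", chosen by 2 of the 3 generations
```

In practice `generate` would need to sample with some randomness; with fully deterministic decoding all generations would be identical and the vote would add nothing.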

---

## (c) Multi-turn chat

This section depicts the cumulative nature of conversational history over four successive turns.

* **Structure:** A staggered, descending stack representing the growth of context over time.

* **Flow:**

    * **Turn 1:** `[Turn 1 (Q)]` $\rightarrow$ `[Turn 1 (A)]`.

    * **Turn 2:** `[Chat History]` (containing Turn 1) + `[Turn 2 (Q)]` $\rightarrow$ `[Turn 2 (A)]`.

    * **Turn 3:** `[Chat History]` (containing Turns 1-2) + `[Turn 3 (Q)]` $\rightarrow$ `[Turn 3 (A)]`.

    * **Turn 4:** `[Chat History]` (containing Turns 1-3) + `[Turn 4 (Q)]` $\rightarrow$ `[Turn 4 (A)]`.

* **Trend:** The `Chat History` block (Blue) grows significantly longer with each subsequent turn.
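The growth of the history block can be made concrete with a short sketch. The `(role, text)` message tuples, the function name `run_chat`, and the echo-style `fake_answer` are assumptions chosen for illustration; no particular chat API is implied.

```python
def run_chat(questions, answer_fn):
    """Return the prompt sent at each turn; each prompt includes all prior turns."""
    history = []
    prompts = []
    for question in questions:
        prompt = history + [("user", question)]
        prompts.append(prompt)
        answer = answer_fn(prompt)
        # The answered turn becomes part of the history for the next turn.
        history = prompt + [("assistant", answer)]
    return prompts

# Hypothetical stand-in for a model call.
def fake_answer(prompt):
    return f"answer to: {prompt[-1][1]}"

chat_prompts = run_chat(["Q1", "Q2", "Q3", "Q4"], fake_answer)
prompt_sizes = [len(p) for p in chat_prompts]  # [1, 3, 5, 7]
```

Each turn adds one question and one answer to the history, so the prompt at turn `k` holds `2k - 1` messages.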

---

## (d) Tree-of-thought

This section shows a complex, hierarchical branching structure used for exploring multiple reasoning paths.

* **Structure:** A root node branching into primary, secondary, and tertiary levels.

* **Flow:** Starts with a single `[Question]` node on the left, which branches into multiple paths.

* **Components and Hierarchy:**

    * **Level 1 (Primary Branches):**

        * **Branch 1:** The question leads to a `Branch 1` answer.

        * **Branch 2:** The question leads to a `Branch 2` answer.

    * **Level 2 (Secondary Branches):**

        * Paths under Branch 1 carry a `[Search History]` block and lead to `Branch 1.1` and `Branch 1.2`.

        * Paths under Branch 2 carry a `[Search History]` block and lead to `Branch 2.1` and `Branch 2.2`.

    * **Level 3 (Tertiary Branches):**

        * Extended `[Search History]` blocks lead to deeper sub-branches: `Branch 1.1.1`, `Branch 1.2.1`, `Branch 2.1.1`, and `Branch 2.2.1`.

* **Key Observation:** Each deeper level of the tree incorporates a longer `Search History` (Blue) block to maintain the context of the reasoning path.
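The relationship between depth and history length can be sketched as a tree walk. The nested-dictionary layout and the function name `walk` are assumptions for illustration, and the sketch assumes that a node's search history is the list of its ancestors. The branch labels mirror those in the diagram.

```python
# Branch labels from the diagram, arranged as an assumed parent/child tree.
tree = {
    "1": {"1.1": {"1.1.1": {}}, "1.2": {"1.2.1": {}}},
    "2": {"2.1": {"2.1.1": {}}, "2.2": {"2.2.1": {}}},
}

def walk(subtree, history=()):
    """Yield (branch, search_history) pairs in depth-first order."""
    for label, children in subtree.items():
        yield label, history
        # Descendants see this branch appended to their search history.
        yield from walk(children, history + (label,))

histories = dict(walk(tree))
# histories["1"] is (), histories["1.1.1"] is ("1", "1.1")
```

Under this assumption a node at level `k` carries `k - 1` ancestor entries, which matches the longer blue blocks at deeper levels.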