# Technical Data Extraction: Attention Forward Speed Benchmark

## 1. Document Header

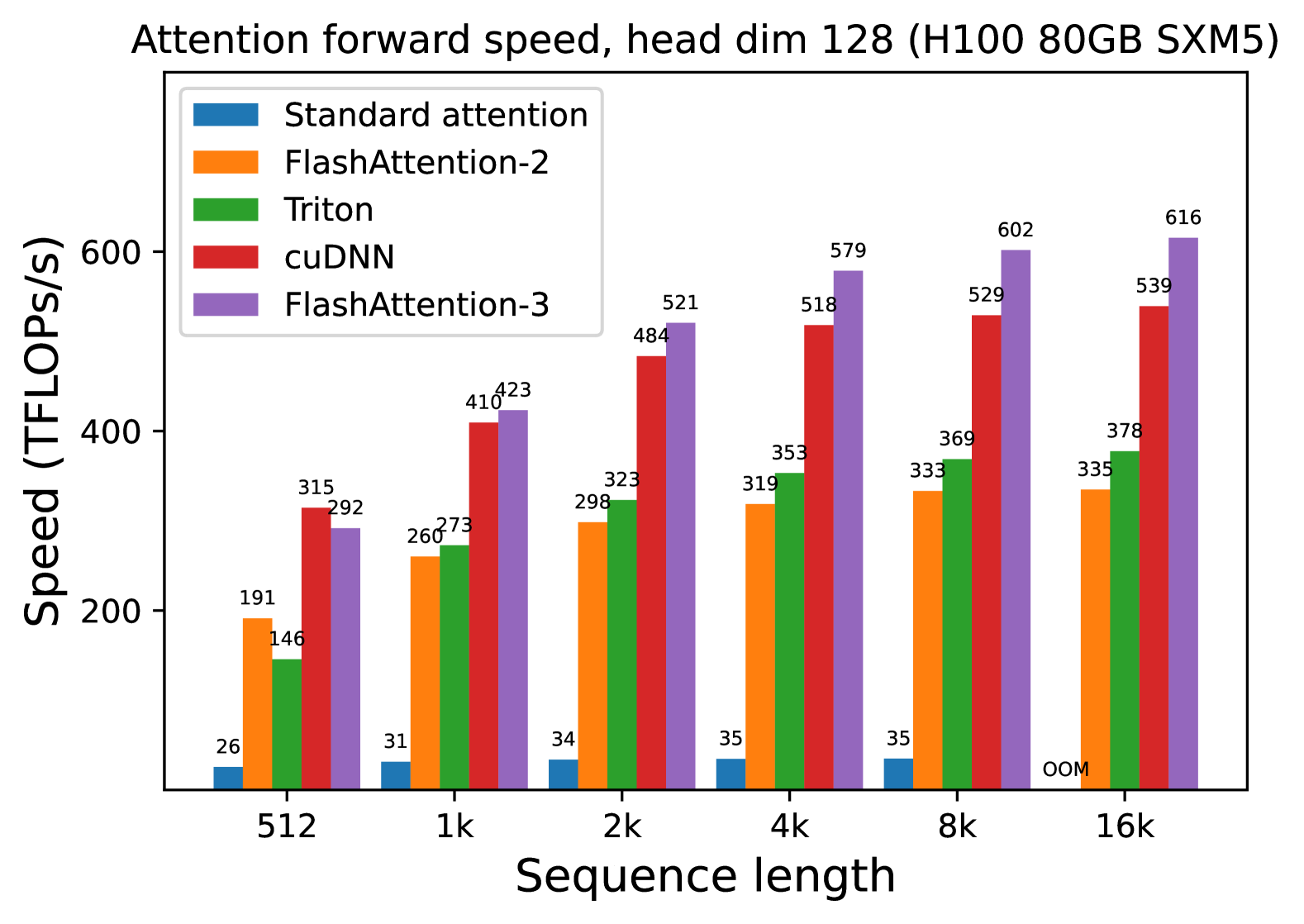

* **Title:** Attention forward speed, head dim 128 (H100 80GB SXM5)

* **Hardware Context:** NVIDIA H100 80GB SXM5 GPU.

* **Parameter Context:** Head dimension is fixed at 128.

## 2. Chart Metadata

* **Type:** Grouped Bar Chart.

* **Y-Axis Label:** Speed (TFLOPS/s)

* **Y-Axis Scale:** 0 to 600+ (increments of 200 marked).

* **X-Axis Label:** Sequence length

* **X-Axis Categories:** 512, 1k, 2k, 4k, 8k, 16k.

* **Legend Location:** Top-left [x≈0.15, y≈0.85].
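
The chart does not state how the y-axis values were derived. A common convention in attention benchmarks counts `4 * seqlen^2 * head_dim` FLOPs per head for a non-causal forward pass (two matrix multiplications at 2 FLOPs per multiply-add) and divides by the measured time. The sketch below assumes that convention; the batch size and head count are hypothetical, since the chart fixes only the head dimension.

```python
def attention_forward_flops(batch, heads, seqlen, head_dim, causal=False):
    """FLOPs for one attention forward pass under the assumed convention.

    QK^T and PV each cost 2 * seqlen^2 * head_dim FLOPs per head,
    so the total is 4 * seqlen^2 * head_dim per head.
    A causal mask skips roughly half of the work.
    """
    flops = 4 * batch * heads * seqlen**2 * head_dim
    return flops // 2 if causal else flops


def tflops_per_second(flops, seconds):
    """Convert a FLOP count and a measured wall time into TFLOPS/s."""
    return flops / seconds / 1e12


# Hypothetical configuration: batch=1 and heads=16 are NOT given in the chart.
flops = attention_forward_flops(batch=1, heads=16, seqlen=16384, head_dim=128)
print(flops)
```

Dividing this count by a measured kernel time gives a figure in the same unit as the y-axis, which is only comparable to the chart if the benchmark used the same counting rule.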

## 3. Legend and Series Identification

The chart compares five different implementations of the attention mechanism:

1. **Standard attention** (Blue): Represents the baseline performance.

2. **FlashAttention-2** (Orange): An optimized attention implementation.

3. **Triton** (Green): Implementation using the Triton language/compiler.

4. **cuDNN** (Red): NVIDIA's Deep Neural Network library implementation.

5. **FlashAttention-3** (Purple): The third iteration of the FlashAttention algorithm.

---

## 4. Data Table Reconstruction

The following table transcribes the numerical values (TFLOPS/s) displayed above each bar in the chart.

| Sequence Length | Standard attention (Blue) | FlashAttention-2 (Orange) | Triton (Green) | cuDNN (Red) | FlashAttention-3 (Purple) |
| :--- | :---: | :---: | :---: | :---: | :---: |
| **512** | 26 | 191 | 146 | 315 | 292 |
| **1k** | 31 | 260 | 273 | 410 | 423 |
| **2k** | 34 | 298 | 323 | 484 | 521 |
| **4k** | 35 | 319 | 353 | 518 | 579 |
| **8k** | 35 | 333 | 369 | 529 | 602 |
| **16k** | OOM* | 335 | 378 | 539 | 616 |

*\*OOM: Out of Memory*
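
For readers who want to work with these numbers directly, the table can be encoded as a small Python structure. This is a minimal sketch: the names `SEQ_LENGTHS`, `SPEED`, `fastest`, and `speedup` are illustrative and not part of the source, and the OOM entry is represented as `None`.

```python
SEQ_LENGTHS = ["512", "1k", "2k", "4k", "8k", "16k"]

# TFLOPS/s per implementation, in SEQ_LENGTHS order; None marks the OOM entry.
SPEED = {
    "Standard attention": [26, 31, 34, 35, 35, None],
    "FlashAttention-2": [191, 260, 298, 319, 333, 335],
    "Triton": [146, 273, 323, 353, 369, 378],
    "cuDNN": [315, 410, 484, 518, 529, 539],
    "FlashAttention-3": [292, 423, 521, 579, 602, 616],
}


def fastest(seq_label):
    """Return the implementation with the highest throughput at seq_label."""
    i = SEQ_LENGTHS.index(seq_label)
    candidates = {name: vals[i] for name, vals in SPEED.items() if vals[i] is not None}
    return max(candidates, key=candidates.get)


def speedup(numerator, denominator, seq_label):
    """Throughput ratio of two implementations, or None if either ran out of memory."""
    i = SEQ_LENGTHS.index(seq_label)
    a, b = SPEED[numerator][i], SPEED[denominator][i]
    if a is None or b is None:
        return None
    return a / b


for label in SEQ_LENGTHS:
    print(label, fastest(label))
```

For example, `speedup("FlashAttention-3", "FlashAttention-2", "16k")` divides 616 by 335, and any comparison against Standard attention at 16k returns `None` rather than a misleading number.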

---

## 5. Trend Analysis and Component Isolation

### Standard attention (Blue)

* **Trend:** Very low and nearly flat throughput.

* **Observation:** Throughput rises only from 26 TFLOPS/s at 512 to 35 TFLOPS/s at 4k and 8k, then the run fails at 16k with an out-of-memory (OOM) error.

### FlashAttention-2 (Orange)

* **Trend:** Rapid initial growth that tapers off to a plateau.

* **Observation:** Jumps from 191 TFLOPS/s at 512 to 260 at 1k (about 36%), then flattens, reaching 333 at 8k and 335 at 16k.

### Triton (Green)

* **Trend:** Increases at every sequence length, with diminishing gains.

* **Observation:** Starts lower than FlashAttention-2 at 512 (146 vs 191), overtakes it at 1k (273 vs 260), and stays ahead at every longer length, reaching 378 TFLOPS/s at 16k (vs 335).

### cuDNN (Red)

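* **Trend:** High throughput that rises quickly up to 4k, then flattens (518, 529, and 539 TFLOPS/s at 4k, 8k, and 16k).

* **Observation:** Outperforms Standard attention, FlashAttention-2, and Triton at every sequence length. It is the fastest method at the shortest sequence length (512) with 315 TFLOPS/s, ahead of FlashAttention-3 (292).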

### FlashAttention-3 (Purple)

* **Trend:** Largest absolute gain across the range (+324 TFLOPS/s from 512 to 16k) and highest peak throughput.

* **Observation:** Slightly slower than cuDNN at 512 (292 vs 315), it becomes the performance leader at 1k (423 vs 410) and holds the lead at every longer length. It peaks at 616 TFLOPS/s at 16k, about 1.8× FlashAttention-2 (335).
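
The plateau descriptions in this section can be checked by computing the percent gain each time the sequence length doubles. The helper below is an illustrative sketch (the name `doubling_gains` is not from the source), applied to the cuDNN and FlashAttention-3 rows of the data table.

```python
def doubling_gains(values):
    """Percent change between consecutive entries, rounded to one decimal."""
    return [round(100 * (b - a) / a, 1) for a, b in zip(values, values[1:])]


# TFLOPS/s at 512, 1k, 2k, 4k, 8k, 16k, copied from the data table.
cudnn = [315, 410, 484, 518, 529, 539]
fa3 = [292, 423, 521, 579, 602, 616]

print("cuDNN:", doubling_gains(cudnn))
print("FlashAttention-3:", doubling_gains(fa3))
```

Both series gain less with each doubling. cuDNN adds roughly 2% per doubling beyond 4k, while FlashAttention-3 still adds about 4% from 4k to 8k.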

## 6. Summary of Findings

The data show that **FlashAttention-3** delivers the highest throughput at every sequence length from 1k upward on H100 hardware with a head dimension of 128, peaking at 616 TFLOPS/s at 16k. **Standard attention** is non-viable for large sequences due to its $O(n^2)$ memory requirements, which result in an OOM failure at 16k. **cuDNN** is the fastest method at 512 (315 vs 292 TFLOPS/s) and remains second-fastest at every longer length, about 13% behind FlashAttention-3 at 16k (539 vs 616).
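
To make the $O(n^2)$ memory growth concrete, the sketch below sizes the attention score matrix that a standard implementation materializes. The batch size, head count, and 2-byte element size are hypothetical defaults, since the chart does not report them. The result covers a single buffer only and is not a prediction of when a real run hits OOM, because real implementations hold several such intermediates.

```python
def score_matrix_bytes(seqlen, batch=1, heads=16, bytes_per_element=2):
    """Bytes for one (batch, heads, seqlen, seqlen) score matrix.

    batch, heads, and bytes_per_element are assumed values for illustration.
    """
    return batch * heads * seqlen * seqlen * bytes_per_element


for n in (512, 1024, 2048, 4096, 8192, 16384):
    gib = score_matrix_bytes(n) / 2**30
    print(f"{n:>6}: {gib:.3f} GiB")
```

Each doubling of the sequence length quadruples the buffer. Scaling the hypothetical batch size or head count multiplies the total linearly.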