## Diagram: LLM-Based Entity Selection and Question Synthesis Pipeline

### Overview

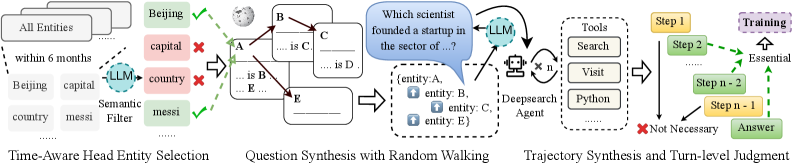

The image is a technical flowchart illustrating a multi-stage process in which Large Language Models (LLMs) select relevant entities from a knowledge base and synthesize complex questions through random walks over a knowledge graph. The process culminates in a reasoning trajectory that arrives at an answer, followed by a judgment step that identifies which reasoning steps are essential.

### Components

The diagram is organized into three main sequential blocks, flowing from left to right, connected by arrows.

**1. Left Block: Time-Aware Head Entity Selection**

* **Input:** A stack of documents labeled "All Entities".

* **Sub-components:**
  * A list of example entities: "within 6 months", "Beijing", "capital", "country", "mesi".
  * An LLM icon labeled "Semantic Filter".
  * A filtered output list with validation marks:
    * "Beijing" (green checkmark ✓)
    * "capital" (red cross ✗)
    * "country" (red cross ✗)
    * "mesi" (green checkmark ✓)

* **Label:** "Time-Aware Head Entity Selection" is written below this block.
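A minimal Python sketch of this selection stage, under loudly stated assumptions: each candidate carries a hypothetical `last_seen` date, the "within 6 months" entry is read as a recency window, and the LLM "Semantic Filter" is replaced by a stub that rejects generic nouns. The names `Entity`, `stub_semantic_filter`, and `select_head_entities`, and all dates, are invented for illustration and do not come from the diagram.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Callable


@dataclass
class Entity:
    name: str
    last_seen: date  # hypothetical field: when the entity last appeared in the corpus


def stub_semantic_filter(name: str) -> bool:
    """Stand-in for the LLM "Semantic Filter": reject generic common nouns.

    A real pipeline would prompt an LLM to decide whether `name` is a
    specific, searchable entity; this hard-coded set is illustration only.
    """
    generic_terms = {"capital", "country", "city", "person"}
    return name.lower() not in generic_terms


def select_head_entities(
    candidates: list[Entity],
    today: date,
    window: timedelta = timedelta(days=183),  # roughly "within 6 months" (assumed)
    is_specific: Callable[[str], bool] = stub_semantic_filter,
) -> list[Entity]:
    """Keep entities that are recent (time-aware) and specific (semantic)."""
    return [
        e for e in candidates
        if today - e.last_seen <= window and is_specific(e.name)
    ]


# Made-up dates; only the entity names come from the diagram.
today = date(2025, 6, 1)
pool = [
    Entity("Beijing", date(2025, 5, 20)),
    Entity("capital", date(2025, 5, 20)),
    Entity("country", date(2025, 4, 1)),
    Entity("mesi", date(2025, 3, 15)),
]
print([e.name for e in select_head_entities(pool, today)])  # ['Beijing', 'mesi']
```

Passing a different callable as `is_specific` is where an actual LLM call would plug in; keeping the time check and the semantic check separate makes each criterion easy to test on its own.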

**2. Middle Block: Question Synthesis with Random Walking**

* **Input:** The selected entities from the previous block (e.g., "Beijing", "mesi").

* **Core Component:** A knowledge graph fragment with nodes (A, B, C, D, E) and labeled edges (e.g., "is", "is B", "is D").

* **Process:** An LLM icon is shown interacting with this graph. A speech bubble from the LLM contains the template question: "Which scientist founded a startup in the sector of ...?".

* **Output:** A structured question template box listing: "(entity: A, entity: B, entity: C, entity: D, entity: E)".

* **Label:** "Question Synthesis with Random Walking" is written below this block.
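The random-walk step can be sketched as follows, assuming the knowledge graph is a plain adjacency list of `(relation, neighbour)` edges. The helper names `random_walk` and `question_prompt`, the toy relations, and the prompt wording are hypothetical; the diagram only shows that an LLM turns the walked path into a question with an entity list like "(entity: A, ..., entity: E)".

```python
import random

# Hypothetical knowledge graph: node -> list of (relation, neighbour) edges.
Graph = dict[str, list[tuple[str, str]]]
Triple = tuple[str, str, str]


def random_walk(graph: Graph, start: str, max_hops: int, rng: random.Random) -> list[Triple]:
    """Sample up to `max_hops` (head, relation, tail) triples starting at `start`.

    Nodes already on the path are never revisited, so the walk ends early
    when the current node has no unvisited neighbours.
    """
    path: list[Triple] = []
    visited = {start}
    current = start
    for _ in range(max_hops):
        options = [(rel, tail) for rel, tail in graph.get(current, []) if tail not in visited]
        if not options:
            break
        rel, tail = rng.choice(options)
        path.append((current, rel, tail))
        visited.add(tail)
        current = tail
    return path


def question_prompt(path: list[Triple]) -> str:
    """Build a prompt asking an LLM to fold the walked facts into one question."""
    if not path:
        return ""
    facts = "; ".join(f"{h} --{r}--> {t}" for h, r, t in path)
    entities = [path[0][0]] + [t for _, _, t in path]
    return (
        "Write one question whose answer requires every fact below, "
        "without naming the intermediate entities.\n"
        f"Facts: {facts}\n"
        f"(entities: {', '.join(entities)})"
    )


# Toy graph reusing the diagram's node names; the relations are invented.
kg: Graph = {
    "A": [("founded", "B"), ("studied at", "D")],
    "B": [("operates in", "C")],
    "C": [("is regulated by", "E")],
    "D": [("is located in", "E")],
}
walk = random_walk(kg, "A", max_hops=3, rng=random.Random(7))
print(question_prompt(walk))
```

Passing an explicit `random.Random` instance keeps walks reproducible for a given seed, and forbidding revisits prevents cycles that would produce trivially redundant questions.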

**3. Right Block: Trajectory Synthesis and Turn-level Judgment**

* **Input:** The synthesized question.

* **Core Components:**
  * A "Deepsearch Agent" icon.
  * A "Tools" box listing: "Search", "Visit", "Python".
  * A sequence of steps: "Step 1", "Step 2", ..., "Step n-2", "Step n-1".
  * A branching path:
    * A green dashed arrow labeled "Essential" connects "Step 2" to "Step n-1".
    * A red "X" with the label "Not Necessary" marks the linear path from "Step 2" to "Step n-2".
  * The final output box: "Answer".

* **Label:** "Trajectory Synthesis and Turn-level Judgment" is written below this block.
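The agent loop in this block can be sketched as a policy that alternates between choosing a tool and reading its observation until it emits an answer. Everything here is an assumption for illustration: the tool functions are stubs, `scripted_policy` stands in for the LLM that would normally pick each action, and `run_agent`, `Step`, and `TOOLS` are hypothetical names, not the Deepsearch Agent's actual interface.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Step:
    tool: str
    argument: str
    observation: str


# Hypothetical stubs for the three tools in the diagram's "Tools" box.
def search(query: str) -> str:
    return f"search results for {query!r}"


def visit(url: str) -> str:
    return f"page text of {url}"


def run_python(code: str) -> str:
    return f"output of {code!r}"  # a real tool would execute the code in a sandbox


TOOLS: dict[str, Callable[[str], str]] = {"Search": search, "Visit": visit, "Python": run_python}

# A policy maps (question, trajectory so far) to ("Answer", text) or (tool name, argument).
Policy = Callable[[str, list[Step]], tuple[str, str]]


def run_agent(question: str, policy: Policy, max_steps: int = 10) -> tuple[list[Step], Optional[str]]:
    """Alternate policy decisions and tool calls until the policy answers or steps run out."""
    trajectory: list[Step] = []
    for _ in range(max_steps):
        action, argument = policy(question, trajectory)
        if action == "Answer":
            return trajectory, argument
        observation = TOOLS[action](argument)
        trajectory.append(Step(action, argument, observation))
    return trajectory, None  # no answer within the step budget


def scripted_policy(question: str, trajectory: list[Step]) -> tuple[str, str]:
    """Fixed plan standing in for the LLM that would normally choose each action."""
    plan = [
        ("Search", "scientist who founded a startup"),
        ("Visit", "https://example.com/profile"),
        ("Python", "2025 - 2019"),
    ]
    if len(trajectory) < len(plan):
        return plan[len(trajectory)]
    return ("Answer", "placeholder answer")


steps, answer = run_agent("Which scientist founded a startup in the sector of ...?", scripted_policy)
for i, s in enumerate(steps, start=1):
    print(f"Step {i}: {s.tool}({s.argument!r}) -> {s.observation}")
print("Answer:", answer)
```

The recorded `trajectory` list is what a later judgment stage would inspect; the `max_steps` budget guards against a policy that never decides to answer.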

### Detailed Analysis

The diagram details a specific pipeline:

1. **Entity Filtering:** An LLM acts as a semantic filter on a raw list of entities, selecting contextually relevant ones (e.g., "Beijing", "mesi") while discarding generic terms (e.g., "capital", "country"). The "Time-Aware" label suggests temporal context is a filtering criterion.

2. **Question Generation:** Using the selected entities, the system performs a "random walk" on a knowledge graph to discover relationships. An LLM then uses these discovered relationships to construct a complex, multi-entity question (e.g., about a scientist, a startup, and a sector).

3. **Answer Synthesis & Evaluation:** A "Deepsearch Agent" uses tools (Search, Visit, Python) to generate a multi-step reasoning trajectory that answers the question. A "Turn-level Judgment" process then evaluates this trajectory, marking the shortcut from "Step 2" to "Step n-1" as "Essential" to the final answer and the intermediate steps along the path from "Step 2" to "Step n-2" as "Not Necessary".
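The diagram does not say how the turn-level judgment is made; a per-turn LLM judge is one plausible reading. As a clearly swapped-in stand-in, the sketch below uses backward dependency tracing: given a hypothetical `depends_on` map recording which earlier steps each step used, a step is "Essential" if the final step depends on it directly or transitively. The functions `essential_steps` and `judge_turns` are illustrative names only.

```python
def essential_steps(depends_on: dict[int, set[int]], final_step: int) -> set[int]:
    """Return every step the final step depends on, directly or transitively."""
    keep: set[int] = set()
    stack = [final_step]
    while stack:
        step = stack.pop()
        if step in keep:
            continue
        keep.add(step)
        stack.extend(depends_on.get(step, set()))
    return keep


def judge_turns(num_steps: int, depends_on: dict[int, set[int]], final_step: int) -> dict[int, str]:
    """Label each step 1..num_steps as "Essential" or "Not Necessary"."""
    keep = essential_steps(depends_on, final_step)
    return {s: "Essential" if s in keep else "Not Necessary" for s in range(1, num_steps + 1)}


# Hypothetical 6-step trajectory (n = 7): steps 3-5 continue from step 2,
# but step 6 (= n-1) only uses step 2's result, mirroring the diagram's shortcut.
deps = {2: {1}, 3: {2}, 4: {3}, 5: {4}, 6: {2}}
labels = judge_turns(6, deps, final_step=6)
print(labels)
# {1: 'Essential', 2: 'Essential', 3: 'Not Necessary', 4: 'Not Necessary', 5: 'Not Necessary', 6: 'Essential'}
```

Dropping the "Not Necessary" turns leaves a shorter trajectory (here steps 1, 2, and 6) that still supports the same answer, which is the kind of distilled chain the judgment stage appears to target.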

### Key Observations

* **Hybrid System:** The process combines symbolic AI (knowledge graphs, random walks) with neural AI (LLMs for filtering, synthesis, and judgment).

* **Focus on Efficiency:** The "Turn-level Judgment" explicitly aims to distill a long reasoning chain into its essential components, likely for training or evaluation purposes.

* **Tool-Augmented Generation:** The agent is not just a language model but is equipped with external tools ("Search", "Visit", "Python") to gather information.

* **Visual Coding:** Green checkmarks/dashed lines indicate selection or essential paths. Red crosses indicate rejection or non-essential paths.

### Interpretation

This diagram outlines a framework for **automated question generation and complex reasoning**. It shows how to move from a broad knowledge base to a specific, multi-faceted question and then to an answer whose reasoning trajectory is judged turn by turn.

* **Purpose:** The system appears designed for creating high-quality training data for reasoning models or for building an agent that can answer complex, multi-hop questions by dynamically synthesizing queries and evaluating its own reasoning process.

* **Relationships:** The stages run sequentially, each consuming the previous stage's output: the selected entities seed the graph walk, and the synthesized question drives the agent's trajectory, so the quality of each stage plausibly constrains the next. Within the diagram the flow is feed-forward; the final judgment step does not loop back, but the core logical steps it identifies could be fed into training downstream to produce more efficient models.

* **Notable Pattern:** The most significant insight is the **"Essential" vs. "Not Necessary"** distinction. It implies the system does not stop at producing an answer: it evaluates the agent's reasoning trajectory turn by turn to isolate the steps the answer actually depends on. Pruning trajectories this way can improve the interpretability and efficiency of AI reasoning.

* **Underlying Principle:** The pipeline embodies a "generate-then-filter" or "expand-then-contract" pattern common in advanced AI: first, gather or generate a broad set of candidates (entities from the knowledge base, relation paths from the graph, reasoning steps from the agent), then narrow them with selection steps (semantic filtering, path sampling by random walk, turn-level judgment) to keep the most coherent and essential path.