## Bar Chart and Text Snippets: DreamPRM Performance and Question Examples

### Overview

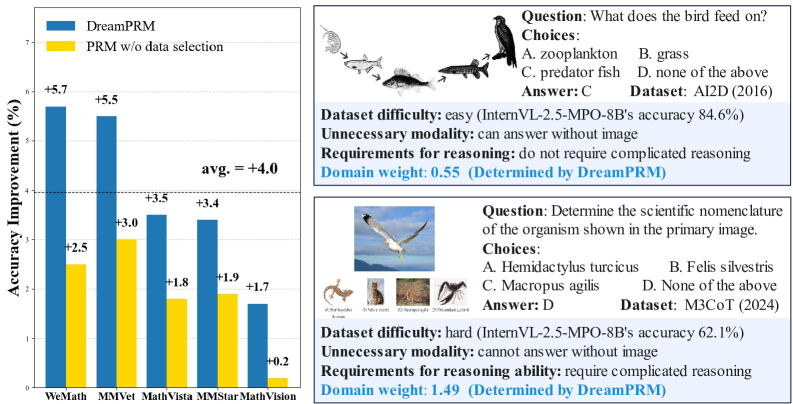

The image presents a bar chart comparing the accuracy improvement of DreamPRM against PRM without data selection across five datasets. Additionally, it includes two example questions with associated metadata, illustrating different levels of difficulty and reasoning requirements.

### Components/Axes

**Left Side: Bar Chart**

* **Y-axis:** "Accuracy Improvement (%)" with a scale from 0 to 6, with implied increments of 1.

* **X-axis:** Datasets: WeMath, MMVet, MathVista, MMStar, MathVision.

* **Legend:** Located at the top-left:

    * Blue: DreamPRM

    * Yellow: PRM w/o data selection

* **Horizontal Line:** A dashed line is present at the y-axis value of 4, labeled "avg. = +4.0".

**Right Side: Question Examples**

* Two question examples are displayed, each including:

    * Question text

    * Multiple-choice options (A, B, C, D)

    * Correct answer

    * Dataset name and year

    * Dataset difficulty (easy/hard)

    * Accuracy on InternVL-2.5-MPO-8B

    * Unnecessary modality (can/cannot answer without image)

    * Requirements for reasoning (do/do not require complicated reasoning)

    * Domain weight (Determined by DreamPRM)

### Detailed Analysis

**Bar Chart Data:**

* **WeMath:**

    * DreamPRM (Blue): +5.7%

    * PRM w/o data selection (Yellow): +2.5%

* **MMVet:**

    * DreamPRM (Blue): +5.5%

    * PRM w/o data selection (Yellow): +3.0%

* **MathVista:**

    * DreamPRM (Blue): +3.5%

    * PRM w/o data selection (Yellow): +1.8%

* **MMStar:**

    * DreamPRM (Blue): +3.4%

    * PRM w/o data selection (Yellow): +1.9%

* **MathVision:**

    * DreamPRM (Blue): +1.7%

    * PRM w/o data selection (Yellow): +0.2%
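The dashed "avg. = +4.0" line can be cross-checked against the per-dataset values. The short sketch below recomputes the averages and the per-dataset margin between the two methods; the numbers are the ones read off the chart above, and the variable and function names are illustrative only.

```python
# Accuracy improvements read off the bar chart, as
# (DreamPRM, PRM w/o data selection) pairs in percentage points.
improvements = {
    "WeMath": (5.7, 2.5),
    "MMVet": (5.5, 3.0),
    "MathVista": (3.5, 1.8),
    "MMStar": (3.4, 1.9),
    "MathVision": (1.7, 0.2),
}


def mean(values):
    """Arithmetic mean of an iterable of numbers."""
    values = list(values)
    return sum(values) / len(values)


dreamprm_avg = mean(d for d, _ in improvements.values())
baseline_avg = mean(b for _, b in improvements.values())

# Margin of DreamPRM over the baseline on each dataset.
margins = {name: round(d - b, 1) for name, (d, b) in improvements.items()}

print(f"DreamPRM average improvement: {dreamprm_avg:.2f}")
print(f"Baseline average improvement: {baseline_avg:.2f}")
for name, margin in margins.items():
    print(f"{name}: DreamPRM ahead by {margin:.1f}")
```

The DreamPRM mean comes out at about 3.96, which rounds to the +4.0 shown on the chart; the baseline mean is about 1.88.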

**Question Examples:**

* **Top Question:**

    * Question: "What does the bird feed on?"

    * Choices: A. zooplankton, B. grass, C. predator fish, D. none of the above

    * Answer: C

    * Dataset: AI2D (2016)

    * Dataset difficulty: easy (InternVL-2.5-MPO-8B's accuracy 84.6%)

    * Unnecessary modality: can answer without image

    * Requirements for reasoning: do not require complicated reasoning

    * Domain weight: 0.55 (Determined by DreamPRM)

* **Bottom Question:**

    * Question: "Determine the scientific nomenclature of the organism shown in the primary image."

    * Choices: A. Hemidactylus turcicus, B. Felis silvestris, C. Macropus agilis, D. None of the above

    * Answer: D

    * Dataset: M3CoT (2024)

    * Dataset difficulty: hard (InternVL-2.5-MPO-8B's accuracy 62.1%)

    * Unnecessary modality: cannot answer without image

    * Requirements for reasoning ability: require complicated reasoning

    * Domain weight: 1.49 (Determined by DreamPRM)

### Key Observations

* DreamPRM outperforms PRM without data selection on all five datasets, by margins ranging from 1.5 points (MMStar, MathVision) to 3.2 points (WeMath).

* The average accuracy improvement of DreamPRM is +4.0%.

* The WeMath and MMVet datasets show the highest accuracy improvements with DreamPRM.

* The question examples illustrate the range of difficulty and reasoning requirements in the datasets.

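* The domain weight is higher for the example from the harder dataset (1.49 for M3CoT versus 0.55 for AI2D), suggesting that DreamPRM gives more weight to data that needs the image and requires complicated reasoning.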

### Interpretation

The bar chart shows that DreamPRM yields a larger accuracy improvement than a PRM without data selection on each of the five datasets. The question examples give context for the domain weights: the AI2D example is easy, can be answered without the image, and needs no complicated reasoning, while the M3CoT example is hard, depends on the image, and requires complicated reasoning. The corresponding domain weights (0.55 versus 1.49) suggest that DreamPRM places greater emphasis on data that demands more complex reasoning.
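To give a feel for what the two domain weights imply, the sketch below shows one simple way a per-dataset weight can scale that dataset's contribution to a combined loss. This is an illustrative assumption, not DreamPRM's actual objective, which the figure does not specify; the function name `loss_shares` and the equal per-dataset losses are hypothetical.

```python
def loss_shares(domain_weights, domain_losses):
    """Fraction of a weighted-sum loss contributed by each domain.

    Assumes the combined loss is sum(weight * loss) over domains,
    which is an illustrative choice and not taken from the figure.
    """
    weighted = {
        name: domain_weights[name] * domain_losses[name]
        for name in domain_weights
    }
    total = sum(weighted.values())
    return {name: value / total for name, value in weighted.items()}


# Domain weights shown in the two question examples.
domain_weights = {"AI2D": 0.55, "M3CoT": 1.49}

# Hypothetical per-dataset losses, set equal to isolate the effect of the weights.
equal_losses = {"AI2D": 1.0, "M3CoT": 1.0}

shares = loss_shares(domain_weights, equal_losses)
for name, share in shares.items():
    print(f"{name}: {share:.1%} of the combined loss")
```

Under this assumption, with equal raw losses, the M3CoT data would account for about 73% of the combined loss and the AI2D data for about 27%.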