## Bar Chart: DreamPRM Accuracy Improvement

### Overview

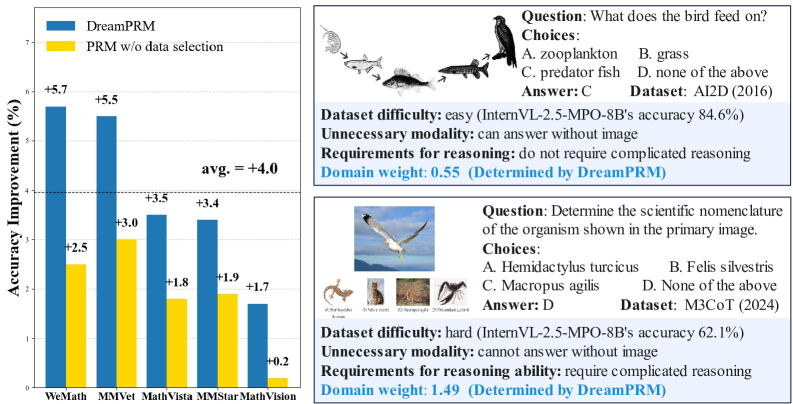

The image presents a bar chart comparing the accuracy improvement of "DreamPRM" versus "PRM w/o data selection" across four datasets: WeMath, MMVet, MathVista, and MMStarMathVision. Alongside the chart are two question-answer pairs with associated metadata about dataset difficulty, modality requirements, reasoning ability, and domain weight.

### Components/Axes

* **X-axis:** Datasets - WeMath, MMVet, MathVista, MMStarMathVision

* **Y-axis:** Accuracy Improvement (%) - Scale ranges from 0 to 7, with increments of 1.

* **Data Series:**

    * DreamPRM (blue bars)

    * PRM w/o data selection (yellow bars)

* **Legend:** Located at the top-left corner, clearly distinguishing between the two data series.

* **Average:** A horizontal line at approximately y=4.0, labeled "avg. = +4.0".

* **Question 1:** Top-right section, accompanied by an image of birds and fish.

* **Question 2:** Bottom-right section, accompanied by an image of a bird in flight and smaller images of reptiles.

### Detailed Analysis

The chart displays the accuracy improvement for each dataset.

* **WeMath:** DreamPRM shows an accuracy improvement of +5.7%, while PRM w/o data selection shows +2.5%.

* **MMVet:** DreamPRM shows an accuracy improvement of +5.5%, while PRM w/o data selection shows +3.0%.

* **MathVista:** DreamPRM shows an accuracy improvement of +3.5%, while PRM w/o data selection shows +1.8%.

* **MMStarMathVision:** DreamPRM shows an accuracy improvement of +3.4%, while PRM w/o data selection shows +0.2%.

DreamPRM outperforms PRM w/o data selection on all four datasets. The gap is largest for WeMath and MMStarMathVision (3.2 percentage points each), followed by MMVet (2.5 points), and smallest for MathVista (1.7 points).
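The per-dataset gaps can be checked with a few lines of Python. This is a minimal sketch: the numbers are the values listed above, and the variable names are illustrative rather than taken from the source.

```python
# Accuracy improvements (percentage points) as listed above:
# (DreamPRM, PRM w/o data selection)
improvements = {
    "WeMath": (5.7, 2.5),
    "MMVet": (5.5, 3.0),
    "MathVista": (3.5, 1.8),
    "MMStarMathVision": (3.4, 0.2),
}

# Gap between the two methods, rounded to one decimal to avoid float noise.
gaps = {
    name: round(dream - base, 1)
    for name, (dream, base) in improvements.items()
}

# Collect every dataset that attains the largest gap (there may be ties).
largest = max(gaps.values())
largest_datasets = [name for name, gap in gaps.items() if gap == largest]

for name, gap in gaps.items():
    print(f"{name}: {gap:+.1f}")
print("largest gap:", largest_datasets)
```

Collecting all datasets that reach the maximum, rather than taking a single `max`, keeps ties visible.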

**Question 1 & Metadata:**

* **Question:** "What does the bird feed on?"

* **Choices:**

    * A. zooplankton

    * B. grass

    * C. predator fish

    * D. none of the above

* **Answer:** C

* **Dataset:** AI2D (2016)

* **Dataset difficulty:** easy (InternVL-2.5-MPO-8B’s accuracy 84.6%)

* **Unnecessary modality:** can answer without image

* **Requirements for reasoning:** do not require complicated reasoning

* **Domain weight:** 0.55 (Determined by DreamPRM)

**Question 2 & Metadata:**

* **Question:** "Determine the scientific nomenclature of the organism shown in the primary image."

* **Choices:**

    * A. Hemidactylus turcicus

    * B. Felis silvestris

    * C. Macropus agilis

    * D. None of the above

* **Answer:** D

* **Dataset:** M3CoT (2024)

* **Dataset difficulty:** hard (InternVL-2.5-MPO-8B’s accuracy 62.1%)

* **Unnecessary modality:** cannot answer without image

* **Requirements for reasoning ability:** require complicated reasoning

* **Domain weight:** 1.49 (Determined by DreamPRM)

### Key Observations

* DreamPRM consistently demonstrates higher accuracy improvement than PRM w/o data selection.

* DreamPRM's accuracy improvement ranges from +3.4% (MMStarMathVision) to +5.7% (WeMath), suggesting that its effectiveness is dataset-dependent.

* Question 1 is considered "easy" and doesn't require the image, while Question 2 is "hard" and requires the image.

* The domain weight is lower for the easier question (0.55) and higher for the harder question (1.49).
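The figure does not state how the domain weights are used. Assuming, purely for illustration, that each weight scales its dataset's contribution to a training loss, the effect of 0.55 versus 1.49 can be sketched as follows. The function `weighted_loss` and the per-dataset loss values are hypothetical and not taken from DreamPRM.

```python
# Domain weights as reported in the figure.
domain_weights = {"AI2D": 0.55, "M3CoT": 1.49}

# Hypothetical per-dataset losses, set equal so only the weights differ.
example_losses = {"AI2D": 1.0, "M3CoT": 1.0}


def weighted_loss(losses, weights):
    """Sum per-dataset losses, each scaled by its domain weight (assumed scheme)."""
    return sum(weights[name] * loss for name, loss in losses.items())


total = weighted_loss(example_losses, domain_weights)

# Fraction of the total contributed by each dataset.
share = {
    name: domain_weights[name] * example_losses[name] / total
    for name in example_losses
}

for name, fraction in share.items():
    print(f"{name}: {fraction:.1%} of the weighted loss")
```

Under this assumption and with equal raw losses, the M3CoT example would count roughly 2.7 times as much as the AI2D example (1.49 / 0.55).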

### Interpretation

The data suggests that DreamPRM, with its data selection mechanism, yields larger accuracy gains than the PRM without data selection on all four datasets, with the margin ranging from 1.7 to 3.2 percentage points. The two question-answer pairs illustrate how the domain weight assigned by DreamPRM relates to dataset properties. AI2D, which is rated easy, can be answered without the image, and does not require complicated reasoning, receives a weight of 0.55. M3CoT, which is rated hard, cannot be answered without the image, and requires complicated reasoning, receives a weight of 1.49. A higher domain weight therefore appears to correspond to tasks that demand more complex reasoning and genuine image understanding. Because the figure shows only two examples, it illustrates this pattern rather than establishing it.