TECHNICAL ASSET FINGERPRINT

4f5dc83965a587292064f35f

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Diagram: Pair Supervised Fine-Tuning vs. Online Reinforcement Learning

### Overview

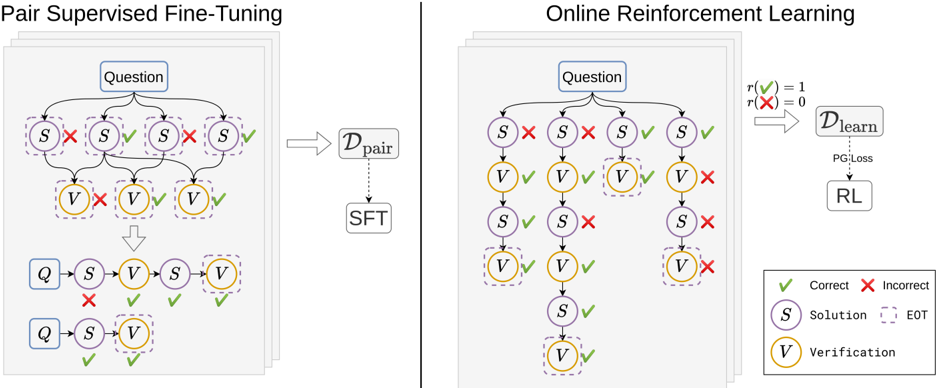

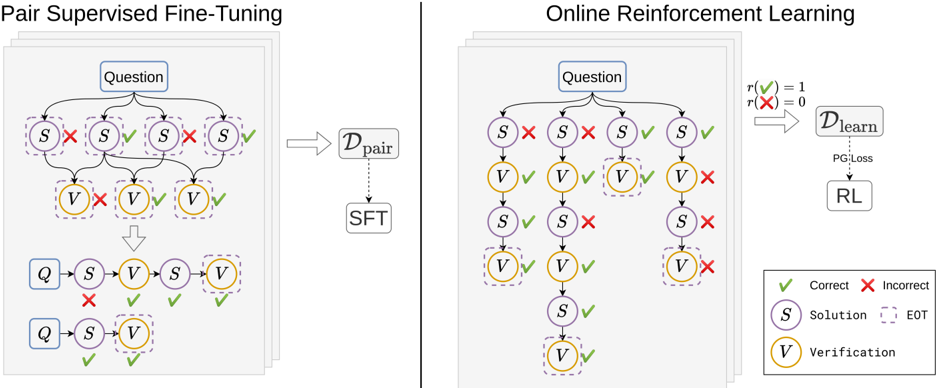

The image presents two diagrams illustrating different approaches to training a model: Pair Supervised Fine-Tuning (left) and Online Reinforcement Learning (right). Both diagrams depict a process involving questions, solutions, and verifications, but they differ in how the model learns from these elements.

### Components/Axes

**Left Diagram (Pair Supervised Fine-Tuning):**

* **Title:** Pair Supervised Fine-Tuning

* **Nodes:**

    * "Question" (top, blue rectangle)

    * "S" (Solution, purple circle, dashed border)

    * "V" (Verification, yellow circle, dashed border)

    * "Q" (blue rectangle)

* **Dataset:** D<sub>pair</sub> (right, gray rectangle)

* **Process:** SFT (below D<sub>pair</sub>, gray rectangle)

* **Arrows:** Indicate the flow of information.

* **Checkmarks (green):** Indicate correct/positive outcomes.

* **Crosses (red):** Indicate incorrect/negative outcomes.

**Right Diagram (Online Reinforcement Learning):**

* **Title:** Online Reinforcement Learning

* **Nodes:**

    * "Question" (top, blue rectangle)

    * "S" (Solution, purple circle, dashed border)

    * "V" (Verification, yellow circle, dashed border)

* **Reward Function:** r(✅) = 1, r(❌) = 0 (top-right)

* **Dataset:** D<sub>learn</sub> (right, gray rectangle)

* **Process:** RL (below D<sub>learn</sub>, gray rectangle)

* **Loss:** PG Loss (dotted arrow from D<sub>learn</sub> to RL)

* **Arrows:** Indicate the flow of information.

* **Checkmarks (green):** Indicate correct/positive outcomes.

* **Crosses (red):** Indicate incorrect/negative outcomes.

**Legend (bottom-right):**

* ✅ Correct

* ❌ Incorrect

* S Solution

* EOT (End of Turn, dashed border)

* V Verification

### Detailed Analysis

**Left Diagram (Pair Supervised Fine-Tuning):**

1. **Initial State:** Starts with a "Question".

2. **Solution Branching:** The "Question" leads to four possible "Solution" nodes (S). Two are marked with a red "X" (incorrect), and two are marked with a green checkmark (correct).

3. **Verification Branching:** Each "Solution" node leads to a "Verification" node (V). The first V is marked with a red "X", the next two are marked with a green checkmark.

4. **Aggregation:** The results are aggregated and fed into D<sub>pair</sub>.

5. **Fine-Tuning:** D<sub>pair</sub> is used for Supervised Fine-Tuning (SFT).

6. **Final State:** Two possible sequences of Question, Solution, Verification. One sequence has a red "X" on the Solution node, the other has green checkmarks on both Solution and Verification.

**Right Diagram (Online Reinforcement Learning):**

1. **Initial State:** Starts with a "Question".

2. **Solution Branching:** The "Question" leads to four possible "Solution" nodes (S). Two are marked with a red "X" (incorrect), and two are marked with a green checkmark (correct).

3. **Verification Branching:** Each "Solution" node leads to a "Verification" node (V). The first two V's are marked with a green checkmark, the last is marked with a red "X".

4. **Subsequent Solution and Verification:** The first two branches continue with another Solution and Verification node. The first branch has a green checkmark on the Solution and Verification. The second branch has a red "X" on the Solution and a green checkmark on the Verification.

5. **Reward Function:** The reward function assigns a value of 1 for correct actions (✅) and 0 for incorrect actions (❌).

6. **Learning:** The reward signal is used to train the model through Reinforcement Learning (RL) using Policy Gradient Loss (PG Loss) on the dataset D<sub>learn</sub>.
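
The reward and learning steps above can be sketched in a few lines. This is a minimal illustration, assuming a plain REINFORCE-style objective. The diagram only says "PG Loss", so the exact loss form, the function names `reward` and `pg_loss`, and the sample log-probabilities are assumptions made for illustration.

```python
import math

def reward(is_correct: bool) -> float:
    """Binary reward as labeled in the diagram: 1 for correct, 0 for incorrect."""
    return 1.0 if is_correct else 0.0

def pg_loss(log_probs, rewards):
    """REINFORCE-style loss (assumed form): negative mean of reward-weighted log-probabilities."""
    if len(log_probs) != len(rewards):
        raise ValueError("log_probs and rewards must have the same length")
    return -sum(lp * r for lp, r in zip(log_probs, rewards)) / len(log_probs)

# Two hypothetical sampled outputs: (log-probability under the policy, was it correct?)
samples = [(math.log(0.5), True), (math.log(0.25), False)]
log_probs = [lp for lp, _ in samples]
rewards = [reward(ok) for _, ok in samples]
loss = pg_loss(log_probs, rewards)
```

With a 0/1 reward, incorrect samples contribute nothing to this loss, so only correct samples have their log-probability pushed up.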

### Key Observations

* Both diagrams illustrate a process of question answering, where solutions are proposed and then verified.

* The key difference lies in the learning mechanism: Pair Supervised Fine-Tuning uses a pre-collected dataset of pairs, while Online Reinforcement Learning learns directly from the interaction with the environment, using a reward signal.

* The diagrams highlight the importance of both correct and incorrect examples in the learning process.

### Interpretation

The diagrams compare two distinct approaches to training a question-answering model. Pair Supervised Fine-Tuning relies on a static, pre-collected dataset (D<sub>pair</sub>), which may limit its ability to generalize to unseen scenarios. Online Reinforcement Learning, by contrast, allows the model to learn dynamically from its interactions with the environment, potentially leading to better adaptation and performance in real-world settings. The binary reward function in the RL approach (1 for ✅, 0 for ❌) gives the model a clear learning signal, guiding it towards correct answers and away from incorrect ones. The "End of Turn" (EOT) nodes suggest that the process may involve multiple turns or interactions, which fits the multi-step structure of the RL diagram.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Pair Supervised Fine-Tuning & Online Reinforcement Learning

### Overview

The image presents a comparative diagram illustrating two machine learning approaches: Pair Supervised Fine-Tuning (SFT) and Online Reinforcement Learning (RL). Both diagrams depict a process starting with a "Question" and progressing through a series of "Solution" (S) and "Verification" (V) steps. The left side shows SFT, where the process is guided by paired data, while the right side shows RL, where the process is guided by rewards and penalties.

### Components/Axes

The diagram consists of two main sections, each with a similar structure. Key components include:

* **Question:** The initial input to the system.

* **Solution (S):** Represents a step towards answering the question. Visually represented by a circle with the letter "S" inside.

* **Verification (V):** Represents the evaluation of a solution step. Visually represented by a circle with the letter "V" inside.

* **Correct/Incorrect Indicators:** Green checkmarks (✓) indicate correct steps, while red crosses (✗) indicate incorrect steps.

* **D<sub>pair</sub>:** Represents the paired dataset used in SFT.

* **D<sub>learn</sub>:** Represents the learning dataset used in RL.

* **SFT:** Supervised Fine-Tuning, the training step applied to D<sub>pair</sub>.

* **RL:** Label for Reinforcement Learning.

* **PG_Loss:** Policy Gradient Loss.

* **r<sup>+</sup>(x) = 1, r<sup>-</sup>(x) = 0:** Reward function definition.

* **EOT:** End of Thought.

* **Legend:** Located in the bottom-right corner, defining the meaning of the checkmark and cross symbols, as well as the Solution and Verification nodes.

### Detailed Analysis / Content Details

**Pair Supervised Fine-Tuning (Left Side):**

* The process begins with a "Question" node at the top.

* From the "Question", multiple "Solution" (S) nodes branch out, each connected to a "Verification" (V) node.

* The first four "S-V" pairs are marked with red crosses (✗) indicating incorrect steps.

* The next "S-V" pair is marked with a green checkmark (✓) indicating a correct step.

* The final "S-V" pair is also marked with a green checkmark (✓) indicating a correct step.

* An arrow points downwards from the initial "Question" and "S-V" pairs to a simplified representation of the process: "Q -> S -> V -> V".

* Another arrow points downwards from "Q -> S -> V -> V" to "Q -> S -> V", indicating a further simplification.

* An arrow labeled "D<sub>pair</sub>" points from the initial branching structure to the "SFT" label.

**Online Reinforcement Learning (Right Side):**

* The process begins with a "Question" node at the top.

* From the "Question", multiple "Solution" (S) nodes branch out, each connected to a "Verification" (V) node.

* The first three "S-V" pairs are marked with green checkmarks (✓) indicating correct steps.

* The next "S-V" pair is marked with a red cross (✗) indicating an incorrect step.

* The following "S-V" pair is marked with a green checkmark (✓) indicating a correct step.

* The final "S-V" pair is marked with a red cross (✗) indicating an incorrect step.

* An arrow labeled "D<sub>learn</sub>" points from the branching structure to a box containing "PG_Loss" and "RL".

* Above the arrow, the reward function is defined: "r<sup>+</sup>(x) = 1, r<sup>-</sup>(x) = 0".

### Key Observations

* SFT relies on a pre-defined, paired dataset (D<sub>pair</sub>) to guide the learning process, resulting in a simplified output.

* RL uses a learning dataset (D<sub>learn</sub>) and a reward function to guide the learning process, utilizing Policy Gradient Loss within a Reinforcement Learning framework.

* The SFT process appears to converge more quickly to a simplified solution, while the RL process explores more possibilities before reaching a solution.

* The number of "S-V" pairs is the same in both diagrams.

* The proportion of correct and incorrect steps differs between the two approaches. SFT has more initial incorrect steps, while RL has a more mixed result.

### Interpretation

The diagram illustrates the fundamental difference between supervised learning (SFT) and reinforcement learning (RL). SFT learns from labeled examples, aiming to mimic the correct solutions provided in the dataset. This leads to a more direct path to a simplified solution. RL, on the other hand, learns through trial and error, receiving rewards for correct actions and penalties for incorrect ones. This allows it to explore a wider range of possibilities but may require more iterations to converge. The reward function (r<sup>+</sup>(x) = 1, r<sup>-</sup>(x) = 0) indicates a binary reward system: a reward of 1 for correct steps and 0 for incorrect steps. The diagram suggests that SFT is more efficient when a high-quality, labeled dataset is available, while RL is more suitable for problems where labeled data is scarce or the optimal solution is unknown. The "EOT" label suggests that the process is iterative and continues until a satisfactory solution is reached. The diagram is a conceptual illustration and does not provide specific numerical data or performance metrics.
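
One consequence of a binary reward can be stated directly. In the sketch below, the policy $\pi_\theta$, the question $x$, and the sampled output $y$ are notation introduced here, not symbols from the diagram, and the reward is written as a single function of correctness.

```latex
r(y) =
\begin{cases}
1 & \text{if } y \text{ is correct} \\
0 & \text{otherwise}
\end{cases}
\qquad\Longrightarrow\qquad
\mathbb{E}_{y \sim \pi_\theta(\cdot \mid x)}\big[\, r(y) \,\big]
= \Pr_{y \sim \pi_\theta(\cdot \mid x)}\big[\, y \text{ is correct} \,\big]
```

Under this reading, maximizing expected reward is the same as maximizing the probability that a sampled output is marked correct.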

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Comparison of Pair Supervised Fine-Tuning and Online Reinforcement Learning Processes

### Overview

The image is a technical diagram comparing two distinct training methodologies for AI models, presented side-by-side. The left panel illustrates "Pair Supervised Fine-Tuning," and the right panel illustrates "Online Reinforcement Learning." Both processes start with a "Question" and involve sequences of "Solution" (S) and "Verification" (V) steps, but they differ fundamentally in how they generate training data and apply learning signals.

### Components/Axes

The diagram is divided into two main panels by a vertical line.

**Common Elements (Both Panels):**

* **Question Node:** A blue rectangular box labeled "Question" at the top of each process flow.

* **Solution Node (S):** A purple circle labeled "S". The legend defines this as "Solution".

* **Verification Node (V):** A yellow circle labeled "V". The legend defines this as "Verification".

* **Correct/Incorrect Indicators:** A green checkmark (✓) indicates a correct step. A red cross (✗) indicates an incorrect step.

* **EOT Indicator:** A dashed purple box around a node. The legend defines this as "EOT" (likely "End of Thought" or a terminal state).

**Left Panel: Pair Supervised Fine-Tuning**

* **Title:** "Pair Supervised Fine-Tuning" at the top.

* **Data Flow:** Shows multiple parallel branches originating from the "Question". Each branch is a sequence of S and V nodes with correct/incorrect markings.

* **Dataset Symbol:** An arrow points from the collection of branches to a box labeled "D_pair".

* **Learning Process:** A dashed arrow points from "D_pair" to a box labeled "SFT" (Supervised Fine-Tuning).

**Right Panel: Online Reinforcement Learning**

* **Title:** "Online Reinforcement Learning" at the top.

* **Reward Function:** Text in the top-right corner defines: `r(✓) = 1` and `r(✗) = 0`.

* **Data Flow:** Shows a single, deeper branching tree structure originating from the "Question". The tree has multiple levels of S and V nodes.

* **Dataset Symbol:** An arrow points from the tree structure to a box labeled "D_learn".

* **Learning Process:** A dashed arrow points from "D_learn" to a box labeled "RL" (Reinforcement Learning), with the text "PG Loss" (Policy Gradient Loss) written on the arrow.

**Legend (Bottom-Right Corner):**

* A box containing the key for all symbols:

    * Green checkmark (✓): "Correct"

    * Red cross (✗): "Incorrect"

    * Purple circle (S): "Solution"

    * Yellow circle (V): "Verification"

    * Dashed purple box: "EOT"

### Detailed Analysis

**Pair Supervised Fine-Tuning (Left Panel):**

1. **Process:** A single "Question" generates multiple, independent solution-verification *pairs*. Each pair is a short sequence (e.g., Q -> S -> V).

2. **Data Generation:** The diagram shows four such pairs. The correctness of the S and V steps within each pair is mixed (e.g., one pair has S✗, V✓; another has S✓, V✗).

3. **Outcome:** All these paired trajectories are collected into a static dataset, `D_pair`. This dataset is then used for a standard Supervised Fine-Tuning (SFT) procedure. The learning is offline and based on pre-collected, paired examples.
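
The collection step described above can be sketched as follows. This is an illustration under assumptions: the record layout, the names `PairExample`, `build_d_pair`, and `to_sft_text`, and the decision to keep every pair without filtering are hypothetical. The diagram does not say how `D_pair` is stored or whether incorrect pairs are filtered out.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class PairExample:
    """One Q -> S -> V pair plus its correctness labels (hypothetical layout)."""
    question: str
    solution: str
    verification: str
    solution_correct: bool
    verification_correct: bool

def build_d_pair(question, attempts):
    """Collect every (solution, verification, s_ok, v_ok) attempt into a static list."""
    return [PairExample(question, s, v, s_ok, v_ok) for s, v, s_ok, v_ok in attempts]

def to_sft_text(example):
    """Flatten one pair into a single training string (assumed format)."""
    return f"Q: {example.question}\nS: {example.solution}\nV: {example.verification}"

# Hypothetical attempts for one question.
d_pair = build_d_pair(
    "2+2?",
    [("4", "Looks right", True, True), ("5", "Looks right", False, False)],
)
sft_corpus = [to_sft_text(ex) for ex in d_pair]
```

Because the list is built once and never updated during training, it matches the "offline" character the description attributes to SFT.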

**Online Reinforcement Learning (Right Panel):**

1. **Process:** A single "Question" generates a single, extended, and branching *trajectory* of solution and verification steps. This represents an interactive, step-by-step reasoning process.

2. **Data Generation:** The tree shows a path where initial incorrect steps (S✗) are followed by corrective steps, leading to a final correct verification (V✓). Other branches show failure paths (ending with V✗).

3. **Reward Signal:** Each verification step (V) receives a reward `r`: 1 for correct (✓), 0 for incorrect (✗). This reward signal is used to evaluate the entire trajectory.

4. **Outcome:** The experience from this interactive process (the tree of decisions and outcomes) is used to populate a dataset `D_learn`. This dataset is used for Reinforcement Learning (RL) with a Policy Gradient (PG) Loss, updating the model online based on the rewards received.
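
The online character of this loop (sample, score, update, repeat) can be shown with a toy example. This is a sketch, assuming a two-action softmax policy and a plain REINFORCE update. The function names, learning rate, and step count are illustrative choices, not details from the diagram.

```python
import math
import random

def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def online_reinforce(correct_action, steps=200, lr=0.5, seed=0):
    """Sample an action, score it with a 0/1 reward, and update the logits immediately."""
    rng = random.Random(seed)
    logits = [0.0, 0.0]
    for _ in range(steps):
        probs = softmax(logits)
        action = rng.choices([0, 1], weights=probs)[0]
        r = 1.0 if action == correct_action else 0.0
        # Gradient of log pi(action) w.r.t. the logits is onehot(action) - probs.
        for i in range(len(logits)):
            grad = (1.0 if i == action else 0.0) - probs[i]
            logits[i] += lr * r * grad
    return softmax(logits)

final_probs = online_reinforce(correct_action=1)
```

Each update uses a sample drawn from the current policy, which is what separates this loop from training on a fixed, pre-collected dataset.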

### Key Observations

1. **Structural Difference:** The SFT process uses flat, parallel pairs. The RL process uses a deep, sequential tree, indicating a more complex, multi-step reasoning chain.

2. **Learning Paradigm:** SFT learns from static, labeled pairs (correct/incorrect). RL learns from dynamic, reward-based feedback (1/0) on the outcome of a process.

3. **Error Correction:** The RL diagram explicitly shows a path where an initial incorrect solution (S✗) is followed by a correct one, suggesting the model can recover from errors during the reasoning process. The SFT diagram shows pairs as isolated units.

4. **Data Efficiency:** The RL approach appears to generate more diverse and sequential data from a single question compared to the SFT approach's multiple independent attempts.

### Interpretation

This diagram contrasts two fundamental approaches to training reasoning or verification capabilities in AI models.

* **Pair Supervised Fine-Tuning** represents a **static, imitation-based approach**. The model is trained to mimic correct solution-verification pairs from a fixed dataset (`D_pair`). It learns "what a good pair looks like" but may not learn the *process* of arriving at a correct answer through trial and error. The data is collected offline, possibly from human demonstrations or a stronger model.

* **Online Reinforcement Learning** represents a **dynamic, trial-and-error approach**. The model actively generates a reasoning trajectory, receives a reward based on the final verification outcome, and updates its policy to maximize future rewards. This teaches the model the *process* of reasoning, including how to correct mistakes, as it directly associates actions (solutions) with outcomes (rewards). The learning is online and interactive.

The core message is a shift from learning from **static examples** (SFT) to learning from **interactive experience and outcomes** (RL). The RL method, with its deeper tree and reward signal, is likely aimed at developing more robust, self-correcting, and process-oriented reasoning skills, whereas SFT provides a foundational capability based on curated examples. The "PG Loss" specification indicates the use of a policy gradient algorithm, a common RL technique for discrete action spaces like selecting the next solution step.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: Pair Supervised Fine-Tuning vs. Online Reinforcement Learning

### Overview

The image compares two machine learning training paradigms: **Pair Supervised Fine-Tuning (SFT)** and **Online Reinforcement Learning (RL)**. Both processes involve iterative evaluation of solutions (S) and verifications (V) against questions (Q), with feedback loops to refine performance. The diagrams use color-coded symbols (green checkmarks for correct, red X for incorrect) to denote evaluation outcomes.

---

### Components/Axes

#### Left Diagram: Pair Supervised Fine-Tuning

- **Question (Q)**: Input prompt or problem statement.

- **Solution (S)**: Candidate answer or model output.

- **Verification (V)**: Evaluation of S against ground truth.

- **EOT (End of Turn)**: Termination marker for a training iteration.

- **D_pair**: Dataset for pair-wise comparisons.

- **SFT**: Supervised Fine-Tuning phase.

- **Legend**:

    - Green checkmark (✓): Correct evaluation.

    - Red X (✗): Incorrect evaluation.

#### Right Diagram: Online Reinforcement Learning

- **PG:Loss**: Policy Gradient Loss function.

- **RL**: Reinforcement Learning optimization.

- **D_learn**: Learning dataset updated via RL.

- **Legend**: Same as left diagram (✓ = Correct, ✗ = Incorrect).

---

### Detailed Analysis

#### Pair Supervised Fine-Tuning (Left)

1. **Flow**:

    - A question (Q) generates multiple solution (S) and verification (V) pairs.

    - Correct (✓) and incorrect (✗) evaluations are recorded.

    - Data is aggregated into **D_pair**, which feeds into **SFT** for model refinement.

2. **Key Nodes**:

    - Multiple S and V nodes per question, suggesting batch processing.

    - Final S and V nodes are connected to **SFT**, indicating iterative improvement.

#### Online Reinforcement Learning (Right)

1. **Flow**:

    - A question (Q) generates S and V pairs, with outcomes feeding into **PG:Loss**.

    - **PG:Loss** drives updates to **RL**, which refines **D_learn**.

    - The process includes recursive evaluation (e.g., S → V → S → V loops).

2. **Key Nodes**:

    - **PG:Loss** acts as a feedback mechanism, optimizing **RL**.

    - **D_learn** is dynamically updated, unlike the static **D_pair** in SFT.

---

### Key Observations

1. **Structural Differences**:

    - SFT uses a static dataset (**D_pair**) with fixed evaluations.

    - RL employs a dynamic dataset (**D_learn**) updated via continuous feedback.

2. **Evaluation Outcomes**:

    - Both diagrams show mixed correctness (✓/✗) across S and V nodes, indicating iterative refinement.

3. **EOT vs. PG:Loss**:

    - EOT marks the end of a training cycle in SFT.

    - PG:Loss in RL quantifies the gradient for policy updates, enabling real-time learning.

---

### Interpretation

- **Pair Supervised Fine-Tuning** emphasizes batch-based correction, where multiple S/V pairs are evaluated before updating the model. This aligns with traditional supervised learning but lacks adaptability to new data.

- **Online Reinforcement Learning** introduces a dynamic, self-improving loop where **PG:Loss** directly influences **RL**, allowing the model to adapt to new questions and evaluation outcomes in real time. The recursive S/V loops suggest a focus on long-term reward maximization.

- **Notable Anomalies**: The left diagram’s EOT implies a fixed training horizon, while the right diagram’s recursive flow suggests unbounded learning. This highlights a trade-off between stability (SFT) and adaptability (RL).

The diagrams illustrate complementary approaches: SFT for foundational training and RL for continuous, context-aware optimization.
