
## Bar Charts: LLM Performance Comparison

### Overview

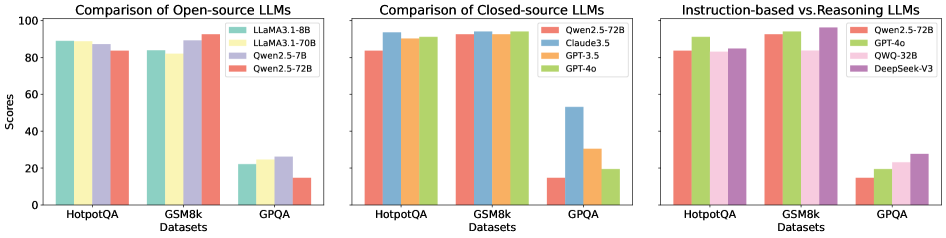

The image presents three bar charts comparing the performance of various Large Language Models (LLMs) across three datasets: HotpotQA, GSM8k, and GPQA. The first chart focuses on open-source LLMs, the second on closed-source LLMs, and the third compares instruction-based and reasoning LLMs. The y-axis represents "Scores," ranging from 0 to 100. The x-axis represents the datasets.

### Components/Axes

* **Y-axis:** "Scores" (0 to 100, linear scale)

* **X-axis:** "Datasets" (HotpotQA, GSM8k, GPQA)

* **Chart 1 (Open-source LLMs):**
    * Legend:
        * LLaMA3-8B (Yellow)
        * LLaMA3-70B (Light Blue)
        * Qwen2-7B (Orange)
        * Qwen2-5-72B (Red)

* **Chart 2 (Closed-source LLMs):**
    * Legend:
        * Qwen2.5-72B (Orange)
        * Claude3.5 (Light Blue)
        * GPT-3.5 (Yellow)
        * GPT-4o (Red)

* **Chart 3 (Instruction-based vs. Reasoning LLMs):**
    * Legend:
        * Qwen2.5-72B (Orange)
        * GPT-4o (Red)
        * QWQ-32B (Light Blue)
        * DeepSeek-V3 (Green)

### Detailed Analysis

**Chart 1: Comparison of Open-source LLMs**

* **HotpotQA:** LLaMA3-70B (approximately 92) performs best, followed by LLaMA3-8B (approximately 88), Qwen2-7B (approximately 85), and Qwen2-5-72B (approximately 82).

* **GSM8k:** LLaMA3-70B (approximately 98) performs best, followed by LLaMA3-8B (approximately 95), Qwen2-7B (approximately 93), and Qwen2-5-72B (approximately 90).

* **GPQA:** LLaMA3-70B (approximately 22) performs best, followed by Qwen2-7B (approximately 20), LLaMA3-8B (approximately 18), and Qwen2-5-72B (approximately 14).

**Chart 2: Comparison of Closed-source LLMs**

* **HotpotQA:** GPT-4o (approximately 95) performs best, followed by Qwen2.5-72B (approximately 92), Claude3.5 (approximately 90), and GPT-3.5 (approximately 87).

* **GSM8k:** GPT-4o (approximately 97) performs best, followed by Qwen2.5-72B (approximately 95), Claude3.5 (approximately 93), and GPT-3.5 (approximately 90).

* **GPQA:** GPT-4o (approximately 45) performs best, followed by Qwen2.5-72B (approximately 35), Claude3.5 (approximately 25), and GPT-3.5 (approximately 15).

**Chart 3: Instruction-based vs. Reasoning LLMs**

* **HotpotQA:** Qwen2.5-72B (approximately 94) performs best, followed by GPT-4o (approximately 92), QWQ-32B (approximately 88), and DeepSeek-V3 (approximately 85).

* **GSM8k:** GPT-4o (approximately 96) performs best, followed by Qwen2.5-72B (approximately 94), QWQ-32B (approximately 92), and DeepSeek-V3 (approximately 90).

* **GPQA:** GPT-4o (approximately 40) performs best, followed by Qwen2.5-72B (approximately 30), QWQ-32B (approximately 25), and DeepSeek-V3 (approximately 20).
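The approximate readings above can be captured in a small table and re-ranked programmatically, which is a quick way to check that each stated ordering matches the listed values. This is a minimal Python sketch: the numbers are the approximate bar heights from this description, not published benchmark results, and the names `SCORES` and `ranking` are illustrative.

```python
# Approximate scores read from the three bar charts (not official results).
SCORES = {
    "Chart 1": {
        "HotpotQA": {"LLaMA3-8B": 88, "LLaMA3-70B": 92, "Qwen2-7B": 85, "Qwen2-5-72B": 82},
        "GSM8k": {"LLaMA3-8B": 95, "LLaMA3-70B": 98, "Qwen2-7B": 93, "Qwen2-5-72B": 90},
        "GPQA": {"LLaMA3-8B": 18, "LLaMA3-70B": 22, "Qwen2-7B": 20, "Qwen2-5-72B": 14},
    },
    "Chart 2": {
        "HotpotQA": {"Qwen2.5-72B": 92, "Claude3.5": 90, "GPT-3.5": 87, "GPT-4o": 95},
        "GSM8k": {"Qwen2.5-72B": 95, "Claude3.5": 93, "GPT-3.5": 90, "GPT-4o": 97},
        "GPQA": {"Qwen2.5-72B": 35, "Claude3.5": 25, "GPT-3.5": 15, "GPT-4o": 45},
    },
    "Chart 3": {
        "HotpotQA": {"Qwen2.5-72B": 94, "GPT-4o": 92, "QWQ-32B": 88, "DeepSeek-V3": 85},
        "GSM8k": {"Qwen2.5-72B": 94, "GPT-4o": 96, "QWQ-32B": 92, "DeepSeek-V3": 90},
        "GPQA": {"Qwen2.5-72B": 30, "GPT-4o": 40, "QWQ-32B": 25, "DeepSeek-V3": 20},
    },
}


def ranking(scores: dict[str, int]) -> list[str]:
    """Return model names ordered from highest to lowest approximate score."""
    return sorted(scores, key=scores.get, reverse=True)


if __name__ == "__main__":
    # Print one "best > ... > worst" line per chart and dataset.
    for chart, datasets in SCORES.items():
        for dataset, scores in datasets.items():
            print(f"{chart} / {dataset}: {' > '.join(ranking(scores))}")
```

Because every value is an estimate read off a bar, small differences (two or three points) between adjacent models should not be over-interpreted.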

### Key Observations

* LLaMA3-70B consistently outperforms LLaMA3-8B across all datasets in the open-source comparison.

* GPT-4o outperforms the other closed-source LLMs on all three datasets in Chart 2.

* GPQA consistently yields the lowest scores across all models, indicating it is the most challenging dataset.

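* In Charts 2 and 3, the gap between the best and worst model is much wider on GPQA (about 30 and 20 points) than on HotpotQA or GSM8k (9 points or fewer). In Chart 1, the spreads are similar across datasets (8 to 10 points).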

* Qwen2.5-72B ranks second among the closed-source models on every dataset, behind GPT-4o and ahead of Claude3.5 and GPT-3.5.
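The observation about where the gap between models is widest reduces to simple arithmetic: the spread (highest minus lowest approximate score) on each dataset. A minimal Python sketch, assuming the approximate readings from the Detailed Analysis listed in legend order; `spread` and `widest_gap` are illustrative helper names.

```python
# Approximate scores per dataset, in legend order (not official results).
SCORES = {
    "Chart 1": {"HotpotQA": [88, 92, 85, 82], "GSM8k": [95, 98, 93, 90], "GPQA": [18, 22, 20, 14]},
    "Chart 2": {"HotpotQA": [92, 90, 87, 95], "GSM8k": [95, 93, 90, 97], "GPQA": [35, 25, 15, 45]},
    "Chart 3": {"HotpotQA": [94, 92, 88, 85], "GSM8k": [94, 96, 92, 90], "GPQA": [30, 40, 25, 20]},
}


def spread(values: list[int]) -> int:
    """Gap between the best and worst model on one dataset."""
    return max(values) - min(values)


def widest_gap(datasets: dict[str, list[int]]) -> str:
    """Name of the dataset with the largest best-to-worst spread."""
    return max(datasets, key=lambda name: spread(datasets[name]))


if __name__ == "__main__":
    for chart, datasets in SCORES.items():
        gaps = {name: spread(values) for name, values in datasets.items()}
        print(chart, gaps, "widest:", widest_gap(datasets))
```

With these readings, GPQA has the widest spread in Charts 2 and 3 (30 and 20 points), while in Chart 1 the spread on HotpotQA (10 points) is slightly larger than on GPQA (8 points).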

### Interpretation

The data suggests that model size helps within a model family but does not determine the ranking on its own: LLaMA3-70B outscores LLaMA3-8B on every dataset, yet Qwen2-5-72B scores below the much smaller Qwen2-7B (and below both LLaMA3 models) on all three datasets. GPT-4o is the strongest model overall, leading Chart 2 on every dataset and Chart 3 on GSM8k and GPQA. The varying performance across datasets indicates that the benchmarks test different capabilities. GPQA is clearly the hardest benchmark, with no model scoring above about 45, which is consistent with it requiring more complex reasoning or specialized knowledge. Open-source models are competitive on HotpotQA and GSM8k, where LLaMA3-70B (about 92 and 98) is close to GPT-4o (about 95 and 97), but they trail substantially on GPQA (about 22 versus 45). In the third chart, Qwen2.5-72B and GPT-4o score above QWQ-32B and DeepSeek-V3 on all three datasets; GPT-4o leads on GSM8k and GPQA, while Qwen2.5-72B leads on HotpotQA. The choice of model may therefore depend on the specific task and dataset.
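Whether larger models score higher in the open-source comparison can be checked by averaging each model's approximate Chart 1 scores and ordering the models by parameter count. A minimal Python sketch: the parameter counts are taken from the model names (8B, 70B, 7B, 72B), the scores are the approximate readings from this description, and `CHART1`, `PARAMS_B`, and `mean_score` are illustrative names.

```python
# Approximate Chart 1 scores as (HotpotQA, GSM8k, GPQA); not official results.
CHART1 = {
    "LLaMA3-8B": (88, 95, 18),
    "LLaMA3-70B": (92, 98, 22),
    "Qwen2-7B": (85, 93, 20),
    "Qwen2-5-72B": (82, 90, 14),
}

# Parameter counts in billions, inferred from the model names.
PARAMS_B = {"LLaMA3-8B": 8, "LLaMA3-70B": 70, "Qwen2-7B": 7, "Qwen2-5-72B": 72}


def mean_score(model: str) -> float:
    """Unweighted mean of a model's three approximate dataset scores."""
    scores = CHART1[model]
    return sum(scores) / len(scores)


if __name__ == "__main__":
    # List models from smallest to largest with their mean score.
    for model in sorted(PARAMS_B, key=PARAMS_B.get):
        print(f"{model:>12} {PARAMS_B[model]:>3}B  mean={mean_score(model):.1f}")
    largest = max(PARAMS_B, key=PARAMS_B.get)
    best = max(CHART1, key=mean_score)
    print("largest:", largest, "| best mean:", best)
```

With these readings the means are about 67.0 (LLaMA3-8B), 70.7 (LLaMA3-70B), 66.0 (Qwen2-7B), and 62.0 (Qwen2-5-72B), so the largest model has the lowest average. An unweighted mean is a simplification; it lets GPQA's low absolute scores pull every average down by a similar amount.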