## Diagram & Code Snippet: Multi-Task Learning with Shared Backbone

### Overview

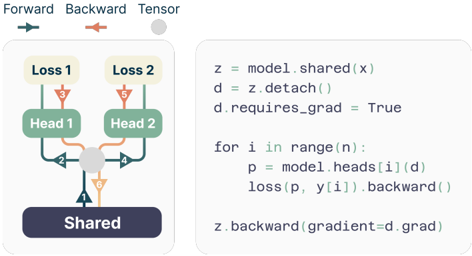

The image is a composite technical illustration divided into two primary sections. On the left is a schematic diagram of a neural network architecture designed for multi-task learning. On the right is a corresponding Python code snippet that implements a specific training procedure for this architecture. The diagram uses color-coded arrows to illustrate the flow of data (forward pass) and gradients (backward pass).

### Components/Axes

**Left Diagram Components:**

1. **Legend (Top-Left):**

* `Forward` (Teal arrow pointing right)

* `Backward` (Orange arrow pointing left)

* `Tensor` (Gray filled circle)

2. **Architecture Blocks (from bottom to top):**

* `Shared`: A dark purple rectangular block at the base.

* `Head 1` & `Head 2`: Two green rectangular blocks positioned above the Shared block.

* `Loss 1` & `Loss 2`: Two light yellow rectangular blocks at the top.

3. **Connections (Arrows):**

* **Forward Pass (Teal):** Arrows flow upward from `Shared` to both `Head 1` and `Head 2`, and then from each Head to its respective `Loss`.

* **Backward Pass (Orange):** Arrows flow downward from `Loss 1` and `Loss 2` to their respective `Head`, and then converge at a gray `Tensor` circle positioned between the two heads. From this tensor, a single orange arrow points back down to the `Shared` block.

* **Tensor Node:** A gray circle acts as a junction point for the backward gradients from both heads before they are passed to the shared layer.

**Right Code Snippet:**

The code is written in a Python-like pseudocode syntax. It is presented as plain text within a light gray rounded rectangle.

### Detailed Analysis

**Diagram Flow Analysis:**

* **Forward Trend:** The data flow is strictly bottom-up and divergent. A single input `x` is processed by the `Shared` layer. The resulting representation is then fed independently into two separate task-specific heads (`Head 1`, `Head 2`), each producing its own output and calculating its own loss (`Loss 1`, `Loss 2`).

* **Backward Trend:** The gradient flow is convergent. Gradients from both `Loss 1` and `Loss 2` are backpropagated through their respective heads. These gradients meet at an intermediate tensor node (the gray circle). A single, combined gradient signal is then passed back to update the `Shared` layer. This suggests a mechanism for aggregating gradients from multiple tasks before updating the shared parameters.

**Code Transcription:**

```python

z = model.shared(x)

d = z.detach()

d.requires_grad = True

for i in range(n):

p = model.heads[i](d)

loss(p, y[i]).backward()

z.backward(gradient=d.grad)

```

**Code Logic Breakdown:**

1. `z = model.shared(x)`: The shared layer processes input `x` to produce representation `z`.

2. `d = z.detach()`: A detached copy `d` of the representation `z` is created. This severs the direct computational graph link between `d` and the parameters of `model.shared`.

3. `d.requires_grad = True`: The detached tensor `d` is manually set to require gradients. This allows it to accumulate gradients from the subsequent head computations.

4. **Loop (`for i in range(n)`):** Iterates through `n` tasks (corresponding to the heads).

* `p = model.heads[i](d)`: The i-th head processes the shared representation `d`.

* `loss(p, y[i]).backward()`: The loss for task `i` is computed and backpropagated. Gradients flow through the head and accumulate on `d.grad`, but **do not** flow further back into `model.shared` because of the `.detach()` operation earlier.

5. `z.backward(gradient=d.grad)`: After the loop, the accumulated gradients from all tasks (`d.grad`) are manually passed backward through the original, non-detached tensor `z`. This single call updates the parameters of `model.shared`.

### Key Observations

1. **Gradient Isolation Technique:** The core technical insight is the use of `.detach()` and manual gradient assignment. This prevents gradients from the individual task losses from interfering with each other *within* the shared layer's parameter update during the forward/backward pass of each task. The final update to the shared layer uses an aggregated gradient signal.

2. **Architectural vs. Procedural Representation:** The diagram shows a conceptual, simultaneous multi-task setup. The code reveals a sequential implementation where tasks are processed one after another in a loop, but their gradients are aggregated before the shared layer update.

3. **Spatial Grounding:** The legend is positioned top-left, clearly defining the visual language. The diagram occupies the left ~40% of the image, the code the right ~60%. The gray tensor node in the diagram is spatially centered between the two heads, visually representing its role as a gradient junction.

### Interpretation

This image illustrates a sophisticated method for **multi-task learning** aimed at mitigating "gradient conflict" or "negative transfer" between tasks. In naive multi-task learning, simultaneous backpropagation from different losses can lead to conflicting gradient directions for the shared parameters, harming performance.

The depicted technique, often associated with methods like **Gradient Surgery** or **PCGrad**, proposes a solution:

1. **Isolate:** Compute task-specific gradients on a detached copy of the shared representation (`d`). This allows each task's gradient to be calculated independently without immediately affecting the shared weights.

2. **Aggregate:** Combine the gradients from all tasks (the code implies simple summation via `.backward()` calls accumulating on `d.grad`, though more complex aggregation like projection could be implemented).

3. **Update:** Apply the aggregated, potentially "conflict-resolved" gradient to update the shared model (`z.backward()`).

The diagram simplifies this by showing a single convergence point (the gray tensor), while the code exposes the precise mechanism using PyTorch-style autograd operations. The overall goal is to enable the shared feature extractor to learn a representation that is beneficial for all tasks simultaneously, by carefully controlling how gradient information from different tasks is combined. This is a critical technique for building robust multi-task models in fields like computer vision (e.g., joint depth estimation, segmentation, and detection) or natural language processing (e.g., joint parsing, tagging, and classification).