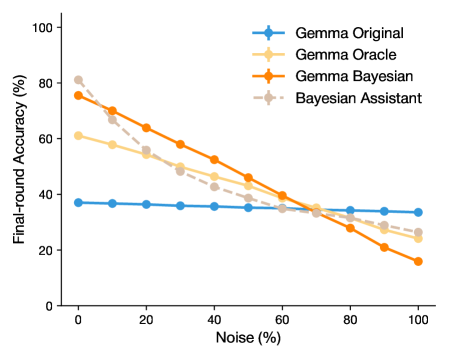

## Line Chart: Model Accuracy vs. Noise Level

### Overview

This line chart compares four models (or methods) as noise increases. It shows how each model's "Final-round Accuracy" changes as "Noise" rises from 0% to 100%: three series degrade substantially, while Gemma Original stays nearly flat.

### Components/Axes

* **X-Axis:** Labeled "Noise (%)". The scale runs from 0 to 100 with major tick marks at intervals of 20 (0, 20, 40, 60, 80, 100).

* **Y-Axis:** Labeled "Final-round Accuracy (%)". The scale runs from 0 to 100 with major tick marks at intervals of 20 (0, 20, 40, 60, 80, 100).

* **Legend:** Located in the top-right quadrant of the chart area. It contains four entries:

    1. **Gemma Original:** Represented by a solid blue line with circular markers.

    2. **Gemma Oracle:** Represented by a solid light yellow/beige line with circular markers.

    3. **Gemma Bayesian:** Represented by a solid orange line with circular markers.

    4. **Bayesian Assistant:** Represented by a dashed grey line with circular markers.

### Detailed Analysis

The chart plots four data series. Below is an analysis of each, including approximate values extracted from the chart. Values are estimated based on the grid lines and carry inherent visual uncertainty.

**1. Gemma Original (Blue Line, Solid)**

* **Trend:** Nearly flat, losing only about 4 percentage points (from ~38% to ~34%) across the full noise range.

* **Data Points (Approximate):**

    * Noise 0%: ~38%

    * Noise 20%: ~37%

    * Noise 40%: ~36%

    * Noise 60%: ~35%

    * Noise 80%: ~34%

    * Noise 100%: ~34%

**2. Gemma Oracle (Light Yellow Line, Solid)**

* **Trend:** Steady, moderate downward slope.

* **Data Points (Approximate):**

    * Noise 0%: ~61%

    * Noise 20%: ~55%

    * Noise 40%: ~48%

    * Noise 60%: ~40%

    * Noise 80%: ~32%

    * Noise 100%: ~25%

**3. Gemma Bayesian (Orange Line, Solid)**

* **Trend:** Steep, consistent downward slope. It starts as the second-highest performer but ends as the lowest.

* **Data Points (Approximate):**

    * Noise 0%: ~76%

    * Noise 20%: ~64%

    * Noise 40%: ~52%

    * Noise 60%: ~40%

    * Noise 80%: ~28%

    * Noise 100%: ~16%

**4. Bayesian Assistant (Grey Line, Dashed)**

* **Trend:** Steep decline up to about 40% noise (steepest between 20% and 40%), then a more gradual slope. It starts as the highest performer but is overtaken by Gemma Original at high noise levels (between 60% and 80%).

* **Data Points (Approximate):**

    * Noise 0%: ~81%

    * Noise 20%: ~68%

    * Noise 40%: ~50%

    * Noise 60%: ~38%

    * Noise 80%: ~30%

    * Noise 100%: ~22%
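The approximate values above can be collected into a small Python structure to make each series' decline explicit. This is a minimal sketch: the numbers are the visual estimates listed in this section, not exact source data, and the names `NOISE`, `ACCURACY`, `total_drop`, and `segment_changes` are illustrative.

```python
# Approximate values read off the chart (visual estimates, not exact data).
NOISE = [0, 20, 40, 60, 80, 100]
ACCURACY = {
    "Gemma Original": [38, 37, 36, 35, 34, 34],
    "Gemma Oracle": [61, 55, 48, 40, 32, 25],
    "Gemma Bayesian": [76, 64, 52, 40, 28, 16],
    "Bayesian Assistant": [81, 68, 50, 38, 30, 22],
}


def total_drop(values):
    """Accuracy lost between 0% and 100% noise, in percentage points."""
    return values[0] - values[-1]


def segment_changes(values):
    """Change in accuracy across each 20-point noise interval."""
    return [b - a for a, b in zip(values, values[1:])]


for name, values in ACCURACY.items():
    print(f"{name}: drop={total_drop(values)} pts, "
          f"per-segment={segment_changes(values)}")
```

With these estimates, Gemma Original loses about 4 points overall, Gemma Oracle about 36, Bayesian Assistant about 59, and Gemma Bayesian about 60. The per-segment changes also show that Bayesian Assistant's largest single drop falls between 20% and 40% noise.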

### Key Observations

1. **Performance Hierarchy Inversion:** At 0% noise, the order from highest to lowest accuracy is: Bayesian Assistant > Gemma Bayesian > Gemma Oracle > Gemma Original. At 100% noise, the order is nearly reversed: Gemma Original > Gemma Oracle > Bayesian Assistant > Gemma Bayesian. Gemma Original moves from last to first and Gemma Oracle from third to second; only the two Bayesian series keep their relative order, falling to the bottom two places.

2. **Robustness vs. Peak Performance:** The "Gemma Original" model exhibits high robustness to noise (flat line) but has the lowest peak accuracy. Conversely, the "Bayesian Assistant" and "Gemma Bayesian" models achieve high accuracy in low-noise conditions but are highly sensitive to noise, suffering significant degradation.

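3. **Crossover Points:** The lines intersect at various points, indicating noise levels where model performance is equivalent. For example, based on the approximate values above, the Gemma Original and Gemma Bayesian lines cross between 60% and 80% noise (roughly 69% by linear interpolation), and the Gemma Oracle and Gemma Bayesian lines meet at about 60% noise, where both are near 40% accuracy.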

4. **Dashed Line Distinction:** The "Bayesian Assistant" is the only series represented with a dashed line, potentially indicating it is a baseline, a different class of model, or uses a distinct methodology.
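The ranking and crossover observations can be checked against the approximate values from the Detailed Analysis. The sketch below assumes straight-line segments between the plotted points, which is how the chart draws them; the values are visual estimates, and the helper names `ranking` and `crossovers` are hypothetical.

```python
# Approximate values read off the chart (visual estimates, not exact data).
NOISE = [0, 20, 40, 60, 80, 100]
ACCURACY = {
    "Gemma Original": [38, 37, 36, 35, 34, 34],
    "Gemma Oracle": [61, 55, 48, 40, 32, 25],
    "Gemma Bayesian": [76, 64, 52, 40, 28, 16],
    "Bayesian Assistant": [81, 68, 50, 38, 30, 22],
}


def ranking(index):
    """Series names ordered from highest to lowest accuracy at NOISE[index]."""
    return sorted(ACCURACY, key=lambda name: ACCURACY[name][index], reverse=True)


def crossovers(a, b):
    """Noise levels where series a and b intersect (piecewise-linear)."""
    diffs = [x - y for x, y in zip(ACCURACY[a], ACCURACY[b])]
    points = []
    for i in range(len(diffs) - 1):
        d0, d1 = diffs[i], diffs[i + 1]
        if d0 == 0:
            # The two series coincide exactly at a plotted point.
            points.append(float(NOISE[i]))
        elif d0 * d1 < 0:
            # Sign change: solve d0 + t * (d1 - d0) = 0 for t in (0, 1).
            t = d0 / (d0 - d1)
            points.append(NOISE[i] + t * (NOISE[i + 1] - NOISE[i]))
    if diffs[-1] == 0:
        points.append(float(NOISE[-1]))
    return points


print(ranking(0))    # order at 0% noise
print(ranking(-1))   # order at 100% noise
print([round(p, 1) for p in crossovers("Gemma Original", "Gemma Bayesian")])
# prints [69.1]
```

Because the inputs carry a few points of reading error, the interpolated crossover positions should be treated as rough locations rather than precise thresholds.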

### Interpretation

This chart illustrates a classic trade-off in machine learning and robust systems: **specialization versus generalization**.

* The **Bayesian Assistant** and **Gemma Bayesian** models appear to be highly specialized or optimized for clean data (low noise). Their steep decline suggests they may overfit to the training distribution and lack mechanisms to handle corrupted or out-of-distribution inputs.

* The **Gemma Original** model, while less accurate in ideal conditions, is remarkably stable, although at a low level (roughly 34% to 38% accuracy throughout). This suggests it may have inherent regularization or a simpler architecture that prevents it from learning noise-specific patterns, making it more predictable in real-world environments where data quality cannot be guaranteed.

* The **Gemma Oracle** model sits in the middle, offering a compromise between peak performance and noise robustness.

The practical implication is that model selection should be driven by the expected operational environment. For controlled settings with clean data, the Bayesian variants (Bayesian Assistant and Gemma Bayesian) give the highest accuracy in this chart. For applications where input noise is very high (beyond roughly 70% to 75% by these estimates), "Gemma Original" has the highest accuracy and would be the safer choice, despite its lower peak accuracy. Where noise is significant or variable (e.g., real-world sensor data, user-generated content), its flat curve also makes its performance more predictable. The chart quantifies the cost of robustness in terms of peak performance, and vice versa.
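The selection rule described above can be sketched as a lookup over the chart's approximate values. This is an illustration under stated assumptions, not a deployment recommendation: the values are visual estimates, accuracy between plotted points is assumed to follow straight-line segments, and `interpolate` and `best_model` are hypothetical helper names.

```python
# Approximate values read off the chart (visual estimates, not exact data).
NOISE = [0, 20, 40, 60, 80, 100]
ACCURACY = {
    "Gemma Original": [38, 37, 36, 35, 34, 34],
    "Gemma Oracle": [61, 55, 48, 40, 32, 25],
    "Gemma Bayesian": [76, 64, 52, 40, 28, 16],
    "Bayesian Assistant": [81, 68, 50, 38, 30, 22],
}


def interpolate(values, noise):
    """Piecewise-linear estimate of accuracy at a noise level in [0, 100]."""
    if not 0 <= noise <= 100:
        raise ValueError("noise must be between 0 and 100")
    for i in range(len(NOISE) - 1):
        x0, x1 = NOISE[i], NOISE[i + 1]
        if x0 <= noise <= x1:
            t = (noise - x0) / (x1 - x0)
            return values[i] + t * (values[i + 1] - values[i])


def best_model(noise):
    """Series with the highest estimated accuracy at the given noise level."""
    return max(ACCURACY, key=lambda name: interpolate(ACCURACY[name], noise))


for level in (0, 50, 90, 100):
    print(level, best_model(level))
```

Near the crossover region the series are within a few points of each other, which is inside the reading error of the estimates, so the reported winner there is not meaningful on its own.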