## Line Graph: Final-round Accuracy vs. Noise (%)

### Overview

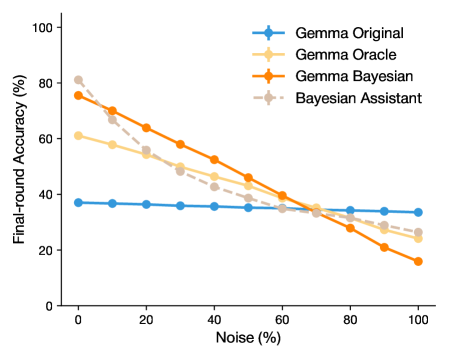

The graph illustrates the relationship between noise percentage (0-100%) and final-round accuracy (%) for four distinct models: Gemma Original, Gemma Oracle, Gemma Bayesian, and Bayesian Assistant. Gemma Oracle, Gemma Bayesian, and Bayesian Assistant lose accuracy as noise increases, at slightly different rates, while Gemma Original stays roughly flat.

### Components/Axes

- **X-axis**: Noise (%) ranging from 0 to 100 in 20% increments.

- **Y-axis**: Final-round Accuracy (%) ranging from 0 to 100 in 20% increments.

- **Legend**: Located in the top-right corner, associating colors with models:

  - **Blue**: Gemma Original

  - **Orange**: Gemma Oracle

  - **Gray**: Gemma Bayesian

  - **Dashed Gray**: Bayesian Assistant

### Detailed Analysis

1. **Gemma Original (Blue)**:

   - Maintains a flat line at ~35-40% accuracy across all noise levels.

   - No significant deviation observed.

2. **Gemma Oracle (Orange)**:

   - Starts at ~75% accuracy at 0% noise.

   - Declines steadily to ~20% at 100% noise.

   - Total drop of ~55 percentage points, tied with Bayesian Assistant for the steepest decline.

3. **Gemma Bayesian (Gray)**:

   - Begins at ~80% accuracy at 0% noise.

   - Decreases to ~30% at 100% noise.

   - Slightly less steep decline than Gemma Oracle.

4. **Bayesian Assistant (Dashed Gray)**:

   - Starts at ~80% accuracy at 0% noise.

   - Drops to ~25% at 100% noise.

   - Similar trajectory to Gemma Bayesian but ends marginally lower (~25% vs. ~30%).
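The endpoint readings above can be turned into a quick comparison of how much each model loses over the full noise range. The following is a minimal sketch, not part of the original figure: the values are the approximate readings listed above, the 37.5% figure for Gemma Original is an assumed midpoint of its ~35-40% band, and the names `ENDPOINTS`, `total_drop`, and `average_slope` are hypothetical.

```python
# Approximate (accuracy at 0% noise, accuracy at 100% noise) readings taken
# from the description above. They are eyeballed from the plot, so treat
# them as rough estimates.
ENDPOINTS = {
    "Gemma Original": (37.5, 37.5),      # assumed midpoint of the ~35-40% band
    "Gemma Oracle": (75.0, 20.0),
    "Gemma Bayesian": (80.0, 30.0),
    "Bayesian Assistant": (80.0, 25.0),
}


def total_drop(start: float, end: float) -> float:
    """Accuracy lost between 0% and 100% noise, in percentage points."""
    return start - end


def average_slope(start: float, end: float, noise_span: float = 100.0) -> float:
    """Average change in accuracy per percentage point of noise."""
    return (end - start) / noise_span


drops = {name: total_drop(*vals) for name, vals in ENDPOINTS.items()}
slopes = {name: average_slope(*vals) for name, vals in ENDPOINTS.items()}

# Print the models from largest to smallest drop.
for name in sorted(drops, key=drops.get, reverse=True):
    print(f"{name:20s} drop={drops[name]:5.1f} pts  slope={slopes[name]:+.2f} pts per % noise")
```

With these readings, Gemma Oracle and Bayesian Assistant both lose about 55 points, Gemma Bayesian about 50, and Gemma Original none. Because the inputs are approximate, differences of a few points should not be over-interpreted.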

### Key Observations

- **Initial Performance**: Gemma Oracle and Bayesian models (Gemma Bayesian/Bayesian Assistant) outperform Gemma Original at 0% noise.

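- **Noise Sensitivity**: Gemma Oracle, Gemma Bayesian, and Bayesian Assistant all degrade with noise; Gemma Oracle and Bayesian Assistant show the largest drops (~55 percentage points each), while Gemma Original is essentially unaffected.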

- **Robustness**: Bayesian approaches (Gemma Bayesian/Bayesian Assistant) outperform Gemma Original at low noise, but at 100% noise both (~30% and ~25%) fall below Gemma Original's ~35-40%.

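- **Dashed Line Distinction**: Bayesian Assistant is the only series drawn with a dashed line, which suggests it plays a different role from the three Gemma variants (for example, a reference Bayesian method); the graph alone does not confirm this.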
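Since the declining curves start well above Gemma Original and end below it, each must cross the flat Gemma Original line somewhere in between. The sketch below estimates those crossover points. It rests on two assumptions that the figure description does not confirm: each declining curve is treated as a straight line between its 0% and 100% readings, and Gemma Original is fixed at 37.5% (the midpoint of its ~35-40% band). The function name `crossover_noise` is hypothetical.

```python
from typing import Optional

BASELINE = 37.5  # assumed flat accuracy of Gemma Original (midpoint of ~35-40%)

# Approximate (accuracy at 0% noise, accuracy at 100% noise) readings.
DECLINING = {
    "Gemma Oracle": (75.0, 20.0),
    "Gemma Bayesian": (80.0, 30.0),
    "Bayesian Assistant": (80.0, 25.0),
}


def crossover_noise(start: float, end: float, baseline: float,
                    noise_span: float = 100.0) -> Optional[float]:
    """Noise level at which a straight line from `start` to `end` reaches
    `baseline`, or None if the line is flat or never reaches it in range."""
    if start == end:
        return None
    x = (start - baseline) / (start - end) * noise_span
    return x if 0.0 <= x <= noise_span else None


crossovers = {
    name: crossover_noise(start, end, BASELINE)
    for name, (start, end) in DECLINING.items()
}

for name, x in crossovers.items():
    print(f"{name:20s} falls to the Gemma Original level at ~{x:.0f}% noise")
```

Under these assumptions the crossovers land at roughly 68% noise for Gemma Oracle, 77% for Bayesian Assistant, and 85% for Gemma Bayesian. If the real curves are not linear, the actual crossover points will differ.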

### Interpretation

The data suggest a trade-off between peak accuracy and noise resilience. Gemma Oracle and the two Bayesian models (Gemma Bayesian/Bayesian Assistant) reach ~75-80% accuracy at 0% noise but lose roughly 50-55 percentage points by 100% noise, so their advantage depends on input quality. Gemma Original's stability at ~35-40% suggests limited adaptability: it neither benefits from clean input nor suffers from noisy input, and at 100% noise it ends above the other three models. Gemma Oracle's high initial accuracy may stem from oracle-guided training, and the Bayesian Assistant's dashed line may indicate a distinct kind of method, but neither can be confirmed from the graph alone. These trends underscore the importance of model design choices in real-world noisy environments.