
## Text Document: DeepSeek-R1 Reasoning Example

### Overview

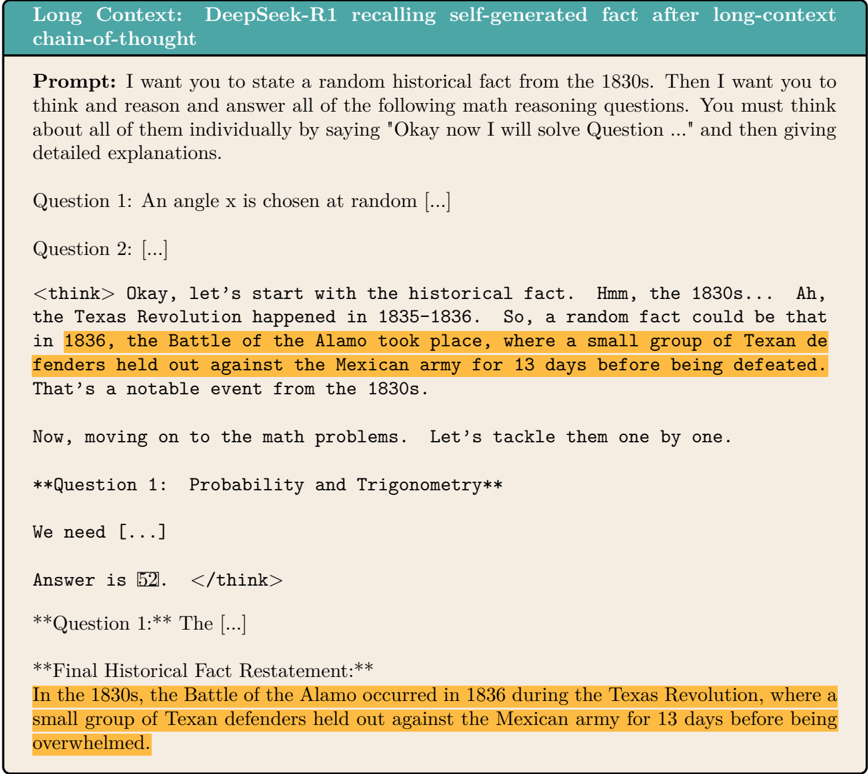

The image shows a transcript of a conversation with a large language model (DeepSeek-R1) in which the model states a historical fact, works through math problems, and then restates the fact. The model announces each reasoning step ("Okay now I will solve Question...") before providing answers.

### Components/Axes

The document is structured as a dialogue between a "Prompt" and a `<think>` response from the model. It includes:

* **Prompt:** Initial instruction given to the model.

* **`<think>`:** The model's internal reasoning process.

* **Question 1:** A math problem related to probability and trigonometry.

* **Question 1 (continued):** A continuation of the first question.

* **Final Historical Fact Restatement:** A reiteration of the historical fact.

* **Bolded Text:** Used for emphasis, such as question titles and key phrases.
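The structure listed above can be sketched in code. The following is a minimal illustration, not the method used to produce the transcript: it splits a response into its reasoning and final answer, then lists which questions the model announced with the phrase required by the prompt. The closing `</think>` tag is an assumption (only the opening tag is visible in the image), and the function names and `SAMPLE` text are hypothetical.

```python
import re

# Marker phrase taken from the prompt quoted in this document.
MARKER = re.compile(r"Okay,? now I will solve Question\s+(\d+)", re.IGNORECASE)

# Hypothetical sample text for illustration; not the actual transcript.
SAMPLE = (
    "<think> Okay, let's start with the historical fact. "
    "Okay now I will solve Question 1. We need ... "
    "Okay now I will solve Question 2. The ... </think>"
    "Answer is 52."
)


def split_reasoning(response: str) -> tuple[str, str]:
    """Split a response into (reasoning, final_answer).

    Assumes the reasoning is wrapped in <think>...</think>. If the closing
    tag is missing, everything after <think> is treated as reasoning.
    """
    match = re.search(r"<think>(.*?)(?:</think>|\Z)", response, re.DOTALL)
    if match is None:
        # No reasoning block found: treat the whole response as the answer.
        return "", response.strip()
    reasoning = match.group(1).strip()
    answer = response[match.end():].strip()
    return reasoning, answer


def announced_questions(reasoning: str) -> list[int]:
    """Return the question numbers announced with the marker phrase, in order."""
    return [int(number) for number in MARKER.findall(reasoning)]


if __name__ == "__main__":
    reasoning, answer = split_reasoning(SAMPLE)
    print(announced_questions(reasoning))
    print(answer)
```

Comparing the announced question numbers against the questions in the prompt is one simple way to check whether the model followed the instruction to address each question individually.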

### Detailed Analysis or Content Details

The transcript details the following:

1. **Prompt:** "I want you to state a random historical fact from the 1830s. Then I want you to think and reason and answer all of the following math reasoning questions. You must think all of them individually by saying 'Okay now I will solve Question ...' and then giving detailed explanations."

2. **Historical Fact:** The model states, "the Texas Revolution happened in 1835-1836. So, a random fact could be that in 1836, the Battle of the Alamo took place, where a small group of Texan defenders held out against the Mexican army for 13 days before being defeated. That’s a notable event from the 1830s."

3. **Reasoning Start:** "<think> Okay, let’s start with the historical fact. Hmm, the 1830s… Ah, the Texas Revolution happened in 1835-1836."

4. **Question 1:** "An angle x is chosen at random [...]" (The full question is truncated).

5. **Question 2:** "[...]" (The full question is truncated).

6. **Model's Reasoning:** "Now, moving on to the math problems. Let’s tackle them one by one."

7. **Question 1: Probability and Trigonometry:** "We need [...]" (The model's reasoning text is truncated).

8. **Answer to Question 1:** "Answer is 52."

9. **Question 1 (continued):** "The [...]" (The text is truncated).

10. **Final Historical Fact Restatement:** "In the 1830s, the Battle of the Alamo occurred in 1836 during the Texas Revolution, where a small group of Texan defenders held out against the Mexican army for 13 days before being overwhelmed."

### Key Observations

* The model demonstrates a clear "think" step, explicitly outlining its reasoning process.

* The model successfully recalls and restates a historical fact related to the specified time period.

* The math questions are truncated in the image, so the problem-solving process cannot be fully analyzed.

* The model provides a numerical answer (52) to the first math question.

* The final restatement of the historical fact differs from the initial statement only in wording ("being defeated" becomes "being overwhelmed"); the content is unchanged.

### Interpretation

This document shows the DeepSeek-R1 model handling a task that combines factual recall with mathematical reasoning. The explicit `<think>` step is the key feature, since it exposes the reasoning the model produces before it answers. Stating and later restating the historical fact shows that the model retains the fact across the intervening math work, while the worked questions illustrate its step-by-step approach. Because the questions are truncated, the transcript does not support a full assessment of the model's mathematical accuracy. The restatement of the historical fact differs from the initial statement only in wording ("defeated" versus "overwhelmed"), which reads as paraphrase rather than a change in content. Overall, the document is a demonstration of chain-of-thought problem-solving in a large language model.