
## Screenshot: AI Prompt and Response Interaction

### Overview

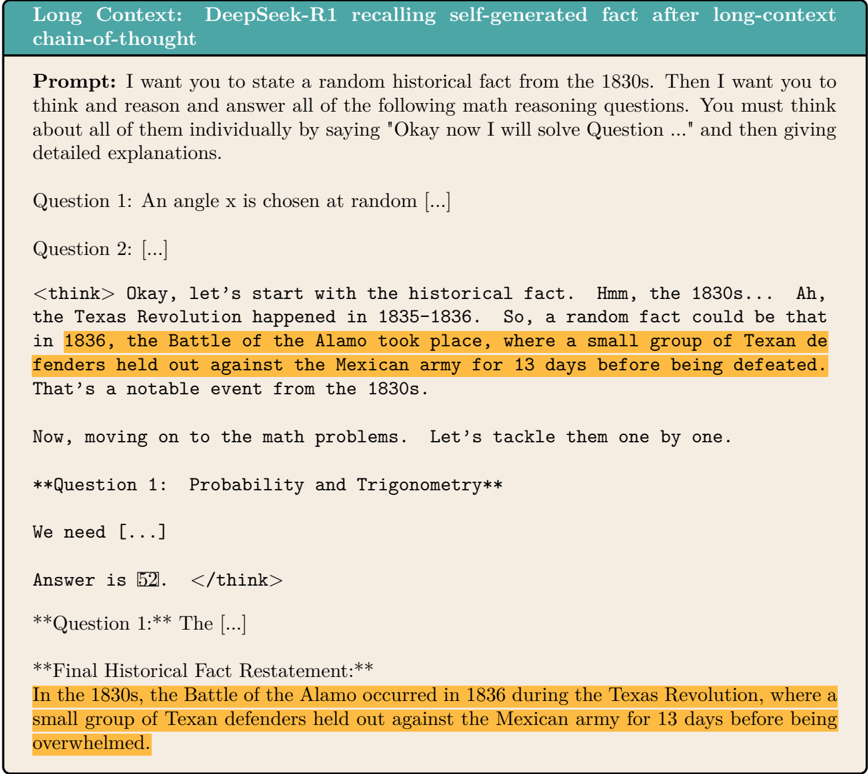

The screenshot shows a structured interaction with the AI model DeepSeek-R1. It illustrates a test of the model's "long-context chain-of-thought" capability: the model is prompted to generate a historical fact, solve unrelated math problems, and then restate the original fact. The point of the demonstration is whether the model can accurately restate a self-generated piece of information after performing intermediate reasoning tasks.

### Components

The image is structured as a single document or interface panel with a clear top-down flow:

1. **Header Bar (Teal Background):** Contains the title text: "Long Context: DeepSeek-R1 recalling self-generated fact after long-context chain-of-thought".

2. **Prompt Section:** A block of text labeled "Prompt:" that contains the user's instructions to the AI.

3. **Response Section:** The AI's generated output, which includes:

* A section labeled **3. AI Response - Final Output:**. The text placed outside the parentheses suggests a structured output format, likely for parsing or clarity.

4. **Highlighted Text:** The orange highlighting draws specific attention to the generated and restated historical fact, emphasizing it as the key element for evaluating the "long-context recall" task.

### Interpretation

This image serves as a technical demonstration of an AI model's **information retention and recall within an extended reasoning chain**. Here, "long-context chain-of-thought" refers to the model holding a piece of self-generated information (a fact about the Alamo) in its context while it performs a separate, unrelated task (solving math problems), and then accurately retrieving and restating that information.
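The test structure described above (state a fact, solve unrelated problems, restate the fact) can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the prompt wording, the function names `build_recall_prompt`, `normalize`, and `recall_score`, and the use of a string-similarity ratio as the recall metric are all hypothetical and are not taken from the screenshot.

```python
import re
from difflib import SequenceMatcher


def build_recall_prompt(math_questions):
    """Assemble a hypothetical prompt: state a fact, solve problems, restate the fact."""
    steps = ["1. State one historical fact."]
    # Number the intermediate (unrelated) math tasks starting at 2.
    steps += [f"{i}. Solve: {q}" for i, q in enumerate(math_questions, start=2)]
    # The final step asks for the self-generated fact from step 1 again.
    steps.append(f"{len(math_questions) + 2}. Restate the fact from step 1 exactly.")
    return "\n".join(steps)


def normalize(text):
    """Lowercase and drop punctuation so trivial formatting differences are ignored."""
    return " ".join(re.findall(r"[a-z0-9]+", text.lower()))


def recall_score(initial_fact, restated_fact):
    """Return a similarity ratio in [0, 1] between the original and restated fact."""
    matcher = SequenceMatcher(None, normalize(initial_fact), normalize(restated_fact))
    return matcher.ratio()


if __name__ == "__main__":
    # Placeholder questions and facts; the real ones in the screenshot are not reproduced here.
    print(build_recall_prompt(["2 + 2", "3 * 5"]))
    print(recall_score("Event X happened in year Y.", "event x happened in year y"))
```

A score of 1.0 means the restated fact matches the original after normalization. Lower scores indicate drift, and any pass threshold would be a design choice for the evaluator rather than something the screenshot specifies.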

The successful restatement suggests that the model is not simply parroting the prompt: the fact was generated by the model itself, so restating it requires retrieving earlier output from its own context after the intervening steps. This capability matters for complex, multi-step tasks in which an AI must remember earlier decisions, facts, or constraints while working through later steps. The truncation of the math questions suggests that this screenshot focuses on validating recall, not on the mathematical reasoning itself. The highlighted text acts as a visual reference point for checking the restated fact against the original.