
## Line Chart: BLEU Score vs. Sentence Length for RNN Models

### Overview

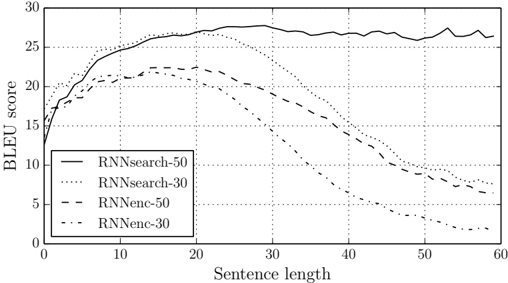

The image is a line chart comparing the BLEU scores of four Recurrent Neural Network (RNN) models as a function of input sentence length. It shows how each model's performance holds up or degrades as sentences become longer.
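A curve like this is typically produced by grouping test sentences by length and scoring each group separately. The sketch below is one way to do that under stated assumptions: one reference translation per sentence, 10-word buckets keyed on source length, and unsmoothed corpus BLEU (clipped n-gram precision up to 4-grams, geometric mean, brevity penalty). The function names and the bucket width are hypothetical, and the binning actually used for this chart is not stated here. For real evaluation, an established tool such as sacreBLEU is the safer choice.

```python
import math
from collections import Counter, defaultdict


def ngrams(tokens, n):
    """Count all n-grams (as tuples) in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def corpus_bleu(hypotheses, references, max_n=4):
    """Unsmoothed corpus BLEU (0-100), assuming one reference per hypothesis."""
    matches = [0] * max_n
    totals = [0] * max_n
    hyp_len = ref_len = 0
    for hyp, ref in zip(hypotheses, references):
        hyp_len += len(hyp)
        ref_len += len(ref)
        for n in range(1, max_n + 1):
            hyp_counts = ngrams(hyp, n)
            ref_counts = ngrams(ref, n)
            # Clipping: a hypothesis n-gram is credited at most as often as it occurs in the reference.
            matches[n - 1] += sum(min(c, ref_counts[g]) for g, c in hyp_counts.items())
            totals[n - 1] += max(len(hyp) - n + 1, 0)
    if min(matches) == 0:
        return 0.0  # any zero precision makes the unsmoothed geometric mean zero
    log_precision = sum(math.log(m / t) for m, t in zip(matches, totals)) / max_n
    # Brevity penalty: punish hypotheses shorter than the references overall.
    brevity = 1.0 if hyp_len > ref_len else math.exp(1 - ref_len / hyp_len)
    return 100 * brevity * math.exp(log_precision)


def bleu_by_length(sources, hypotheses, references, bucket_width=10):
    """Bucket sentences by source length and compute corpus BLEU per bucket."""
    buckets = defaultdict(lambda: ([], []))
    for src, hyp, ref in zip(sources, hypotheses, references):
        key = len(src) // bucket_width * bucket_width  # e.g. lengths 10..19 -> 10
        buckets[key][0].append(hyp)
        buckets[key][1].append(ref)
    return {k: corpus_bleu(h, r) for k, (h, r) in sorted(buckets.items())}
```

Plotting the returned dictionary (bucket start on the x-axis, BLEU on the y-axis) for each model gives one line per series, as in the chart.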

### Components/Axes

* **Chart Type:** Line graph with multiple series.
* **Y-Axis:**
  * **Label:** "BLEU score"
  * **Scale:** Linear, ranging from 0 to 30.
  * **Major Ticks:** 0, 5, 10, 15, 20, 25, 30.
* **X-Axis:**
  * **Label:** "Sentence length"
  * **Scale:** Linear, ranging from 0 to 60.
  * **Major Ticks:** 0, 10, 20, 30, 40, 50, 60.
* **Legend:** Located in the bottom-left quadrant of the chart area. It defines four data series:
  1. **RNNsearch-50:** Represented by a solid black line.
  2. **RNNsearch-30:** Represented by a dotted line.
  3. **RNNenc-50:** Represented by a dashed line.
  4. **RNNenc-30:** Represented by a dash-dot line.
* **Grid:** A light grid is present, aligned with the major ticks on both axes.

### Detailed Analysis

**Trend Verification & Data Point Extraction (Approximate Values):**

1. **RNNsearch-50 (Solid Line):**
   * **Trend:** Starts low, rises steeply to a high plateau, and maintains high performance with minor fluctuations across all sentence lengths.
   * **Key Points:** Starts at ~13 (length 0). Rises to ~24 by length 10. Peaks and stabilizes between ~26 and ~28 from length 20 onward. Ends at ~26 (length 60).

2. **RNNsearch-30 (Dotted Line):**
   * **Trend:** Similar initial rise to RNNsearch-50, but begins a steady decline after peaking around length 20.
   * **Key Points:** Starts at ~15 (length 0). Peaks at ~27 around length 20. Declines steadily to ~7 by length 60.

3. **RNNenc-50 (Dashed Line):**
   * **Trend:** Rises to a moderate peak and then declines, performing worse than both RNNsearch models but better than RNNenc-30.
   * **Key Points:** Starts at ~14 (length 0). Peaks at ~22 around length 15-20. Declines to ~6 by length 60.

4. **RNNenc-30 (Dash-Dot Line):**
   * **Trend:** Shows the poorest performance and the most severe degradation with increasing sentence length.
   * **Key Points:** Starts at ~13 (length 0). Peaks at ~21 around length 15. Declines sharply to ~2 by length 60.
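The approximate key points above can be condensed into a single number per series: the fraction of its peak BLEU score that the model still reaches at sentence length 60. This is a small worked calculation on the values read off the chart, not data from the underlying experiment; using 27 as the RNNsearch-50 peak (the midpoint of its ~26 to ~28 plateau) is an assumption.

```python
# Approximate values read off the chart (see the key points above).
# Each entry is (peak BLEU, BLEU at sentence length 60).
# Assumption: RNNsearch-50's ~26 to ~28 plateau is represented by its midpoint, 27.
APPROX_POINTS = {
    "RNNsearch-50": (27, 26),
    "RNNsearch-30": (27, 7),
    "RNNenc-50": (22, 6),
    "RNNenc-30": (21, 2),
}


def retention(peak: float, end: float) -> float:
    """Fraction of the peak BLEU score a model still achieves at length 60."""
    return end / peak


for name, (peak, end) in APPROX_POINTS.items():
    print(f"{name:<13} peak ~{peak:>2}  end ~{end:>2}  retained {retention(peak, end):.0%}")
```

This prints retention of 96% for RNNsearch-50, 26% for RNNsearch-30, 27% for RNNenc-50, and 10% for RNNenc-30: only RNNsearch-50 keeps most of its peak score, while the other three keep roughly a quarter or less.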

**Spatial Grounding:** The legend sits in the lower-left, where it does not obscure any data: every series starts at a BLEU score of ~13 or higher at short sentence lengths, and the low scores appear only toward the right side of the chart.

### Key Observations

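1. **Model Architecture Superiority:** Each "RNNsearch" model outperforms its "RNNenc" counterpart with the same suffix at nearly every sentence length, and the gap widens as sentences get longer. Only at the very shortest lengths do the curves overlap (RNNenc-50 starts at ~14 versus ~13 for RNNsearch-50).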

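2. **Training-Length Impact:** For both model types, the "-50" variant clearly outperforms the "-30" variant on longer sentences, while the two are close on short ones (both RNNsearch models peak at ~27). In Bahdanau et al. (2014), the suffix denotes the maximum sentence length in the training data (30 or 50 words), not a context-window or hidden-state size.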

3. **Critical Failure Point:** The RNNenc-30 model's performance falls steeply for sentences longer than 30 words, reaching a BLEU score of only ~2 by length 60.

4. **Robustness:** RNNsearch-50 is the only model that is robust to sentence length: it holds a ~26 to ~28 plateau from length 20 onward and still scores ~26 at length 60.

### Interpretation

This chart provides a clear technical comparison of sequence-to-sequence model architectures for tasks like machine translation, where BLEU is a standard metric.

* **What the data suggests:** The "RNNsearch" architecture, an attention-based model, copes with long sentences far better than the basic "RNNenc" encoder-decoder. The likely reason is a bottleneck: RNNenc must compress the entire source sentence into one fixed-length vector, whereas the attention mechanism builds a new weighted summary of the encoder states for each output word, letting the model focus on the relevant parts of the input regardless of sentence length.

* **How elements relate:** The gap between the "-50" and "-30" variants reflects training on longer sentences (up to 50 versus 30 words, if the suffixes follow Bahdanau et al.). Longer training sentences help both architectures, but they do not remove RNNenc's weakness on long sequences: RNNenc-50 still falls from ~22 to ~6, while RNNsearch-50 stays nearly flat.

* **Notable Anomalies/Outliers:** The near-total failure of RNNenc-30 on long sentences (~2 BLEU at length 60) is the most extreme case in the chart. It points to a basic limit on how much information a fixed-length encoding can retain over long sequences, which is the problem the RNNsearch architecture was designed to address.

* **Underlying Message:** The chart is likely from the paper "Neural Machine Translation by Jointly Learning to Align and Translate" (Bahdanau, Cho, and Bengio, 2014), which introduced attention for neural machine translation. It serves as empirical evidence that attention-based models handle variable-length sequences better than vanilla encoder-decoder RNNs, a result that marked a major breakthrough in the field.
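The contrast between a fixed-length summary and attention, described under "What the data suggests", can be sketched in a few lines. This is a toy illustration under stated assumptions, not the paper's model: `fixed_context` stands in for an encoder-decoder that hands the decoder one summary vector (here simply the last encoder state), and `attention_context` recomputes a softmax-weighted average of all encoder states for each decoder step. The dot-product score is a simplification swapped in for the small feedforward alignment network used by Bahdanau et al.; vectors are plain Python lists, and all names are hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def fixed_context(encoder_states):
    """Encoder-decoder style (simplified): one summary vector, computed once per sentence."""
    return encoder_states[-1]


def attention_context(decoder_state, encoder_states):
    """Attention style: a fresh weighted average of all encoder states per output step.

    Assumption: dot-product scoring replaces the feedforward alignment model.
    """
    weights = softmax([dot(decoder_state, h) for h in encoder_states])
    dim = len(encoder_states[0])
    context = [sum(w * h[d] for w, h in zip(weights, encoder_states)) for d in range(dim)]
    return context, weights


states = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # toy encoder states, one per source word
context, weights = attention_context([0.0, 5.0], states)
print(weights)
```

With the decoder state `[0.0, 5.0]`, almost all of the weight goes to the second and third encoder states, and a different decoder state would shift it elsewhere. `fixed_context`, by contrast, returns the same vector no matter which target word is being produced, so everything about a long sentence has to fit into it.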