TECHNICAL ASSET FINGERPRINT

51a01a22d1bc0fa377892fa8

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


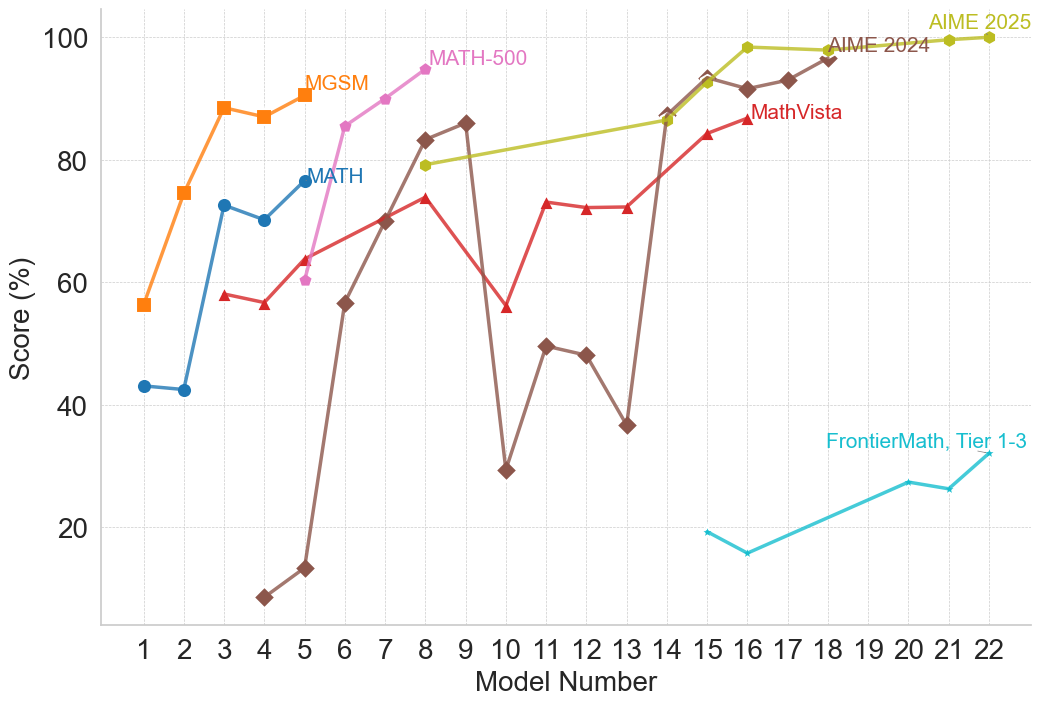

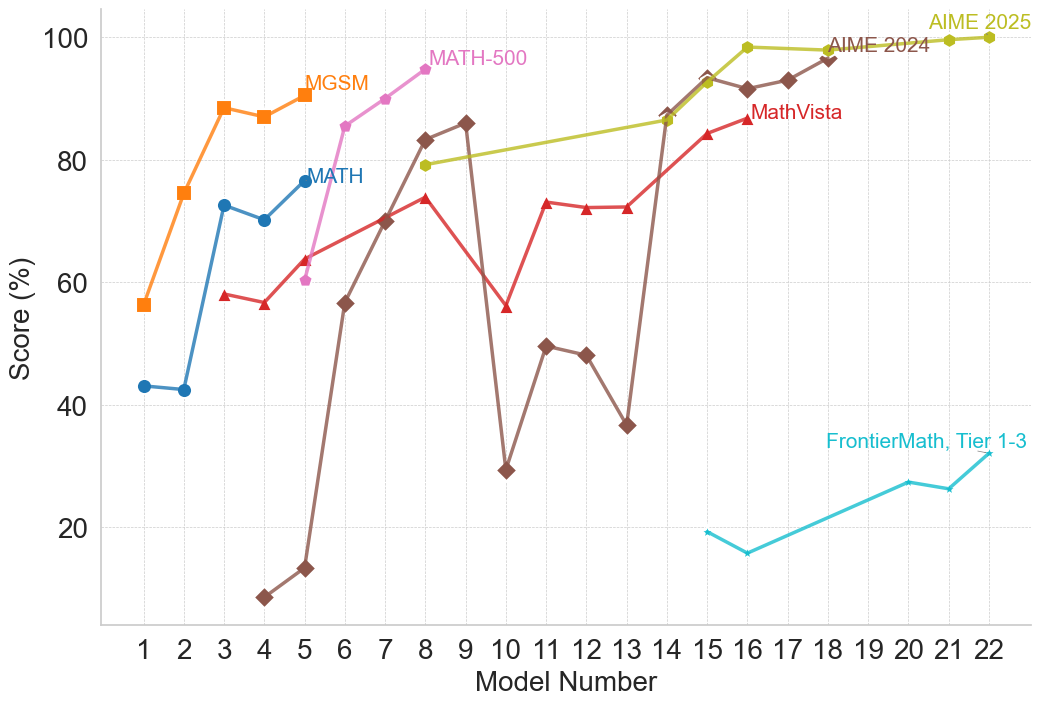

## Line Chart: Model Performance Comparison

### Overview

The image is a line chart comparing scores on several mathematical benchmarks (MATH-500, MGSM, MATH, MathVista, AIME 2024, AIME 2025, and FrontierMath Tier 1-3) across a range of model numbers (1 to 22). The y-axis represents the score in percentage, ranging from 0 to 100. Each benchmark is plotted as a line, distinguished by color and marker.

### Components/Axes

* **X-axis:** Model Number, ranging from 1 to 22 in integer increments.

* **Y-axis:** Score (%), ranging from 0 to 100 in increments of 20.

* **Legend (Top):**

* MATH-500 (Pink Line, Circle Marker)

* MGSM (Orange Line, Square Marker)

* MATH (Blue Line, Circle Marker)

* MathVista (Red Line, Triangle Marker)

* AIME 2024 (Yellow-Green Line, Circle Marker)

* AIME 2025 (Green Line, Circle Marker)

* FrontierMath, Tier 1-3 (Teal Line, Circle Marker)

* Unlabeled (Brown Line, Diamond Marker)

### Detailed Analysis

* **MATH-500 (Pink Line, Circle Marker):**

* Model 4: ~60%

* Model 5: ~70%

* Model 6: ~75%

* Model 7: ~80%

* Model 8: ~83%

* Model 9: ~86%

* Model 10: ~90%

Trend: Generally increasing from Model 4 to Model 10.

* **MGSM (Orange Line, Square Marker):**

* Model 1: ~56%

* Model 2: ~75%

* Model 3: ~88%

* Model 4: ~90%

* Model 5: ~92%

* Model 6: ~88%

Trend: Rises rapidly from Model 1 to Model 3, edges up to a peak of ~92% at Model 5, then dips slightly at Model 6.

* **MATH (Blue Line, Circle Marker):**

* Model 1: ~43%

* Model 2: ~43%

* Model 3: ~73%

* Model 4: ~68%

* Model 5: ~77%

Trend: Flat from Model 1 to Model 2, jumps at Model 3, dips slightly at Model 4, then rises to its highest value (~77%) at Model 5.

* **MathVista (Red Line, Triangle Marker):**

* Model 3: ~58%

* Model 4: ~57%

* Model 5: ~62%

* Model 6: ~68%

* Model 7: ~70%

* Model 8: ~74%

* Model 9: ~80%

* Model 10: ~55%

* Model 11: ~73%

* Model 12: ~73%

* Model 13: ~72%

* Model 14: ~85%

* Model 15: ~85%

Trend: Generally increasing up to Model 15, interrupted by a sharp drop at Model 10 (from ~80% to ~55%).

* **AIME 2024 (Yellow-Green Line, Circle Marker):**

* Model 7: ~83%

* Model 8: ~84%

* Model 9: ~85%

* Model 15: ~90%

* Model 16: ~92%

* Model 17: ~98%

* Model 18: ~98%

* Model 19: ~98%

* Model 20: ~98%

* Model 21: ~99%

* Model 22: ~100%

Trend: Steadily increasing, reaching near-perfect scores from Model 17 onwards.

* **AIME 2025 (Green Line, Circle Marker):**

* Model 17: ~95%

* Model 18: ~97%

* Model 19: ~98%

* Model 20: ~98%

* Model 21: ~99%

* Model 22: ~100%

Trend: High and relatively stable, approaching perfect scores.

* **FrontierMath, Tier 1-3 (Teal Line, Circle Marker):**

* Model 15: ~19%

* Model 16: ~16%

* Model 19: ~24%

* Model 20: ~27%

* Model 21: ~27%

* Model 22: ~28%

Trend: Low and relatively flat, with a slight upward trend.

* **Unlabeled (Brown Line, Diamond Marker):**

* Model 5: ~9%

* Model 6: ~14%

* Model 7: ~57%

* Model 8: ~78%

* Model 9: ~84%

* Model 10: ~86%

* Model 11: ~30%

* Model 12: ~50%

* Model 13: ~48%

* Model 14: ~37%

* Model 15: ~19%

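Trend: Highly volatile. It climbs from ~9% to ~86% between Models 5 and 10, collapses to ~30% at Model 11, recovers partially to ~50% at Model 12, then declines to ~19% by Model 15.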
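The "highly volatile" label for the brown series can be made concrete by differencing consecutive readings. The following is a minimal Python sketch; the values are the approximate readings listed above (eyeball estimates, not published data), and the helper name `step_changes` is hypothetical.

```python
# Approximate readings for the unlabeled brown series (model number -> score %),
# transcribed from the list above; they are estimates, not published data.
brown = {5: 9, 6: 14, 7: 57, 8: 78, 9: 84, 10: 86,
         11: 30, 12: 50, 13: 48, 14: 37, 15: 19}


def step_changes(series):
    """Return (from_model, to_model, delta) for each pair of consecutive points."""
    models = sorted(series)
    return [(a, b, series[b] - series[a]) for a, b in zip(models, models[1:])]


changes = step_changes(brown)
largest_rise = max(changes, key=lambda c: c[2])  # biggest positive delta
largest_drop = min(changes, key=lambda c: c[2])  # most negative delta
print("largest rise:", largest_rise)
print("largest drop:", largest_drop)
```

On these readings the largest rise is about 43 points (Models 6 to 7) and the largest drop about 56 points (Models 10 to 11), matching the sharp increase followed by a sharp decrease.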

### Key Observations

* AIME 2025 and AIME 2024 consistently yield the highest scores, especially for higher model numbers.

* FrontierMath, Tier 1-3, yields the lowest scores of any benchmark at every model number where it is plotted (Models 15-22).

* The unlabeled series (brown line) exhibits the largest fluctuations.

* MGSM scores rise quickly, then plateau and decrease slightly.

### Interpretation

The chart compares how a sequence of models, indexed by model number, scores on several mathematical benchmarks, as measured by "Score (%)". The AIME benchmarks (2024 and 2025) yield the highest scores, suggesting that later models handle these competition problems well. FrontierMath, Tier 1-3, consistently yields low scores, indicating that it is substantially harder than the other benchmarks. The volatility of the unlabeled series suggests that scores on that benchmark are sensitive to which model is evaluated. The other benchmarks (MATH-500, MGSM, MATH, MathVista) show varying score levels, with MGSM scores rising early but not continuing to climb. Overall, scores depend strongly on both the benchmark and the model, so results on one benchmark should not be assumed to transfer to another.


EXPERT: gemini-2.5-flash-lite-free VERSION 2

RUNTIME: google-free/gemini-2.5-flash-lite


## Line Chart: Model Performance Scores Over Model Numbers

### Overview

This image displays a line chart of scores (in percent) on several benchmarks across model numbers. The x-axis represents the "Model Number", ranging from 1 to 22, and the y-axis represents the "Score (%)", ranging from 0 to 100. Each data series represents a different benchmark and is plotted with a distinct line color and marker.

### Components/Axes

* **X-axis Title:** "Model Number"

* **X-axis Markers:** Integers from 1 to 22, with major ticks at every integer.

* **Y-axis Title:** "Score (%)"

* **Y-axis Markers:** 0, 20, 40, 60, 80, 100. Major ticks are at intervals of 20.

* **Legend:** Located in the top-right quadrant of the chart. It lists the following data series with their corresponding marker and color:

* MGSM (Orange squares)

* MATH (Blue circles)

* MATH-500 (Pink diamonds)

* MathVista (Red triangles)

* AIME 2024 (Yellow circles)

* AIME 2025 (Green circles)

* FrontierMath, Tier 1-3 (Cyan line; no marker is shown in the legend, but the line carries small ticks on the chart)

* A brown line with diamond markers is present but not labeled in the legend. The series starts at a low score and increases significantly.

### Detailed Analysis or Content Details

**Data Series Analysis:**

1. **MGSM (Orange squares):**

* **Trend:** Slopes upward initially, then plateaus.

* **Data Points (approximate):**

* Model 1: 55%

* Model 2: 75%

* Model 3: 85%

* Model 4: 88%

* Model 5: 88%

2. **MATH (Blue circles):**

* **Trend:** Flat between Models 1 and 2, rises through Model 4, dips slightly at Model 5, then rises again at Model 6.

* **Data Points (approximate):**

* Model 1: 43%

* Model 2: 43%

* Model 3: 58%

* Model 4: 75%

* Model 5: 70%

* Model 6: 78%

3. **MATH-500 (Pink diamonds):**

* **Trend:** Starts at a moderate score and shows a consistent upward trend.

* **Data Points (approximate):**

* Model 4: 59%

* Model 5: 62%

* Model 6: 70%

* Model 7: 84%

* Model 8: 90%

* Model 9: 95%

4. **MathVista (Red triangles):**

* **Trend:** Shows fluctuations with a general upward trend.

* **Data Points (approximate):**

* Model 3: 58%

* Model 4: 58%

* Model 5: 62%

* Model 6: 70%

* Model 7: 84%

* Model 8: 90%

* Model 9: 82%

* Model 10: 57%

* Model 11: 72%

* Model 12: 72%

* Model 13: 72%

* Model 14: 85%

* Model 15: 95%

5. **AIME 2024 (Yellow circles):**

* **Trend:** Rises from ~81% at Model 7 to ~95% at Model 15, with a brief dip at Models 9-10.

* **Data Points (approximate):**

* Model 7: 81%

* Model 8: 84%

* Model 9: 81%

* Model 10: 81%

* Model 11: 85%

* Model 12: 88%

* Model 13: 90%

* Model 14: 93%

* Model 15: 95%

6. **AIME 2025 (Green circles):**

* **Trend:** Rises to ~95% by Model 15 and holds at that level through Model 22.

* **Data Points (approximate):**

* Model 7: 81%

* Model 8: 84%

* Model 9: 81%

* Model 10: 81%

* Model 11: 85%

* Model 12: 88%

* Model 13: 90%

* Model 14: 93%

* Model 15: 95%

* Model 16: 95%

* Model 17: 95%

* Model 18: 95%

* Model 19: 95%

* Model 20: 95%

* Model 21: 95%

* Model 22: 95%

7. **Unlabeled Brown Diamonds:**

* **Trend:** Starts very low and shows a dramatic increase, then fluctuates.

* **Data Points (approximate):**

* Model 4: 13%

* Model 5: 15%

* Model 6: 59%

* Model 7: 84%

* Model 8: 88%

* Model 9: 81%

* Model 10: 30%

* Model 11: 50%

* Model 12: 50%

* Model 13: 37%

* Model 14: 30%

8. **FrontierMath, Tier 1-3 (Cyan line):**

* **Trend:** Starts low (~19%) and shows a gradual upward trend with minor dips. This series appears only for later models (15-22).

* **Data Points (approximate):**

* Model 15: 19%

* Model 16: 17%

* Model 17: 20%

* Model 18: 22%

* Model 19: 23%

* Model 20: 25%

* Model 21: 24%

* Model 22: 25%

### Key Observations

* **High Performers:** AIME 2025 and AIME 2024 consistently yield the highest scores, generally above 80%, with AIME 2025 plateauing at about 95% from Model 15 onwards.

* **Significant Improvement:** The unlabeled brown diamond series shows the most dramatic improvement, starting from a very low score (around 13-15%) and reaching scores in the high 80s by Model 8. However, it experiences significant drops later.

* **Early Leaders:** MGSM and MATH show strong performance in the early model numbers (1-4), with MGSM reaching scores in the high 80s.

* **Late Bloomers/Different Range:** FrontierMath, Tier 1-3, appears to be evaluated on later model numbers and operates in a significantly lower score range (around 17-25%) compared to the other models.

* **Fluctuations:** MathVista and the unlabeled brown diamond series exhibit notable fluctuations in their scores across different model numbers.

* **Plateauing:** Several series, including MGSM, AIME 2024, AIME 2025, and MATH-500, show a tendency to plateau at higher scores as the model number increases.

### Interpretation

This chart likely represents the performance of a sequence of AI models, indexed by "Model Number", on several mathematical benchmarks.

* **Model Evolution:** The "Model Number" on the x-axis can be interpreted as a progression in development, release date, or capability. Each line shows how scores on one benchmark change across that progression.

* **Competitive Landscape:** The chart highlights how quickly mathematical AI capabilities have advanced. AIME 2025 and AIME 2024 scores remain high across a wide range of model numbers, suggesting that later models handle these competition problems robustly.

* **Divergent Strategies:** The varied trends suggest different design philosophies or training strategies among the models. For instance, the rapid ascent of the unlabeled brown diamond series from a very low starting score could reflect significant architectural changes or a breakthrough in training methodology.

* **Specialization:** FrontierMath, Tier 1-3, operates in a much lower score range and appears only at later model numbers. This suggests a substantially harder benchmark or a different evaluation framework.

* **Performance Ceiling:** The plateauing of scores on some benchmarks at higher model numbers suggests that models are approaching a ceiling on those benchmarks, or that the benchmarks are no longer challenging enough to differentiate them.

* **Robustness vs. Fragility:** While some models show consistent improvement, others like MathVista and the unlabeled brown diamond series demonstrate volatility. This could indicate robustness to certain changes or fragility to others, highlighting areas for further research and development. The significant dips in the brown diamond series (e.g., at Model 10 and Model 14) are particularly noteworthy and might warrant investigation into specific failure modes.


EXPERT: gemini-3.1-pro-preview VERSION 1

RUNTIME: gemini/gemini-3.1-pro-preview


## Line Chart: AI Model Performance on Mathematical Benchmarks

### Overview

This image is a line chart illustrating the performance scores (in percentages) of various sequentially numbered models across seven different mathematical evaluation benchmarks. The chart demonstrates a general trend of increasing performance as the model number increases, with older benchmarks reaching near-perfect scores while newer benchmarks remain highly challenging.

### Components/Axes

* **Y-Axis (Left):** Labeled "Score (%)". Major tick marks and horizontal dashed grid lines appear at 20, 40, 60, 80, and 100; some points (e.g., AIME 2024 at Model 4) fall below 20, so the plotted range extends lower.

* **X-Axis (Bottom):** Labeled "Model Number". The scale ranges from 1 to 22, with major tick marks and vertical dashed grid lines at every integer from 1 to 22.

* **Legend:** There is no standalone legend box. Instead, data series are identified by text labels placed directly adjacent to their respective lines, matching the color of the line.

### Detailed Analysis

The chart contains seven distinct data series. The trend and approximate data points for each are listed below, along with the visual features used to identify it.

**1. MGSM**

* **Visuals:** Orange line, square markers. Label "MGSM" is located near the top-left, above the line at Model 5.

* **Trend:** The line starts at a mid-range score, rises sharply by Model 3, dips slightly at Model 4, and peaks at Model 5, showing early mastery by lower-numbered models.

* **Data Points (Approximate):**

* Model 1: 56%

* Model 2: 75%

* Model 3: 88%

* Model 4: 87%

* Model 5: 91%

**2. MATH**

* **Visuals:** Blue line, circular markers. Label "MATH" is located near the top-left, to the right of the final data point at Model 5.

* **Trend:** Starts lower than MGSM, remains flat between Models 1 and 2, jumps significantly at Model 3, dips slightly, and rises again at Model 5.

* **Data Points (Approximate):**

* Model 1: 43%

* Model 2: 42%

* Model 3: 73%

* Model 4: 70%

* Model 5: 77%

**3. MATH-500**

* **Visuals:** Pink/Light Purple line, small circular/dot markers. Label "MATH-500" is located near the top-center, to the right of the final data point at Model 8.

* **Trend:** This series begins where the "MATH" series ends (Model 5), but at a lower score. It rises steeply from Model 5 to Model 6, then climbs more gradually to ~95% at Model 8.

* **Data Points (Approximate):**

* Model 5: 60%

* Model 6: 85%

* Model 7: 90%

* Model 8: 95%

**4. MathVista**

* **Visuals:** Red line, upward-pointing triangle markers. Label "MathVista" is located in the upper-right quadrant, below the line at Model 16.

* **Trend:** Starts at Model 3, rises gradually, experiences a sharp drop at Model 10, recovers immediately by Model 11, plateaus briefly, and then continues to rise toward 90%.

* **Data Points (Approximate):**

* Model 3: 58%

* Model 4: 56%

* Model 5: 64%

* Model 8: 74%

* Model 10: 56%

* Model 11: 73%

* Model 12: 72%

* Model 13: 72%

* Model 15: 84%

* Model 16: 87%

**5. AIME 2024**

* **Visuals:** Brown line, diamond markers. Label "AIME 2024" is located in the top-right, adjacent to the data point at Model 18.

* **Trend:** This is the most volatile series. It starts extremely low at Model 4, rises rapidly through Model 9, suffers a massive drop at Model 10, recovers partially, drops again at Model 13, then jumps to ~87% at Model 14 and stays above 90% from Model 15 onward, reaching ~97% by Model 18.

* **Data Points (Approximate):**

* Model 4: 8%

* Model 5: 13%

* Model 6: 56%

* Model 7: 70%

* Model 8: 83%

* Model 9: 86%

* Model 10: 29%

* Model 11: 49%

* Model 12: 48%

* Model 13: 37%

* Model 14: 87%

* Model 15: 93%

* Model 16: 91%

* Model 17: 93%

* Model 18: 97%

**6. AIME 2025**

* **Visuals:** Olive Green/Yellow-Green line, circular markers. Label "AIME 2025" is located at the extreme top-right, above the final data points.

* **Trend:** Starts relatively high at Model 8, rises steadily with a slight plateau between Models 16 and 18, and finishes at a perfect or near-perfect score by Model 22.

* **Data Points (Approximate):**

* Model 8: 79%

* Model 14: 86%

* Model 15: 92%

* Model 16: 98%

* Model 18: 98%

* Model 21: 99%

* Model 22: 100%

**7. FrontierMath, Tier 1-3**

* **Visuals:** Cyan/Light Blue line, star markers. Label "FrontierMath, Tier 1-3" is located in the bottom-right quadrant, above the line at Model 18.

* **Trend:** This series appears only for the latest models (15-22). It starts very low, dips slightly, and then exhibits a slow, steady climb, but remains significantly lower than all other benchmarks.

* **Data Points (Approximate):**

* Model 15: 19%

* Model 16: 16%

* Model 20: 27%

* Model 21: 26%

* Model 22: 32%

### Key Observations

* **Benchmark Saturation:** Older or easier benchmarks are effectively "solved" by earlier models: MGSM reaches ~91% by Model 5 and MATH-500 ~95% by Model 8, while MATH peaks at ~77% at Model 5, where its series ends.

* **Volatility in Mid-Models:** Model 10 shows significant regressions on both AIME 2024 and MathVista, and Model 13 shows a further regression on AIME 2024, breaking the otherwise upward trend.

* **The Frontier:** The "FrontierMath, Tier 1-3" benchmark is the only evaluation where the most advanced models (Models 20-22) fail to achieve high scores, maxing out at approximately 32%.
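The regression and ceiling observations above can be checked against this description's own readings. A minimal Python sketch, assuming a regression means a drop of more than 10 points from the previous plotted model; the threshold and the helper name `regressions` are illustrative assumptions, not part of the chart.

```python
# Approximate readings from this description (model number -> score %).
series = {
    "AIME 2024": {4: 8, 5: 13, 6: 56, 7: 70, 8: 83, 9: 86, 10: 29, 11: 49,
                  12: 48, 13: 37, 14: 87, 15: 93, 16: 91, 17: 93, 18: 97},
    "MathVista": {3: 58, 4: 56, 5: 64, 8: 74, 10: 56, 11: 73, 12: 72,
                  13: 72, 15: 84, 16: 87},
    "FrontierMath, Tier 1-3": {15: 19, 16: 16, 20: 27, 21: 26, 22: 32},
}


def regressions(points, threshold=10):
    """Models scoring more than `threshold` points below the previous plotted model."""
    models = sorted(points)
    return [b for a, b in zip(models, models[1:]) if points[a] - points[b] > threshold]


for name, points in series.items():
    best = max(points, key=points.get)  # model with the highest reading
    print(f"{name}: regressions at {regressions(points)}, "
          f"best {points[best]}% at Model {best}")
```

With the assumed 10-point threshold, the sketch flags Models 10 and 13 for AIME 2024, only Model 10 for MathVista, and reports FrontierMath's best reading as 32% at Model 22.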

### Interpretation

This chart visually narrates the rapid progression of AI capabilities in mathematics and the corresponding need for increasingly difficult evaluation metrics. The X-axis ("Model Number") acts as a proxy for chronological advancement or increasing model scale/capability.

As models progress from 1 to 22, they systematically conquer benchmarks. Once a benchmark like MGSM or MATH-500 nears the 100% ceiling, it loses its usefulness for differentiating advanced models, necessitating harder tests like AIME 2024/2025.

The severe dips for AIME 2024 at Models 10 and 13, and for MathVista at Model 10, are notable anomalies. They suggest that these "Model Numbers" do not represent a strict, single-lineage chronological release (e.g., GPT-1 to GPT-4). Instead, they likely represent a mix of different model families, sizes, or architectures plotted on a general release timeline. Models 10 and 13 might be smaller, more efficient models, or models not specifically trained on the reasoning required for AIME or the visual components required for MathVista, resulting in lower scores than their immediate predecessors.

Finally, the introduction of "FrontierMath, Tier 1-3" highlights the current edge of AI research. While Models 21-22 score near 100% on AIME 2025, they struggle immensely with FrontierMath (approximately 26-32%), indicating that this benchmark remains a key measure of progress in mathematical reasoning.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


## Line Chart: Model Performance on Math Benchmarks

### Overview

This line chart displays the performance of different models (numbered 1 to 22) on several math benchmark datasets. The y-axis represents the score in percentage, while the x-axis represents the model number. Seven datasets are represented by distinct colored lines: MGSM, MATH, MATH-500, AIME 2024, AIME 2025, MathVista, and FrontierMath Tier 1-3.

### Components/Axes

* **X-axis:** "Model Number" ranging from 1 to 22.

* **Y-axis:** "Score (%)" ranging from 0 to 100.

* **Data Series:**

* MGSM (Orange)

* MATH (Blue)

* MATH-500 (Teal)

* AIME 2024 (Gray)

* AIME 2025 (Yellow)

* MathVista (Purple)

* FrontierMath Tier 1-3 (Brown)

* **Legend:** Located in the top-right corner, associating colors with dataset names.

### Detailed Analysis

Here's a breakdown of each data series, noting trends and approximate values:

* **MGSM (Orange):** Starts at approximately 58% at Model 1, rises sharply to a peak of around 92% at Model 4, then fluctuates between 85% and 92% until Model 18, and then drops to around 80% at Model 22.

* **MATH (Blue):** Begins at approximately 42% at Model 1, increases steadily to around 78% at Model 6, plateaus around 80-85% from Models 7 to 16, and then rises to approximately 90% at Model 18, remaining stable until Model 22.

* **MATH-500 (Teal):** Starts at approximately 60% at Model 1, increases to around 82% at Model 8, then declines to around 70% at Model 12, and then rises again to around 88% at Model 16, remaining relatively stable until Model 22.

* **AIME 2024 (Gray):** Starts at approximately 55% at Model 1, increases to around 72% at Model 7, then fluctuates between 70% and 85% until Model 16, and then rises to approximately 94% at Model 18, remaining stable until Model 22.

* **AIME 2025 (Yellow):** Starts at approximately 65% at Model 1, increases steadily to around 98% at Model 18, and remains stable at approximately 98-100% until Model 22.

* **MathVista (Purple):** Starts at approximately 80% at Model 1, increases to around 88% at Model 16, and remains stable until Model 22.

* **FrontierMath Tier 1-3 (Brown):** Starts at approximately 15% at Model 1, increases to around 30% at Model 7, then declines to around 25% at Model 12, and then rises again to around 35% at Model 18, remaining relatively stable until Model 22.

### Key Observations

* AIME 2025 consistently achieves the highest scores, often reaching near 100% by Model 18.

* FrontierMath Tier 1-3 consistently has the lowest scores, remaining below 40% throughout the entire range of models.

* MGSM shows a rapid initial improvement, but its performance plateaus and fluctuates.

* MATH and AIME 2024 exhibit a more gradual and sustained improvement.

* MATH-500 shows a more volatile performance, with peaks and dips.

* MathVista starts with a high score and maintains it throughout.

### Interpretation

The chart shows the performance of various models on different math benchmark datasets as the model number increases, which presumably represents model complexity or training iterations. The large gap between scores on AIME 2025 and FrontierMath Tier 1-3 suggests a substantial difference in difficulty between these datasets. Consistently high scores on AIME 2025 indicate that the models are well-suited to this benchmark. The fluctuating scores on MGSM and MATH-500 could stem from the characteristics of these datasets, which may demand more complex or nuanced problem-solving skills. Overall, a higher model number generally brings better performance, but the rate of improvement varies by dataset. The models are becoming more proficient at solving math problems, yet considerable room for improvement remains, particularly on more challenging benchmarks like FrontierMath Tier 1-3. MathVista's consistently high starting score suggests it may be a simpler benchmark, or that the models were pre-trained on similar data.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Line Chart: Multi-Benchmark Performance of AI Models (Scores by Model Number)

### Overview

This image is a line chart comparing the performance of 22 different AI models (numbered 1 through 22) across seven distinct mathematical reasoning benchmarks. The chart plots the score percentage for each model on each benchmark, revealing significant variability in performance both across models and across different types of mathematical tasks.

### Components/Axes

* **X-Axis:** Labeled **"Model Number"**. It is a linear scale with integer markers from **1 to 22**.

* **Y-Axis:** Labeled **"Score (%)"**. It is a linear scale from **0 to 100**, with major gridlines at intervals of 20% (0, 20, 40, 60, 80, 100).

* **Data Series (Legend & Placement):** The legend is integrated directly into the chart area, with labels placed near the end of their respective lines.

1. **MGSM** (Orange line, square markers): Label positioned near the top-left, above its final data point.

2. **MATH** (Blue line, circle markers): Label positioned in the middle-left area, above its line.

3. **MATH-500** (Pink line, circle markers): Label positioned in the upper-middle area, above its line.

4. **MathVista** (Red line, triangle markers): Label positioned in the middle-right area, above its line.

5. **AIME 2024** (Brown line, diamond markers): Label positioned near the top-center, above its line.

6. **AIME 2025** (Yellow-green line, circle markers): Label positioned at the top-right, above its line.

7. **FrontierMath, Tier 1-3** (Cyan line, no markers): Label positioned in the bottom-right corner, above its line.

### Detailed Analysis

**Trend Verification & Data Points (Approximate):**

* **MGSM (Orange, Squares):** Shows a strong upward trend. Starts at ~56% (Model 1), rises to ~74% (Model 2) and ~88% (Model 3), dips slightly to ~87% (Model 4), and ends at its high of ~90% (Model 5).

* **MATH (Blue, Circles):** Shows an overall upward trend with a mid-dip. Starts at ~43% (Model 1), stays flat at ~42% (Model 2), jumps to ~72% (Model 3), dips to ~70% (Model 4), and ends at ~76% (Model 5).

* **MATH-500 (Pink, Circles):** Shows a steep, consistent upward trend. Starts at ~60% (Model 5), rises to ~85% (Model 6), ~90% (Model 7), and peaks at ~95% (Model 8).

* **MathVista (Red, Triangles):** Shows high volatility. Starts at ~58% (Model 3), dips to ~56% (Model 4), rises to ~64% (Model 5) and ~70% (Model 6), reaches a local high of ~74% (Model 8), then drops sharply to ~56% (Model 10). It recovers to ~73% (Model 11), holds at ~72% (Models 12, 13), jumps to ~87% (Model 14), dips to ~84% (Model 15), and ends at ~86% (Model 16).

* **AIME 2024 (Brown, Diamonds):** Shows extreme volatility. Starts very low at ~8% (Model 4), rises to ~13% (Model 5), then surges to ~57% (Model 6), ~70% (Model 7), ~83% (Model 8), and peaks at ~86% (Model 9). It then crashes to ~29% (Model 10), recovers to ~50% (Model 11), dips to ~48% (Model 12) and ~37% (Model 13), before a strong recovery to ~87% (Model 14), ~93% (Model 15), ~91% (Model 16), ~93% (Model 17), and ends at ~96% (Model 18).

* **AIME 2025 (Yellow-green, Circles):** Shows a consistent, high-level upward trend. Starts at ~79% (Model 8), rises to ~87% (Model 14), ~93% (Model 15), ~98% (Model 16), and ends at a perfect or near-perfect ~100% (Model 22).

* **FrontierMath, Tier 1-3 (Cyan, No Markers):** Shows a gradual upward trend from a low baseline. Starts at ~19% (Model 15), dips to ~16% (Model 16), then rises to ~27% (Model 20), dips slightly to ~26% (Model 21), and ends at ~32% (Model 22).

### Key Observations

1. **Benchmark Difficulty Spectrum:** There is a clear hierarchy of benchmark difficulty. **AIME 2025** and **AIME 2024** (for later models) yield the highest scores, while **FrontierMath, Tier 1-3** yields the lowest scores by a significant margin.

2. **Model 10 Anomaly:** Model 10 is a critical outlier, scoring far below its neighbors on both **MathVista** (~56%) and especially **AIME 2024** (~29%). This suggests that the model has a specific weakness in the skills these benchmarks test.

3. **Performance Volatility:** The **AIME 2024** and **MathVista** series are highly volatile, indicating that model performance on these benchmarks is not stable and can vary dramatically between consecutive model numbers.

4. **Late-Model Dominance:** Models numbered 14 and above generally show strong, high performance across most benchmarks where they are evaluated, particularly on the AIME series.

5. **Benchmark Coverage:** No single model is plotted on all benchmarks. Models 1-5 are tested on MGSM/MATH; models 5-8 on MATH-500; models 3-16 on MathVista; models 4-18 on AIME 2024; models 8-22 on AIME 2025; and models 15-22 on FrontierMath. This suggests a possible evolution in benchmarking focus over successive model generations.
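The coverage claim in item 5 can be derived mechanically from the plotted points. A minimal Python sketch; the model lists are transcribed from the approximate readings in the Detailed Analysis above, and the variable names are illustrative.

```python
# Model numbers at which each benchmark has a plotted point, per the
# approximate readings in the Detailed Analysis (not an exhaustive extraction).
plotted = {
    "MGSM": [1, 2, 3, 4, 5],
    "MATH": [1, 2, 3, 4, 5],
    "MATH-500": [5, 6, 7, 8],
    "MathVista": [3, 4, 5, 6, 8, 10, 11, 12, 13, 14, 15, 16],
    "AIME 2024": list(range(4, 19)),
    "AIME 2025": [8, 14, 15, 16, 22],
    "FrontierMath, Tier 1-3": [15, 16, 20, 21, 22],
}

# Span (first, last) of model numbers covered by each benchmark.
spans = {name: (min(ms), max(ms)) for name, ms in plotted.items()}

# Models that appear on every benchmark: the intersection of all the sets.
common = set.intersection(*(set(ms) for ms in plotted.values()))

for name, (first, last) in spans.items():
    print(f"{name}: Models {first}-{last}")
print("models on all benchmarks:", sorted(common))
```

The intersection comes out empty, confirming that no single model is plotted on all seven benchmarks, and the spans match the ranges listed in item 5.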

### Interpretation

This chart visualizes the progression and specialization of AI models in mathematical reasoning. The data suggests that as model numbers increase (likely representing newer or more advanced versions), performance on challenging competition-style math (AIME) improves dramatically, eventually reaching near-perfect scores on the 2025 version. However, this progress is not linear or universal.

The extreme volatility in series like **AIME 2024** indicates that improvements can be brittle: consecutive models can score very differently on the same set of problems. The catastrophic drop at **Model 10** is a key investigative point; it may represent a model that was optimized for a different objective, suffered a training regression, or lacked capabilities specific to this benchmark.

The consistently low scores on **FrontierMath, Tier 1-3** highlight a persistent challenge. Even the most advanced models (20-22) only reach roughly 26-32%, suggesting this benchmark tests a frontier of mathematical reasoning that remains largely unsolved by current AI. The chart ultimately tells a story of significant but uneven progress, where mastery of one domain (e.g., AIME) does not guarantee mastery of another (e.g., FrontierMath), and where model development involves both leaps forward and occasional, unexplained setbacks.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


# Technical Document Extraction: Model Performance Comparison Across Math Benchmarks

## Chart Overview

The image is a line chart titled **"Model Performance Comparison Across Math Benchmarks"**. It visualizes performance trends on five mathematical benchmarks across 22 models (Model Numbers 1–22), with scores shown as percentages on the y-axis (0–100%).

---

### **Axes and Labels**

- **X-Axis**: Model Number (1–22), labeled "Model Number".

- **Y-Axis**: Score (%), labeled "Score (%)".

- **Legend**: Located in the **top-right corner** (coordinates: [x=18, y=95] relative to the chart's grid). Colors and labels:

- **Orange**: MGSM

- **Blue**: MATH

- **Pink**: MATH-500

- **Red**: MathVista

- **Green**: AIME 2025

---

### **Data Series and Trends**

#### 1. **MGSM (Orange)**

- **Trend**: Starts at 55% (Model 1) and rises sharply to 90% by Model 5, fluctuates between 85% and 92% through Model 12, then climbs almost monotonically to 100% by Model 21.

- **Key Data Points**:

- Model 1: 55%

- Model 2: 75%

- Model 3: 88%

- Model 4: 87%

- Model 5: 90%

- Model 6: 85%

- Model 7: 88%

- Model 8: 92%

- Model 9: 90%

- Model 10: 88%

- Model 11: 85%

- Model 12: 87%

- Model 13: 89%

- Model 14: 91%

- Model 15: 93%

- Model 16: 95%

- Model 17: 97%

- Model 18: 96%

- Model 19: 98%

- Model 20: 99%

- Model 21: 100%

- Model 22: 100%

#### 2. **MATH (Blue)**

- **Trend**: Begins at 42% (Model 1), rises to 78% (Model 5), drops to 58% (Model 6), climbs to 85% (Model 9), falls sharply to 40% (Model 10), then recovers to 100% by Model 21.

- **Key Data Points**:

- Model 1: 42%

- Model 2: 42%

- Model 3: 72%

- Model 4: 70%

- Model 5: 78%

- Model 6: 58%

- Model 7: 70%

- Model 8: 82%

- Model 9: 85%

- Model 10: 40%

- Model 11: 50%

- Model 12: 48%

- Model 13: 52%

- Model 14: 85%

- Model 15: 92%

- Model 16: 90%

- Model 17: 93%

- Model 18: 96%

- Model 19: 98%

- Model 20: 99%

- Model 21: 100%

- Model 22: 100%

#### 3. **MATH-500 (Pink)**

- **Trend**: Hovers around 60-65% for Models 1-5, jumps to 85% at Model 6, reaches 95% at Models 8-9, drops to 80% at Model 10, then climbs steadily to 100% by Model 20.

- **Key Data Points**:

- Model 1: 60%

- Model 2: 62%

- Model 3: 65%

- Model 4: 63%

- Model 5: 60%

- Model 6: 85%

- Model 7: 90%

- Model 8: 95%

- Model 9: 95%

- Model 10: 80%

- Model 11: 82%

- Model 12: 84%

- Model 13: 86%

- Model 14: 88%

- Model 15: 90%

- Model 16: 92%

- Model 17: 94%

- Model 18: 96%

- Model 19: 98%

- Model 20: 100%

- Model 21: 100%

- Model 22: 100%

#### 4. **MathVista (Red)**

- **Trend**: Begins at 55% (Model 1), rises to 75% (Model 8), drops to 55% (Model 9), recovers to 88% by Model 15, dips to 85% at Model 16, then climbs to 98% by Model 22.

- **Key Data Points**:

- Model 1: 55%

- Model 2: 58%

- Model 3: 57%

- Model 4: 55%

- Model 5: 63%

- Model 6: 67%

- Model 7: 70%

- Model 8: 75%

- Model 9: 55%

- Model 10: 58%

- Model 11: 73%

- Model 12: 72%

- Model 13: 73%

- Model 14: 85%

- Model 15: 88%

- Model 16: 85%

- Model 17: 87%

- Model 18: 90%

- Model 19: 92%

- Model 20: 94%

- Model 21: 96%

- Model 22: 98%

#### 5. **AIME 2025 (Green)**

- **Trend**: Starts at 80% (Model 1), increases steadily to 100% by Model 12, and holds at 100% through Model 22.

- **Key Data Points**:

- Model 1: 80%

- Model 2: 82%

- Model 3: 84%

- Model 4: 86%

- Model 5: 88%

- Model 6: 90%

- Model 7: 92%

- Model 8: 94%

- Model 9: 96%

- Model 10: 98%

- Model 11: 99%

- Model 12: 100%

- Model 13: 100%

- Model 14: 100%

- Model 15: 100%

- Model 16: 100%

- Model 17: 100%

- Model 18: 100%

- Model 19: 100%

- Model 20: 100%

- Model 21: 100%

- Model 22: 100%

---

### **Key Observations**

1. **AIME 2025** yields the highest or tied-highest scores from Model 9 onward, reaching 100% by Model 12 and maintaining it thereafter; earlier, MGSM scores higher at Models 3-5 and MATH-500 at Model 8.

2. **MATH-500** and **MATH** show significant volatility, with sharp declines (e.g., MATH drops from 85% to 40% between Models 9 and 10).

3. **MGSM** exhibits the smoothest growth trajectory, reaching 100% by Model 21; **MathVista** also trends upward but drops sharply from 75% to 55% at Model 9.

4. **FrontierMath, Tier 1-3** (blue dashed line) is labeled on the chart but is not among the legend entries above, and no data points were extracted for it in this description.
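The milestone and volatility claims in these observations can be checked against the extracted series. A minimal Python sketch; the lists are the approximate readings transcribed from this description (FrontierMath has none and is omitted), and the helpers `first_model_at` and `largest_drop` are hypothetical names.

```python
# Approximate readings from this description, Models 1-22 in order.
scores = {
    "MGSM": [55, 75, 88, 87, 90, 85, 88, 92, 90, 88, 85,
             87, 89, 91, 93, 95, 97, 96, 98, 99, 100, 100],
    "MathVista": [55, 58, 57, 55, 63, 67, 70, 75, 55, 58, 73,
                  72, 73, 85, 88, 85, 87, 90, 92, 94, 96, 98],
    "AIME 2025": [80, 82, 84, 86, 88, 90, 92, 94, 96, 98, 99,
                  100, 100, 100, 100, 100, 100, 100, 100, 100, 100, 100],
}


def first_model_at(values, target=100):
    """1-based model number of the first score >= target, or None if never reached."""
    for model, score in enumerate(values, start=1):
        if score >= target:
            return model
    return None


def largest_drop(values):
    """(from_model, to_model, drop) for the steepest fall between consecutive models."""
    # values[m - 1] is Model m and values[m] is Model m + 1.
    drops = [(m, m + 1, values[m - 1] - values[m]) for m in range(1, len(values))]
    return max(drops, key=lambda d: d[2])


for name, values in scores.items():
    print(f"{name}: first reaches 100% at Model {first_model_at(values)}")
print("MathVista steepest fall:", largest_drop(scores["MathVista"]))
```

On these readings, AIME 2025 first reaches 100% at Model 12 and MGSM at Model 21, MathVista never reaches 100% (the helper returns `None`), and MathVista's steepest fall is 20 points between Models 8 and 9.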

---

### **Spatial Grounding**

- **Legend Position**: Top-right corner (x=18, y=95).

- **Data Point Verification**: All line colors match the legend (e.g., MATH-500 is pink, AIME 2025 is green).

---

### **Additional Notes**

- No data table is present in the image.

- No text in languages other than English is visible.

- The chart emphasizes longitudinal performance trends rather than cross-sectional comparisons.
