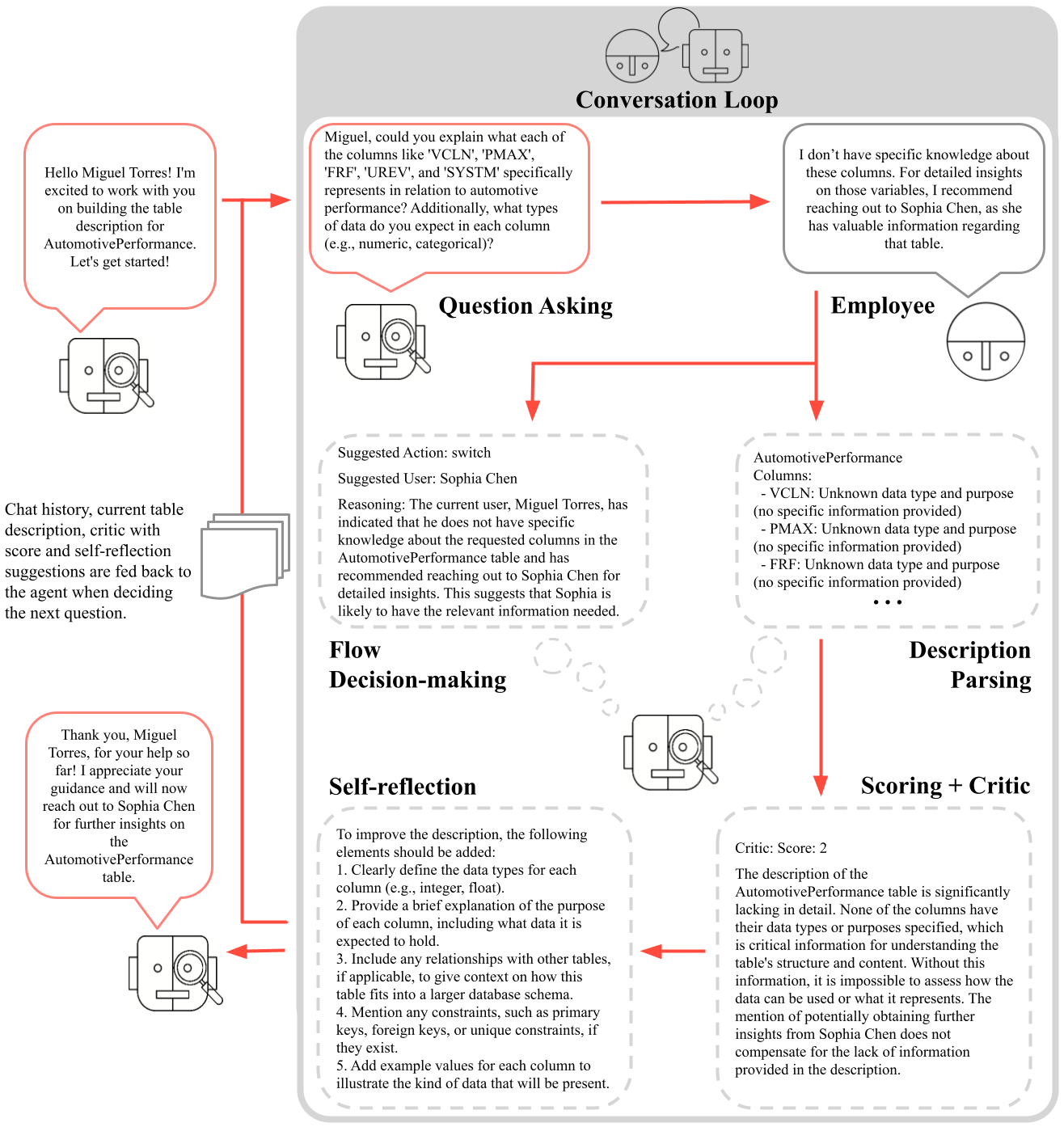

## Flowchart: Conversation Loop for AutomotivePerformance Table Description

### Overview

The diagram illustrates a multi-step process for refining a table description through iterative questioning, employee consultation, self-reflection, and scoring. It begins with the AI agent greeting a user (Miguel Torres) and asking him to explain the columns of an "AutomotivePerformance" table. The flow decision then notes that the agent lacks knowledge about the columns and suggests switching to another employee (Sophia Chen). The resulting description is scored by a critic and refined through self-reflection.

### Components

1. **Conversation Loop** (Top Section)

   - Contains two speech bubbles, both written by the agent and addressed to Miguel Torres:
      - **Agent Greeting**: "Hello Miguel Torres! I'm excited to work with you on building the table description for AutomotivePerformance. Let's get started!"
      - **Agent Question**: "Miguel, could you explain what each of the columns like 'VCLN', 'PMAX', 'FRF', 'UREV', and 'SYSTEM' specifically represents in relation to automotive performance? Additionally, what types of data do you expect in each column (e.g., numeric, categorical)?"

2. **Question Asking** (Left Section)

   - Arrows point to **Employee** (right section) with:
      - **Suggested Action**: "switch"
      - **Suggested User**: "Sophia Chen"
      - **Reasoning**: The agent lacks knowledge about the columns and recommends consulting Sophia Chen for detailed insights.

3. **Flow Decision-making** (Central Section)

   - Arrows connect **Employee** to **Description Parsing** and **Scoring + Critic**.

4. **Self-reflection** (Bottom Left)

   - Lists five improvement elements for the description:
      1. Define data types (e.g., integer, float)
      2. Explain column purposes
      3. Include relationships with other tables
      4. Mention constraints (e.g., primary keys)
      5. Add example values

5. **Description Parsing** (Bottom Right)

   - Contains a critic's feedback:
      - **Critic Score**: 2/5
      - **Criticism**: The description lacks data types, purposes, and relationships for columns like VCLN, PMAX, FRF, and UREV. The suggestion to consult Sophia Chen does not compensate for the missing information.
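
The flow decision and the critic feedback listed above are both small structured records. As a minimal sketch, they could be modeled as follows. The class and field names (`FlowDecision`, `CriticResult`, `max_score`, and so on) are assumptions made for illustration; the diagram shows only the labels and values, not a schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FlowDecision:
    """Routing decision shown next to the Employee box (field names are assumed)."""
    suggested_action: str
    suggested_user: str
    reasoning: str


@dataclass(frozen=True)
class CriticResult:
    """Critic output shown in the diagram (field names are assumed)."""
    score: int
    max_score: int
    criticism: str

    def __post_init__(self) -> None:
        # Reject scores outside the scale, e.g. 6/5.
        if not 0 <= self.score <= self.max_score:
            raise ValueError("score must lie between 0 and max_score")


# Values copied from the diagram description.
decision = FlowDecision(
    suggested_action="switch",
    suggested_user="Sophia Chen",
    reasoning="The agent lacks knowledge about the columns.",
)
critic = CriticResult(
    score=2,
    max_score=5,
    criticism="Data types, purposes, and relationships are missing.",
)

print(f"{decision.suggested_action} -> {decision.suggested_user}")
print(f"critic score: {critic.score}/{critic.max_score}")
```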

### Detailed Analysis

- **Initial Interaction**: The agent opens the conversation by greeting Miguel Torres, then asks him to explain specific columns in the AutomotivePerformance table and the type of data expected in each.

- **Agent Limitation**: The reasoning attached to the flow decision states that the agent lacks knowledge about the columns, so it cannot complete the description on its own.

- **Employee Consultation**: The agent suggests switching to Sophia Chen, implying she has expertise in the table's structure.

- **Self-Reflection**: The agent identifies five critical gaps in the description, emphasizing the need for technical details (data types, constraints, examples).

- **Scoring**: The critic assigns a low score (2/5) due to the absence of essential metadata, highlighting the description's inadequacy for practical use.
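
The five self-reflection elements can be read as a checklist applied to each column entry. The sketch below is one hypothetical way to express that check. The dictionary keys (`data_type`, `purpose`, and so on) and the function `missing_elements` are illustrative assumptions, and the diagram does not say that the critic score is computed this way.

```python
from typing import Dict, List

# The five elements from the Self-reflection box, keyed by hypothetical field names.
REQUIRED_ELEMENTS: Dict[str, str] = {
    "data_type": "Define data types (e.g., integer, float)",
    "purpose": "Explain column purposes",
    "relationships": "Include relationships with other tables",
    "constraints": "Mention constraints (e.g., primary keys)",
    "examples": "Add example values",
}


def missing_elements(column: dict) -> List[str]:
    """Return the checklist items that a column entry does not yet cover."""
    return [
        label
        for key, label in REQUIRED_ELEMENTS.items()
        if not column.get(key)  # absent or empty counts as missing
    ]


# Only the column name is known from the diagram, so every element is reported missing.
bare_column = {"name": "PMAX"}
gaps = missing_elements(bare_column)
for gap in gaps:
    print("missing:", gap)
```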

### Key Observations

1. **Knowledge Gaps**: The agent has to ask what each column represents, and the flow decision records that it lacks knowledge about the columns. This suggests that it has no access to domain-specific documentation for the table.

2. **Human-AI Collaboration**: The process relies on human experts (Sophia Chen) to fill knowledge gaps, indicating a hybrid workflow where AI handles routine tasks while deferring complex queries to specialists.

3. **Iterative Improvement**: The self-reflection phase demonstrates a feedback loop where the agent actively works to enhance its outputs based on structured criteria.

### Interpretation

This flowchart represents a **knowledge refinement system** where an AI agent collaborates with human experts to document technical data structures. The low critic score underscores the importance of **explicit metadata** (data types, purposes, relationships) for usability. The process highlights two critical insights:

1. **AI Limitations**: Without access to domain-specific knowledge, the agent cannot reliably interpret or describe columns with opaque names such as VCLN or FRF.

2. **Human-in-the-Loop Necessity**: Technical documentation requires human expertise to validate and contextualize information, especially for specialized tables like AutomotivePerformance.

The diagram also reveals a **workflow dependency** between the AI agent and human experts: the agent acts as a triage system that identifies when human intervention is necessary and routes the conversation to the right person. The self-reflection phase suggests an attempt to automate quality assurance, though its effectiveness depends on the agent's ability to implement the suggested improvements.
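
The triage behavior can be illustrated with a short sketch of the switch step. It assumes the agent moves to the suggested user when the current participant cannot supply the column details, which is one possible reading of the diagram. The function `run_switch_step`, the responder callables, and the placeholder answer text are all hypothetical.

```python
from typing import Callable, Dict, List, Optional, Tuple

Responder = Callable[[str], Optional[str]]


def run_switch_step(
    question: str,
    current_user: str,
    suggested_user: str,
    responders: Dict[str, Responder],
) -> List[Tuple[str, Optional[str]]]:
    """Ask the current user; if no answer comes back, switch to the suggested user."""
    log: List[Tuple[str, Optional[str]]] = []
    answer = responders[current_user](question)
    log.append((current_user, answer))
    if answer is None:
        # Mirrors the diagram's suggested action "switch".
        log.append(("agent", f"switch -> {suggested_user}"))
        answer = responders[suggested_user](question)
        log.append((suggested_user, answer))
    return log


# Hypothetical responders: the first participant has no answer, the second does.
responders: Dict[str, Responder] = {
    "Miguel Torres": lambda q: None,
    "Sophia Chen": lambda q: "<column details supplied by the expert>",
}

log = run_switch_step(
    "What does each column represent?",
    current_user="Miguel Torres",
    suggested_user="Sophia Chen",
    responders=responders,
)
for speaker, message in log:
    print(speaker, "|", message)
```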