## Diagram: Collaborative LLM-Human System Architecture

### Overview

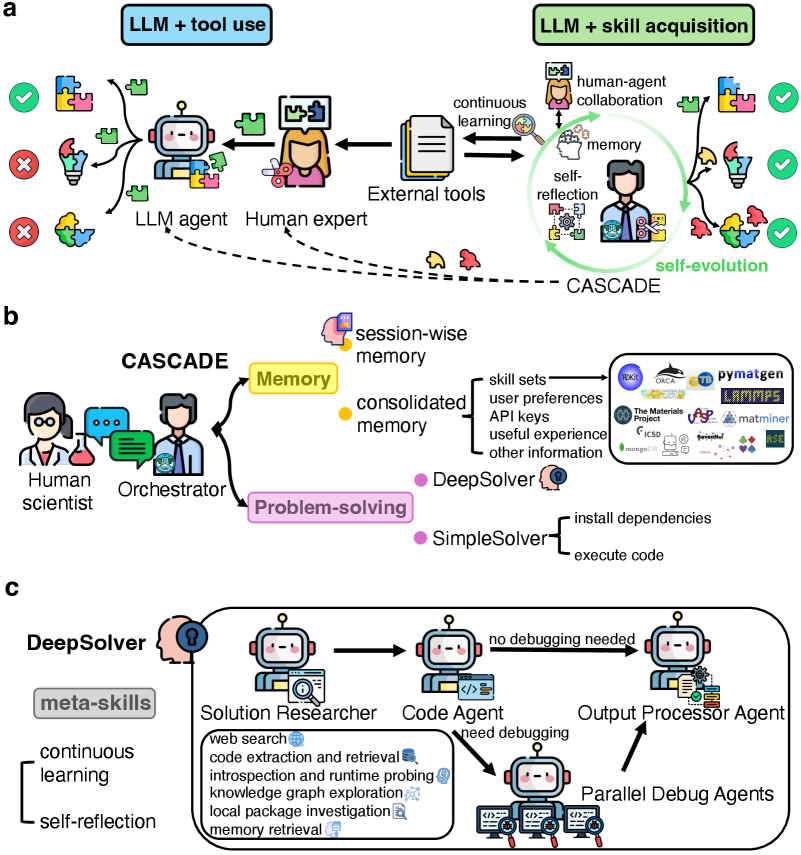

The figure has three panels (a, b, c) that together describe a collaborative architecture integrating Large Language Models (LLMs), human expertise, and autonomous agents. It emphasizes skill acquisition, problem-solving, and continuous learning through human-agent collaboration.

### Components

#### Part a: LLM + Tool Use / LLM + Skill Acquisition

- **Key Elements**:

  - **LLM Agent**: Interacts with external tools (e.g., puzzles, code snippets).

  - **Human Expert**: Collaborates with the LLM agent, providing feedback (✓/✗ symbols).

  - **External Tools**: Represented by puzzle pieces, code, and documents.

  - **Memory**: Central to skill acquisition, with self-reflection and self-evolution loops.

  - **Continuous Learning**: Arrows indicate iterative improvement.

- **Flow**:

  - LLM agent uses tools → Human expert validates → Memory updates → Self-evolution.
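The Part a flow can be sketched as a small loop. This is only an illustration under stated assumptions: the `Memory` class, the `run_round` function, and the two callables standing in for the tool and the human expert are hypothetical names and do not come from the figure.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Memory:
    """Hypothetical memory store: validated skills plus reflection notes."""
    skills: Dict[str, str] = field(default_factory=dict)
    reflections: List[str] = field(default_factory=list)


def run_round(task: str,
              use_tool: Callable[[str], str],
              human_validates: Callable[[str, str], bool],
              memory: Memory) -> bool:
    """One pass of: agent uses a tool -> human validates -> memory updates."""
    result = use_tool(task)
    approved = human_validates(task, result)
    if approved:
        # Approved (the check mark in the figure): keep the result as a skill.
        memory.skills[task] = result
    else:
        # Rejected (the cross in the figure): record a note for the next attempt.
        memory.reflections.append(f"'{task}' rejected: {result}")
    return approved


# Toy usage: the "tool" always answers "5"; the "expert" checks the answer.
memory = Memory()
ok = run_round("add 2 and 3", lambda t: "5", lambda t, r: r == "5", memory)
bad = run_round("add 2 and 2", lambda t: "5", lambda t, r: r == "4", memory)
```

Repeating `run_round` over many tasks is one way to read the "continuous learning" arrows: each round either grows the skill set or adds a reflection that a later attempt could consult.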

#### Part b: CASCADE Framework

- **Key Elements**:

  - **Human Scientist**: Oversees the system.

  - **Orchestrator**: Manages workflow between agents.

  - **Problem-Solving Agents**:

    - **DeepSolver**: Handles complex tasks (e.g., code extraction, runtime probing).

    - **SimpleSolver**: Executes basic tasks (e.g., code execution).

  - **Memory**: Session-wise and consolidated memory.

  - **User Preferences**: API keys, useful experiences.

- **Flow**:

  - Human scientist → Orchestrator → Problem-solving agents → Memory integration.
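A minimal sketch of the Part b flow follows, assuming the Orchestrator picks a solver by task complexity. The `is_complex` predicate, the solver stubs, and the shape of the memory records are hypothetical; the figure does not specify how routing is actually decided.

```python
from typing import Callable, Dict, List


def simple_solver(task: str) -> str:
    """Stand-in for SimpleSolver: pretends to execute the task directly."""
    return f"executed: {task}"


def deep_solver(task: str) -> str:
    """Stand-in for DeepSolver: placeholder for the heavier pipeline."""
    return f"researched and solved: {task}"


def orchestrate(task: str,
                is_complex: Callable[[str], bool],
                session_memory: List[Dict[str, str]]) -> str:
    """Route the task to a solver, then log the outcome to session memory."""
    if is_complex(task):
        solver_name, solver = "DeepSolver", deep_solver
    else:
        solver_name, solver = "SimpleSolver", simple_solver
    answer = solver(task)
    session_memory.append(
        {"task": task, "solver": solver_name, "answer": answer}
    )
    return answer


# Toy usage: treat any task mentioning "unknown" as complex (an assumption).
session: List[Dict[str, str]] = []
needs_research = lambda t: "unknown" in t
a1 = orchestrate("run script", needs_research, session)
a2 = orchestrate("use unknown package", needs_research, session)
```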

#### Part c: DeepSolver Architecture

- **Key Elements**:

  - **Meta-Skills**: Continuous learning, self-reflection.

  - **Solution Researcher**: Performs web searches, code extraction, and runtime probing.

  - **Code Agent**: Its output is marked as either requiring debugging (✗) or not (✓).

  - **Output Processor Agent**: Finalizes results.

  - **Parallel Debug Agents**: Handle debugging tasks.

- **Flow**:

  - Solution Researcher → Code Agent → Output Processor Agent (with parallel debugging).
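The Part c flow might look roughly like the sketch below. It assumes the debug agents each try a different patch concurrently and that the first working patch (in list order) is used; that policy, the function names, and the toy string-patching are illustrative assumptions, not details taken from the figure.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List, Optional


def solution_researcher(task: str) -> str:
    """Stand-in: returns candidate code (deliberately buggy here)."""
    return "result = 1 / 0"


def code_agent(code: str) -> Optional[str]:
    """Run the code; return `result` as text, or None if it raised.

    Toy only: never call exec() on untrusted code in a real system.
    """
    scope: dict = {}
    try:
        exec(code, scope)
    except Exception:
        return None
    return str(scope.get("result"))


def make_debug_agent(patch: Callable[[str], str]) -> Callable[[str], Optional[str]]:
    """Build a hypothetical debug agent that applies one patch and re-runs."""
    def agent(code: str) -> Optional[str]:
        return code_agent(patch(code))
    return agent


def output_processor(raw: str) -> str:
    """Stand-in for the Output Processor Agent: formats the final answer."""
    return f"answer={raw}"


def deep_solve(task: str,
               debug_agents: List[Callable[[str], Optional[str]]]) -> str:
    code = solution_researcher(task)
    raw = code_agent(code)
    if raw is None:  # the cross in the figure: debugging required
        workers = max(1, len(debug_agents))
        with ThreadPoolExecutor(max_workers=workers) as pool:
            # map() preserves input order, so the choice is deterministic.
            attempts = list(pool.map(lambda agent: agent(code), debug_agents))
        raw = next((a for a in attempts if a is not None), None)
    if raw is None:
        raise RuntimeError("all debug agents failed")
    return output_processor(raw)


agents = [
    make_debug_agent(lambda c: c.replace("/ 0", "/ 0.0")),  # still divides by zero
    make_debug_agent(lambda c: c.replace("/ 0", "/ 2")),    # fixes the division
]
final = deep_solve("divide", agents)
```

Threads are used here only because they keep the sketch short; a real system would more likely dispatch independent agent processes or remote calls.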

### Detailed Analysis

- **Part a**:

  - The human expert validates the LLM agent’s tool use, marking each interaction as a success (✓) or a failure (✗).

  - External tools (e.g., code, documents) feed into the LLM’s skill acquisition via memory and self-reflection.

- **Part b**:

  - The CASCADE framework emphasizes memory consolidation and a problem-solving hierarchy (DeepSolver for complex tasks, SimpleSolver for basic tasks).

  - User preferences (API keys, experiences) guide the system’s behavior.

- **Part c**:

  - DeepSolver integrates meta-skills (continuous learning, self-reflection) to autonomously resolve issues.

  - The Solution Researcher handles knowledge graph exploration and local package investigation.

### Key Observations

1. **Human-AI Collaboration**: Human experts validate LLM outputs, ensuring accuracy and skill refinement.

2. **Memory-Centric Design**: Memory acts as a bridge between tool use, problem-solving, and skill acquisition.

3. **Autonomous Debugging**: Parallel Debug Agents in DeepSolver reduce reliance on human intervention.

4. **Hierarchical Problem-Solving**: Tasks are delegated to agents based on complexity (DeepSolver vs. SimpleSolver).
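The memory-centric design can be illustrated with a small sketch of session-wise entries being merged into consolidated memory. The rule used here (keep only successful experiences, with the latest solution per task winning) is an assumption made for illustration; the figure does not state how consolidation works, and `Experience` and `ConsolidatedMemory` are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Experience:
    """One hypothetical session-memory entry."""
    task: str
    solution: str
    succeeded: bool


@dataclass
class ConsolidatedMemory:
    # Maps a task to the most recent solution known to work.
    useful_experiences: Dict[str, str] = field(default_factory=dict)

    def consolidate(self, session: List[Experience]) -> int:
        """Merge successful session entries; return how many were kept."""
        kept = 0
        for exp in session:
            if exp.succeeded:
                self.useful_experiences[exp.task] = exp.solution
                kept += 1
        return kept


# Toy usage: two attempts at one task, plus one unrelated success.
session_log = [
    Experience("parse config", "regex approach", succeeded=False),
    Experience("parse config", "use a YAML parser", succeeded=True),
    Experience("plot results", "line chart", succeeded=True),
]
long_term = ConsolidatedMemory()
kept_count = long_term.consolidate(session_log)
```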

### Interpretation

This architecture depicts a system in which LLMs and humans refine skills together through iterative collaboration. The CASCADE framework pairs memory with agent specialization (DeepSolver for complex tasks, SimpleSolver for basic ones), while DeepSolver’s meta-skills are intended to let it handle complex tasks with less human intervention. The emphasis on memory and self-reflection suggests a focus on long-term adaptability, positioning the system as a tool for technical workflows that require both human insight and autonomous execution.