## Diagram: Text-to-Text Generation with LLMs

### Overview

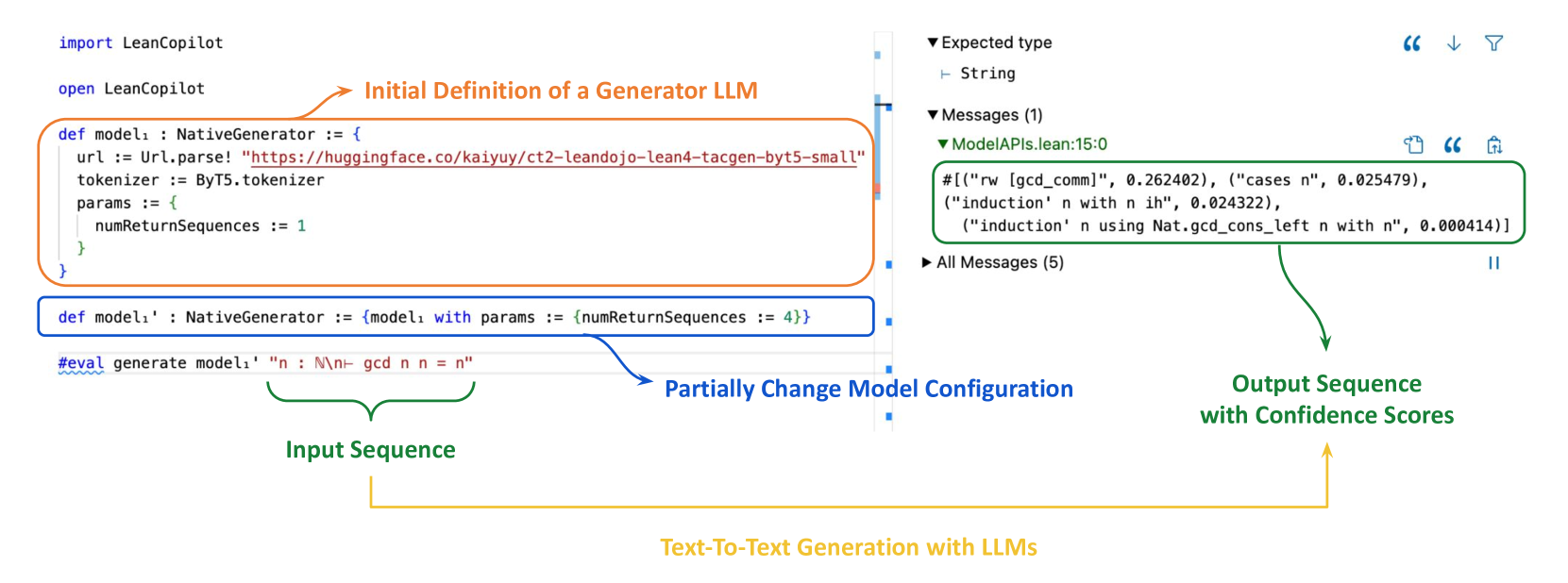

This diagram illustrates text-to-text generation with Large Language Models (LLMs) in the LeanCopilot framework. It shows an input sequence passed to a generator LLM whose configuration can be partially overridden, producing an output sequence with associated confidence scores. Arrows indicate the flow of information, and labels identify the key stages and components.

### Components/Axes

The diagram has three main areas: Input Sequence (left), Model Interaction (center), and Output Sequence (right). The title at the bottom reads "Text-To-Text Generation with LLMs". The list below covers these areas along with the other labels and panels visible in the diagram.

* **Input Sequence:** Labeled "Input Sequence" and highlighted in light blue.

* **Model Interaction:** Highlighted in light green, labeled "Partially Change Model Configuration".

* **Output Sequence:** Labeled "Output Sequence with Confidence Scores" and highlighted in light purple.

* **Initial Definition of a Generator LLM:** A text box pointing to the initial code block.

* **Expected type:** A section labeled "Expected type" with a sub-label "String".

* **Messages (1):** A section labeled "Messages (1)".

* **ModelAPIs.lean:15:0:** A source location label, in the usual `file:line:column` form (file `ModelAPIs.lean`, line 15, column 0).

* **All Messages (5):** A section labeled "All Messages (5)".

### Content Details

The diagram contains code snippets and text outputs.

**Input Sequence Code:**

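```
import LeanCopilot

open LeanCopilot

-- Initial definition of a generator LLM.
def model : NativeGenerator := {
  url := Url.parse! "https://huggingface.co/kaiyuy/ct2-leandojo-lean4-tacgen-byt5-small"
  tokenizer := ByT5.tokenizer
  params := {
    numReturnSequences := 1
  }
}

-- Partially change the model configuration: same model, four return sequences.
def model' : NativeGenerator := {model with params := {numReturnSequences := 4}}

-- Generate candidate tactics for the goal `gcd n n = n`.
#eval generate model' "n : ℕ\n⊢ gcd n n = n"
```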

**Output Sequence Text:**

The output is a list of pairs, each consisting of a generated text string (here, a Lean tactic) and a numerical confidence score. The list is prefixed with a `#` symbol.

* `("rw [gcd_comm]", 0.262402)`

* `("induction' n with n jh", 0.024322)`

* `("induction' n using Nat.gcd_cons_left n with n", 0.000414)`
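As a minimal sketch of how such scored output might be consumed, the following self-contained Lean 4 snippet stores the three pairs listed above in an array and selects the highest-scoring one. It does not depend on LeanCopilot. The names `candidates` and `best?` are hypothetical, introduced here only for illustration, and treating a higher score as a better candidate is an assumption.

```lean
-- Hypothetical stand-in for the diagram's output: (tactic, score) pairs.
def candidates : Array (String × Float) := #[
  ("rw [gcd_comm]", 0.262402),
  ("induction' n with n jh", 0.024322),
  ("induction' n using Nat.gcd_cons_left n with n", 0.000414)
]

-- Return the pair with the largest score, or `none` for an empty array.
-- Assumption: a higher score means a more preferred candidate.
def best? (xs : Array (String × Float)) : Option (String × Float) :=
  xs.foldl
    (fun acc x =>
      match acc with
      | none => some x
      | some b => if x.2 > b.2 then some x else some b)
    none

#eval best? candidates
```

With the scores above, the fold keeps the `rw [gcd_comm]` entry, since no later score exceeds 0.262402.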

**Arrows and Labels:**

* An arrow labeled "Initial Definition of a Generator LLM" points from the top-left to the first code block.

* An arrow labeled "Partially Change Model Configuration" points from the first code block to the second code block.

* An arrow labeled "Output Sequence with Confidence Scores" points from the second code block to the output text.

### Key Observations

The diagram shows that a generator can be reconfigured without being redefined from scratch: the second definition reuses the initial model and changes only the `numReturnSequences` parameter, from 1 to 4, so that several candidate completions are requested instead of one. Each candidate in the output carries a confidence score. The listed scores are low in absolute terms (0.262402, 0.024322, and 0.000414) and fall off steeply, with the top candidate scoring roughly ten times higher than the second. This suggests the model is not highly confident in any single completion.
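The partial configuration change relies on Lean 4's structure update syntax, `{ s with field := value }`. The sketch below illustrates that syntax on stand-in structures so that it runs without LeanCopilot. `GenParams`, `Generator`, `base`, `wide`, the `maxLength` field, and the example URL are all hypothetical; only the field name `numReturnSequences` comes from the diagram.

```lean
-- Hypothetical parameter record, not LeanCopilot's own definition.
structure GenParams where
  numReturnSequences : Nat := 1
  maxLength : Nat := 1024
  deriving Repr

-- Hypothetical generator record with a nested parameter record.
structure Generator where
  url : String
  params : GenParams := {}
  deriving Repr

def base : Generator := {
  url := "https://example.org/model"
  params := { numReturnSequences := 1, maxLength := 256 }
}

-- `with` copies every field of `base` except the ones listed.
def wide : Generator := { base with params := { numReturnSequences := 4 } }

#eval wide.url == base.url            -- true
#eval wide.params.numReturnSequences  -- 4
#eval wide.params.maxLength           -- 1024
```

In this sketch the update replaces the whole `params` record, so fields not mentioned in the new record fall back to their declared defaults (`maxLength` becomes 1024, not 256) instead of inheriting the values set in `base`.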

### Interpretation

This diagram demonstrates a simplified workflow for text generation with LLMs: define a generator, adjust part of its configuration, and obtain several candidate outputs with confidence scores. The low scores suggest that the input prompt may be ambiguous or may need more context before the model can produce a highly probable completion, which makes it important to consider several candidates instead of only the top one. The use of LeanCopilot points to formal verification or mathematical reasoning, since the input prompt is a proof goal (`gcd n n = n`) and the outputs are tactic suggestions. The diagram is a high-level illustration and does not cover the internal workings of the LLM or the specifics of the LeanCopilot framework.