TECHNICAL ASSET FINGERPRINT

5222210b5cbfee4d7b3a887c

Click to view fullscreen

Press ESC or click to close

FOUND IN PAPERS

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

\n

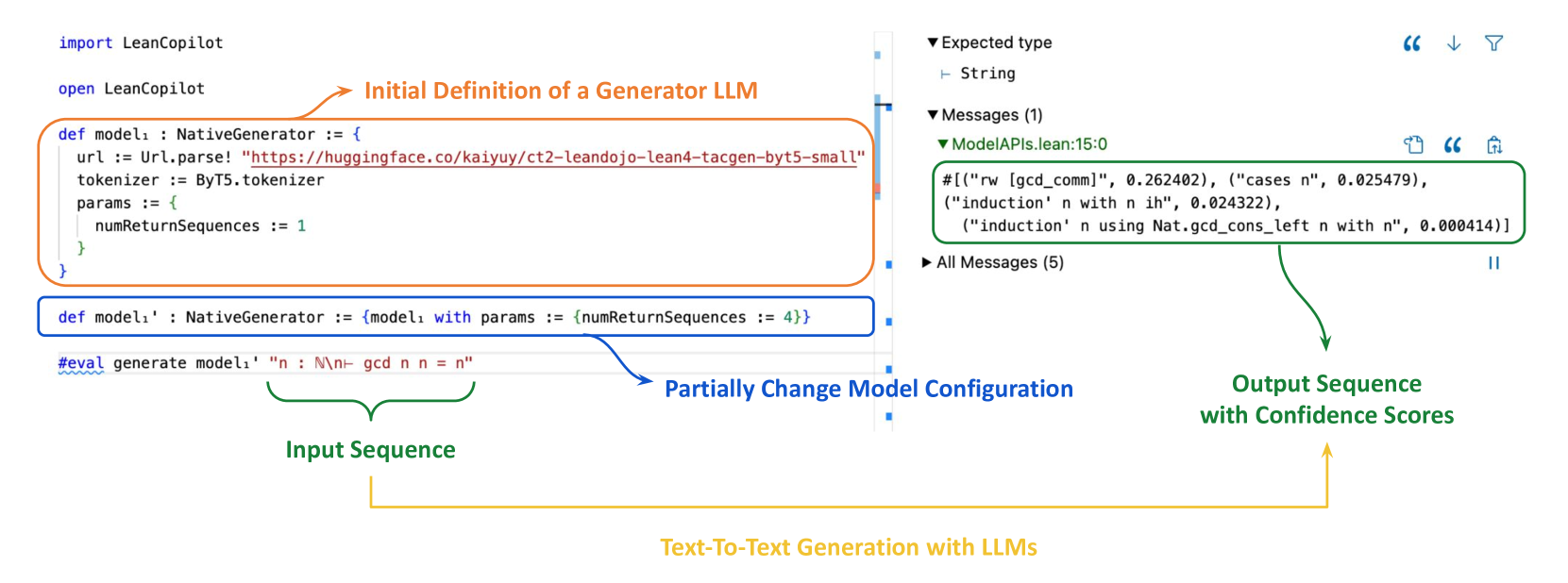

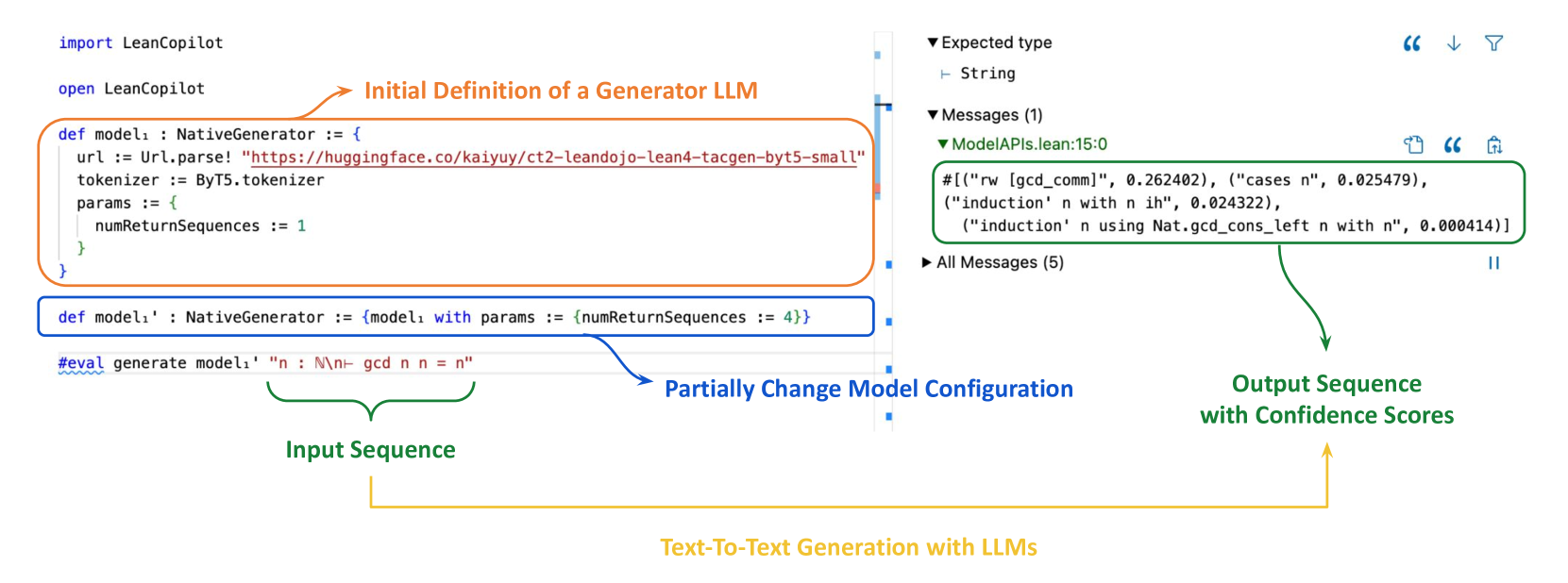

## Code Screenshot: LeanCopilot LLM Generator Configuration and Output

### Overview

This image is a screenshot of a code editor or integrated development environment (IDE) displaying Lean code that utilizes the `LeanCopilot` library to define and run a text-generation Large Language Model (LLM). The screenshot is heavily annotated with colored boxes and arrows to explain the workflow of defining a model, modifying its configuration, providing an input sequence, and viewing the generated output with confidence scores.

### Components/Axes

The image is divided into two primary vertical panels:

1. **Left Panel (Code Editor):** Contains Lean source code with syntax highlighting.

2. **Right Panel (Info/Output Panel):** Displays type information, messages, and evaluation output.

**Annotations (Colored Overlays):**

* **Orange Box & Arrow:** Highlights the initial model definition. Label: "Initial Definition of a Generator LLM".

* **Blue Box & Arrow:** Highlights a modified model definition. Label: "Partially Change Model Configuration".

* **Green Bracket & Arrow:** Highlights the input string for generation. Label: "Input Sequence".

* **Green Box & Arrow (Right Panel):** Highlights the generated output list. Label: "Output Sequence with Confidence Scores".

* **Yellow Bracket & Arrow:** Connects the input sequence to the output sequence. Label: "Text-To-Text Generation with LLMs".

### Detailed Analysis

#### Left Panel: Code Content

The code is written in the Lean programming language.

**Line 1:** `import LeanCopilot`

**Line 2:** `open LeanCopilot`

**First Code Block (Orange Box):**

```lean

def model₁ : NativeGenerator := {

url := Url.parse! "https://huggingface.co/kaiyuy/ct2-leandojo-lean4-tacgen-byt5-small"

tokenizer := ByT5.tokenizer

params := {

numReturnSequences := 1

}

}

```

* **Purpose:** Defines a constant `model₁` of type `NativeGenerator`.

* **Configuration:**

* `url`: Points to a Hugging Face model repository (`kaiyuy/ct2-leandojo-lean4-tacgen-byt5-small`).

* `tokenizer`: Uses the `ByT5.tokenizer`.

* `params`: A record containing generation parameters. Here, `numReturnSequences` is set to `1`.

**Second Code Block (Blue Box):**

```lean

def model₁' : NativeGenerator := {model₁ with params := {numReturnSequences := 4}}

```

* **Purpose:** Defines a new constant `model₁'` by updating `model₁`.

* **Modification:** It uses Lean's update syntax (`{model₁ with ...}`) to change only the `params` field, setting `numReturnSequences` to `4`. This creates a variant of the original model that will generate four sequences instead of one.

**Third Code Block (Green Bracket):**

```lean

#eval generate model₁' "n : ℕ\n- gcd n n = n"

```

* **Purpose:** An evaluation command (`#eval`) that calls the `generate` function.

* **Arguments:**

1. The model: `model₁'` (the variant configured to return 4 sequences).

2. The input sequence: `"n : ℕ\n- gcd n n = n"`. This is a string representing a Lean goal or theorem statement: "Given `n : ℕ` (n is a natural number), prove `gcd n n = n`".

#### Right Panel: Output Content

**Top Section:**

* `▼ Expected type`

* `⊢ String`

* `▼ Messages (1)`

* `▼ ModelAPIs.lean:15:0` (Indicates the file and line number where the message originates).

* **Content within Green Box:**

```

#[("rw [gcd_comm]", 0.262402), ("cases n", 0.025479),

("induction' n with n ih", 0.024322),

("induction' n using Nat.gcd_cons_left n with n", 0.000414)]

```

* `▶ All Messages (5)` (A collapsed section indicating there are 5 total messages).

**Output Sequence Analysis (Green Box Content):**

The output is a Lean list (`#[...]`) of tuples. Each tuple contains:

1. A **string**: A suggested proof tactic or step (e.g., `"rw [gcd_comm]"`).

2. A **floating-point number**: A confidence score associated with that suggestion.

The four generated sequences (tactics) and their approximate confidence scores are:

1. `("rw [gcd_comm]", 0.262402)` - Confidence ~26.24%

2. `("cases n", 0.025479)` - Confidence ~2.55%

3. `("induction' n with n ih", 0.024322)` - Confidence ~2.43%

4. `("induction' n using Nat.gcd_cons_left n with n", 0.000414)` - Confidence ~0.04%

### Key Observations

1. **Workflow Demonstration:** The image clearly illustrates a common LLM interaction pattern: define a base model, create a configured variant for a specific task (increasing output candidates), provide a prompt, and receive multiple ranked suggestions.

2. **Model Specificity:** The model URL (`ct2-leandojo-lean4-tacgen-byt5-small`) indicates it is a small, specialized model (`byt5-small`) fine-tuned for generating Lean 4 proof tactics (`tacgen`).

3. **Output Format:** The output is not a single answer but a ranked list of potential next steps in a formal proof, each with a confidence score. This is typical for assistive code or proof generation tools.

4. **Confidence Disparity:** There is a significant drop in confidence between the top suggestion (`rw [gcd_comm]` at ~26%) and the subsequent ones (all below 3%). This suggests the model has a clear, though not overwhelmingly certain, preference for the first tactic.

5. **Spatial Layout:** The annotations are strategically placed to guide the viewer's eye through the logical flow: from definition (top-left), to configuration (middle-left), to input (bottom-left), and finally to output (top-right), with a connecting arrow emphasizing the core text-to-text process.

### Interpretation

This screenshot serves as a technical tutorial or documentation snippet for the `LeanCopilot` library. It demonstrates how to programmatically interface with a specialized LLM to assist in formal mathematical theorem proving within the Lean ecosystem.

The data suggests that for the given goal (`gcd n n = n`), the model's primary suggested strategy is to rewrite (`rw`) using the commutativity of GCD (`gcd_comm`). The much lower confidence for `cases n` and `induction` strategies implies the model recognizes the problem as likely solvable by a simple rewrite rule rather than requiring case analysis or induction, which are more complex proof techniques.

The "Peircean" investigative reading here is that the image is not just showing code, but **modeling a cognitive process**—the process of an AI assistant breaking down a formal problem and proposing solutions with quantified uncertainty. It highlights the shift from deterministic programming to probabilistic assistance in advanced mathematical reasoning. The core message is that `LeanCopilot` enables developers to embed this kind of intelligent, suggestion-based workflow directly into Lean development environments.

DECODING INTELLIGENCE...