## Screenshot: LeanCopilot Code Editor with Model Configuration and Output

### Overview

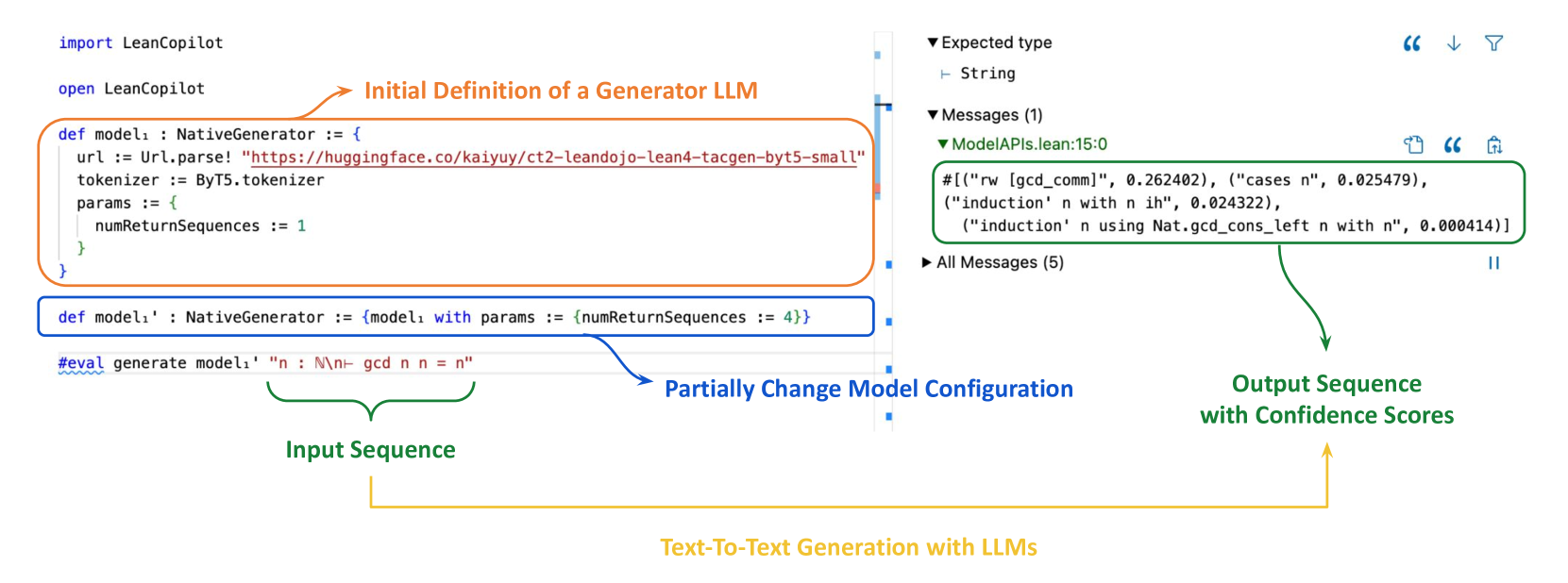

The image shows a Lean 4 code editor with annotations and arrows highlighting the key steps of configuring a language model (LLM) and generating text with it. The code defines a generator LLM using the `LeanCopilot` library, modifies its parameters, and runs text-to-text generation that returns candidate outputs with confidence scores.

### Components/Axes

1. **Code Editor Interface**:

- **Left Panel**: Code editor with syntax highlighting (Lean 4).

- **Right Panel**: Output console with model API responses and confidence scores.

- **Annotations**: Arrows and text boxes explaining code functionality.

2. **Annotations**:

- **Orange Arrow**: "Initial Definition of a Generator LLM" pointing to model definition.

- **Blue Arrow**: "Partially Change Model Configuration" pointing to parameter modification.

- **Green Arrow**: "Output Sequence with Confidence Scores" pointing to generated text.

3. **Code Elements**:

- **Imports**: `import LeanCopilot` and `open LeanCopilot`.

- **Model Definition**: `def model1: NativeGenerator` with URL and tokenizer.

- **Parameter Modification**: `numReturnSequences` changed from 1 to 4.

- **Input/Output**: `#eval generate model1 "n : ℕ\n⊢ gcd n n = n"`.

4. **Output Section**:

- **Expected Type**: `String`.

- **Messages**: Model API responses with confidence scores (e.g., `0.262402`, `0.024322`).

### Detailed Analysis

#### Code Structure

- **Model Initialization**:

```lean
def model1 : NativeGenerator := {
  url := Url.parse! "https://huggingface.co/kaiyuy/ct2-leandojo-lean4-tacgen-byt5-small"
  tokenizer := ByT5.tokenizer
  params := { numReturnSequences := 1 }
}
```

- **URL**: Hugging Face model repository.

- **Tokenizer**: `ByT5.tokenizer` for text preprocessing.

- **Initial Parameter**: `numReturnSequences := 1`.

- **Modified Model**:

```lean
def model1' : NativeGenerator := { model1 with params := { numReturnSequences := 4 } }
```

- **Change**: Increased `numReturnSequences` to 4 for multiple output sequences.

- **Generation Command**:

```lean
#eval generate model1' "n : ℕ\n⊢ gcd n n = n"
```

- **Input**: A Lean goal state given as a string: the hypothesis `n : ℕ` and the goal `gcd n n = n`.

- **Output**: Generated sequences with confidence scores.
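The `{ model1 with ... }` form in the modified model is Lean 4's structure update syntax. The sketch below illustrates it on toy structures; `GenParams`, `ToyGenerator`, and the `beamSize` field are hypothetical stand-ins chosen for illustration, not LeanCopilot's actual definitions. It also shows a point that is easy to miss: writing `params := { ... }` builds a fresh `params` value, so any field not mentioned falls back to its default.

```lean
-- Hypothetical toy structures for illustration only (not LeanCopilot's definitions).
structure GenParams where
  numReturnSequences : Nat := 1
  beamSize : Nat := 1

structure ToyGenerator where
  name : String
  params : GenParams := {}

def base : ToyGenerator := { name := "toy-model", params := { beamSize := 8 } }

-- `{ base with ... }` copies every field of `base` and overrides only `params`.
def base' : ToyGenerator := { base with params := { numReturnSequences := 4 } }

#eval base'.name                       -- "toy-model"
#eval base'.params.numReturnSequences  -- 4
#eval base'.params.beamSize            -- 1 (the new `params` literal uses defaults)

-- A nested update keeps the other fields of the existing `params`.
def base'' : ToyGenerator :=
  { base with params := { base.params with numReturnSequences := 4 } }

#eval base''.params.beamSize           -- 8
```

If the real generator has other non-default parameters, the nested form is the safer pattern for changing only one of them.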

#### Output Section

- **Model API Responses**:

```lean
#[("rw [gcd_comm]", 0.262402), ("cases n", 0.025479),
  ("induction' n with n ih", 0.024322),
  ("induction' n using Nat.gcd_cons_left n with n", 0.000414)]
```

- **Confidence Scores**: Range from `0.000414` (low) to `0.262402` (high).

- **Generated Text**: Candidate Lean tactics for the goal (a rewrite with `gcd_comm`, case analysis on `n`, and two forms of induction).
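Output of this shape is straightforward to post-process. The following is a minimal sketch, assuming the result is an array of `(String, Float)` pairs as the output above suggests; the values are transcribed from the screenshot, and the names `suggestions` and `tacticsAbove` are hypothetical helpers, not part of LeanCopilot.

```lean
-- Values transcribed from the screenshot; the definition names are hypothetical.
def suggestions : Array (String × Float) :=
  #[("rw [gcd_comm]", 0.262402), ("cases n", 0.025479),
    ("induction' n with n ih", 0.024322),
    ("induction' n using Nat.gcd_cons_left n with n", 0.000414)]

-- Keep only the tactic strings whose score exceeds a caller-chosen threshold.
def tacticsAbove (threshold : Float) (xs : Array (String × Float)) : Array String :=
  (xs.filter (fun p => p.2 > threshold)).map (fun p => p.1)

#eval tacticsAbove 0.01 suggestions
-- #["rw [gcd_comm]", "cases n", "induction' n with n ih"]
```

The threshold `0.01` is arbitrary here; it simply separates the three higher-scoring candidates from the last one.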

### Key Observations

1. **Parameter Impact**: Increasing `numReturnSequences` from 1 to 4 generates multiple output sequences with varying confidence scores.

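2. **Confidence Distribution**: The highest-scoring candidate is `rw [gcd_comm]` (`0.262402`). The next two candidates score roughly ten times lower (`0.025479`, `0.024322`), and the long `induction' ... using` variant scores lowest (`0.000414`). The image does not show whether any candidate actually closes the goal.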

3. **Code Annotations**: Arrows and text boxes clarify the workflow: model definition → parameter adjustment → generation.

### Interpretation

- **Model Behavior**: The model proposes candidate tactics for the goal: a rewrite using commutativity of GCD, case analysis, and two forms of induction. The scores rank the candidates against each other; the image does not show how the scores are computed or whether any suggested tactic succeeds.

- **Technical Workflow**: The code demonstrates how to configure and interact with an LLM for text-to-text generation, emphasizing parameter tuning (`numReturnSequences`) and output analysis.

- **Uncertainty**: Only the last candidate scores below `0.01`. A single example is too little evidence to draw conclusions about how the model handles inductive reasoning in general.

### Languages Present

- **Primary**: English (code comments, annotations).

- **Secondary**: Lean 4 (code syntax).

### Spatial Grounding

- **Annotations**:

- Orange arrow (top-left) points to initial model definition.

- Blue arrow (middle) highlights parameter modification.

- Green arrow (right) directs to output.

- **Output Panel**: Right-aligned, with a green-highlighted box for confidence scores.

### Trends Verification

- **Confidence Scores**: Listed in strictly descending order (`0.262402`, `0.025479`, `0.024322`, `0.000414`), so the output appears sorted from highest to lowest score.

- **Code Flow**: Linear progression from model definition to generation command.

### Component Isolation

1. **Header**: Code imports and model definition.

2. **Main**: Parameter modification and generation command.

3. **Footer**: Output console with API responses.

This extraction captures the technical workflow of configuring and using an LLM for mathematical reasoning, emphasizing parameter adjustments and output analysis.