## Chart Type: Comparative Bar Charts of AgentFlow Accuracy

### Overview

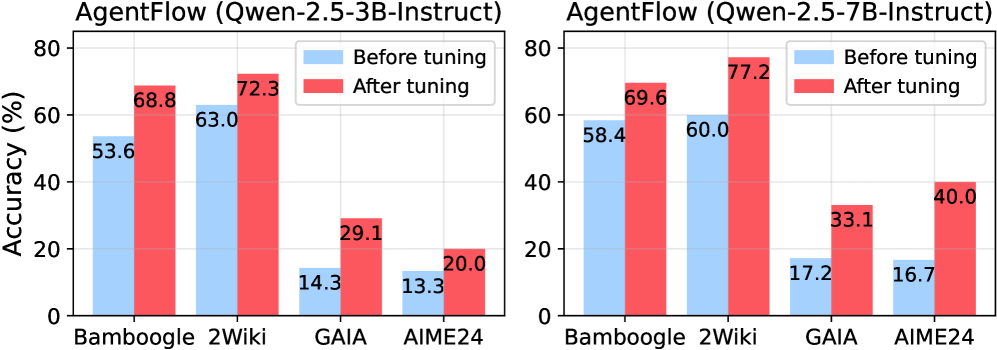

The image displays two side-by-side bar charts, comparing the accuracy of "AgentFlow" models, specifically "Qwen-2.5-3B-Instruct" and "Qwen-2.5-7B-Instruct", across four different datasets. For each model and dataset, the charts show the accuracy "Before tuning" and "After tuning", illustrating the impact of the tuning process. The Y-axis represents "Accuracy (%)", and the X-axis lists the four datasets: "Bamboogle", "2Wiki", "GAIA", and "AIME24".

### Components/Axes

The image consists of two distinct bar charts, arranged horizontally.

**Common Elements for Both Charts:**

* **Y-axis Label**: "Accuracy (%)"

* **Y-axis Scale**: Ranges from 0 to 80, with major tick marks at 0, 20, 40, 60, and 80. Minor grid lines are present at intervals of 10.

* **X-axis Categories**: The horizontal axis for both charts displays the same four categories, from left to right: "Bamboogle", "2Wiki", "GAIA", and "AIME24".

* **Legend**: A legend is positioned in the top-right corner of the plotting area for each chart.

    * A light blue rectangle represents "Before tuning".

    * A red rectangle represents "After tuning".

**Left Chart Specifics:**

* **Title**: "AgentFlow (Qwen-2.5-3B-Instruct)"

**Right Chart Specifics:**

* **Title**: "AgentFlow (Qwen-2.5-7B-Instruct)"

### Detailed Analysis

**Left Chart: AgentFlow (Qwen-2.5-3B-Instruct)**

This chart evaluates the Qwen-2.5-3B-Instruct model. For each dataset, there are two vertical bars: a light blue bar for "Before tuning" and a red bar for "After tuning".

* **Bamboogle**:

    * The light blue bar ("Before tuning") reaches an accuracy of 53.6%.

    * The red bar ("After tuning") is significantly taller, reaching 68.8%.

    * **Trend**: Tuning results in a substantial increase in accuracy for Bamboogle.

* **2Wiki**:

    * The light blue bar ("Before tuning") shows an accuracy of 63.0%.

    * The red bar ("After tuning") is taller, indicating an accuracy of 72.3%.

    * **Trend**: Tuning leads to an improvement in accuracy for 2Wiki.

* **GAIA**:

    * The light blue bar ("Before tuning") is relatively short, at 14.3%.

    * The red bar ("After tuning") is significantly taller, reaching 29.1%.

    * **Trend**: Tuning more than doubles the accuracy for GAIA.

* **AIME24**:

    * The light blue bar ("Before tuning") is short, at 13.3%.

    * The red bar ("After tuning") is taller, showing an accuracy of 20.0%.

    * **Trend**: Tuning results in a notable increase in accuracy for AIME24.

**Right Chart: AgentFlow (Qwen-2.5-7B-Instruct)**

This chart evaluates the Qwen-2.5-7B-Instruct model. Similar to the left chart, each dataset has two bars: light blue for "Before tuning" and red for "After tuning".

* **Bamboogle**:

    * The light blue bar ("Before tuning") shows an accuracy of 58.4%.

    * The red bar ("After tuning") is taller, reaching 69.6%.

    * **Trend**: Tuning leads to an improvement in accuracy for Bamboogle.

* **2Wiki**:

    * The light blue bar ("Before tuning") shows an accuracy of 60.0%.

    * The red bar ("After tuning") is significantly taller, reaching 77.2%.

    * **Trend**: Tuning results in a substantial increase in accuracy for 2Wiki.

* **GAIA**:

    * The light blue bar ("Before tuning") is relatively short, at 17.2%.

    * The red bar ("After tuning") is significantly taller, reaching 33.1%.

    * **Trend**: Tuning nearly doubles the accuracy for GAIA.

* **AIME24**:

    * The light blue bar ("Before tuning") is short, at 16.7%.

    * The red bar ("After tuning") is significantly taller, reaching 40.0%.

    * **Trend**: Tuning results in a very substantial increase in accuracy for AIME24.
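The per-dataset readings above can be turned into absolute gains with a few lines of Python. This is a minimal sketch, assuming the values read off the bars are exact; the `ACCURACY` layout and the `absolute_gains` helper are illustrative names, not part of AgentFlow.

```python
# Accuracy values (%) as read off the chart: (before tuning, after tuning).
ACCURACY = {
    "Qwen-2.5-3B-Instruct": {
        "Bamboogle": (53.6, 68.8),
        "2Wiki": (63.0, 72.3),
        "GAIA": (14.3, 29.1),
        "AIME24": (13.3, 20.0),
    },
    "Qwen-2.5-7B-Instruct": {
        "Bamboogle": (58.4, 69.6),
        "2Wiki": (60.0, 77.2),
        "GAIA": (17.2, 33.1),
        "AIME24": (16.7, 40.0),
    },
}


def absolute_gains(results):
    """Return {dataset: after - before} in percentage points, rounded to 0.1."""
    return {
        dataset: round(after - before, 1)
        for dataset, (before, after) in results.items()
    }


if __name__ == "__main__":
    for model, results in ACCURACY.items():
        gains = absolute_gains(results)
        # Rank datasets from largest to smallest gain for this model.
        ranked = sorted(gains.items(), key=lambda item: item[1], reverse=True)
        print(model, ranked)
```

Rounding to one decimal place matches the precision of the chart labels and avoids floating-point noise in the differences.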

### Key Observations

1. **Consistent Improvement**: Across all four datasets and both Qwen models (3B and 7B), "After tuning" accuracy (red bars) is consistently higher than "Before tuning" accuracy (light blue bars). This indicates that the tuning process is effective in improving model performance.

2. **Varying Degrees of Improvement**: The magnitude of improvement varies.

    * For the 3B model, Bamboogle and GAIA show the largest absolute gains (15.2 and 14.8 percentage points, respectively), followed by 2Wiki (9.3) and AIME24 (6.7). AIME24 nonetheless shows a sizable relative improvement, rising by about half (from 13.3% to 20.0%).

    * For the 7B model, AIME24 shows the largest absolute gain (23.3 percentage points), followed by 2Wiki (17.2), GAIA (15.9), and Bamboogle (11.2).

3. **Model Size Impact**:

    * The Qwen-2.5-7B-Instruct model generally starts with higher "Before tuning" accuracies than the Qwen-2.5-3B-Instruct model on Bamboogle (58.4% vs 53.6%), GAIA (17.2% vs 14.3%), and AIME24 (16.7% vs 13.3%). For 2Wiki, the 3B model starts slightly higher (63.0% vs 60.0%).

    * After tuning, the 7B model consistently achieves higher accuracies than the 3B model across all datasets:

        * Bamboogle: 69.6% (7B) vs 68.8% (3B)

        * 2Wiki: 77.2% (7B) vs 72.3% (3B)

        * GAIA: 33.1% (7B) vs 29.1% (3B)

        * AIME24: 40.0% (7B) vs 20.0% (3B)

4. **Dataset Performance**:

    * Bamboogle and 2Wiki generally show higher baseline accuracies and higher post-tuning accuracies compared to GAIA and AIME24, for both models.

    * GAIA and AIME24 start with much lower accuracies (around 13-17%) but show substantial relative improvements after tuning, especially AIME24 with the 7B model, which more than doubles its accuracy from 16.7% to 40.0%.
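The model-size comparison in observation 3 can be checked by subtracting the 3B accuracy from the 7B accuracy for each dataset, before and after tuning. The sketch below assumes the chart values are exact; `BEFORE`, `AFTER`, and `size_gap` are hypothetical names chosen for illustration.

```python
# Accuracy (%) read off the chart, keyed by model size and dataset.
BEFORE = {
    "3B": {"Bamboogle": 53.6, "2Wiki": 63.0, "GAIA": 14.3, "AIME24": 13.3},
    "7B": {"Bamboogle": 58.4, "2Wiki": 60.0, "GAIA": 17.2, "AIME24": 16.7},
}
AFTER = {
    "3B": {"Bamboogle": 68.8, "2Wiki": 72.3, "GAIA": 29.1, "AIME24": 20.0},
    "7B": {"Bamboogle": 69.6, "2Wiki": 77.2, "GAIA": 33.1, "AIME24": 40.0},
}


def size_gap(scores):
    """Return {dataset: 7B accuracy - 3B accuracy} in percentage points."""
    return {
        dataset: round(scores["7B"][dataset] - scores["3B"][dataset], 1)
        for dataset in scores["3B"]
    }


if __name__ == "__main__":
    # A negative value means the 3B model is ahead on that dataset.
    print("before tuning:", size_gap(BEFORE))
    print("after tuning: ", size_gap(AFTER))
```

Under these readings the only negative gap is 2Wiki before tuning, and the post-tuning lead of the 7B model ranges from under one point on Bamboogle to 20 points on AIME24.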

### Interpretation

The data indicate that the tuning process applied to the "AgentFlow" models (Qwen-2.5-3B-Instruct and Qwen-2.5-7B-Instruct) improves their accuracy across a diverse set of tasks or datasets. Accuracy rises from "Before tuning" to "After tuning" in all eight model-dataset pairs, with gains ranging from 6.7 to 23.3 percentage points.

Furthermore, the comparison between the 3B and 7B parameter models highlights the general advantage of larger models. The Qwen-2.5-7B-Instruct model not only tends to perform better out-of-the-box (before tuning) but also achieves higher absolute accuracies after tuning across all evaluated datasets. This indicates that increased model capacity, combined with effective tuning, leads to superior performance.

The varying degrees of improvement across datasets are also insightful. Datasets like GAIA and AIME24, which start with lower baseline accuracies, often see the most dramatic relative gains from tuning, suggesting that tuning can be particularly impactful for tasks where the base model struggles. For instance, the Qwen-2.5-7B-Instruct model's accuracy on AIME24 jumps from 16.7% to 40.0%, indicating that tuning significantly enhances its capability to handle this specific task. Conversely, for datasets like Bamboogle and 2Wiki, where baseline performance is already relatively high, tuning still provides a meaningful boost, although a smaller one in relative terms.
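The distinction between absolute and relative gains can be made concrete with a short calculation. This is a sketch under the assumption that the chart values are exact; `relative_gain_pct` and the `RESULTS` table are illustrative names only.

```python
# (model, dataset) -> (before tuning %, after tuning %), as read off the chart.
RESULTS = {
    ("3B", "Bamboogle"): (53.6, 68.8),
    ("3B", "2Wiki"): (63.0, 72.3),
    ("3B", "GAIA"): (14.3, 29.1),
    ("3B", "AIME24"): (13.3, 20.0),
    ("7B", "Bamboogle"): (58.4, 69.6),
    ("7B", "2Wiki"): (60.0, 77.2),
    ("7B", "GAIA"): (17.2, 33.1),
    ("7B", "AIME24"): (16.7, 40.0),
}


def relative_gain_pct(before, after):
    """Relative improvement over the baseline, in percent of the baseline."""
    return (after - before) / before * 100.0


if __name__ == "__main__":
    for (model, dataset), (before, after) in RESULTS.items():
        gain = relative_gain_pct(before, after)
        # A value above 100 means accuracy more than doubled.
        print(f"{model} {dataset}: {gain:.1f}% relative gain")
```

A relative gain above 100% corresponds to accuracy more than doubling, which under these readings holds for GAIA with the 3B model and AIME24 with the 7B model, but not for GAIA with the 7B model.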

In essence, the charts demonstrate that tuning is a critical step for optimizing AgentFlow's performance, and that leveraging larger Qwen models (like the 7B variant) further amplifies these benefits, leading to higher overall accuracy across various benchmarks. The results validate the efficacy of the tuning methodology and the scalability of performance with model size within the AgentFlow framework.