## Heatmap: Mean Pass Rate vs. Mean Number of Tokens Generated

### Overview

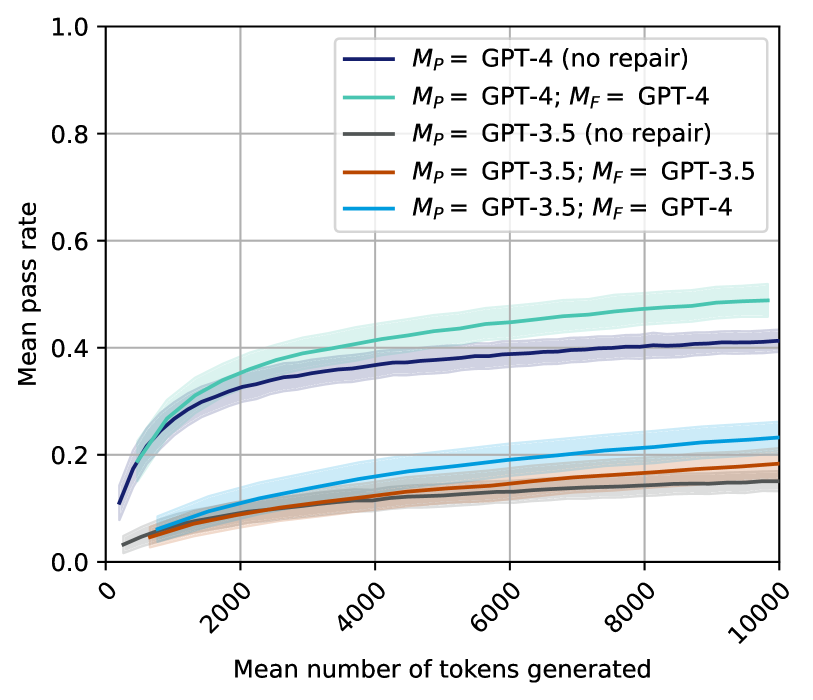

The heatmap illustrates how the mean pass rate of different model configurations changes as the mean number of tokens generated increases. The configurations use GPT-4 and GPT-3.5, each with and without a repair mechanism, plus a mixed configuration that pairs GPT-3.5 with GPT-4.

### Components/Axes

- **X-axis**: Mean number of tokens generated, ranging from 0 to 10,000.

- **Y-axis**: Mean pass rate, ranging from 0.0 to 1.0.

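- **Legend** (entries listing only M_p use no repair; entries listing M_p; M_f use repair and name the models used for M_p and M_f, respectively):

  - **M_p**: GPT-4 (no repair)

  - **M_p; M_f**: GPT-4; GPT-4

  - **M_p**: GPT-3.5 (no repair)

  - **M_p; M_f**: GPT-3.5; GPT-3.5

  - **M_p; M_f**: GPT-3.5; GPT-4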
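To make the two axes concrete, here is a minimal sketch of how a single point on such a curve could be computed from per-attempt records. It rests on assumptions the figure does not state: the `Attempt` record, its fields, and `curve_point` are hypothetical names, and the token count of an attempt is assumed to include any tokens spent on repair.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Attempt:
    """One sampled attempt at a task (hypothetical record, for illustration only)."""
    task_id: str
    tokens_generated: int  # assumed to include any tokens spent on repair
    passed: bool           # whether the final output passed the tests


def curve_point(attempts: list[Attempt]) -> tuple[float, float]:
    """Return (mean tokens generated, mean pass rate) for one configuration."""
    if not attempts:
        raise ValueError("need at least one attempt")
    mean_tokens = float(mean(a.tokens_generated for a in attempts))
    # Pass rate is the fraction of attempts whose output passed.
    pass_rate = float(mean(1.0 if a.passed else 0.0 for a in attempts))
    return mean_tokens, pass_rate
```

Calling `curve_point` once per configuration and budget yields the (x, y) pairs plotted for each legend entry.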

### Detailed Analysis

The heatmap shows that the mean pass rate generally increases with the mean number of tokens generated, although the rate of increase varies between configurations. The GPT-4 configurations have the highest mean pass rates, with repair (M_p; M_f) giving a slight improvement over GPT-4 without repair. GPT-3.5 without repair and GPT-3.5 with repair (M_p; M_f) have lower pass rates than GPT-4. GPT-3.5 with repair is slightly higher than GPT-3.5 without repair at every token count shown.
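Comparing configurations "at every token count" means evaluating them at the same token budget, but each configuration's points generally fall at different mean token counts. One simple approach, assumed here rather than taken from the figure, is to linearly interpolate each curve at a shared budget. `pass_rate_at` is a hypothetical helper, and the curves are assumed to be sorted by token count.

```python
from bisect import bisect_left


def pass_rate_at(budget: float, curve: list[tuple[float, float]]) -> float:
    """Linearly interpolate the pass rate of one configuration at a token budget.

    curve: (mean_tokens, mean_pass_rate) points sorted by mean_tokens ascending.
    Budgets outside the sampled range are clamped to the nearest endpoint
    instead of being extrapolated.
    """
    if not curve:
        raise ValueError("curve must be non-empty")
    xs = [x for x, _ in curve]
    if budget <= xs[0]:
        return curve[0][1]
    if budget >= xs[-1]:
        return curve[-1][1]
    # Here xs[i - 1] < budget <= xs[i], so x1 > x0 and the division is safe.
    i = bisect_left(xs, budget)
    (x0, y0), (x1, y1) = curve[i - 1], curve[i]
    return y0 + (y1 - y0) * (budget - x0) / (x1 - x0)
```

For example, `pass_rate_at(b, repair_curve) - pass_rate_at(b, no_repair_curve)` gives the gain from repair at budget `b`. Clamping keeps the comparison within the range each curve actually covers.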

### Key Observations

- The GPT-4 configurations consistently have the highest mean pass rates.

- GPT-4 with repair (M_p; M_f) shows a slight improvement in pass rate compared to GPT-4 (no repair).

- GPT-3.5 (no repair) and GPT-3.5 with repair (M_p; M_f) have lower pass rates than the GPT-4 configurations.

- The pass rate for GPT-3.5 with repair (M_p; M_f) is marginally higher than GPT-3.5 (no repair).

### Interpretation

The data suggest that the mean pass rate generally improves as more tokens are generated. Configurations with repair (M_p; M_f) tend to reach higher pass rates than the corresponding configurations without repair (M_p only), which may indicate that repair improves the quality of the generated outputs. However, the improvement is relatively small, which could mean that the base models already achieve much of their attainable pass rate without repair.