# Technical Document Extraction: AI Model Performance Comparison

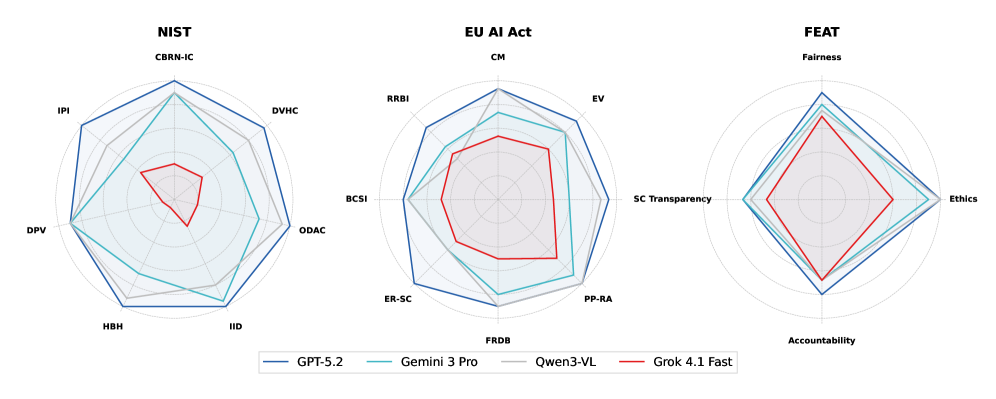

This document extracts the contents of three radar (spider) charts that compare four hypothetical AI models across three regulatory and ethical frameworks: NIST, the EU AI Act, and FEAT.

## 1. Metadata and Legend

The image contains three distinct radar charts arranged horizontally. A shared legend is located at the bottom center of the image.

### Legend Identification

| Color | Model Name |
| :--- | :--- |
| **Dark Blue** | GPT-5.2 |
| **Teal/Light Blue** | Gemini 3 Pro |
| **Grey** | Qwen3-VL |
| **Red** | Grok 4.1 Fast |

---

## 2. Chart 1: NIST Framework

This chart evaluates models based on NIST (National Institute of Standards and Technology) criteria. It features a heptagonal (7-axis) structure.

### Axes (Categories)

1. **CBRN-IC** (Top)

2. **DVHC** (Top-Right)

3. **ODAC** (Right)

4. **IID** (Bottom-Right)

5. **HBH** (Bottom-Left)

6. **DPV** (Left)

7. **IPI** (Top-Left)
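
The axis positions listed above are consistent with seven axes spaced evenly around the center, starting at the top and proceeding clockwise. The following is a minimal sketch under that assumption; the even spacing and the helper names `axis_angles` and `axis_endpoint` are illustrative and are not stated in the chart itself.

```python
import math


def axis_angles(n_axes: int) -> list[float]:
    """Return each axis angle in degrees, measured clockwise from the top."""
    step = 360.0 / n_axes
    return [i * step for i in range(n_axes)]


def axis_endpoint(angle_deg: float, radius: float = 1.0) -> tuple[float, float]:
    """Convert a clockwise-from-top angle to (x, y), with y pointing up."""
    theta = math.radians(angle_deg)
    return (radius * math.sin(theta), radius * math.cos(theta))


# Axis labels in the order listed for the NIST chart.
NIST_AXES = ["CBRN-IC", "DVHC", "ODAC", "IID", "HBH", "DPV", "IPI"]

for label, angle in zip(NIST_AXES, axis_angles(len(NIST_AXES))):
    x, y = axis_endpoint(angle)
    print(f"{label:8s} {angle:6.1f} deg  x={x:+.2f}  y={y:+.2f}")
```

Under this assumption, the sign of `x` and `y` for each label can be compared against the position noted in the list (for example, negative `x` and negative `y` for a bottom-left axis).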

### Model Performance Trends

* **GPT-5.2 (Dark Blue):** Shows the highest overall coverage, forming the outer perimeter of the web. It peaks at CBRN-IC and IID.

* **Gemini 3 Pro (Teal):** Follows a similar shape to GPT-5.2 but is nested inside it on every axis, scoring slightly lower throughout. The gap is smallest on DPV, where it nearly matches the leader.

* **Qwen3-VL (Grey):** Exhibits a more irregular shape. It performs strongly in HBH and DPV but lags significantly in CBRN-IC and DVHC.

* **Grok 4.1 Fast (Red):** Forms the innermost shape, indicating the lowest relative performance across all NIST metrics. It is particularly weak in IPI and CBRN-IC.
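
Terms such as "outer perimeter", "nested inside", and "innermost shape" can be made precise once per-axis scores are available. The sketch below shows one possible way to do that, assuming equally spaced axes. This document records no numeric scores, so the radii used here are placeholders and not readings from the chart; the function names `radar_area` and `is_nested` are likewise illustrative.

```python
import math


def radar_area(radii: list[float]) -> float:
    """Area of a radar polygon whose axes are equally spaced around the center.

    Each pair of neighboring axes spans a triangle of area
    0.5 * r_i * r_next * sin(2*pi/n); the polygon is the sum of those triangles.
    """
    n = len(radii)
    wedge = math.sin(2 * math.pi / n)
    return 0.5 * wedge * sum(radii[i] * radii[(i + 1) % n] for i in range(n))


def is_nested(inner: list[float], outer: list[float]) -> bool:
    """True when `inner` does not exceed `outer` on any axis."""
    return all(a <= b for a, b in zip(inner, outer))


# Placeholder radii only: NOT values extracted from the image.
outer_example = [1.0] * 7
inner_example = [0.5] * 7

print(is_nested(inner_example, outer_example))
print(radar_area(outer_example) / radar_area(inner_example))
```

Because the area scales with the product of neighboring radii, it depends on axis ordering as well as on the scores, so it is best treated as a rough coverage indicator and not as a ranking metric.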

---

## 3. Chart 2: EU AI Act

This chart evaluates compliance or alignment with the European Union AI Act. It features a heptagonal (7-axis) structure.

### Axes (Categories)

1. **CM** (Top)

2. **EV** (Top-Right)

3. **PP-RA** (Bottom-Right)

4. **FRDB** (Bottom)

5. **ER-SC** (Bottom-Left)

6. **BCSI** (Left)

7. **RRBI** (Top-Left)

### Model Performance Trends

* **GPT-5.2 (Dark Blue):** Dominates the outer bounds, particularly in CM, EV, and ER-SC.

* **Qwen3-VL (Grey):** Shows high performance in PP-RA and BCSI, where it matches or exceeds Gemini 3 Pro.

* **Gemini 3 Pro (Teal):** Maintains a balanced mid-tier position, showing consistent but non-peak performance across most categories.

* **Grok 4.1 Fast (Red):** Remains the innermost series, though its performance in FRDB and CM is stronger, relative to the other models, than its performance in the NIST chart.

---

## 4. Chart 3: FEAT Framework

This chart evaluates models based on FEAT (Fairness, Ethics, Accountability, and Transparency) principles. It features a diamond (4-axis) structure.

### Axes (Categories)

1. **Fairness** (Top)

2. **Ethics** (Right)

3. **Accountability** (Bottom)

4. **SC Transparency** (Left)

### Model Performance Trends

* **GPT-5.2 (Dark Blue):** Leads in Ethics and Accountability, with its shape stretched sharply downward toward the Accountability axis.

* **Gemini 3 Pro (Teal):** Closely follows GPT-5.2 in Fairness and SC Transparency, showing a very balanced diamond shape.

* **Qwen3-VL (Grey):** Performance is closely aligned with Gemini 3 Pro, though slightly lower in Ethics.

* **Grok 4.1 Fast (Red):** Has the smallest footprint, skewed toward the Fairness and Accountability axes, with significantly lower scores in Ethics and SC Transparency.

---

## 5. Summary of Comparative Data

Across all three frameworks (NIST, EU AI Act, FEAT), a consistent hierarchy is observed:

1. **GPT-5.2** is the top-performing model across almost all measured dimensions.

2. **Gemini 3 Pro** and **Qwen3-VL** occupy the middle tier, with Qwen3-VL showing more specialized strengths in specific NIST and EU categories, while Gemini 3 Pro is more balanced.

3. **Grok 4.1 Fast** is consistently the lowest-performing model in this comparison.
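
For downstream use, the legend, axis labels, and qualitative tiers extracted in this document could be held in a single structured record. The following is a minimal sketch; the `RadarChart` class and the `LEGEND`, `CHARTS`, and `TIERS` names are hypothetical, and only labels that appear in this document are included (no numeric scores are recorded).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RadarChart:
    """One radar chart: its title and its axis labels in listed order."""
    title: str
    axes: tuple[str, ...]


# Legend colors mapped to model names.
LEGEND = {
    "Dark Blue": "GPT-5.2",
    "Teal/Light Blue": "Gemini 3 Pro",
    "Grey": "Qwen3-VL",
    "Red": "Grok 4.1 Fast",
}

CHARTS = (
    RadarChart("NIST Framework", ("CBRN-IC", "DVHC", "ODAC", "IID", "HBH", "DPV", "IPI")),
    RadarChart("EU AI Act", ("CM", "EV", "PP-RA", "FRDB", "ER-SC", "BCSI", "RRBI")),
    RadarChart("FEAT Framework", ("Fairness", "Ethics", "Accountability", "SC Transparency")),
)

# Qualitative tiers from the summary, best first; models within a tier are unordered.
TIERS = (
    ("GPT-5.2",),
    ("Gemini 3 Pro", "Qwen3-VL"),
    ("Grok 4.1 Fast",),
)

for chart in CHARTS:
    print(f"{chart.title}: {len(chart.axes)} axes")
```

Keeping the tiers as an ordered tuple of groups avoids implying an ordering between Gemini 3 Pro and Qwen3-VL that the charts do not establish.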