## Line Chart: Accuracy vs. Step for Three Reinforcement Learning Methods

### Overview

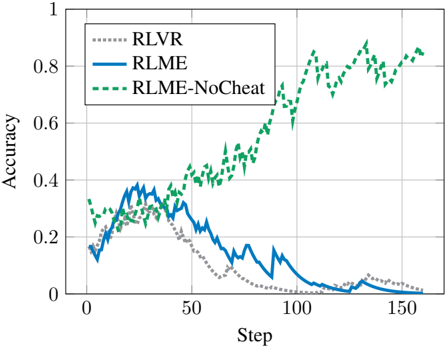

The image is a line chart comparing the performance of three reinforcement learning methods over 150 training steps. The chart plots "Accuracy" on the y-axis against "Step" on the x-axis. One method (RLME-NoCheat) shows a strong positive trend, while the other two (RLVR and RLME) show an initial rise followed by a decline to near-zero accuracy.

### Components/Axes

* **Chart Type:** Line chart with three data series.

* **X-Axis:**

    * **Label:** "Step"

    * **Scale:** Linear, from 0 to 150.

    * **Major Tick Marks:** 0, 50, 100, 150.

* **Y-Axis:**

    * **Label:** "Accuracy"

    * **Scale:** Linear, from 0 to 1.

    * **Major Tick Marks:** 0, 0.2, 0.4, 0.6, 0.8, 1.0.

* **Legend:**

    * **Position:** Top-left corner of the plot area.

    * **Entries:**

        1. **RLVR:** Represented by a gray dotted line (`......`).

        2. **RLME:** Represented by a solid blue line (`——`).

        3. **RLME-NoCheat:** Represented by a green dashed line (`- - -`).

### Detailed Analysis

**1. RLVR (Gray Dotted Line):**

* **Trend:** Starts at ~0.2 accuracy, rises to a peak of ~0.3 around step 25, then begins a steady, monotonic decline.

* **Data Points (Approximate):**

    * Step 0: ~0.20

    * Step 25 (Peak): ~0.30

    * Step 50: ~0.25

    * Step 75: ~0.10

    * Step 100: ~0.02

    * Step 150: ~0.01 (near zero)

**2. RLME (Solid Blue Line):**

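* **Trend:** Starts lower than RLVR at ~0.15, rises more sharply to a higher peak of ~0.4 around step 25-30, then declines. It falls further from its peak than RLVR does (about 0.40 versus about 0.29 in total). Based on the approximate readings below, it stays roughly 0.03-0.05 above RLVR from step 50 through step 100 and ends at or just below RLVR by step 150.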

* **Data Points (Approximate):**

    * Step 0: ~0.15

    * Step 30 (Peak): ~0.40

    * Step 50: ~0.30

    * Step 75: ~0.15

    * Step 100: ~0.05

    * Step 125: ~0.02

    * Step 150: ~0.00

**3. RLME-NoCheat (Green Dashed Line):**

* **Trend:** Starts the highest at ~0.3. The line is noisy, with high short-term variance, but shows a clear and strong upward trend throughout the training period. RLME briefly exceeds it around RLME's step-30 peak; after approximately step 40, RLME-NoCheat consistently outperforms the other two methods.

* **Data Points (Approximate):**

    * Step 0: ~0.30

    * Step 25: ~0.35

    * Step 50: ~0.45

    * Step 75: ~0.60

    * Step 100: ~0.75

    * Step 125: ~0.80

    * Step 150: ~0.85
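The approximate readings listed for the three series can be collected into a small data structure and summarized programmatically. The following is a minimal sketch, not part of the original figure: the names `READINGS`, `summarize`, and `SUMMARY` are hypothetical, and the values are the visual estimates given above, so any derived numbers carry the same reading error.

```python
# Approximate (step, accuracy) readings transcribed from the lists above.
# These are visual estimates from the chart, not exact logged values.
READINGS = {
    "RLVR": [(0, 0.20), (25, 0.30), (50, 0.25), (75, 0.10),
             (100, 0.02), (150, 0.01)],
    "RLME": [(0, 0.15), (30, 0.40), (50, 0.30), (75, 0.15),
             (100, 0.05), (125, 0.02), (150, 0.00)],
    "RLME-NoCheat": [(0, 0.30), (25, 0.35), (50, 0.45), (75, 0.60),
                     (100, 0.75), (125, 0.80), (150, 0.85)],
}


def summarize(points):
    """Return the start, peak, and final readings plus the net change."""
    peak_step, peak_acc = max(points, key=lambda p: p[1])
    start_acc = points[0][1]
    final_acc = points[-1][1]
    return {
        "start": start_acc,
        "peak_step": peak_step,
        "peak": peak_acc,
        "final": final_acc,
        # Rounded to two decimals to match the precision of the readings.
        "net_change": round(final_acc - start_acc, 2),
    }


SUMMARY = {name: summarize(points) for name, points in READINGS.items()}

if __name__ == "__main__":
    for name, stats in SUMMARY.items():
        print(name, stats)
```

A series whose `peak_step` is the last recorded step is still improving at the end of training, while an early `peak_step` combined with a negative `net_change` marks a rise-then-collapse pattern.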

### Key Observations

1. **Divergent Paths:** The three methods, which start within 0.15 accuracy points of each other, diverge dramatically. RLME-NoCheat improves, while RLVR and RLME degrade.

2. **Peak Performance Timing:** Both RLVR and RLME achieve their peak accuracy early in training (steps 25-30) before deteriorating.

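3. **Crossover Point:** RLME and RLVR track each other closely during the decline. Based on the approximate readings, RLME stays about 0.03-0.05 above RLVR from step 50 to step 100 and only drops to or below RLVR's level near the end of training, so any late crossover is small relative to the reading error.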

4. **Noise vs. Signal:** The RLME-NoCheat line is significantly noisier (has more short-term fluctuation) than the other two, yet its overall upward signal is unambiguous.

5. **Final State:** By step 150, RLME-NoCheat reaches ~0.85 accuracy, while RLVR and RLME have effectively failed, converging to ~0.
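Observations about which method leads at which step can be checked against the approximate readings by interpolating each series and looking for sign changes in the difference between two series. The sketch below assumes piecewise-linear behavior between the listed readings, which ignores the noise visible in the chart; the helper names `interpolate` and `lead_changes` are hypothetical, and the results are only as precise as the visual estimates they are built on.

```python
# Approximate (step, accuracy) readings transcribed from the Detailed Analysis.
RLVR = [(0, 0.20), (25, 0.30), (50, 0.25), (75, 0.10), (100, 0.02), (150, 0.01)]
RLME = [(0, 0.15), (30, 0.40), (50, 0.30), (75, 0.15), (100, 0.05),
        (125, 0.02), (150, 0.00)]
NOCHEAT = [(0, 0.30), (25, 0.35), (50, 0.45), (75, 0.60), (100, 0.75),
           (125, 0.80), (150, 0.85)]


def interpolate(points, step):
    """Linearly interpolate accuracy at `step` from sorted (step, accuracy) pairs."""
    for (s0, a0), (s1, a1) in zip(points, points[1:]):
        if s0 <= step <= s1:
            return a0 + (a1 - a0) * (step - s0) / (s1 - s0)
    raise ValueError(f"step {step} is outside the recorded range")


def lead_changes(a, b, start=0, stop=150, tol=1e-9):
    """Return the integer steps at which the sign of (a - b) flips.

    Differences smaller than `tol` are treated as ties and skipped, so a
    flip is recorded at the first step where the new leader is clearly ahead.
    """
    changes = []
    prev_sign = 0
    for step in range(start, stop + 1):
        diff = interpolate(a, step) - interpolate(b, step)
        sign = (diff > tol) - (diff < -tol)
        if sign == 0:
            continue
        if prev_sign != 0 and sign != prev_sign:
            changes.append(step)
        prev_sign = sign
    return changes


if __name__ == "__main__":
    print("RLME-NoCheat vs RLME:", lead_changes(NOCHEAT, RLME))
    print("RLME-NoCheat vs RLVR:", lead_changes(NOCHEAT, RLVR))
    print("RLME vs RLVR:", lead_changes(RLME, RLVR))
```

Because the readings are spaced 25 to 50 steps apart, a crossover step found this way can differ noticeably from one read directly off the chart; an empty list means one series leads the other over the whole interpolated range.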

### Interpretation

This chart likely illustrates a critical finding in a reinforcement learning study. The "NoCheat" variant of the RLME method appears to be robust to a failure mode that cripples the standard RLME and RLVR methods over time.

* **What the data suggests:** The standard methods (RLVR, RLME) may be exploiting a shortcut or "cheating" strategy that provides early rewards but leads to catastrophic forgetting or policy collapse as training progresses. The "NoCheat" method is presumably designed to prevent this exploitation, forcing the agent to learn a more generalizable and stable policy, resulting in sustained improvement.

* **Relationship between elements:** Plotting all three methods on the same axes makes the size of the gap easy to read: by step 150, RLME-NoCheat sits at ~0.85 while RLVR and RLME are near 0. The early peaks of RLVR and RLME, set against the sustained rise of RLME-NoCheat, contrast short-term gain with long-term learning.

* **Notable anomaly:** The most striking feature is the complete collapse of the two baseline methods. This is not merely slower learning but an active degradation of performance, a failure that the "NoCheat" variant appears to avoid in this run.

* **Underlying message:** The chart makes a strong case for the algorithmic modification introduced in "RLME-NoCheat." It suggests that, without this modification, training on the given task is unstable, although the chart alone does not establish the cause. The noise in the green line may indicate a more challenging but ultimately more productive learning signal.