## Horizontal Bar Chart: AI Model Performance Across Capabilities

### Overview

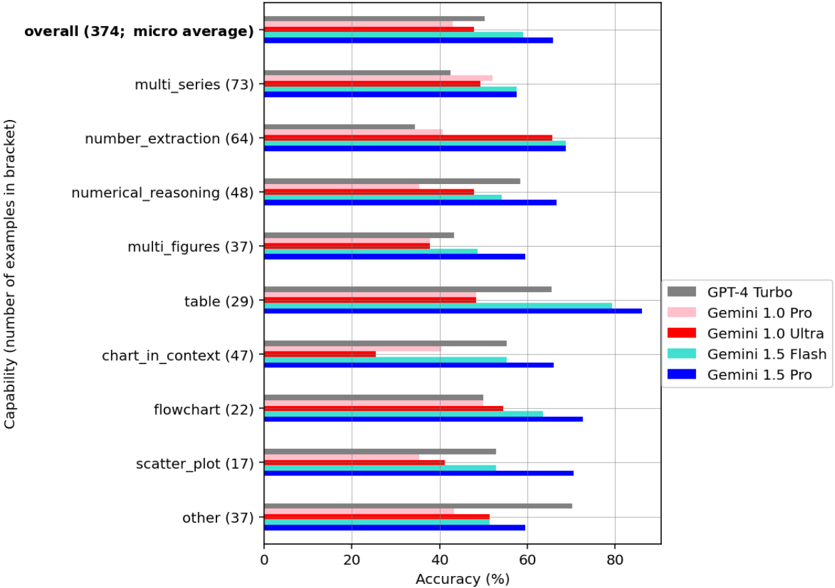

The chart compares the accuracy of five AI models (GPT-4 Turbo, Gemini 1.0 Pro, Gemini 1.0 Ultra, Gemini 1.5 Flash, Gemini 1.5 Pro) across nine capabilities plus an "overall" row. Each capability label carries its number of examples in parentheses, and the nine counts sum to the 374 examples of the "overall" row, which is a micro-average (pooled over all examples) across capabilities.
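A micro-average weights each capability by its example count, so large categories such as "multi_series" (73) move the overall row more than small ones such as "scatter_plot" (17). The minimal Python sketch below illustrates that weighting under stated assumptions: the counts come from the chart labels, while the function names and the `toy` accuracy profile are hypothetical and used only to contrast micro- and macro-averaging.

```python
# Example counts per capability, copied from the chart's y-axis labels.
COUNTS = {
    "multi_series": 73,
    "number_extraction": 64,
    "numerical_reasoning": 48,
    "multi_figures": 37,
    "table": 29,
    "chart_in_context": 47,
    "flowchart": 22,
    "scatter_plot": 17,
    "other": 37,
}


def micro_average(accuracy, counts):
    """Pool every example: total correct / total examples, in percent.

    Equivalent to a count-weighted mean of per-capability accuracies.
    """
    total = sum(counts.values())
    correct = sum(accuracy[cap] / 100.0 * n for cap, n in counts.items())
    return 100.0 * correct / total


def macro_average(accuracy):
    """Unweighted mean of per-capability accuracies, in percent."""
    return sum(accuracy.values()) / len(accuracy)


# HYPOTHETICAL model: perfect on multi_series, wrong on everything else.
toy = {cap: 0.0 for cap in COUNTS}
toy["multi_series"] = 100.0

print(sum(COUNTS.values()))                  # 374
print(round(micro_average(toy, COUNTS), 1))  # 19.5  (73 / 374)
print(round(macro_average(toy), 1))          # 11.1  (100 / 9)
```

The same per-capability accuracies give different summaries: the micro-average rewards the 73-example category about four times as much as the 17-example one, whereas the macro-average treats all nine equally.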

### Components/Axes

- **Y-axis**: Capabilities (e.g., "overall (374; micro average)", "multi_series (73)", "number_extraction (64)") with example counts in brackets.

- **X-axis**: Accuracy (%) from 0 to 80.

- **Legend**:
  - Gray: GPT-4 Turbo
  - Pink: Gemini 1.0 Pro
  - Red: Gemini 1.0 Ultra
  - Cyan: Gemini 1.5 Flash
  - Blue: Gemini 1.5 Pro

- **Placement**: Legend is positioned on the right side of the chart.

### Detailed Analysis

1. **overall (374; micro average)**:
   - Gemini 1.5 Pro (blue): ~70%
   - Gemini 1.5 Flash (cyan): ~65%
   - GPT-4 Turbo (gray): ~60%
   - Gemini 1.0 Pro (pink): ~55%
   - Gemini 1.0 Ultra (red): ~50%

2. **multi_series (73)**:
   - Gemini 1.5 Pro: ~60%
   - Gemini 1.5 Flash: ~60%
   - Gemini 1.0 Pro: ~55%
   - Gemini 1.0 Ultra: ~50%
   - GPT-4 Turbo: ~45%

3. **number_extraction (64)**:
   - Gemini 1.0 Ultra (red): ~70% (highest)
   - Gemini 1.5 Pro: ~65%
   - Gemini 1.5 Flash: ~65%
   - Gemini 1.0 Pro: ~50%
   - GPT-4 Turbo: ~40%

4. **numerical_reasoning (48)**:
   - Gemini 1.5 Pro: ~70%
   - GPT-4 Turbo: ~60%
   - Gemini 1.5 Flash: ~55%
   - Gemini 1.0 Ultra: ~50%
   - Gemini 1.0 Pro: ~45%

5. **multi_figures (37)**:
   - Gemini 1.5 Pro: ~60%
   - Gemini 1.5 Flash: ~55%
   - GPT-4 Turbo: ~45%
   - Gemini 1.0 Pro: ~45%
   - Gemini 1.0 Ultra: ~40%

6. **table (29)**:
   - Gemini 1.5 Pro: ~85% (highest)
   - Gemini 1.5 Flash: ~80%
   - GPT-4 Turbo: ~65%
   - Gemini 1.0 Ultra: ~50%
   - Gemini 1.0 Pro: ~50%

7. **chart_in_context (47)**:
   - Gemini 1.5 Pro: ~65%
   - GPT-4 Turbo: ~60%
   - Gemini 1.5 Flash: ~55%
   - Gemini 1.0 Pro: ~40%
   - Gemini 1.0 Ultra: ~30%

8. **flowchart (22)**:
   - Gemini 1.5 Pro: ~70%
   - Gemini 1.5 Flash: ~65%
   - Gemini 1.0 Ultra: ~55%
   - GPT-4 Turbo: ~50%
   - Gemini 1.0 Pro: ~50%

9. **scatter_plot (17)**:
   - Gemini 1.5 Pro: ~70%
   - GPT-4 Turbo: ~60%
   - Gemini 1.5 Flash: ~55%
   - Gemini 1.0 Ultra: ~40%
   - Gemini 1.0 Pro: ~40%

10. **other (37)**:
    - GPT-4 Turbo (gray): ~70% (highest)
    - Gemini 1.5 Pro: ~60%
    - Gemini 1.5 Flash: ~55%
    - Gemini 1.0 Ultra: ~50%
    - Gemini 1.0 Pro: ~45%
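Because the "overall" row is a count-weighted average of the nine capability rows, the per-capability readings can be cross-checked against it. The Python sketch below does that; it assumes the approximate readings listed above are the true values, and the variable and function names are illustrative only.

```python
CAPABILITIES = [
    "multi_series", "number_extraction", "numerical_reasoning",
    "multi_figures", "table", "chart_in_context",
    "flowchart", "scatter_plot", "other",
]
COUNTS = [73, 64, 48, 37, 29, 47, 22, 17, 37]  # same order as CAPABILITIES

# Approximate per-capability readings (%) from the list above.
READINGS = {
    "GPT-4 Turbo":      [45, 40, 60, 45, 65, 60, 50, 60, 70],
    "Gemini 1.0 Pro":   [55, 50, 45, 45, 50, 40, 50, 40, 45],
    "Gemini 1.0 Ultra": [50, 70, 50, 40, 50, 30, 55, 40, 50],
    "Gemini 1.5 Flash": [60, 65, 55, 55, 80, 55, 65, 55, 55],
    "Gemini 1.5 Pro":   [60, 65, 70, 60, 85, 65, 70, 70, 60],
}

# Approximate readings (%) of the "overall (374; micro average)" row.
OVERALL_READ = {
    "GPT-4 Turbo": 60, "Gemini 1.0 Pro": 55, "Gemini 1.0 Ultra": 50,
    "Gemini 1.5 Flash": 65, "Gemini 1.5 Pro": 70,
}


def micro_average(scores, counts):
    """Count-weighted mean of per-capability accuracies, in percent."""
    return sum(s * n for s, n in zip(scores, counts)) / sum(counts)


recomputed = {model: micro_average(s, COUNTS) for model, s in READINGS.items()}

for model, value in recomputed.items():
    print(f"{model:17} recomputed {value:5.1f}%  vs  overall row ~{OVERALL_READ[model]}%")
```

With these readings the recomputed averages come out at about 65.7% (Gemini 1.5 Pro), 60.2% (Gemini 1.5 Flash), 53.0% (GPT-4 Turbo), 49.8% (Gemini 1.0 Ultra), and 47.6% (Gemini 1.0 Pro). Four of the five fall roughly 4 to 7 points below the overall-row readings, and Gemini 1.0 Pro and Ultra swap places, so the eyeballed per-capability values are best treated as rough estimates.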

### Key Observations

- **Gemini 1.5 Pro** leads or ties in seven of the nine capabilities and in the overall row, with its highest reading in "table" (~85%) and ~70% in "numerical_reasoning", "flowchart", and "scatter_plot".

- **Gemini 1.0 Ultra** leads only in "number_extraction" (~70%). In "flowchart" (~55%) it trails both Gemini 1.5 models but edges out GPT-4 Turbo and Gemini 1.0 Pro (~50%).

- **GPT-4 Turbo** leads only in "other" (~70%) and places second in "numerical_reasoning", "chart_in_context", and "scatter_plot" (~60% each), which may point to strength on less structured tasks.

- **Gemini 1.0 Pro** trails both Gemini 1.5 models in every capability.

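- **Gemini 1.5 Flash** sits in the middle tier: it never beats Gemini 1.5 Pro (tying it in "multi_series" and "number_extraction") and outscores both Gemini 1.0 models everywhere except "number_extraction", where Gemini 1.0 Ultra is ahead.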
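Which model leads each capability can be read off mechanically rather than by eye. The Python sketch below finds the top model(s) per capability, keeping ties; it assumes the approximate readings from the Detailed Analysis are exact, and the names `READINGS` and `leaders` are illustrative.

```python
CAPABILITIES = [
    "multi_series", "number_extraction", "numerical_reasoning",
    "multi_figures", "table", "chart_in_context",
    "flowchart", "scatter_plot", "other",
]

# Approximate readings (%) per model, in CAPABILITIES order.
READINGS = {
    "GPT-4 Turbo":      [45, 40, 60, 45, 65, 60, 50, 60, 70],
    "Gemini 1.0 Pro":   [55, 50, 45, 45, 50, 40, 50, 40, 45],
    "Gemini 1.0 Ultra": [50, 70, 50, 40, 50, 30, 55, 40, 50],
    "Gemini 1.5 Flash": [60, 65, 55, 55, 80, 55, 65, 55, 55],
    "Gemini 1.5 Pro":   [60, 65, 70, 60, 85, 65, 70, 70, 60],
}


def leaders(readings, capabilities):
    """Map each capability to the model(s) with the highest reading, keeping ties."""
    result = {}
    for i, cap in enumerate(capabilities):
        best = max(scores[i] for scores in readings.values())
        result[cap] = [m for m, scores in readings.items() if scores[i] == best]
    return result


lead = leaders(READINGS, CAPABILITIES)
for cap, models in lead.items():
    print(f"{cap:20} {', '.join(models)}")

pro_count = sum("Gemini 1.5 Pro" in models for models in lead.values())
print(pro_count)  # 7
```

Keeping ties matters here: "multi_series" has two leaders (both Gemini 1.5 models at ~60%), so a plain `max` over models would hide that.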

### Interpretation

The data suggests that **Gemini 1.5 Pro** is the most versatile and accurate model across capabilities, with its clearest strength in structured data tasks (e.g., tables). **Gemini 1.0 Ultra** leads in numerical extraction, while **GPT-4 Turbo** performs well in diverse, less-defined tasks ("other"). The gaps between the Gemini 1.0 and 1.5 models are consistent with generational improvements, although the chart alone cannot attribute them to specific architectural changes. Gemini 1.0 Ultra's lead in "number_extraction" may indicate specialized training on numerical data, but its ~5-point margin over the Gemini 1.5 models corresponds to only about three of the 64 examples. Likewise, Gemini 1.5 Pro's top score in "table" suggests strong structured-data reasoning but rests on just 29 examples. Users prioritizing structured data processing might favor Gemini 1.5 Pro, while those needing numerical extraction could consider Gemini 1.0 Ultra.
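Several capabilities have few examples, so small gaps between models may not be meaningful. The Python sketch below gives a rough sense of scale: how many percentage points one example is worth, and a normal-approximation binomial standard error. It assumes independent examples and treats the approximate readings as exact; it is a back-of-the-envelope heuristic, not a significance test reported with the chart, and the function names are illustrative.

```python
import math

# Example counts for some of the smaller or closely contested capabilities (from the chart labels).
COUNTS = {"number_extraction": 64, "table": 29, "flowchart": 22, "scatter_plot": 17}


def points_per_example(n):
    """Accuracy change, in percentage points, from flipping one of n examples."""
    return 100.0 / n


def binomial_se_points(p_percent, n):
    """Normal-approximation standard error of an accuracy, in percentage points.

    Assumes the n examples are independent Bernoulli trials.
    """
    p = p_percent / 100.0
    return 100.0 * math.sqrt(p * (1.0 - p) / n)


for cap, n in COUNTS.items():
    print(f"{cap:18} n={n:3d}  one example = {points_per_example(n):.1f} pts")

# Gemini 1.0 Ultra (~70%) vs Gemini 1.5 Pro (~65%) on number_extraction.
gap_points = 70 - 65
gap_examples = gap_points / points_per_example(64)
se = binomial_se_points(70, 64)
print(f"gap = {gap_points} pts = {gap_examples:.1f} examples; SE ~ {se:.1f} pts")
```

Under these assumptions the ~5-point "number_extraction" gap is about 3.2 examples, smaller than the ~5.7-point standard error of either accuracy on its own, and on "scatter_plot" a single example moves accuracy by about 5.9 points.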