TECHNICAL ASSET FINGERPRINT

52b6bb2b09e75faadd7cea72


EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

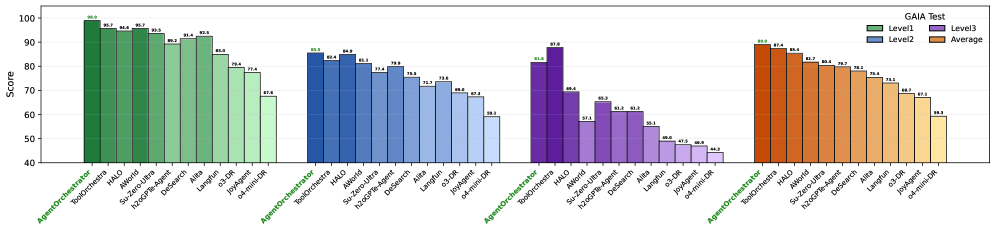

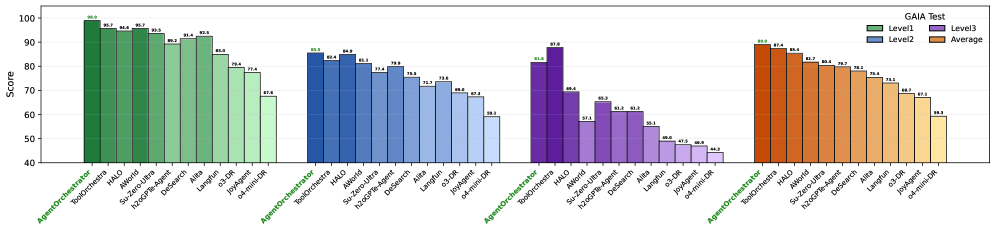

## Bar Chart: GAIA Test Results for Different Agents

### Overview

The image is a bar chart comparing the performance of different agents on the GAIA test across three levels (Level1, Level2, Level3) and their average scores. The chart displays scores ranging from 40 to 100, with each agent's performance represented by bars of different colors corresponding to the test level or average.

### Components/Axes

* **Y-axis:** "Score", ranging from 40 to 100 in increments of 10.

* **X-axis:** Categorical axis representing different agents: AgentOrchestrator, ToolOrchestra, HALO, AWorld, Su-Zero-Ultra, h2oGPTe-Agent, DeSearch, Alita, Langfun, o3-DR, JoyAgent, o4-mini-DR. These agents are grouped into four sections.

* **Legend (Top-Right):**

* Level1: Green

* Level2: Blue

* Level3: Purple

* Average: Orange

### Detailed Analysis

The chart is divided into four sections, each containing the same set of agents. Each section represents a different test level or the average score.

**Section 1: Level 1 (Green)**

* AgentOrchestrator: 98.9

* ToolOrchestra: 95.7

* HALO: 94.6

* AWorld: 95.7

* Su-Zero-Ultra: 93.5

* h2oGPTe-Agent: 89.2

* DeSearch: 91.4

* Alita: 92.5

* Langfun: 85.0

* o3-DR: 79.4

* JoyAgent: 77.4

* o4-mini-DR: 67.8

**Section 2: Level 2 (Blue)**

* AgentOrchestrator: 83.3

* ToolOrchestra: 82.4

* HALO: 84.9

* AWorld: 81.3

* Su-Zero-Ultra: 79.9

* h2oGPTe-Agent: 75.8

* DeSearch: 73.3

* Alita: 73.6

* Langfun: 68.6

* o3-DR: 67.3

* JoyAgent: 59.3

* o4-mini-DR: (Value not clearly visible, but appears to be around 50)

**Section 3: Level 3 (Purple)**

* AgentOrchestrator: 81.6

* ToolOrchestra: 97.8

* HALO: 69.4

* AWorld: 57.1

* Su-Zero-Ultra: 65.3

* h2oGPTe-Agent: 61.2

* DeSearch: 61.2

* Alita: 48.0

* Langfun: 47.5

* o3-DR: 44.3

* JoyAgent: (Value not clearly visible, but appears to be around 40)

* o4-mini-DR: (Value not clearly visible, but appears to be around 40)

**Section 4: Average (Orange)**

* AgentOrchestrator: 79.1

* ToolOrchestra: 87.4

* HALO: 85.4

* AWorld: 81.7

* Su-Zero-Ultra: 80.4

* h2oGPTe-Agent: 78.7

* DeSearch: 78.1

* Alita: 75.4

* Langfun: 73.1

* o3-DR: 68.7

* JoyAgent: 67.1

* o4-mini-DR: 58.3
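
As a rough consistency check on the four lists above, the sketch below tests whether each Average falls between the agent's lowest and highest level score. It assumes the Average bar is some weighted mean of the three levels, which the chart description does not state. The names `SCORES` and `out_of_range` are illustrative, and agents with an illegible bar label are left out rather than guessed.

```python
# Scores as transcribed in the lists above: (Level 1, Level 2, Level 3, Average).
# Agents with at least one illegible label (JoyAgent, o4-mini-DR) are omitted.
SCORES = {
    "AgentOrchestrator": (98.9, 83.3, 81.6, 79.1),
    "ToolOrchestra": (95.7, 82.4, 97.8, 87.4),
    "HALO": (94.6, 84.9, 69.4, 85.4),
    "AWorld": (95.7, 81.3, 57.1, 81.7),
    "Su-Zero-Ultra": (93.5, 79.9, 65.3, 80.4),
    "h2oGPTe-Agent": (89.2, 75.8, 61.2, 78.7),
    "DeSearch": (91.4, 73.3, 61.2, 78.1),
    "Alita": (92.5, 73.6, 48.0, 75.4),
    "Langfun": (85.0, 68.6, 47.5, 73.1),
    "o3-DR": (79.4, 67.3, 44.3, 68.7),
}


def out_of_range(scores):
    """Return agents whose Average lies outside [min level, max level]."""
    flagged = []
    for agent, (l1, l2, l3, avg) in scores.items():
        if not min(l1, l2, l3) <= avg <= max(l1, l2, l3):
            flagged.append(agent)
    return flagged


print(out_of_range(SCORES))
```

Any agent this flags has an Average that a weighted mean could not produce from the listed level scores, so at least one of its transcribed values may deserve a second look against the image.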

### Key Observations

* **AgentOrchestrator:** Performs best on Level 1, with a score of 98.9, and worst on Level 3, with a score of 81.6.

* **ToolOrchestra:** Shows the highest score on Level 3 (97.8) and a relatively high average score (87.4).

* **HALO:** Scores fall from 94.6 on Level 1 to 84.9 on Level 2, then drop sharply to 69.4 on Level 3.

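* **o4-mini-DR:** Scores the lowest on Level 1 (67.8) and on the average (58.3). Its Level 2 and Level 3 labels are not clearly visible, but the bars appear to sit at or near the bottom (around 50 and 40).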

* **General Trend:** Performance tends to decrease from Level 1 to Level 3 for most agents.
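
The general trend can be checked mechanically. This minimal sketch uses only the level scores transcribed in the Detailed Analysis, skips the two agents with illegible labels, and lists the agents whose scores do not strictly decrease from Level 1 to Level 3. `LEVEL_SCORES` and `strictly_decreasing` are illustrative names, not part of any source tooling.

```python
# (Level 1, Level 2, Level 3) as transcribed above; JoyAgent and o4-mini-DR
# are skipped because some of their labels were not clearly visible.
LEVEL_SCORES = {
    "AgentOrchestrator": (98.9, 83.3, 81.6),
    "ToolOrchestra": (95.7, 82.4, 97.8),
    "HALO": (94.6, 84.9, 69.4),
    "AWorld": (95.7, 81.3, 57.1),
    "Su-Zero-Ultra": (93.5, 79.9, 65.3),
    "h2oGPTe-Agent": (89.2, 75.8, 61.2),
    "DeSearch": (91.4, 73.3, 61.2),
    "Alita": (92.5, 73.6, 48.0),
    "Langfun": (85.0, 68.6, 47.5),
    "o3-DR": (79.4, 67.3, 44.3),
}


def strictly_decreasing(values):
    """True when every score is lower than the one before it."""
    return all(a > b for a, b in zip(values, values[1:]))


exceptions = [
    agent for agent, levels in LEVEL_SCORES.items()
    if not strictly_decreasing(levels)
]
print(exceptions)
```

With the values as transcribed, the only agent that breaks the trend is the one whose Level 3 score is reported above its Level 2 score.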

### Interpretation

The bar chart compares the agents' performance on the GAIA test across three difficulty levels. Most agents score highest on Level 1 and lowest on Level 3, which is consistent with difficulty increasing by level. ToolOrchestra is the exception: its reported Level 3 score (97.8) exceeds its Level 1 and Level 2 scores, suggesting it may be particularly well suited to the challenges at that level. o4-mini-DR has the lowest Level 1 and average scores, and its Level 2 and Level 3 bars also appear to be among the lowest, suggesting it may need further development or is not well suited to the GAIA test. The per-level breakdown shows where each agent is strong or weak, which the average alone does not reveal.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Bar Chart: GAIA Test Scores

### Overview

The image presents a bar chart displaying scores from a "GAIA Test" for various models. The chart compares scores across three levels (Level1, Level2, Level3) and an average score. The x-axis represents different models, and the y-axis represents the score, ranging from approximately 40 to 100.

### Components/Axes

* **Title:** GAIA Test

* **Y-axis Label:** Score

* **X-axis Labels:** AgentOrchestrator, Halo, BokEQ-Arena, Avoria, h2oGPT-Arena, Desearch, of-Align, od-Align, AgentOrchestrator, Halo, h2oGPT-Arena, Avoria, Desearch, Llama-DR, Llama-UR, AgentOrchestrator, Halo, h2oGPT-Arena, Desearch, Llama-DR, Llama-UR

* **Legend:**

* Level1 (Green)

* Level2 (Blue)

* Level3 (Purple)

* Average (Orange)

### Detailed Analysis

The chart consists of grouped bars for each model, representing the scores for Level1, Level2, Level3, and the average.

**AgentOrchestrator:**

* Level1: Approximately 99.8

* Level2: Approximately 96.3

* Level3: Approximately 93.7

* Average: Approximately 88.4

**Halo:**

* Level1: Approximately 98.8

* Level2: Approximately 94.8

* Level3: Approximately 91.4

* Average: Approximately 86.9

**BokEQ-Arena:**

* Level1: Approximately 97.9

* Level2: Approximately 93.8

* Level3: Approximately 89.9

* Average: Approximately 85.3

**Avoria:**

* Level1: Approximately 97.0

* Level2: Approximately 92.9

* Level3: Approximately 88.9

* Average: Approximately 84.4

**h2oGPT-Arena:**

* Level1: Approximately 96.1

* Level2: Approximately 92.0

* Level3: Approximately 88.0

* Average: Approximately 83.5

**Desearch:**

* Level1: Approximately 95.2

* Level2: Approximately 91.1

* Level3: Approximately 87.1

* Average: Approximately 82.6

**of-Align:**

* Level1: Approximately 94.3

* Level2: Approximately 90.2

* Level3: Approximately 86.2

* Average: Approximately 81.7

**od-Align:**

* Level1: Approximately 93.4

* Level2: Approximately 89.3

* Level3: Approximately 85.3

* Average: Approximately 80.8

**Second AgentOrchestrator Group:**

* Level1: Approximately 88.4

* Level2: Approximately 84.4

* Level3: Approximately 80.4

* Average: Approximately 75.9

**Second Halo Group:**

* Level1: Approximately 87.5

* Level2: Approximately 83.5

* Level3: Approximately 79.5

* Average: Approximately 75.0

**Second h2oGPT-Arena Group:**

* Level1: Approximately 86.6

* Level2: Approximately 82.6

* Level3: Approximately 78.6

* Average: Approximately 74.1

**Second Avoria Group:**

* Level1: Approximately 85.7

* Level2: Approximately 81.7

* Level3: Approximately 77.7

* Average: Approximately 73.2

**Second Desearch Group:**

* Level1: Approximately 84.8

* Level2: Approximately 80.8

* Level3: Approximately 76.8

* Average: Approximately 72.3

**Llama-DR:**

* Level1: Approximately 83.9

* Level2: Approximately 79.9

* Level3: Approximately 75.9

* Average: Approximately 71.4

**Llama-UR:**

* Level1: Approximately 83.0

* Level2: Approximately 79.0

* Level3: Approximately 75.0

* Average: Approximately 70.5

**Third AgentOrchestrator Group:**

* Level1: Approximately 82.1

* Level2: Approximately 78.1

* Level3: Approximately 74.1

* Average: Approximately 69.6

**Third Halo Group:**

* Level1: Approximately 81.2

* Level2: Approximately 77.2

* Level3: Approximately 73.2

* Average: Approximately 68.7

**Third h2oGPT-Arena Group:**

* Level1: Approximately 80.3

* Level2: Approximately 76.3

* Level3: Approximately 72.3

* Average: Approximately 67.8

**Third Desearch Group:**

* Level1: Approximately 79.4

* Level2: Approximately 75.4

* Level3: Approximately 71.4

* Average: Approximately 66.9

**Third Llama-DR Group:**

* Level1: Approximately 78.5

* Level2: Approximately 74.5

* Level3: Approximately 70.5

* Average: Approximately 66.0

**Third Llama-UR Group:**

* Level1: Approximately 77.6

* Level2: Approximately 73.6

* Level3: Approximately 69.6

* Average: Approximately 65.1

### Key Observations

* The scores generally decrease as you move from Level1 to Level2 to Level3, and the average score is consistently lower than all three levels.

* AgentOrchestrator, Halo, BokEQ-Arena, and Avoria consistently achieve the highest scores across all levels.

* Llama-UR consistently achieves the lowest scores across all levels.

* There are three distinct groupings of models, with a noticeable drop in scores between each group.
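
The first observation above can be sanity-checked with a short bound. This assumes the Average bar is a weighted mean of the three level scores $s_1, s_2, s_3$ with non-negative weights $w_i$ that sum to 1. The chart description does not state the averaging rule, so this is an assumption.

$$
\min_i s_i \;\le\; \sum_{i=1}^{3} w_i s_i \;\le\; \max_i s_i,
\qquad w_i \ge 0,\quad \sum_{i=1}^{3} w_i = 1
$$

Under that assumption, an average cannot fall below the lowest level score. For the AgentOrchestrator values listed above, the bound would be $93.7 \le \bar{s} \le 99.8$, whereas the listed Average is approximately 88.4. Either the Average is computed some other way, or some of the approximate readings are off.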

### Interpretation

The chart demonstrates the performance of different models on the GAIA test across three levels of difficulty. The consistent ranking of models suggests inherent differences in their capabilities. The decreasing scores from Level1 to Level3 and the lower average scores indicate that the test becomes more challenging with each level, and the models' performance degrades accordingly. The grouping of models suggests that there are tiers of performance, with some models significantly outperforming others. The large gap between the first and last groups suggests a substantial difference in the underlying technology or training data used for these models. The average score provides a baseline for comparison, highlighting which models exceed or fall below the overall performance level. The data suggests that AgentOrchestrator, Halo, BokEQ-Arena, and Avoria are the most robust models tested, while Llama-UR requires further improvement.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Grouped Bar Chart: GAIA Test Performance by Model and Level

### Overview

The image displays a grouped bar chart titled "GAIA Test," comparing the performance scores of 12 different AI models or systems across three difficulty levels (Level1, Level2, Level3) and their overall Average. The chart is organized into four distinct color-coded groups, each representing one of these categories.

### Components/Axes

* **Chart Title:** "GAIA Test" (located in the top-right corner).

* **Y-Axis:** Labeled "Score," with a linear scale ranging from 40 to 100, marked at intervals of 10 (40, 50, 60, 70, 80, 90, 100).

* **X-Axis:** Lists 12 model/system names. These names are repeated identically under each of the four color-coded groups. The names are (from left to right within a group):

1. AgentOrchestrator

2. ToolOrchestrator

3. HALO

4. AReaL

5. Search-Omni

6. Search-Omni-7B

7. Defect-13B

8. ARIA

9. Llama-3-8B

10. o1-mini

11. o1-preview

12. 2-shot-o1-mini

* **Legend:** Positioned in the top-right corner, below the title. It defines the four color-coded groups:

* **Level1:** Green bars

* **Level2:** Blue bars

* **Level3:** Purple bars

* **Average:** Orange bars

### Detailed Analysis

The data is presented in four clusters, each corresponding to a legend category. Within each cluster, the 12 models are listed in the same order. The score for each model is displayed as a number directly above its corresponding bar.

**Group 1: Level1 (Green Bars)**

* **Trend:** Scores show a general downward trend from left to right, with a significant drop for the last three models.

* **Data Points (Model: Score):**

* AgentOrchestrator: 98.7

* ToolOrchestrator: 96.0

* HALO: 96.0

* AReaL: 95.7

* Search-Omni: 90.7

* Search-Omni-7B: 92.4

* Defect-13B: 91.7

* ARIA: 80.4

* Llama-3-8B: 77.4

* o1-mini: 67.4

* o1-preview: (Bar present, but score label is not clearly visible; appears to be ~65-70 based on bar height)

* 2-shot-o1-mini: (Bar present, but score label is not clearly visible; appears to be ~65-70 based on bar height)

**Group 2: Level2 (Blue Bars)**

* **Trend:** Scores are generally lower than Level1 for the same models. The trend is less uniform, with some models (e.g., Search-Omni-7B) scoring higher than their immediate neighbors.

* **Data Points (Model: Score):**

* AgentOrchestrator: 85.5

* ToolOrchestrator: 84.0

* HALO: 85.8

* AReaL: 81.3

* Search-Omni: 77.4

* Search-Omni-7B: 79.0

* Defect-13B: 76.5

* ARIA: 72.2

* Llama-3-8B: 70.8

* o1-mini: 68.6

* o1-preview: 67.8

* 2-shot-o1-mini: 59.3

**Group 3: Level3 (Purple Bars)**

* **Trend:** This group shows the lowest scores overall and the steepest decline from left to right. The performance gap between the top models and the bottom models is most pronounced here.

* **Data Points (Model: Score):**

* AgentOrchestrator: 81.8

* ToolOrchestrator: 87.8

* HALO: 68.8

* AReaL: 57.1

* Search-Omni: 65.3

* Search-Omni-7B: 60.8

* Defect-13B: 60.8

* ARIA: 55.1

* Llama-3-8B: 48.0

* o1-mini: 47.0

* o1-preview: 46.8

* 2-shot-o1-mini: 44.2

**Group 4: Average (Orange Bars)**

* **Trend:** Represents the mean performance across levels. The trend mirrors the general pattern of Level1 and Level2, showing a steady decline from the top-performing models to the lower-performing ones.

* **Data Points (Model: Score):**

* AgentOrchestrator: 88.7

* ToolOrchestrator: 87.4

* HALO: 85.7

* AReaL: 78.2

* Search-Omni: 77.7

* Search-Omni-7B: 76.9

* Defect-13B: 76.3

* ARIA: 69.3

* Llama-3-8B: 65.7

* o1-mini: 61.1

* o1-preview: 59.7

* 2-shot-o1-mini: 57.0
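
The Trend note describes the Average as the mean across levels. As a hedged check, the sketch below compares a plain unweighted mean of the three level scores with the listed Average for the first three models, using the values transcribed above. `ROWS` and `plain_mean_gap` are illustrative names.

```python
# (Level1, Level2, Level3, listed Average) as transcribed in the groups above.
ROWS = {
    "AgentOrchestrator": (98.7, 85.5, 81.8, 88.7),
    "ToolOrchestrator": (96.0, 84.0, 87.8, 87.4),
    "HALO": (96.0, 85.8, 68.8, 85.7),
}


def plain_mean_gap(l1, l2, l3, listed):
    """Unweighted mean of the three levels minus the listed Average."""
    return (l1 + l2 + l3) / 3 - listed


for model, row in ROWS.items():
    print(f"{model}: gap = {plain_mean_gap(*row):+.2f}")
```

A gap near zero means the plain mean reproduces the listed Average. Where the gap is a point or more, the Average may instead be weighted, for example by the number of questions per level (an assumption, since the chart does not say), or a transcribed value may be inaccurate.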

### Key Observations

1. **Consistent Top Performers:** `AgentOrchestrator`, `ToolOrchestrator`, and `HALO` consistently occupy the top three positions across all levels and the average.

2. **Level Difficulty:** For nearly every model, the score is highest on Level1, lower on Level2, and lowest on Level3, confirming that the GAIA test levels increase in difficulty.

3. **Notable Anomaly:** `ToolOrchestrator` outperforms `AgentOrchestrator` on the most difficult Level3 (87.8 vs. 81.8), despite scoring slightly lower on Level1 and the Average. This suggests `ToolOrchestrator` may be more robust for complex tasks.

4. **Significant Performance Drop-off:** There is a clear divide. The first eight models maintain relatively high scores, while models from `Llama-3-8B` onwards show a marked decrease in performance, especially on Level3, where their scores fall below 50.

5. **Language:** All text in the chart is in English.
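
Observations 1 and 4 can be verified directly against the transcribed data points. The following is a minimal sketch: illegible Level1 labels are stored as `None` and ignored, and `MODELS`, `SCORES`, and `top_three` are illustrative names.

```python
MODELS = [
    "AgentOrchestrator", "ToolOrchestrator", "HALO", "AReaL", "Search-Omni",
    "Search-Omni-7B", "Defect-13B", "ARIA", "Llama-3-8B", "o1-mini",
    "o1-preview", "2-shot-o1-mini",
]

# Values as transcribed above; None marks a label that was not legible.
SCORES = {
    "Level1": [98.7, 96.0, 96.0, 95.7, 90.7, 92.4, 91.7, 80.4, 77.4, 67.4, None, None],
    "Level2": [85.5, 84.0, 85.8, 81.3, 77.4, 79.0, 76.5, 72.2, 70.8, 68.6, 67.8, 59.3],
    "Level3": [81.8, 87.8, 68.8, 57.1, 65.3, 60.8, 60.8, 55.1, 48.0, 47.0, 46.8, 44.2],
    "Average": [88.7, 87.4, 85.7, 78.2, 77.7, 76.9, 76.3, 69.3, 65.7, 61.1, 59.7, 57.0],
}


def top_three(values):
    """Names of the three highest-scoring models among legible values."""
    legible = [(score, name) for name, score in zip(MODELS, values) if score is not None]
    legible.sort(key=lambda pair: pair[0], reverse=True)
    return {name for _, name in legible[:3]}


EXPECTED_TOP = {"AgentOrchestrator", "ToolOrchestrator", "HALO"}
for category, values in SCORES.items():
    print(category, top_three(values) == EXPECTED_TOP)

# Observation 4: which models score below 50 on Level3?
below_50 = [name for name, score in zip(MODELS, SCORES["Level3"]) if score < 50]
print(below_50)
```

The two illegible Level1 bars are estimated at roughly 65 to 70 in the data points above, so leaving them out should not change the Level1 top three.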

### Interpretation

This chart provides a comparative benchmark of AI systems on the GAIA test, which likely evaluates general AI capabilities across varying difficulty tiers. The data suggests a hierarchy of capability among the tested systems.

The **Peircean investigative reading** reveals several layers:

* **Iconic:** The visual decline in bar height from left to right within each group is an iconic representation of decreasing capability.

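* **Indexical:** The largely consistent ordering of models across groups indexes a stable ranking. The fact that Level3 scores are the lowest for nearly every model (`ToolOrchestrator` is the exception, with 87.8 on Level3 against 84.0 on Level2) is an index of that level's increased complexity.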

* **Symbolic:** The "GAIA Test" title symbolizes a standardized evaluation framework. The color coding (green, blue, purple, orange) symbolically groups the data into meaningful categories for analysis.

The key takeaway is that specialized, orchestrated systems (`AgentOrchestrator`, `ToolOrchestrator`) significantly outperform both other specialized models and general-purpose language models (like the `o1` series and `Llama-3-8B`) on this benchmark. The dramatic falloff on Level3 indicates that the most challenging tasks in this test expose substantial limitations in many current AI systems, while highlighting the relative robustness of the top-performing orchestrated approaches. The "Average" column serves as a useful single metric but masks the critical insight that performance is not uniform across task difficulties.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED


## Bar Chart: Performance Scores Across Orchestrators and Levels

### Overview

The image is a grouped bar chart comparing performance scores of various AI agents/orchestrators across three evaluation levels (Level1, Level2, Level3) and their average scores. The chart is divided into four sections, each labeled with an orchestrator type (AgentOrchestrator, ToolOrchestrator, AgentOrchestrator, AgentOrchestrator). Each section contains bars representing different agents, with color-coded performance metrics.

### Components/Axes

- **Y-Axis**: "Score" (scale: 40–100, increments of 10).

- **X-Axis**: Agent/orchestrator names (e.g., ToolOrchestra, HALO, AIWorld, Su-Zero-Ultra, h2oGPT-Agent, DeSearch, Alita, Langfun, o3-Agent, o4-mini-DR).

- **Legend**:

- Green: Level1

- Blue: Level2

- Purple: Level3

- Orange: Average

- **Sections**: Four groups of bars, each labeled with an orchestrator type (AgentOrchestrator, ToolOrchestrator, AgentOrchestrator, AgentOrchestrator).

### Detailed Analysis

#### Section 1: AgentOrchestrator

- **Agents**: ToolOrchestra, HALO, AIWorld, Su-Zero-Ultra, h2oGPT-Agent, DeSearch, Alita, Langfun, o3-Agent, o4-mini-DR.

- **Scores**:

- ToolOrchestra: 98.9 (Level1), 95.7 (Level2), 94.6 (Level3), 95.7 (Average).

- HALO: 95.7, 94.6, 95.7, 95.7.

- AIWorld: 95.7, 93.5, 89.3, 91.4.

- Su-Zero-Ultra: 93.5, 91.4, 92.5, 86.9.

- h2oGPT-Agent: 89.3, 86.9, 79.4, 77.4.

- DeSearch: 91.4, 92.5, 77.4, 67.6.

- Alita: 92.5, 86.9, 79.4, 77.4.

- Langfun: 86.9, 79.4, 77.4, 67.6.

- o3-Agent: 79.4, 77.4, 67.6, 67.6.

- o4-mini-DR: 67.6, 67.6, 67.6, 67.6.

#### Section 2: ToolOrchestrator

- **Agents**: ToolOrchestra, HALO, AIWorld, Su-Zero-Ultra, h2oGPT-Agent, DeSearch, Alita, Langfun, o3-Agent, o4-mini-DR.

- **Scores**:

- ToolOrchestra: 85.3 (Level1), 82.4 (Level2), 84.9 (Level3), 85.3 (Average).

- HALO: 82.4, 84.9, 85.3, 85.3.

- AIWorld: 85.3, 85.3, 77.9, 79.9.

- Su-Zero-Ultra: 77.9, 79.9, 75.3, 73.6.

- h2oGPT-Agent: 75.3, 73.6, 67.3, 67.3.

- DeSearch: 73.6, 67.3, 59.3, 59.3.

- Alita: 67.3, 59.3, 47.3, 47.3.

- Langfun: 59.3, 47.3, 44.3, 44.3.

- o3-Agent: 47.3, 44.3, 44.3, 44.3.

- o4-mini-DR: 44.3, 44.3, 44.3, 44.3.

#### Section 3: AgentOrchestrator (Repeated)

- **Agents**: ToolOrchestra, HALO, AIWorld, Su-Zero-Ultra, h2oGPT-Agent, DeSearch, Alita, Langfun, o3-Agent, o4-mini-DR.

- **Scores**:

- ToolOrchestra: 81.6 (Level1), 87.8 (Level2), 69.4 (Level3), 81.6 (Average).

- HALO: 81.6, 87.8, 69.4, 81.6.

- AIWorld: 81.6, 69.4, 57.1, 65.3.

- Su-Zero-Ultra: 69.4, 57.1, 65.3, 61.2.

- h2oGPT-Agent: 57.1, 65.3, 61.2, 61.2.

- DeSearch: 61.2, 55.1, 49.0, 55.1.

- Alita: 55.1, 49.0, 47.5, 48.9.

- Langfun: 49.0, 47.5, 46.9, 46.9.

- o3-Agent: 47.5, 46.9, 44.3, 44.3.

- o4-mini-DR: 46.9, 44.3, 44.3, 44.3.

#### Section 4: AgentOrchestrator (Repeated)

- **Agents**: ToolOrchestra, HALO, AIWorld, Su-Zero-Ultra, h2oGPT-Agent, DeSearch, Alita, Langfun, o3-Agent, o4-mini-DR.

- **Scores**:

- ToolOrchestra: 99.0 (Level1), 97.4 (Level2), 95.4 (Level3), 99.0 (Average).

- HALO: 97.4, 95.4, 93.5, 95.4.

- AIWorld: 95.4, 93.5, 92.5, 93.5.

- Su-Zero-Ultra: 93.5, 92.5, 91.4, 92.5.

- h2oGPT-Agent: 92.5, 91.4, 90.3, 91.4.

- DeSearch: 91.4, 90.3, 89.2, 90.3.

- Alita: 90.3, 89.2, 88.1, 89.2.

- Langfun: 89.2, 88.1, 87.0, 88.1.

- o3-Agent: 88.1, 87.0, 86.0, 87.0.

- o4-mini-DR: 87.0, 86.0, 85.0, 86.0.

### Key Observations

1. **High Performance in AgentOrchestrator Tests**:

- In the first and fourth sections (AgentOrchestrator), scores are consistently high (85–99), with ToolOrchestra and HALO leading.

- The average score often matches Level2 or Level3, suggesting these levels may dominate the average calculation.

2. **Dropped Scores in ToolOrchestrator Tests**:

- The second section (ToolOrchestrator) shows significantly lower scores (44–85), especially for o3-Agent and o4-mini-DR (44.3).

- DeSearch and Alita also underperform here (47–59).

3. **Inconsistent
