## Diagram: LLM Response Generation and Confidence Estimation Flowchart

### Overview

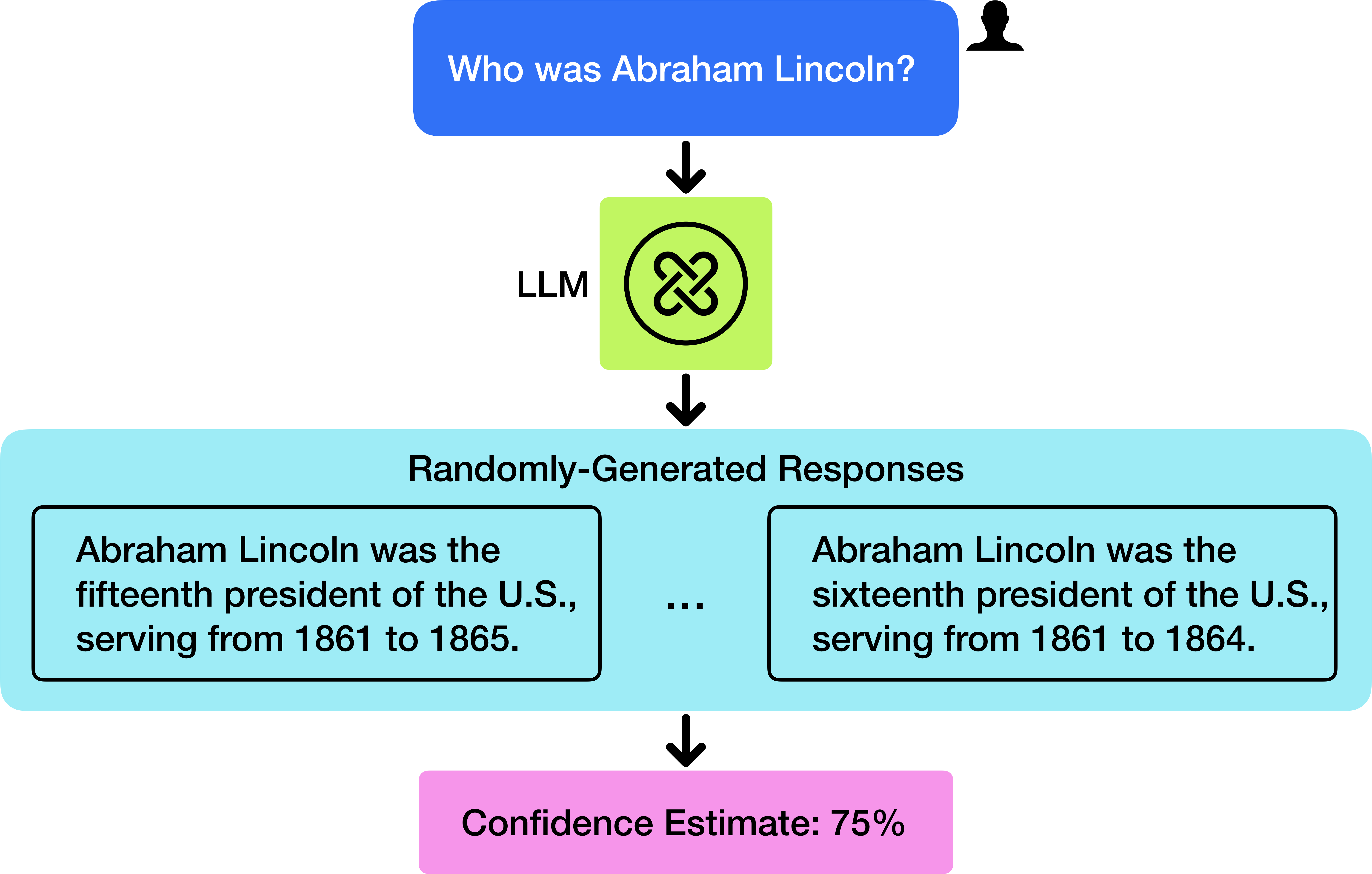

The image is a vertical flowchart showing how a user's question is passed to a Large Language Model (LLM), which generates multiple, potentially conflicting responses, and how a confidence estimate is then derived from those responses. The diagram uses colored boxes and directional arrows to depict the flow of information.

### Components/Axes

The diagram is composed of four main components arranged vertically, connected by downward-pointing black arrows.

1. **User Input (Top-Center):**

* **Shape:** Blue rounded rectangle.

* **Icon:** A black silhouette of a person's head and shoulders is positioned to the top-right of the box.

* **Text:** "Who was Abraham Lincoln?"

* **Function:** Represents the initial query posed by a user.

2. **Processing Unit (Middle-Center):**

* **Shape:** Light green square.

* **Label:** The text "LLM" is placed to the left of the square.

* **Icon:** Inside the square is a black circular logo containing a stylized, interlocking double-loop or infinity-like symbol.

* **Function:** Represents the Large Language Model that processes the input query.

3. **Output Set (Lower-Middle, spanning width):**

* **Shape:** Large, light blue rounded rectangle.

* **Title:** "Randomly-Generated Responses" is centered at the top of this container.

* **Content:** Inside this container are two example response boxes, separated by an ellipsis ("...") indicating additional possible outputs.

* **Left Response Box:** A black-bordered rectangle containing the text: "Abraham Lincoln was the fifteenth president of the U.S., serving from 1861 to 1865."

* **Right Response Box:** A black-bordered rectangle containing the text: "Abraham Lincoln was the sixteenth president of the U.S., serving from 1861 to 1864."

* **Function:** Demonstrates that the LLM can produce multiple, varying answers to the same query.

4. **Confidence Metric (Bottom-Center):**

* **Shape:** Pink rounded rectangle.

* **Text:** "Confidence Estimate: 75%"

* **Function:** Represents a calculated confidence score associated with the generated responses.

### Detailed Analysis

* **Text Transcription:**

* User Query: "Who was Abraham Lincoln?"

* Model Label: "LLM"

* Output Section Title: "Randomly-Generated Responses"

* Example Response 1: "Abraham Lincoln was the fifteenth president of the U.S., serving from 1861 to 1865."

* Example Response 2: "Abraham Lincoln was the sixteenth president of the U.S., serving from 1861 to 1864."

* Final Output: "Confidence Estimate: 75%"

* **Flow Direction:** The process flows strictly top-to-bottom, indicated by three black arrows: from User Input to LLM, from LLM to Randomly-Generated Responses, and from Randomly-Generated Responses to Confidence Estimate.

* **Data Discrepancy:** The two example responses contradict each other on two facts, and each response gets exactly one of them wrong:

* **Presidential Number:** One states "fifteenth," the other "sixteenth." (Fact: Abraham Lincoln was the 16th U.S. President).

* **Term End Year:** One states "1865," the other "1864." (Fact: Lincoln served from 1861 until his assassination in 1865).
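The discrepancies listed above can also be located mechanically. The following is a minimal sketch, assuming a plain word-level comparison with Python's standard `difflib` module; the helper name `word_differences` is hypothetical, and nothing in the diagram suggests that the depicted system compares responses this way.

```python
import difflib

# The two example responses, transcribed from the diagram.
response_1 = ("Abraham Lincoln was the fifteenth president of the U.S., "
              "serving from 1861 to 1865.")
response_2 = ("Abraham Lincoln was the sixteenth president of the U.S., "
              "serving from 1861 to 1864.")


def word_differences(a: str, b: str) -> list[tuple[str, str]]:
    """Return (text_from_a, text_from_b) for each span where the word sequences differ."""
    # Strip trailing periods and commas so "1865." compares as "1865".
    a_words = [w.rstrip(".,") for w in a.split()]
    b_words = [w.rstrip(".,") for w in b.split()]
    matcher = difflib.SequenceMatcher(a=a_words, b=b_words, autojunk=False)
    diffs = []
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag != "equal":
            diffs.append((" ".join(a_words[i1:i2]), " ".join(b_words[j1:j2])))
    return diffs


differences = word_differences(response_1, response_2)
for left, right in differences:
    print(f"{left!r} vs. {right!r}")
```

A word-level diff only shows where the responses disagree. Deciding which side is correct still requires an external reference.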

### Key Observations

1. **Inconsistent Outputs:** The core observation is the generation of factually inconsistent information ("fifteenth" vs. "sixteenth" president; "1865" vs. "1864") from the same model for the same query.

2. **Confidence vs. Accuracy:** The system reports a 75% confidence estimate even though the displayed responses contradict each other and neither is fully correct. This highlights a potential disconnect between a reported confidence score and the factual accuracy of the output.

3. **Process Illustration:** The diagram explicitly models a pipeline: Query -> Stochastic Generation -> Multiple Outputs -> Aggregated Confidence Score.
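The diagram does not say how the confidence score is computed from the sampled responses. One common approach, shown here purely as an assumption, is to take the fraction of samples that agree with the most frequent answer. In this sketch the function name `agreement_confidence` and the four sampled answers are hypothetical; the diagram shows only two responses plus an ellipsis.

```python
from collections import Counter


def agreement_confidence(answers: list[str]) -> tuple[str, float]:
    """Return the most common answer and the fraction of samples that match it."""
    if not answers:
        raise ValueError("need at least one sampled answer")
    counts = Counter(answers)
    top_answer, top_count = counts.most_common(1)[0]
    return top_answer, top_count / len(answers)


# Hypothetical data: the presidential ordinal extracted from four sampled responses.
sampled_ordinals = ["sixteenth", "sixteenth", "fifteenth", "sixteenth"]

answer, confidence = agreement_confidence(sampled_ordinals)
print(f"{answer}: {confidence:.0%}")  # prints "sixteenth: 75%"
```

Under this assumption the score measures agreement among samples, not correctness: a model that repeats the same wrong answer every time would still score 100%.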

### Interpretation

This diagram visualizes a fundamental challenge in current LLM technology: **hallucination and inconsistency**. It shows that an LLM can generate plausible-sounding statements that are incorrect or that contradict one another. The "Randomly-Generated Responses" label suggests the model's outputs are samples from a probability distribution, so a given sample can include lower-probability, and sometimes incorrect, tokens.

The "Confidence Estimate: 75%" is particularly significant. It implies the system has a mechanism for assessing the reliability of its output, yet in this example neither displayed response is fully correct, so the estimate does not obviously track ground truth. This raises important questions about the calibration of such confidence scores: do they measure the model's certainty in its generated text sequence, or its agreement with external facts?
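One simple way to make the calibration question concrete is to compare stated confidence with observed accuracy over a set of answered questions. The sketch below assumes such a labelled set exists; the function name `calibration_gap` and all values are hypothetical illustrations, not data from the diagram.

```python
def calibration_gap(confidences: list[float], correct: list[bool]) -> float:
    """Mean stated confidence minus observed accuracy; a positive value means overconfidence."""
    if not confidences or len(confidences) != len(correct):
        raise ValueError("inputs must be non-empty and of equal length")
    mean_confidence = sum(confidences) / len(confidences)
    accuracy = sum(correct) / len(correct)
    return mean_confidence - accuracy


# Hypothetical data: four answers, each reported with 75% confidence,
# of which only two matched an external reference.
stated = [0.75, 0.75, 0.75, 0.75]
was_correct = [True, False, True, False]

gap = calibration_gap(stated, was_correct)
print(f"calibration gap: {gap:+.2f}")
```

A gap near zero would suggest the confidence scores track accuracy on that set. It says nothing about any single answer.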

The diagram essentially argues that interacting with an LLM is not a simple Q&A with a knowledge base, but a process of sampling from a complex, sometimes unreliable, generative model. It underscores the necessity for user verification, external fact-checking, and the development of more robust methods for uncertainty quantification in AI systems. The ellipsis ("...") between the responses is a subtle but crucial detail, indicating that the two shown examples are just a subset of a potentially larger set of varied outputs.