## Line Charts: Training Reward Comparison of GRPO vs. MEL Methods Across Model Sizes

### Overview

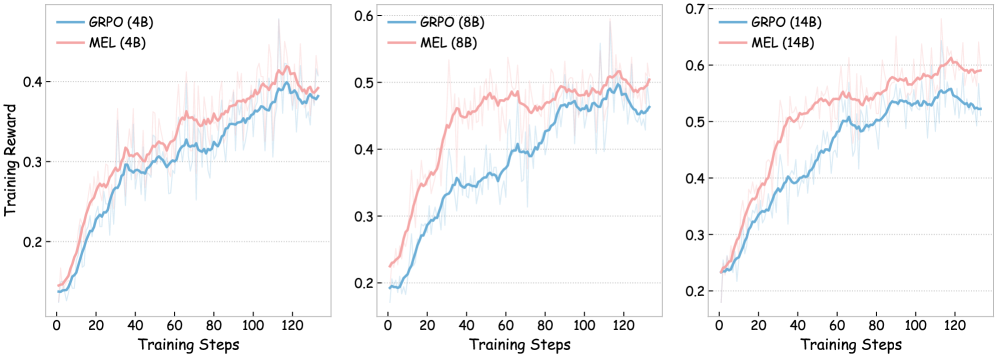

The image shows three line charts, arranged side by side, comparing the training performance of two methods, GRPO and MEL, across three model sizes (4B, 8B, and 14B parameters). Each chart plots "Training Reward" against "Training Steps" for both methods. In every chart MEL reaches a higher training reward than GRPO, and reward rises for both methods as model size grows.

### Components/Axes

* **Chart Layout:** Three separate line charts arranged side-by-side.

* **X-Axis (All Charts):** Labeled "Training Steps". Major tick marks are at intervals of 20, from 0 to 120.

* **Y-Axis (Left Chart - 4B):** Labeled "Training Reward". Scale ranges from approximately 0.15 to 0.45. Major gridlines are at 0.2, 0.3, and 0.4.

* **Y-Axis (Middle Chart - 8B):** Labeled "Training Reward". Scale ranges from approximately 0.2 to 0.6. Major gridlines are at 0.2, 0.3, 0.4, 0.5, and 0.6.

* **Y-Axis (Right Chart - 14B):** Labeled "Training Reward". Scale ranges from approximately 0.2 to 0.7. Major gridlines are at 0.2, 0.3, 0.4, 0.5, 0.6, and 0.7.

* **Legend (Each Chart):** Positioned in the top-left corner of each plot area.

    * **Left Chart (4B):** "GRPO (4B)" (blue line), "MEL (4B)" (red line).

    * **Middle Chart (8B):** "GRPO (8B)" (blue line), "MEL (8B)" (red line).

    * **Right Chart (14B):** "GRPO (14B)" (blue line), "MEL (14B)" (red line).

* **Data Series:** Each chart contains two primary lines (blue for GRPO, red for MEL) with a lighter, semi-transparent shaded area around each line, likely representing variance or standard deviation across multiple runs.
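If the shaded areas do represent one standard deviation across runs (an assumption; the figure does not state it), a band of that kind could be computed roughly as follows. The function name `mean_std_band` and the three-seed data are hypothetical and are not taken from the figure.

```python
from statistics import mean, stdev


def mean_std_band(runs):
    """Return (means, lower, upper) per logged step for equal-length reward curves.

    `runs` is a list of reward curves, one per seed. The band is mean +/- one
    sample standard deviation, which is only one plausible reading of the shading.
    """
    if len(runs) < 2:
        raise ValueError("need at least two runs to estimate a standard deviation")
    means, lower, upper = [], [], []
    # zip(*runs) groups the rewards of all runs at the same step.
    for step_values in zip(*runs):
        m = mean(step_values)
        s = stdev(step_values)
        means.append(m)
        lower.append(m - s)
        upper.append(m + s)
    return means, lower, upper


# Hypothetical rewards from three seeds at four logged steps (NOT data from the figure).
runs = [
    [0.15, 0.25, 0.30, 0.36],
    [0.17, 0.27, 0.34, 0.40],
    [0.16, 0.26, 0.32, 0.38],
]
means, lower, upper = mean_std_band(runs)
```

The `lower` and `upper` lists are what a plotting library would fill between to draw the semi-transparent region around the mean line.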

### Detailed Analysis

**Left Chart (4B Models):**

* **Trend:** Both lines show a steep initial increase in reward from step 0 to ~40, followed by a more gradual, noisy ascent.

* **GRPO (4B) - Blue Line:** Starts at ~0.15. Reaches ~0.3 at step 40. Ends at approximately 0.38 at step 120.

* **MEL (4B) - Red Line:** Starts slightly above GRPO at ~0.16. Maintains a consistent lead. Reaches ~0.33 at step 40. Peaks near 0.42 around step 110 before a slight dip, ending at ~0.40 at step 120.

* **Relationship:** The MEL line is positioned above the GRPO line for the entire duration after the first few steps.

**Middle Chart (8B Models):**

* **Trend:** Similar rapid initial learning phase, with both curves plateauing at a higher reward level than the 4B models.

* **GRPO (8B) - Blue Line:** Starts at ~0.20. Rises to ~0.35 by step 40. Shows a steady climb to end at approximately 0.46 at step 120.

* **MEL (8B) - Red Line:** Starts at ~0.22. Rises sharply to ~0.45 by step 40. Continues a noisy ascent, peaking near 0.52 around step 110, and ending at ~0.50 at step 120.

* **Relationship:** The performance gap between MEL and GRPO is more pronounced here than in the 4B chart: about 0.10 at step 40 and 0.04 at step 120, compared with about 0.03 and 0.02 for the 4B models.

**Right Chart (14B Models):**

* **Trend:** The highest overall reward values. Both methods show strong initial gains and maintain an upward trend throughout.

* **GRPO (14B) - Blue Line:** Starts at ~0.23. Reaches ~0.40 by step 40. Climbs to end at approximately 0.52 at step 120.

* **MEL (14B) - Red Line:** Starts at ~0.24. Reaches ~0.50 by step 40. Continues to rise, peaking near 0.62 around step 110, and ending at ~0.60 at step 120.

* **Relationship:** MEL leads GRPO throughout, by about 0.10 at step 40 and about 0.08 at step 120. The final reward for MEL (14B), ~0.60, is notably higher than the peak reward of either 8B curve (~0.52 for MEL, ~0.46 for GRPO).
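The approximate readings listed above can be collected in one place to compare the MEL and GRPO curves at step 40 and step 120. This is a minimal sketch: the values are the eyeballed estimates from this description, not exact data, and the names `readings` and `mel_minus_grpo` are illustrative.

```python
# Approximate rewards read off the charts, stored as (step 40, step 120).
readings = {
    "4B": {"GRPO": (0.30, 0.38), "MEL": (0.33, 0.40)},
    "8B": {"GRPO": (0.35, 0.46), "MEL": (0.45, 0.50)},
    "14B": {"GRPO": (0.40, 0.52), "MEL": (0.50, 0.60)},
}


def mel_minus_grpo(readings):
    """Return {size: (gap at step 40, gap at step 120)}, rounded to 2 decimals."""
    gaps = {}
    for size, curves in readings.items():
        gaps[size] = tuple(
            round(mel - grpo, 2)
            for mel, grpo in zip(curves["MEL"], curves["GRPO"])
        )
    return gaps


gaps = mel_minus_grpo(readings)
for size, (gap_40, gap_120) in gaps.items():
    print(f"{size}: step 40 gap = {gap_40:.2f}, step 120 gap = {gap_120:.2f}")
# 4B: step 40 gap = 0.03, step 120 gap = 0.02
# 8B: step 40 gap = 0.10, step 120 gap = 0.04
# 14B: step 40 gap = 0.10, step 120 gap = 0.08
```

Because the inputs are visual estimates, differences of 0.01 to 0.02 between gaps should not be over-interpreted.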

### Key Observations

1. **Consistent Superiority of MEL:** In all three charts, the red MEL line is positioned above the blue GRPO line after the initial training steps, indicating higher training reward.

2. **Reward Increases with Model Size:** The maximum achieved reward increases with model size for both methods. The upper limit of the y-axis rises accordingly, from about 0.45 (4B) to 0.6 (8B) to 0.7 (14B).

3. **Learning Curve Shape:** All curves exhibit a characteristic rapid initial learning phase (steps 0-40) followed by a slower, noisier improvement phase.

4. **Variance:** The shaded regions indicate non-trivial variance in the training process, but the separation between the MEL and GRPO means remains clear despite this noise.

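5. **Peak Performance:** MEL appears to peak slightly before the final step (around step 110), with a minor decline of about 0.02 thereafter. The dip is most visible in the 4B and 8B charts, although the 14B readings (peak near 0.62, final ~0.60) indicate a drop of similar size. GRPO, by contrast, tends to continue a slight upward trend to the end.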

### Interpretation

The data suggest that the MEL training method is more effective than GRPO at maximizing the "Training Reward" metric across the tested model scales (4B to 14B parameters). MEL leads in every chart after the first few steps, which is consistent with its algorithmic approach learning more efficiently from the same number of training steps.

The positive scaling with model size is expected, as larger models typically have greater capacity. However, MEL's advantage is maintained and appears to widen at larger scales: the final-step gap grows from about 0.02 (4B) to 0.04 (8B) to 0.08 (14B). This indicates that its benefits are robust and potentially complementary to increased model capacity.
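To put the widening gap on a comparable footing across scales, the final-step lead of MEL can be expressed relative to GRPO's final reward. The sketch below uses the approximate step-120 values read off the charts; `relative_gain_percent` is a hypothetical helper, and the percentages inherit the imprecision of the readings.

```python
# Approximate final rewards at step 120, stored as (GRPO, MEL).
final_rewards = {
    "4B": (0.38, 0.40),
    "8B": (0.46, 0.50),
    "14B": (0.52, 0.60),
}


def relative_gain_percent(grpo, mel):
    """MEL's lead over GRPO as a percentage of GRPO's reward."""
    return 100.0 * (mel - grpo) / grpo


gains = {
    size: relative_gain_percent(grpo, mel)
    for size, (grpo, mel) in final_rewards.items()
}
for size, gain in gains.items():
    print(f"{size}: MEL ends about {gain:.1f}% above GRPO")
```

With these readings the relative lead comes out at roughly 5%, 9%, and 15% for 4B, 8B, and 14B respectively, so the gap grows in relative as well as absolute terms.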

The noisy ascent after step 40 is typical of reinforcement learning or stochastic optimization processes, where progress is not perfectly monotonic. The slight late-stage dip for MEL could suggest the onset of overfitting to the training reward signal or increased instability, but at roughly 0.02 it is small relative to the noise visible in the curves. The dip is less visually clear in the 14B chart, where MEL still ends well above GRPO.

**In summary, the charts provide empirical evidence that, under the conditions of this experiment, MEL achieves higher training reward than GRPO at every tested scale, and its final-step advantage grows with model size.**