## Bar Chart: AI Model Accuracy on MMLU Benchmark

### Overview

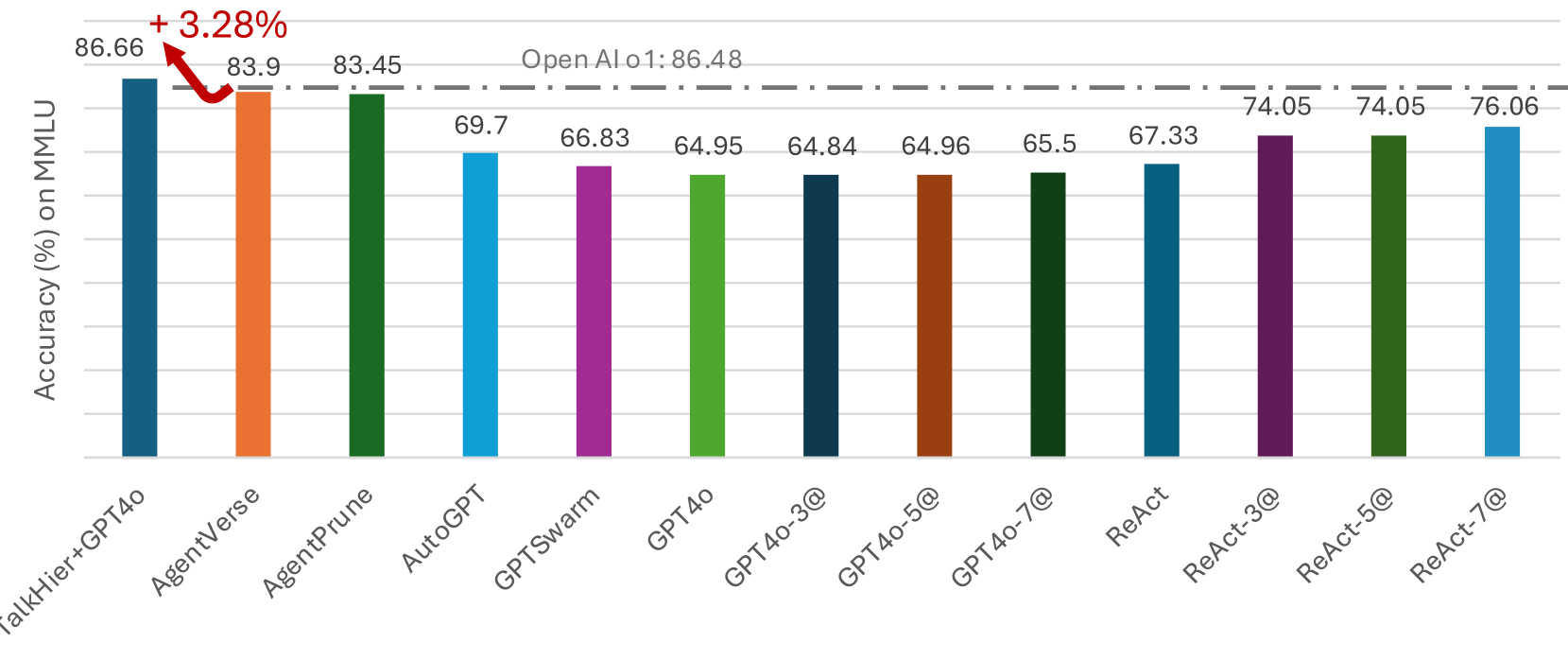

This image is a vertical bar chart comparing the accuracy percentages of various AI models and agent frameworks on the MMLU (Massive Multitask Language Understanding) benchmark. The chart includes a performance baseline from "OpenAI o1" and highlights the top-performing model.

### Components/Axes

* **Y-Axis:** Labeled "Accuracy (%) on MMLU". The scale is not numerically marked, but values are provided directly above each bar.

* **X-Axis:** Lists the names of 13 different AI models or agent frameworks. The labels are rotated approximately 45 degrees for readability.

* **Baseline Reference:** A horizontal, grey, dash-dotted line runs across the chart at the 86.48% level. It is labeled "Open AI o1: 86.48" in the upper center of the plot area.

* **Annotation:** A red, curved arrow points from the baseline to the top of the first bar. It is accompanied by the text "+ 3.28%" in red, presented as the performance improvement of the first model over the baseline.

### Detailed Analysis

The chart presents the following data points, listed from left to right. Bars are colored individually; the color names below are approximate.

| Model/Framework (X-Axis Label) | Accuracy (%) (Value above bar) | Bar Color (Approximate) |
| :--- | :--- | :--- |
| TalkHier+GPT4o | 86.66 | Dark Blue |
| AgentVerse | 83.9 | Orange |
| AgentPrune | 83.45 | Dark Green |
| AutoGPT | 69.7 | Light Blue |
| GPTSwarm | 66.83 | Purple |
| GPT4o | 64.95 | Light Green |
| GPT4o-3@ | 64.84 | Dark Blue/Teal |
| GPT4o-5@ | 64.96 | Brown |
| GPT4o-7@ | 65.5 | Dark Green |
| ReAct | 67.33 | Blue |
| ReAct-3@ | 74.05 | Dark Purple |
| ReAct-5@ | 74.05 | Dark Green |
| ReAct-7@ | 76.06 | Light Blue |

**Trend Verification:** The bars do not follow a monotonic progression from left to right. The first three (TalkHier+GPT4o, AgentVerse, AgentPrune) form a high-performing cluster above 83%. Accuracy then drops sharply to AutoGPT (69.7%), GPTSwarm (66.83%), and GPT4o with its variants (64.84% to 65.5%). It rises again through the ReAct series, from 67.33% for base ReAct to 76.06% for ReAct-7@, the highest value in this latter group.
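The clustering described above can be checked numerically. The following is a minimal sketch, assuming the values are transcribed correctly from the bar labels; the helper name `largest_gaps` is hypothetical and chosen only for illustration. It sorts the scores and reports the largest gaps between neighbouring values.

```python
# Accuracy values transcribed from the labels above each bar.
ACCURACY = {
    "TalkHier+GPT4o": 86.66,
    "AgentVerse": 83.9,
    "AgentPrune": 83.45,
    "AutoGPT": 69.7,
    "GPTSwarm": 66.83,
    "GPT4o": 64.95,
    "GPT4o-3@": 64.84,
    "GPT4o-5@": 64.96,
    "GPT4o-7@": 65.5,
    "ReAct": 67.33,
    "ReAct-3@": 74.05,
    "ReAct-5@": 74.05,
    "ReAct-7@": 76.06,
}


def largest_gaps(scores, top_n=2):
    """Return the top_n largest gaps between neighbouring scores (sorted descending)."""
    ranked = sorted(scores.items(), key=lambda kv: kv[1], reverse=True)
    gaps = [
        (round(hi_val - lo_val, 2), hi_name, lo_name)
        for (hi_name, hi_val), (lo_name, lo_val) in zip(ranked, ranked[1:])
    ]
    return sorted(gaps, reverse=True)[:top_n]


for gap, upper, lower in largest_gaps(ACCURACY):
    print(f"{gap:5.2f} points between {upper} and {lower}")
```

The two largest gaps (7.39 and 4.35 points) separate the top three entries from the ReAct variants, and the ReAct variants from the remaining models, which is consistent with a three-cluster reading of the chart.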

### Key Observations

1. **Top Performer:** `TalkHier+GPT4o` achieves the highest accuracy at 86.66%. This is 0.18 percentage points above the labeled `OpenAI o1` baseline of 86.48% (86.66 - 86.48 = 0.18), whereas the annotation states "+3.28%". The annotated gain would correspond to a reference value of 83.38% (86.66 - 3.28), so the annotation may refer to a different comparison than the labeled baseline.

2. **Performance Clusters:** The models fall into three tiers, listed here from highest to lowest accuracy:

    * **Tier 1 (>83%):** TalkHier+GPT4o, AgentVerse, AgentPrune.

    * **Tier 2 (74-76%):** ReAct-3@, ReAct-5@, ReAct-7@.

    * **Tier 3 (64-70%):** AutoGPT, GPTSwarm, GPT4o, GPT4o-3@, GPT4o-5@, GPT4o-7@, ReAct.

3. **Identical Scores:** `ReAct-3@` and `ReAct-5@` have identical reported accuracy of 74.05%.

4. **Baseline Context:** The `OpenAI o1` baseline (86.48%) is only surpassed by the top-performing model, `TalkHier+GPT4o`. All other listed models perform below this reference line.
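The observations above can be reproduced from the transcribed values. This is a minimal sketch, not part of the chart itself: the cut points `top_cut=80.0` and `middle_cut=72.0` are assumptions placed inside the visible gaps between clusters, and `tier_of` is a hypothetical helper name.

```python
from collections import defaultdict

BASELINE_O1 = 86.48  # value printed on the dash-dotted reference line

# Accuracy values transcribed from the labels above each bar.
ACCURACY = {
    "TalkHier+GPT4o": 86.66, "AgentVerse": 83.9, "AgentPrune": 83.45,
    "AutoGPT": 69.7, "GPTSwarm": 66.83,
    "GPT4o": 64.95, "GPT4o-3@": 64.84, "GPT4o-5@": 64.96, "GPT4o-7@": 65.5,
    "ReAct": 67.33, "ReAct-3@": 74.05, "ReAct-5@": 74.05, "ReAct-7@": 76.06,
}


def tier_of(score, top_cut=80.0, middle_cut=72.0):
    """Classify a score; the cut points are assumptions, not values from the chart."""
    if score >= top_cut:
        return "top"
    if score >= middle_cut:
        return "middle"
    return "lower"


# Group model names by tier, preserving left-to-right chart order.
tiers = {"top": [], "middle": [], "lower": []}
for name, score in ACCURACY.items():
    tiers[tier_of(score)].append(name)

# Models whose accuracy exceeds the labeled baseline.
above_baseline = [name for name, score in ACCURACY.items() if score > BASELINE_O1]

# Models that share exactly the same reported score.
by_score = defaultdict(list)
for name, score in ACCURACY.items():
    by_score[score].append(name)
ties = {score: names for score, names in by_score.items() if len(names) > 1}

print(tiers)
print("Above baseline:", above_baseline)
print("Ties:", ties)
```

Under these assumed cut points, the grouping yields three, three, and seven models respectively; only `TalkHier+GPT4o` clears the baseline, and the only tie is between `ReAct-3@` and `ReAct-5@`.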

### Interpretation

This chart compares AI models and agent frameworks on a standard benchmark (MMLU). `TalkHier+GPT4o` outperforms every other agent framework shown, including AutoGPT and ReAct, and narrowly exceeds the labeled `OpenAI o1` baseline (by 0.18 percentage points). The gap of more than 7 points between the top three entries and the rest suggests that the architectural or methodological choices in `TalkHier`, `AgentVerse`, and `AgentPrune` are well suited to this task, although the chart alone does not show which choices are responsible.

The GPT4o variants (3@, 5@, 7@) stay within about 0.6 points of the base GPT4o score (64.84% to 65.5% versus 64.95%), which suggests that the modifications denoted by "-3@", "-5@", and "-7@" have minimal impact on MMLU accuracy for this model. The chart does not define these suffixes. In contrast, the ReAct variants improve on the base `ReAct` score (67.33%): `ReAct-3@` and `ReAct-5@` both reach 74.05%, and `ReAct-7@` reaches 76.06%, indicating that the same modifications are beneficial in this series.
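The contrast between the two series can be made concrete by computing each variant's change relative to its base score. This is a small sketch using the values from the table; `deltas_vs_base` is a hypothetical helper, and the code makes no assumption about what the "@" suffixes mean.

```python
# Scores per series, transcribed from the bar labels.
SERIES = {
    "GPT4o": {"base": 64.95, "3@": 64.84, "5@": 64.96, "7@": 65.5},
    "ReAct": {"base": 67.33, "3@": 74.05, "5@": 74.05, "7@": 76.06},
}


def deltas_vs_base(series):
    """Return each variant's difference from the base score, in percentage points."""
    base = series["base"]
    return {
        variant: round(score - base, 2)
        for variant, score in series.items()
        if variant != "base"
    }


for name, series in SERIES.items():
    print(name, deltas_vs_base(series))
```

The GPT4o deltas are -0.11, 0.01, and 0.55 points, while the ReAct deltas are 6.72, 6.72, and 8.73 points.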

The annotation "+3.28%" is meant to quantify the lead of the top model, but it does not match the values shown: the difference between the top bar (86.66) and the labeled baseline (86.48) is 0.18 percentage points, not 3.28. The annotated figure may therefore be computed against a different reference value (for example 83.38%, which does not appear on the chart) or against a model not visualized here. This discrepancy should be kept in mind when citing the chart.
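The arithmetic behind this discrepancy is short enough to spell out. The sketch below assumes the annotation is an additive gain in percentage points; that reading is an assumption, since the chart does not say how "+3.28%" was computed.

```python
TOP_SCORE = 86.66       # TalkHier+GPT4o, from the bar label
BASELINE_O1 = 86.48     # from the reference-line label
ANNOTATED_GAIN = 3.28   # from the red annotation

# Gap between the top bar and the labeled baseline, in percentage points.
absolute_gap = round(TOP_SCORE - BASELINE_O1, 2)

# The same gap expressed relative to the baseline, in percent.
relative_gap_pct = round(100 * (TOP_SCORE - BASELINE_O1) / BASELINE_O1, 2)

# Reference value implied by the annotation under the additive assumption.
implied_reference = round(TOP_SCORE - ANNOTATED_GAIN, 2)

# Bars from the chart; check whether any of them matches the implied reference.
OTHER_BARS = [83.9, 83.45, 69.7, 66.83, 64.95, 64.84, 64.96, 65.5,
              67.33, 74.05, 74.05, 76.06]
matches = [v for v in OTHER_BARS if abs(v - implied_reference) < 0.005]

print(absolute_gap, relative_gap_pct, implied_reference, matches)
```

Neither the absolute gap (0.18 points) nor the relative gap (about 0.21%) reproduces the annotated 3.28, and no bar on the chart equals the implied reference of 83.38.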