
## Diagram: Model Training Pipeline

### Overview

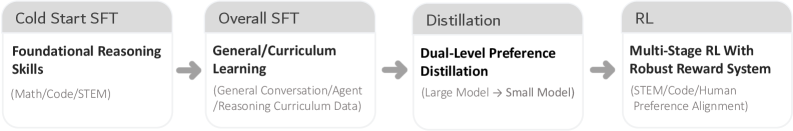

The image depicts a four-stage pipeline for training a model, progressing from "Cold Start SFT" to "RL". Each stage is represented by a grey rectangle with a title and a more detailed description below. Arrows indicate the flow of the process from left to right.

### Components/Axes

The diagram consists of four sequential stages:

1. **Cold Start SFT:** "Foundational Reasoning Skills" (Math/Code/STEM)

2. **Overall SFT:** "General/Curriculum Learning" (General Conversation/Agent/Reasoning Curriculum Data)

3. **Distillation:** "Dual-Level Preference Distillation" (Large Model -> Small Model)

4. **RL:** "Multi-Stage RL With Robust Reward System" (STEM/Code/Human Preference Alignment)

The arrows connecting the stages indicate the progression of the training process.
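The four stages and their left-to-right ordering can be written down as a small data structure. The following is a minimal sketch, not code from the source: the `Stage` class, the `PIPELINE` constant, and the helper functions are hypothetical names introduced here for illustration, and only the stage titles, subtitles, and parenthetical labels are taken from the diagram.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Stage:
    """One box in the diagram (hypothetical container, not from the source)."""
    name: str               # box title
    goal: str               # quoted subtitle
    focus: Tuple[str, ...]  # parenthetical labels


# Stage order follows the arrows in the diagram, left to right.
PIPELINE = (
    Stage("Cold Start SFT", "Foundational Reasoning Skills",
          ("Math", "Code", "STEM")),
    Stage("Overall SFT", "General/Curriculum Learning",
          ("General Conversation", "Agent", "Reasoning Curriculum Data")),
    Stage("Distillation", "Dual-Level Preference Distillation",
          ("Large Model -> Small Model",)),
    Stage("RL", "Multi-Stage RL With Robust Reward System",
          ("STEM", "Code", "Human Preference Alignment")),
)


def transitions(pipeline: Tuple[Stage, ...]) -> List[Tuple[str, str]]:
    """Return the (source, target) pairs implied by the arrows."""
    return [(a.name, b.name) for a, b in zip(pipeline, pipeline[1:])]


def stages_covering(pipeline: Tuple[Stage, ...], topic: str) -> List[str]:
    """Return the names of stages whose labels include the given topic."""
    return [s.name for s in pipeline if topic in s.focus]


if __name__ == "__main__":
    for src, dst in transitions(PIPELINE):
        print(f"{src} -> {dst}")
```

Calling `stages_covering(PIPELINE, "Code")` on this structure returns the first and last stages, which are the two boxes whose labels mention code.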

### Detailed Analysis or Content Details

The diagram outlines a sequence of model training techniques:

* **Stage 1: Cold Start SFT** - Focuses on building foundational reasoning skills using data related to Math, Code, and STEM fields.

* **Stage 2: Overall SFT** - Expands the training to include general conversation, agent behavior, and reasoning curriculum data.

* **Stage 3: Distillation** - Employs a dual-level preference distillation technique, transferring knowledge from a large model to a smaller model.

* **Stage 4: RL** - Utilizes multi-stage reinforcement learning with a robust reward system, incorporating STEM, Code, and human preference alignment.

In each box, the title names the technique, the quoted subtitle names its goal, and the parenthetical lists the data domains involved (or, for distillation, the direction of transfer).
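The diagram does not define "Dual-Level Preference Distillation", so the sketch below does not attempt to reproduce it. It shows only the generic idea behind the "Large Model -> Small Model" label, using standard temperature-scaled logit distillation as a stand-in technique: the student is penalized by the KL divergence between the teacher's and the student's softened output distributions. The function names and the default temperature are assumptions made for illustration.

```python
import math
from typing import List, Sequence


def softmax(logits: Sequence[float], temperature: float = 1.0) -> List[float]:
    """Numerically stable softmax over temperature-scaled logits."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(
    teacher_logits: Sequence[float],
    student_logits: Sequence[float],
    temperature: float = 2.0,  # assumed value, not from the diagram
) -> float:
    """KL(teacher || student) between softened distributions.

    Generic logit distillation only; NOT the dual-level preference
    method named in the diagram, whose details are not given.
    """
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0.0)


if __name__ == "__main__":
    teacher = [2.0, 0.5, -1.0]
    student = [1.0, 1.0, 0.0]
    print(distillation_loss(teacher, student))
```

The loss is zero when the student's logits match the teacher's and grows as the two distributions diverge, which is what lets a smaller model be trained toward a larger model's behavior.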

### Key Observations

The pipeline progresses from foundational reasoning skills (Stage 1) through general-purpose data (Stage 2) to more specialized techniques (Stages 3 and 4). "STEM" and "Code" appear in both the first and last stages, which suggests a strong emphasis on these areas. The final stage includes human preference alignment, indicating that the model is also tuned toward human preferences.

### Interpretation

This diagram illustrates a staged approach to model training: it starts by building reasoning skills, then broadens to general data, and finally applies distillation and reinforcement learning. The pipeline appears designed to produce models that handle general conversation and agent behavior while retaining strong reasoning abilities and aligning with human preferences. The presence of STEM and code data in both the first and last stages suggests a focus on technical domains. The "Large Model -> Small Model" label in the distillation stage suggests an effort to obtain a smaller, more efficient model that retains much of the larger model's capability, although the diagram itself gives no performance figures. The strictly left-to-right layout suggests a deliberate, sequential approach to model development.