## Diagram: AI Model Training Pipeline Flowchart

### Overview

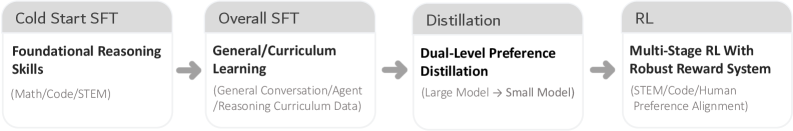

The image displays a horizontal, four-stage flowchart illustrating a sequential pipeline for training an AI model. The process flows from left to right, indicated by gray arrows connecting each stage. Each stage is represented by a rectangular box with a gray header containing the stage name and a white body containing the primary focus and specific data domains or methods.

### Components/Axes

The diagram consists of four distinct components (stages) arranged linearly:

1. **Stage 1 (Far Left):**
   * **Header (Gray Box):** `Cold Start SFT`
   * **Body (White Box):**
     * **Primary Focus (Bold):** `Foundational Reasoning Skills`
     * **Specific Domains (Regular text in parentheses):** `(Math/Code/STEM)`

2. **Stage 2 (Center-Left):**
   * **Header (Gray Box):** `Overall SFT`
   * **Body (White Box):**
     * **Primary Focus (Bold):** `General/Curriculum Learning`
     * **Specific Data Types (Regular text in parentheses):** `(General Conversation/Agent/Reasoning Curriculum Data)`

3. **Stage 3 (Center-Right):**
   * **Header (Gray Box):** `Distillation`
   * **Body (White Box):**
     * **Primary Focus (Bold):** `Dual-Level Preference Distillation`
     * **Process Description (Regular text in parentheses):** `(Large Model → Small Model)`

4. **Stage 4 (Far Right):**
   * **Header (Gray Box):** `RL`
   * **Body (White Box):**
     * **Primary Focus (Bold):** `Multi-Stage RL With Robust Reward System`
     * **Alignment Targets (Regular text in parentheses):** `(STEM/Code/Human Preference Alignment)`

**Flow Direction:** A solid gray arrow points from the right edge of the "Cold Start SFT" box to the left edge of the "Overall SFT" box. An identical arrow connects "Overall SFT" to "Distillation," and a final arrow connects "Distillation" to "RL." This establishes a strict, unidirectional sequence.
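The structure described above can be captured as plain data. The following is a minimal sketch, not code from the source: the `Stage` class, the `PIPELINE` constant, and the `transitions` helper are hypothetical names introduced for illustration, while the strings are transcribed from the diagram's boxes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    """One box in the flowchart (hypothetical container, not from the source)."""
    name: str    # text in the gray header
    focus: str   # bold text in the white body
    detail: str  # parenthesized text in the white body


# Tuple order mirrors the left-to-right layout of the diagram.
PIPELINE = (
    Stage("Cold Start SFT", "Foundational Reasoning Skills", "Math/Code/STEM"),
    Stage("Overall SFT", "General/Curriculum Learning",
          "General Conversation/Agent/Reasoning Curriculum Data"),
    Stage("Distillation", "Dual-Level Preference Distillation",
          "Large Model → Small Model"),
    Stage("RL", "Multi-Stage RL With Robust Reward System",
          "STEM/Code/Human Preference Alignment"),
)


def transitions(stages):
    """Return one (source, target) name pair per arrow in the diagram."""
    return [(a.name, b.name) for a, b in zip(stages, stages[1:])]


if __name__ == "__main__":
    for src, dst in transitions(PIPELINE):
        print(f"{src} -> {dst}")
```

Pairing each stage with its successor yields exactly three transitions, one per arrow, with no branches or back edges.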

### Detailed Analysis

The diagram outlines a progressive training methodology:

1. **Cold Start SFT (Supervised Fine-Tuning):** The pipeline begins by instilling core, domain-specific reasoning abilities in the model using data from Mathematics, Coding, and STEM fields.

2. **Overall SFT:** The model's training is then broadened. It undergoes general supervised fine-tuning on a more diverse dataset that includes general conversation, agent-based interactions, and structured reasoning curricula.

3. **Distillation:** This stage transfers knowledge and capabilities from a larger "teacher" model to a smaller, more efficient "student" model. The label "Dual-Level Preference Distillation" suggests that preference signals are distilled at two levels, but the diagram does not say what those levels are.

4. **RL (Reinforcement Learning):** The final stage applies multi-stage reinforcement learning with what the diagram calls a "Robust Reward System". The alignment targets are listed as STEM, Code, and Human Preference, which suggests that rewards favor responses that are correct in technical domains and preferred by human evaluators. The diagram does not describe how the reward system is constructed.
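For the distillation stage, the diagram gives only the direction (Large Model → Small Model). As a point of reference, here is a minimal sketch of the standard soft-label distillation objective, in which a student is trained to match a teacher's temperature-softened output distribution. This is a generic technique swapped in for illustration; it is not the "Dual-Level Preference Distillation" method named in the diagram, whose details are not shown. The function names and the default temperature are assumptions.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax over a list of logits."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) between temperature-softened distributions.

    Generic soft-label distillation, assumed here for illustration only.
    """
    p = softmax(teacher_logits, temperature)  # teacher distribution
    q = softmax(student_logits, temperature)  # student distribution
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


if __name__ == "__main__":
    teacher = [2.0, 1.0, 0.1]
    print(distillation_loss(teacher, teacher))          # identical outputs
    print(distillation_loss(teacher, [0.1, 1.0, 2.0]))  # mismatched outputs
```

The loss is zero when the student reproduces the teacher's distribution and grows as the two diverge, which is the signal a student would be trained to minimize.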

### Key Observations

* **Sequential Dependency:** The arrows show a single fixed order in which each stage feeds the next. As drawn, general SFT follows the foundational stage, and both distillation and RL come after the two SFT stages. The diagram shows no branches, loops, or skipped stages.

* **Progressive Scope:** The training focus evolves from narrow and technical (Math/Code/STEM) to broad (General Conversation) and then back to targeted alignment (STEM/Code/Human Preference).

* **Methodological Progression:** The techniques advance from Supervised Fine-Tuning (SFT) to Knowledge Distillation and finally to Reinforcement Learning. The training signal shifts accordingly: from imitating labeled examples, to matching a teacher model, to optimizing a reward.

* **Efficiency Consideration:** The explicit inclusion of a "Distillation" stage (Large Model → Small Model) highlights a concern for model size and inference efficiency in the final product.

### Interpretation

This flowchart represents a sophisticated, multi-phase strategy for developing a capable and aligned AI assistant. The pipeline is designed to build competence systematically:

1. **Foundation First:** It establishes reasoning in structured domains (Math/Code/STEM) before exposing the model to the variability of open-ended conversation. This ordering resembles curriculum learning, teaching fundamentals before applications, and the diagram itself uses the term "Curriculum Learning" for the second stage.

2. **Knowledge Transfer & Compression:** The distillation phase is critical for practical deployment. It suggests the training process may initially create a very large, powerful model, whose capabilities are then transferred to a smaller, cost-effective model suitable for production use.

3. **Alignment as the Final Step:** Placing Reinforcement Learning (RL) last indicates that alignment with human preferences and technical accuracy is treated as a refinement process applied to a model that has already acquired substantial knowledge and skills. The "Robust Reward System" is key to ensuring this alignment is stable and effective.

4. **Overall Goal:** The pipeline appears aimed at producing a model that is capable in technical and conversational domains, efficient (via distillation), and aligned with human preferences (via RL). The diagram does not mention safety or harmlessness explicitly; those would be inferences beyond what is shown.
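The diagram names three alignment targets for the RL stage (STEM, Code, Human Preference) but does not say how they feed into the reward. One simple possibility, sketched below purely as an assumption, is a weighted average of per-target signals. The `combined_reward` function, the signal keys, and the weights are all hypothetical and are not taken from the source.

```python
def combined_reward(signals, weights):
    """Weighted average of per-target reward signals, each assumed in [0, 1].

    Hypothetical aggregation; the diagram does not specify the reward design.
    """
    missing = set(weights) - set(signals)
    if missing:
        raise KeyError(f"missing reward signals: {sorted(missing)}")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    return sum(weights[k] * signals[k] for k in weights) / total_weight


if __name__ == "__main__":
    # Illustrative values only: e.g. an answer check, a unit-test pass rate,
    # and a preference score would each be produced elsewhere.
    signals = {"stem": 1.0, "code": 0.5, "human_preference": 0.0}
    weights = {"stem": 1.0, "code": 1.0, "human_preference": 2.0}
    print(combined_reward(signals, weights))
```

A weighted average keeps the result in the same range as its inputs, which makes the relative importance of each target explicit; a real system might instead route each prompt to a single domain-specific reward.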