## Flowchart: Multi-Stage AI Training Pipeline

### Overview

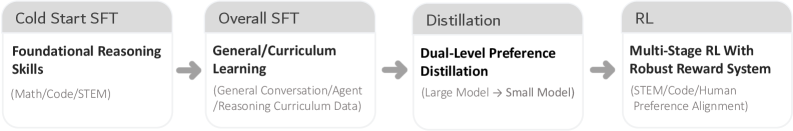

The image depicts a sequential pipeline of four stages in an AI training process, represented as interconnected boxes with arrows indicating progression. Each stage focuses on a specific aspect of model development, from foundational skills to robust reward systems.

### Components

1. **Stage 1: Cold Start SFT**

   - **Label**: "Cold Start SFT" (top of box)

   - **Content**:

     - **Title**: "Foundational Reasoning Skills" (bold, black text)

     - **Subtext**: "(Math/Code/STEM)" (gray text, parentheses)

2. **Stage 2: Overall SFT**

   - **Label**: "Overall SFT" (top of box)

   - **Content**:

     - **Title**: "General/Curriculum Learning" (bold, black text)

     - **Subtext**: "(General Conversation/Agent/Reasoning Curriculum Data)" (gray text, parentheses)

3. **Stage 3: Distillation**

   - **Label**: "Distillation" (top of box)

   - **Content**:

     - **Title**: "Dual-Level Preference Distillation" (bold, black text)

     - **Subtext**: "(Large Model → Small Model)" (gray text, parentheses)

4. **Stage 4: RL**

   - **Label**: "RL" (top of box)

   - **Content**:

     - **Title**: "Multi-Stage RL With Robust Reward System" (bold, black text)

     - **Subtext**: "(STEM/Code/Human Preference Alignment)" (gray text, parentheses)

**Arrows**: Gray arrows connect the stages sequentially (Cold Start SFT → Overall SFT → Distillation → RL).
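As a reading aid, the four boxes and their order can be written down as a small data structure. This is only a sketch of the diagram's text: the `Stage` class, the `PIPELINE` tuple, and the `flow` helper are hypothetical names introduced here for illustration, not anything shown in the figure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    """One box in the flowchart (hypothetical container, not from the figure)."""
    label: str    # text at the top of the box
    title: str    # bold title inside the box
    subtext: str  # gray parenthesized text inside the box


# Stages in the left-to-right order given by the arrows.
PIPELINE = (
    Stage("Cold Start SFT", "Foundational Reasoning Skills", "Math/Code/STEM"),
    Stage("Overall SFT", "General/Curriculum Learning",
          "General Conversation/Agent/Reasoning Curriculum Data"),
    Stage("Distillation", "Dual-Level Preference Distillation",
          "Large Model → Small Model"),
    Stage("RL", "Multi-Stage RL With Robust Reward System",
          "STEM/Code/Human Preference Alignment"),
)


def flow(stages):
    """Render the stage labels joined by arrows, mirroring the diagram."""
    return " → ".join(stage.label for stage in stages)


print(flow(PIPELINE))
```

Keeping the stages in an ordered, immutable tuple reflects the fact that the diagram shows a single fixed sequence with no branches or loops.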

### Detailed Analysis

- **Textual Content**:

  - All text is in English. No other languages are present.

  - Each box contains a hierarchical structure: a bold title followed by a descriptive subtext in parentheses.

  - Subtexts clarify the scope or focus of each stage (e.g., "Math/Code/STEM" for foundational skills).

- **Flow and Relationships**:

  - The pipeline progresses linearly, with each stage building on the prior.

  - "Distillation" is annotated "Large Model → Small Model", which indicates that knowledge is transferred from a larger model to a smaller one. The diagram does not say how the two models relate to the checkpoints produced by the SFT stages.

  - The final stage ("RL") lists human preference alignment alongside STEM and code as reward targets.
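The linear "each stage builds on the prior" relationship can be sketched as a left fold over stage functions, where each stage receives the previous stage's output. Everything here is an assumption made for illustration: the `Checkpoint` dictionary stands in for a model, `make_stage` produces placeholder stages that only record their name, and the single hand-off of one checkpoint is a simplification (the figure does not specify how the large and small models enter the distillation stage).

```python
from functools import reduce
from typing import Callable, Dict, List

# Hypothetical stand-in for a model checkpoint: just a log of applied stages.
Checkpoint = Dict[str, List[str]]
StageFn = Callable[[Checkpoint], Checkpoint]


def make_stage(name: str) -> StageFn:
    """Build a placeholder stage that records its name instead of training."""
    def run(ckpt: Checkpoint) -> Checkpoint:
        # Return a new checkpoint so earlier checkpoints stay unchanged.
        return {"history": ckpt["history"] + [name]}
    return run


# Stage order taken from the arrows in the diagram.
STAGES = [make_stage(name) for name in
          ("Cold Start SFT", "Overall SFT", "Distillation", "RL")]


def run_pipeline(initial: Checkpoint, stages: List[StageFn]) -> Checkpoint:
    """Feed each stage the output of the previous one, in order."""
    return reduce(lambda ckpt, stage: stage(ckpt), stages, initial)


final = run_pipeline({"history": []}, STAGES)
print(final["history"])
```

Because each stage only sees the previous checkpoint, reordering `STAGES` changes the result, which is the property the arrows in the flowchart express.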

### Key Observations

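- The pipeline emphasizes **progressive complexity**: starting with foundational reasoning skills, expanding to general and curriculum learning, transferring capability via distillation, and culminating in multi-stage reinforcement learning with a robust reward system.

- **Human preference alignment** is named explicitly only in the final stage, although the Stage 3 title ("Dual-Level Preference Distillation") also refers to preferences. The diagram does not state whether those are human preferences.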

- No numerical data, trends, or outliers are present; the focus is on conceptual stages.

### Interpretation

This flowchart outlines a structured approach to AI model development:

1. **Foundational Skills**: Establish core competencies in technical domains (Math, Code, STEM).

2. **General Learning**: Broaden capabilities through curriculum-based training on general conversation, agent, and reasoning data.

3. **Distillation**: Transfer knowledge from a large model to a small model, presumably to improve efficiency. The title specifies "Dual-Level Preference Distillation", but the diagram does not define the two levels.

4. **RL**: Apply multi-stage reinforcement learning with a robust reward system spanning STEM, code, and human preference alignment.

The pipeline highlights a balance between technical rigor (STEM/Code) and user-centric design (human preference alignment), suggesting a focus on creating adaptable, efficient, and user-aligned AI systems.