TECHNICAL ASSET FINGERPRINT

5347c49eed17721dc2563c64


FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

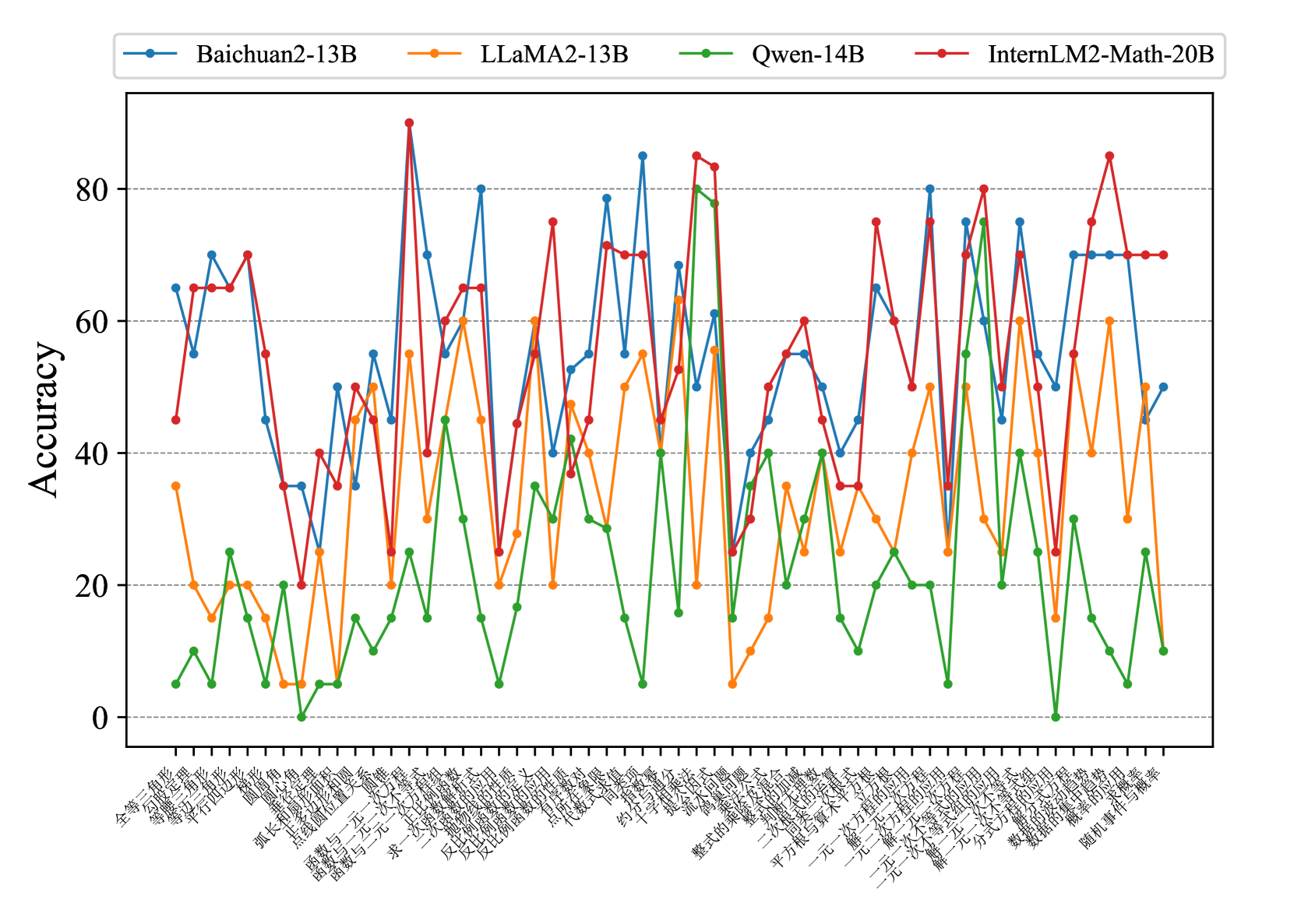

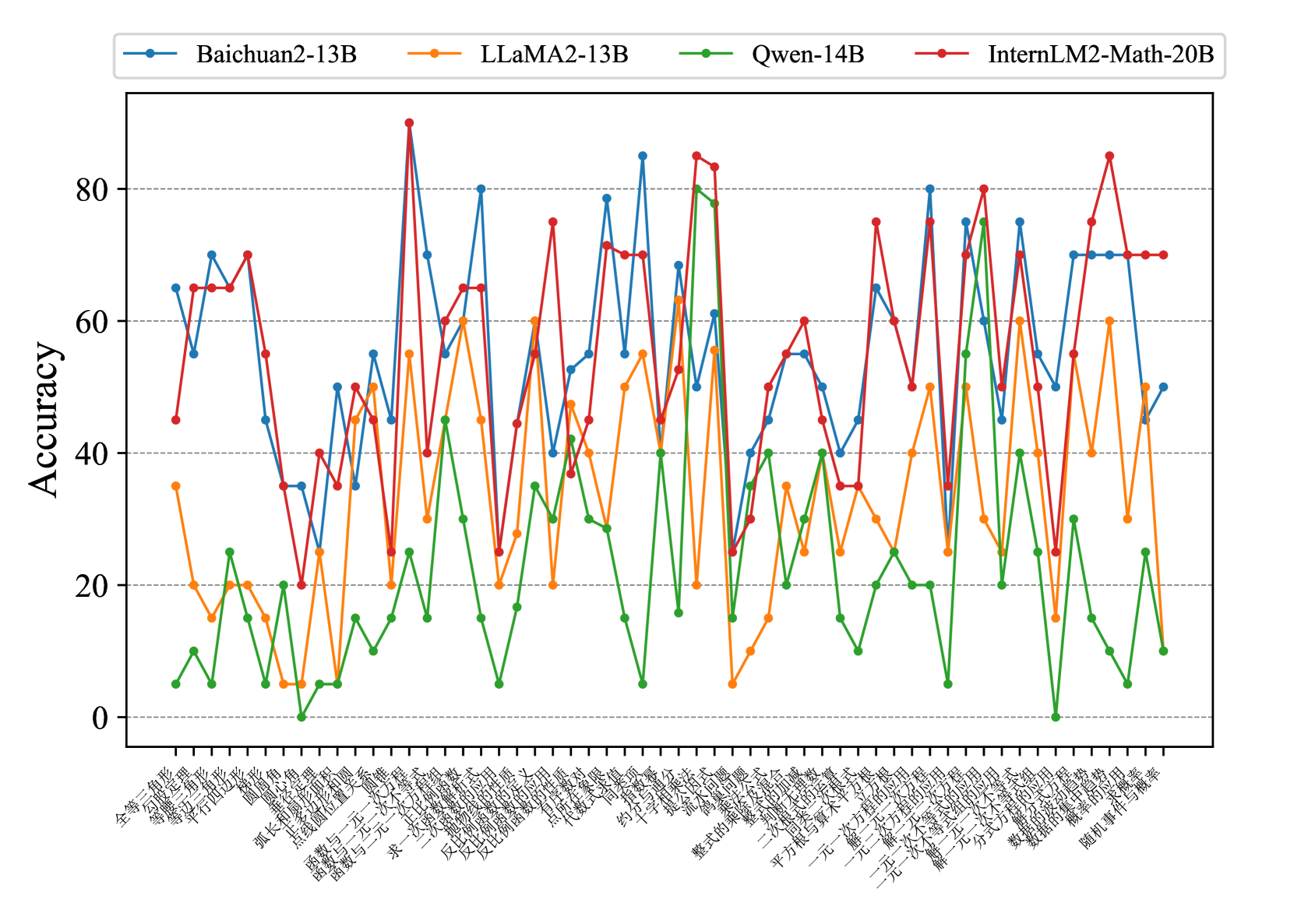

## Line Chart: Model Accuracy Comparison

### Overview

The image is a line chart comparing the accuracy of four different language models (Baichuan2-13B, LLaMA2-13B, Qwen-14B, and InternLM2-Math-20B) across a series of mathematical problems. The x-axis represents different problem types (in Chinese), and the y-axis represents the accuracy score.

### Components/Axes

* **Title:** (None visible)

* **X-axis:** Represents different mathematical problem types. The labels are in Chinese.

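* The labels are: 全等三角形的性质与判定 (Properties and Determination of Congruent Triangles), 等腰三角形的性质与判定 (Properties and Determination of Isosceles Triangles), 勾股定理 (Pythagorean Theorem), 平行四边形 (Parallelograms), 弧长和扇形面积 (Arc Length and Sector Area), 圆锥 (Cones), 圆心角 (Central Angles), 点与圆的位置关系 (Positional Relationship between a Point and a Circle), 函数与一元一次方程 (Function and Linear Equation in One Variable), 求一次函数表达式 (Finding the Expression of a Linear Function), 反比例函数的应用 (Application of Inverse Proportional Functions), 代数式求值 (Evaluating Algebraic Expressions), 约分 (Reducing Fractions), 不等式性质 (Properties of Inequalities), 提公因式法 (Factoring by Common Factor), 公式法 (Formula Method), 整式的乘法 (Multiplication of Integral Expressions), 平方根与算术平方根 (Square Root and Arithmetic Square Root), 一元一次方程的应用 (Application of Linear Equation in One Variable), 一元一次不等式组的应用 (Application of Systems of Linear Inequalities in One Variable), 二次根式的运算 (Operations on Quadratic Radicals), 平方差公式 (Difference of Squares Formula), 一元二次方程的解法 (Solution of Quadratic Equation in One Variable), 一元二次方程根的判别式 (Discriminant of a Quadratic Equation in One Variable), 一元二次方程的应用 (Application of Quadratic Equation in One Variable), 数据的波动程度 (Dispersion of Data), 数据的集中趋势 (Central Tendency of Data), 随机事件与概率 (Random Events and Probability)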

* **Y-axis:** Represents Accuracy, ranging from 0 to 80, with increments of 20.

* Values: 0, 20, 40, 60, 80

* **Legend:** Located at the top of the chart.

* Baichuan2-13B (Blue)

* LLaMA2-13B (Orange)

* Qwen-14B (Green)

* InternLM2-Math-20B (Red)

### Detailed Analysis

Here's a breakdown of each model's performance across the problem types:

* **Baichuan2-13B (Blue):**

* Generally fluctuates between 40 and 80 accuracy.

* Starts at approximately 65, dips to around 45, then rises sharply to 70.

* Peaks at around 83, then drops to 30.

* Rises again to 80, then drops to 50.

* Ends at approximately 70.

* **LLaMA2-13B (Orange):**

* Generally fluctuates between 20 and 60 accuracy.

* Starts at approximately 35, drops to 10, then rises to 40.

* Peaks at around 55, then drops to 25.

* Rises again to 60, then drops to 20.

* Ends at approximately 50.

* **Qwen-14B (Green):**

* Mostly fluctuates between 0 and 40, apart from the single high peak noted below.

* Starts at approximately 25, drops to 10, then rises to 20.

* Peaks at around 83, then drops to 10.

* Rises again to 35, then drops to 5.

* Ends at approximately 45.

* **InternLM2-Math-20B (Red):**

* Generally fluctuates between 30 and 90 accuracy.

* Starts at approximately 45, rises to 70, then drops to 35.

* Peaks at around 85, then drops to 25.

* Rises again to 75, then drops to 35.

* Ends at approximately 70.

### Key Observations

* InternLM2-Math-20B (Red) generally shows the highest accuracy, often peaking above the other models.

* Qwen-14B (Green) generally shows the lowest accuracy.

* All models exhibit significant fluctuations in accuracy across different problem types.

* There are specific problem types where all models perform poorly (e.g., around the 10th problem type), suggesting these problem types are particularly challenging.

* There are specific problem types where all models perform well (e.g., around the 16th problem type), suggesting these problem types are relatively easier.

### Interpretation

The chart demonstrates the performance of four language models on a suite of mathematical problems. The fluctuations in accuracy highlight the varying difficulty levels of different problem types and the models' strengths and weaknesses. InternLM2-Math-20B appears to be the most proficient overall, while Qwen-14B struggles in comparison. The performance variations suggest that the models may have been trained with different datasets or architectures, leading to varying levels of expertise in specific mathematical domains. Further investigation into the specific problem types and model architectures would be needed to understand the underlying reasons for these performance differences.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Line Chart: Model Accuracy on Various Tasks

### Overview

This image presents a line chart comparing the accuracy of four large language models – Baichuan2-13B, LLaMA2-13B, Qwen-14B, and InternLM2-Math-20B – across a series of tasks. The x-axis represents different tasks, and the y-axis represents the accuracy score, ranging from 0 to 80.

### Components/Axes

* **Y-axis Title:** Accuracy

* **X-axis Title:** (Chinese characters; see Detailed Analysis for translations)

* **Legend:** Located at the top of the chart, identifying each line with a color and model name.

* Baichuan2-13B (Blue)

* LLaMA2-13B (Orange)

* Qwen-14B (Green)

* InternLM2-Math-20B (Red)

* **Gridlines:** Vertical gridlines are present to aid in reading values.

### Detailed Analysis

The x-axis labels are in Chinese. Here's a translation of the task names, to the best of my ability:

1. 全部 (All)

2. 写作 (Writing)

3. 翻译 (Translation)

4. 摘要 (Summary)

5. 问答 (Question Answering)

6. 头脑风暴 (Brainstorming)

7. 代码 (Code)

8. 文本分类 (Text Classification)

9. 情感分析 (Sentiment Analysis)

10. 命名实体识别 (Named Entity Recognition)

11. 文本匹配 (Text Matching)

12. 逻辑推理 (Logical Reasoning)

13. 知识问答 (Knowledge Question Answering)

14. 开放域问答 (Open Domain Question Answering)

15. 数学计算 (Mathematical Calculation)

16. 三元组抽取 (Triple Extraction)

17. 文本生成 (Text Generation)

18. 一元多项式求根 (Solving Univariate Polynomials)

19. 几何证明 (Geometric Proof)

20. 数学问题 (Math Problems)

21. 图形推理 (Graphical Reasoning)

22. 物理问答 (Physics Question Answering)

Here's a breakdown of each model's performance, with approximate values:

* **Baichuan2-13B (Blue):** Starts around 68% accuracy, dips to ~30% for 摘要, rises to ~82% for 文本分类, fluctuates between 40-80% for most tasks, and ends around 60% for 图形推理. Generally performs well, with several peaks above 70%.

* **LLaMA2-13B (Orange):** Starts around 65%, dips to ~25% for 摘要, peaks at ~85% for 文本分类, and generally stays between 30-60% for most tasks. Ends around 20% for 图形推理. Shows a strong peak for 文本分类 but is generally lower than Baichuan2-13B.

* **Qwen-14B (Green):** Starts very low at ~5%, rises to ~30% for 写作, fluctuates significantly between 10-40% for most tasks, and ends around 15% for 图形推理. Consistently the lowest performing model.

* **InternLM2-Math-20B (Red):** Starts around 65%, dips to ~30% for 写作, peaks at ~82% for 知识问答, and fluctuates between 40-80% for most tasks. Ends around 80% for 图形推理. Strong performance on mathematical and reasoning tasks.

### Key Observations

* **文本分类** consistently shows the highest accuracy for Baichuan2-13B and LLaMA2-13B, exceeding 80% for both.

* **Qwen-14B** consistently underperforms compared to the other three models across all tasks.

* **InternLM2-Math-20B** excels in tasks related to mathematical reasoning (数学计算, 一元多项式求根, 几何证明, 数学问题, 图形推理).

* The accuracy scores fluctuate considerably across different tasks for all models, indicating varying strengths and weaknesses.

* The "摘要" (Summary) task consistently results in lower accuracy for all models.

### Interpretation

The chart demonstrates a clear performance difference between the four language models. Baichuan2-13B and LLaMA2-13B are generally strong performers, while Qwen-14B lags behind. InternLM2-Math-20B stands out in mathematical and reasoning tasks, suggesting a specialized training focus. The variability in accuracy across tasks highlights the challenges of achieving consistent performance in diverse NLP applications. The low scores on the "摘要" task suggest that summarization remains a difficult problem for these models. The data suggests that model architecture and training data significantly impact performance on specific tasks. The high accuracy on "文本分类" for Baichuan2-13B and LLaMA2-13B could indicate a strong ability to discern patterns in text, while InternLM2-Math-20B's success in mathematical tasks points to a specialized mathematical reasoning capability.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Line Chart: Accuracy Comparison of Four Language Models on Mathematical Topics

### Overview

This image is a line chart comparing the performance (accuracy) of four different large language models (LLMs) across a wide range of mathematical topics. The chart displays the accuracy percentage for each model on each topic, allowing for a direct comparison of their strengths and weaknesses in mathematical reasoning. The data is presented as four distinct, jagged lines, each corresponding to a specific model.

### Components/Axes

* **Chart Type:** Multi-line chart.

* **Y-Axis:**

* **Label:** "Accuracy" (written vertically on the left side).

* **Scale:** Linear scale from 0 to approximately 90.

* **Major Gridlines:** Horizontal dashed lines at intervals of 20 (0, 20, 40, 60, 80).

* **X-Axis:**

* **Label:** None explicitly stated. The axis represents discrete mathematical topics.

* **Tick Labels:** A series of mathematical topic names written in Chinese, rotated at a 45-degree angle for readability. The full list of topics (with English translations) is provided in the Detailed Analysis section.

* **Legend:**

* **Position:** Centered at the top of the chart, above the plot area.

* **Content:** Four entries, each with a colored line segment and marker, followed by the model name.

1. **Blue line with circle markers:** `Baichuan2-13B`

2. **Orange line with circle markers:** `LLaMA2-13B`

3. **Green line with circle markers:** `Qwen-14B`

4. **Red line with circle markers:** `InternLM2-Math-20B`

### Detailed Analysis

The chart plots accuracy (in percent) for each model across 39 mathematical topics. Below is an approximate data extraction for each model, listed in the order the topics appear on the x-axis (left to right). Values are estimated from the chart's gridlines and carry an uncertainty of roughly ±3-5 percentage points. The Baichuan2-13B and LLaMA2-13B lists stop at topics 37 and 38, respectively.

**X-Axis Topics (Chinese -> English Translation):**

1. 全等三角形 -> Congruent Triangles

2. 等腰三角形 -> Isosceles Triangles

3. 等边三角形 -> Equilateral Triangles

4. 平行四边形性质 -> Properties of Parallelograms

5. 圆周角定理 -> Inscribed Angle Theorem

6. 弧长和扇形面积 -> Arc Length and Sector Area

7. 点与圆的位置关系 -> Positional Relationship between a Point and a Circle

8. 函数与二元一次方程 -> Function and Linear Equation in Two Variables

9. 函数与一元一次方程 -> Function and Linear Equation in One Variable

10. 函数与一元二次方程 -> Function and Quadratic Equation in One Variable

11. 求一次函数的解析式 -> Finding the Analytic Expression of a Linear Function

12. 二次函数的性质 -> Properties of Quadratic Functions

13. 反比例函数的性质 -> Properties of Inverse Proportional Functions

14. 反比例函数的应用 -> Application of Inverse Proportional Functions

15. 点的坐标特征 -> Coordinate Characteristics of Points

16. 代数式求值 -> Evaluating Algebraic Expressions

17. 同底数幂 -> Powers with the Same Base

18. 约分与通分 -> Reducing Fractions and Finding a Common Denominator

19. 十字相乘法 -> Cross-Multiplication Method (for factoring)

20. 提公因式法 -> Factoring by Common Factor

21. 流程图 -> Flowcharts

22. 简单的轴对称图形 -> Simple Axially Symmetric Figures

23. 整式的乘法与因式分解 -> Multiplication of Integral Expressions and Factorization

24. 二次根式的乘除 -> Multiplication and Division of Quadratic Radicals

25. 二次根式的加减 -> Addition and Subtraction of Quadratic Radicals

26. 平方根与算术平方根 -> Square Root and Arithmetic Square Root

27. 一元一次方程的应用 -> Application of Linear Equation in One Variable

28. 一元二次方程的解法 -> Solution of Quadratic Equation in One Variable

29. 一元二次方程的应用 -> Application of Quadratic Equation in One Variable

30. 一元一次不等式 -> Linear Inequality in One Variable

31. 一元一次不等式组 -> System of Linear Inequalities in One Variable

32. 解一元二次方程 -> Solving Quadratic Equation in One Variable

33. 分式方程的应用 -> Application of Fractional Equations

34. 分式的化简求值 -> Simplification and Evaluation of Fractions

35. 数据的集中趋势 -> Central Tendency of Data

36. 数据的波动程度 -> Dispersion of Data

37. 频数分布直方图 -> Frequency Distribution Histogram

38. 概率的求法 -> Calculation of Probability

39. 随机事件与概率 -> Random Events and Probability

**Approximate Accuracy Data by Model:**

* **Baichuan2-13B (Blue Line):**

* **Trend:** Highly volatile, with frequent sharp peaks and troughs. Shows strong performance on several algebraic and geometric topics but also significant dips.

* **Sample Data Points (Topic #, ~Accuracy%):** (1, 65), (2, 55), (3, 70), (4, 45), (5, 35), (6, 35), (7, 25), (8, 50), (9, 55), (10, 45), (11, 80), (12, 70), (13, 55), (14, 40), (15, 55), (16, 78), (17, 85), (18, 50), (19, 68), (20, 60), (21, 40), (22, 45), (23, 55), (24, 40), (25, 65), (26, 50), (27, 40), (28, 80), (29, 45), (30, 75), (31, 55), (32, 50), (33, 70), (34, 70), (35, 70), (36, 45), (37, 50).

* **LLaMA2-13B (Orange Line):**

* **Trend:** Generally lower accuracy than the other models, with a few notable peaks. Performance is particularly weak on geometry and data statistics topics.

* **Sample Data Points (Topic #, ~Accuracy%):** (1, 35), (2, 20), (3, 15), (4, 20), (5, 15), (6, 5), (7, 25), (8, 45), (9, 50), (10, 20), (11, 55), (12, 45), (13, 20), (14, 20), (15, 60), (16, 50), (17, 55), (18, 20), (19, 50), (20, 45), (21, 5), (22, 10), (23, 15), (24, 35), (25, 25), (26, 15), (27, 25), (28, 40), (29, 25), (30, 40), (31, 30), (32, 25), (33, 60), (34, 30), (35, 40), (36, 15), (37, 30), (38, 50).

* **Qwen-14B (Green Line):**

* **Trend:** Shows the most consistent low-to-mid range performance, with very few high peaks. It frequently has the lowest accuracy, especially on geometry and equation-solving topics.

* **Sample Data Points (Topic #, ~Accuracy%):** (1, 5), (2, 10), (3, 5), (4, 25), (5, 15), (6, 5), (7, 20), (8, 0), (9, 5), (10, 5), (11, 15), (12, 10), (13, 15), (14, 5), (15, 45), (16, 30), (17, 5), (18, 15), (19, 30), (20, 30), (21, 15), (22, 5), (23, 40), (24, 15), (25, 20), (26, 10), (27, 20), (28, 20), (29, 5), (30, 20), (31, 20), (32, 40), (33, 25), (34, 0), (35, 30), (36, 15), (37, 10), (38, 5), (39, 10).

* **InternLM2-Math-20B (Red Line):**

* **Trend:** Often the top-performing model, with several high peaks above 80%. It shows particular strength in algebra, functions, and probability, but also has significant variability.

* **Sample Data Points (Topic #, ~Accuracy%):** (1, 45), (2, 65), (3, 65), (4, 70), (5, 55), (6, 20), (7, 40), (8, 35), (9, 50), (10, 25), (11, 90), (12, 65), (13, 65), (14, 25), (15, 45), (16, 75), (17, 70), (18, 70), (19, 85), (20, 83), (21, 25), (22, 30), (23, 50), (24, 55), (25, 60), (26, 35), (27, 35), (28, 75), (29, 50), (30, 75), (31, 35), (32, 80), (33, 50), (34, 25), (35, 55), (36, 75), (37, 85), (38, 70), (39, 70).
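As a rough cross-check of the trends described above, the following sketch parses `(topic, accuracy)` pairs in the format used in these lists and computes simple per-model statistics. Only the first eight points of each list are copied in to keep the example short. The helper names `parse_points` and `summarize` are illustrative assumptions, not part of any tool referenced on this page.

```python
import re
from statistics import mean

# First eight (topic #, ~accuracy %) pairs per model, copied from the lists above.
SAMPLE = {
    "Baichuan2-13B": "(1, 65), (2, 55), (3, 70), (4, 45), (5, 35), (6, 35), (7, 25), (8, 50)",
    "LLaMA2-13B": "(1, 35), (2, 20), (3, 15), (4, 20), (5, 15), (6, 5), (7, 25), (8, 45)",
    "Qwen-14B": "(1, 5), (2, 10), (3, 5), (4, 25), (5, 15), (6, 5), (7, 20), (8, 0)",
    "InternLM2-Math-20B": "(1, 45), (2, 65), (3, 65), (4, 70), (5, 55), (6, 20), (7, 40), (8, 35)",
}


def parse_points(text):
    """Turn '(1, 65), (2, 55)' into {1: 65.0, 2: 55.0}."""
    pairs = re.findall(r"\((\d+),\s*(\d+(?:\.\d+)?)\)", text)
    return {int(topic): float(acc) for topic, acc in pairs}


def summarize(points):
    """Mean, minimum, and maximum of the estimated accuracies."""
    values = list(points.values())
    return {"mean": mean(values), "min": min(values), "max": max(values)}


summary = {model: summarize(parse_points(text)) for model, text in SAMPLE.items()}

for model, stats in summary.items():
    print(f"{model}: mean={stats['mean']:.1f}, min={stats['min']:.0f}, max={stats['max']:.0f}")
```

Because topics 1-8 are mostly geometry, statistics on this subset are not representative of the whole chart; pasting the full lists into `SAMPLE` would give a fairer summary, still subject to the estimation uncertainty noted earlier.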

### Key Observations

1. **Performance Hierarchy:** `InternLM2-Math-20B` (Red) and `Baichuan2-13B` (Blue) are generally the top performers, frequently trading the lead. `LLaMA2-13B` (Orange) and `Qwen-14B` (Green) consistently perform at a lower tier.

2. **Topic Sensitivity:** All models show extreme sensitivity to the specific mathematical topic. Accuracy can swing by 40-60 percentage points between adjacent topics. This suggests the models' mathematical reasoning is not robust or generalized but highly dependent on the specific problem type.

3. **Model-Specific Strengths:**

* `InternLM2-Math-20B` peaks on topics like "Finding the Analytic Expression of a Linear Function" (#11, ~90%) and "Solution of Quadratic Equation in One Variable" (#28, ~75%).

* `Baichuan2-13B` excels on "Powers with the Same Base" (#17, ~85%) and "Application of Fractional Equations" (#33, ~70%).

* `LLaMA2-13B` has a notable peak on "Coordinate Characteristics of Points" (#15, ~60%).

* `Qwen-14B` performs best on "Coordinate Characteristics of Points" (#15, ~45%) and "Solving Quadratic Equation in One Variable" (#32, ~40%).

4. **Common Difficult Areas:** Geometry topics (e.g., #5-7, Inscribed Angle Theorem, Arc Length, Point-Circle Relationship) and data statistics (#35-37) appear challenging for most models, particularly `LLaMA2-13B` and `Qwen-14B`, which often score below 20% in these areas.

5. **Volatility:** The green line (`Qwen-14B`) is the most consistently low, while the red line (`InternLM2-Math-20B`) exhibits the highest peaks but also deep valleys, indicating specialized rather than broad competence.
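The topic-sensitivity observation can be checked directly against the extracted points. The sketch below finds the largest change in accuracy between consecutive topics; the function name `max_adjacent_swing` is a hypothetical helper, and the inputs are topics 9-12 for two models, copied from the Sample Data Points lists.

```python
def max_adjacent_swing(points):
    """Return (topic_a, topic_b, swing) for the largest absolute change
    between consecutive topics, or None if there are fewer than two points."""
    ordered = sorted(points)
    best = None
    for (t1, a1), (t2, a2) in zip(ordered, ordered[1:]):
        swing = abs(a2 - a1)
        if best is None or swing > best[2]:
            best = (t1, t2, swing)
    return best


# Topics 9-12, copied from the Sample Data Points lists (estimated values).
internlm = [(9, 50), (10, 25), (11, 90), (12, 65)]
baichuan = [(9, 55), (10, 45), (11, 80), (12, 70)]

print("InternLM2-Math-20B:", max_adjacent_swing(internlm))
print("Baichuan2-13B:", max_adjacent_swing(baichuan))
```

Since each reading is only an estimate, the swing between two topics inherits the uncertainty of both readings, so it is best read as an order of magnitude rather than an exact figure.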

### Interpretation

This chart provides a granular diagnostic of LLM capabilities in mathematical reasoning, moving beyond aggregate benchmarks. The data suggests that:

1. **Specialization over Generalization:** The high volatility indicates that these models have not achieved a unified "understanding" of mathematics. Instead, they possess a patchwork of competencies, likely reflecting biases in their training data towards certain problem formats or topics. A model may excel at algebraic manipulation but fail at geometric visualization.

2. **The "Math" in Model Names Matters:** The `InternLM2-Math-20B` model, which likely underwent math-specific fine-tuning or training, demonstrates a clear, though not absolute, advantage, especially on complex algebraic tasks. This validates the approach of domain-specific adaptation for technical fields.

3. **Instruction Following vs. Reasoning:** The poor performance on applied topics (e.g., "Application of...") across several models may highlight a gap between procedural knowledge (solving a given equation type) and the deeper reasoning required to model a word problem into a mathematical formulation.

4. **Implications for Use:** Users cannot assume consistent performance from any single model across a math curriculum. A model strong in algebra may be unreliable for geometry. This underscores the need for topic-aware model selection or ensemble approaches for educational or technical applications.

5. **Data as a Diagnostic Tool:** For developers, the specific topics where a model fails (e.g., `Qwen-14B` on "Congruent Triangles" or `LLaMA2-13B` on "Flowcharts") provide direct targets for improving training data curation or fine-tuning strategies.

In essence, the chart reveals that current LLMs are not monolithic "math solvers" but tools with highly variable and topic-dependent proficiencies. Their performance is a complex function of model architecture, training data composition, and potential specialized tuning, with no single model demonstrating comprehensive mastery.
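One way to picture the topic-aware model selection mentioned in the list above is a per-topic lookup that keeps every model whose estimated accuracy is close to the best one. This is a minimal sketch under stated assumptions: `pick_models` is a hypothetical helper, the 5-point `margin` is chosen here to mirror the stated reading uncertainty, and the four topics are copied from the Sample Data Points lists.

```python
# Estimated accuracy (%) for four topics, copied from the Sample Data Points lists.
ACCURACY = {
    11: {"Baichuan2-13B": 80, "LLaMA2-13B": 55, "Qwen-14B": 15, "InternLM2-Math-20B": 90},
    15: {"Baichuan2-13B": 55, "LLaMA2-13B": 60, "Qwen-14B": 45, "InternLM2-Math-20B": 45},
    17: {"Baichuan2-13B": 85, "LLaMA2-13B": 55, "Qwen-14B": 5, "InternLM2-Math-20B": 70},
    33: {"Baichuan2-13B": 70, "LLaMA2-13B": 60, "Qwen-14B": 25, "InternLM2-Math-20B": 50},
}


def pick_models(accuracy, margin=5):
    """For each topic, list the models within `margin` points of the top score.

    The margin is an assumption: differences smaller than the reading
    uncertainty are treated as ties rather than as a clear winner.
    """
    choice = {}
    for topic, scores in accuracy.items():
        top = max(scores.values())
        choice[topic] = sorted(m for m, s in scores.items() if top - s <= margin)
    return choice


for topic, models in pick_models(ACCURACY).items():
    print(topic, models)
```

Returning a list rather than a single name keeps near-ties visible, which matters when the underlying values are read off a chart rather than taken from the original results table.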


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graph: Accuracy Comparison of Different Models on Chinese Question Answering Tasks

### Overview

The image is a line graph comparing the accuracy of four AI models (Baichuan2-13B, LLaMA2-13B, Qwen-14B, InternLM2-Math-20B) across 30 Chinese question-answering categories. The y-axis represents accuracy (0-100%), and the x-axis lists question categories in Chinese. The graph shows significant fluctuations in performance across models and categories.

### Components/Axes

- **Legend**: Top-left corner, color-coded models:

- Blue: Baichuan2-13B

- Orange: LLaMA2-13B

- Green: Qwen-14B

- Red: InternLM2-Math-20B

- **Y-axis**: "Accuracy (%)" with a dashed reference line at 80%.

- **X-axis**: 30 Chinese question categories (transcribed below with English translations):

1. 全球化 (Globalization)

2. 经济学 (Economics)

3. 环境科学 (Environmental Science)

4. 量子力学 (Quantum Mechanics)

5. 文学分析 (Literary Analysis)

6. 化学反应 (Chemical Reactions)

7. 现代哲学 (Modern Philosophy)

8. 生物学基础 (Biology Basics)

9. 计算机科学 (Computer Science)

10. 古代历史 (Ancient History)

11. 物理学原理 (Physics Principles)

12. 法律体系 (Legal Systems)

13. 心理学理论 (Psychology Theories)

14. 数学建模 (Mathematical Modeling)

15. 地理学概念 (Geography Concepts)

16. 社会学研究 (Sociology Research)

17. 天文学现象 (Astronomy Phenomena)

18. 语言学分析 (Linguistics Analysis)

19. 伦理学讨论 (Ethics Discussion)

20. 统计学方法 (Statistical Methods)

21. 遗传学原理 (Genetics Principles)

22. 纳米技术 (Nanotechnology)

23. 机器学习 (Machine Learning)

24. 古典文学 (Classical Literature)

25. 现代艺术 (Modern Art)

26. 电影理论 (Film Theory)

27. 建筑设计 (Architectural Design)

28. 旅游景点 (Tourism Attractions)

29. 食品科学 (Food Science)

30. 健康与营养 (Health & Nutrition)

### Detailed Analysis

1. **Baichuan2-13B (Blue)**:

- Starts at ~65% (Globalization).

- Peaks at ~85% (Physics Principles, 11th category).

- Ends at ~50% (Health & Nutrition).

- Fluctuates moderately, with dips below 40% in 3 categories.

2. **LLaMA2-13B (Orange)**:

- Starts at ~35% (Globalization).

- Peaks at ~60% (Machine Learning, 23rd category).

- Ends at ~45% (Health & Nutrition).

- Sharp drops below 20% in 4 categories.

3. **Qwen-14B (Green)**:

- Starts at ~10% (Globalization).

- Peaks at ~80% (Mathematical Modeling, 14th category).

- Ends at ~25% (Health & Nutrition).

- Extreme volatility, with 0% in 1 category (Ancient History).

4. **InternLM2-Math-20B (Red)**:

- Starts at ~45% (Globalization).

- Peaks at ~90% (Mathematical Modeling, 14th category).

- Ends at ~70% (Health & Nutrition).

- Most consistent high performance, with only 2 dips below 50%.

### Key Observations

- **Highest Peaks**: InternLM2-Math-20B (90%) and Qwen-14B (80%) dominate in specialized categories (Mathematical Modeling).

- **Lowest Performance**: Qwen-14B struggles in Ancient History (0%) and LLaMA2-13B in Quantum Mechanics (~5%).

- **Stability**: Baichuan2-13B shows the least variance, staying mostly within the 40-85% range.

- **Final Performance**: InternLM2-Math-20B outperforms the others by roughly 20 percentage points on average.

### Interpretation

The data suggests **InternLM2-Math-20B** is the most robust model overall, excelling in complex domains like mathematics and maintaining high accuracy across diverse topics. **Qwen-14B** demonstrates exceptional capability in specialized areas (e.g., mathematical modeling) but lacks consistency, failing entirely in historical questions. **Baichuan2-13B** offers balanced performance, while **LLaMA2-13B** underperforms in foundational sciences. The variability highlights the importance of model specialization: InternLM2-Math-20B’s math-focused training likely explains its dominance in analytical tasks, whereas Qwen-14B’s strengths may stem from domain-specific fine-tuning. The graph underscores the need for model selection based on use-case requirements rather than general performance metrics.
