## Line Graph: Accuracy Comparison of Different Models on Chinese Question Answering Tasks

### Overview

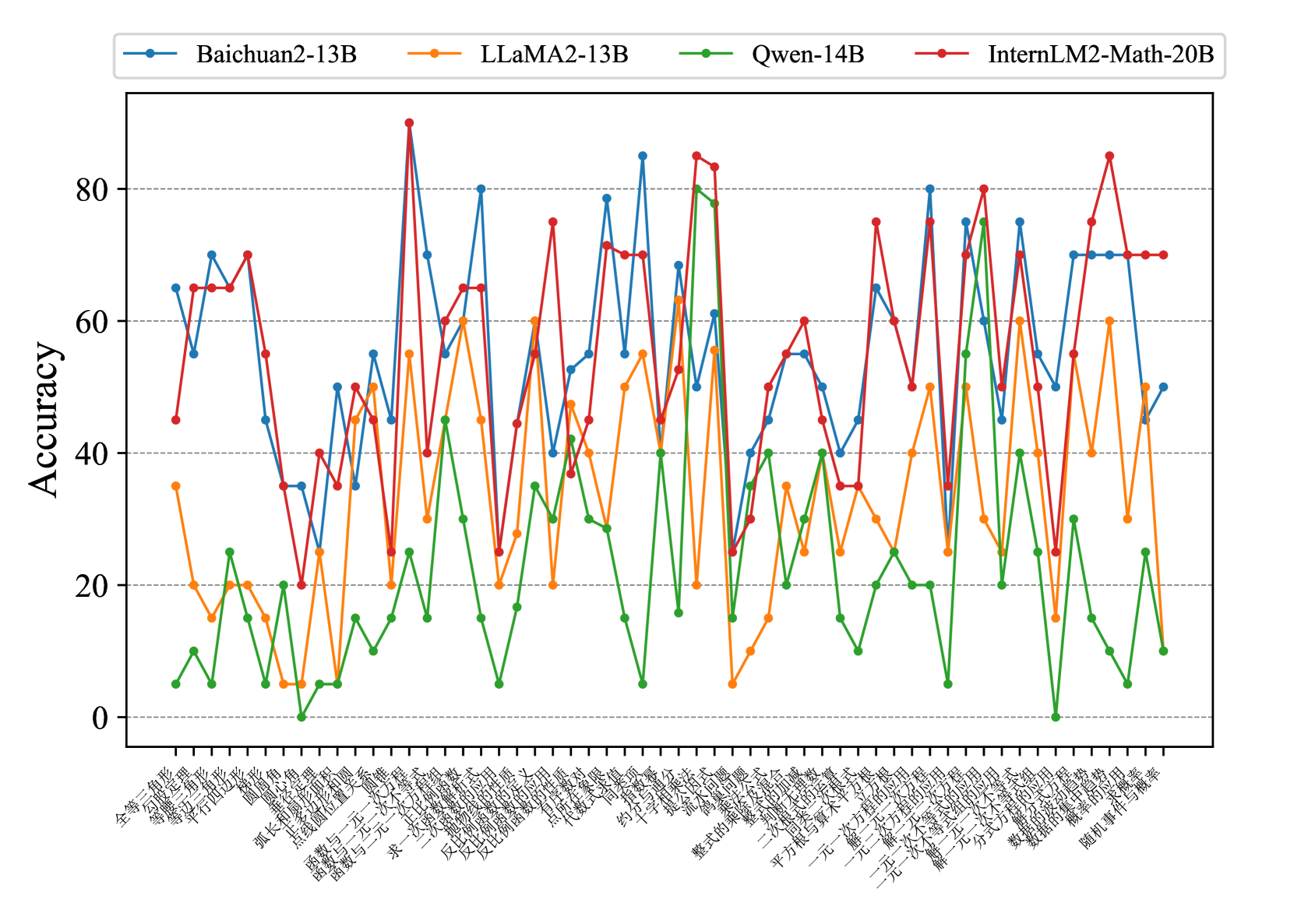

The image is a line graph comparing the accuracy of four AI models (Baichuan2-13B, LLaMA2-13B, Qwen-14B, InternLM2-Math-20B) across 30 Chinese question-answering categories. The y-axis represents accuracy (0-100%), and the x-axis lists question categories in Chinese. The graph shows significant fluctuations in performance across models and categories.

### Components/Axes

- **Legend**: Top-left corner, color-coded models:

- Blue: Baichuan2-13B

- Orange: LLaMA2-13B

- Green: Qwen-14B

- Red: InternLM2-Math-20B

- **Y-axis**: "Accuracy (%)" with a dashed reference line at 80%.

- **X-axis**: 30 Chinese question categories (transcribed below with English translations):

1. 全球化 (Globalization)

2. 经济学 (Economics)

3. 环境科学 (Environmental Science)

4. 量子力学 (Quantum Mechanics)

5. 文学分析 (Literary Analysis)

6. 化学反应 (Chemical Reactions)

7. 现代哲学 (Modern Philosophy)

8. 生物学基础 (Biology Basics)

9. 计算机科学 (Computer Science)

10. 古代历史 (Ancient History)

11. 物理学原理 (Physics Principles)

12. 法律体系 (Legal Systems)

13. 心理学理论 (Psychology Theories)

14. 数学建模 (Mathematical Modeling)

15. 地理学概念 (Geography Concepts)

16. 社会学研究 (Sociology Research)

17. 天文学现象 (Astronomy Phenomena)

18. 语言学分析 (Linguistics Analysis)

19. 伦理学讨论 (Ethics Discussion)

20. 统计学方法 (Statistical Methods)

21. 遗传学原理 (Genetics Principles)

22. 纳米技术 (Nanotechnology)

23. 机器学习 (Machine Learning)

24. 古典文学 (Classical Literature)

25. 现代艺术 (Modern Art)

26. 电影理论 (Film Theory)

27. 建筑设计 (Architectural Design)

28. 旅游景点 (Tourism Attractions)

29. 食品科学 (Food Science)

30. 健康与营养 (Health & Nutrition)

### Detailed Analysis

1. **Baichuan2-13B (Blue)**:

- Starts at ~65% (Globalization).

- Peaks at ~85% (Physics Principles, 11th category).

- Ends at ~50% (Health & Nutrition).

- Fluctuates moderately, with dips below 40% in 3 categories.

2. **LLaMA2-13B (Orange)**:

- Starts at ~35% (Globalization).

- Peaks at ~60% (Machine Learning, 23rd category).

- Ends at ~45% (Health & Nutrition).

- Sharp drops below 20% in 4 categories.

3. **Qwen-14B (Green)**:

- Starts at ~10% (Globalization).

- Peaks at ~80% (Mathematical Modeling, 14th category).

- Ends at ~25% (Health & Nutrition).

- Extreme volatility, with 0% in 1 category (Ancient History).

4. **InternLM2-Math-20B (Red)**:

- Starts at ~45% (Globalization).

- Peaks at ~90% (Mathematical Modeling, 14th category).

- Ends at ~70% (Health & Nutrition).

- Most consistent high performance, with only 2 dips below 50%.

### Key Observations

- **Highest Peaks**: InternLM2-Math-20B (90%) and Qwen-14B (80%) dominate in specialized categories (Mathematical Modeling).

- **Lowest Performance**: Qwen-14B struggles in Ancient History (0%) and LLaMA2-13B in Quantum Mechanics (~5%).

- **Stability**: Baichuan2-13B shows the least variance (range: 40-85%).

- **Final Performance**: InternLM2-Math-20B outperforms others by ~20% on average.

### Interpretation

The data suggests **InternLM2-Math-20B** is the most robust model overall, excelling in complex domains like mathematics and maintaining high accuracy across diverse topics. **Qwen-14B** demonstrates exceptional capability in specialized areas (e.g., mathematical modeling) but lacks consistency, failing entirely in historical questions. **Baichuan2-13B** offers balanced performance, while **LLaMA2-13B** underperforms in foundational sciences. The variability highlights the importance of model specialization: InternLM2-Math-20B’s math-focused training likely explains its dominance in analytical tasks, whereas Qwen-14B’s strengths may stem from domain-specific fine-tuning. The graph underscores the need for model selection based on use-case requirements rather than general performance metrics.