## System Architecture Diagram: LLM-Based Agent Control Loop with Failure Memory

### Overview

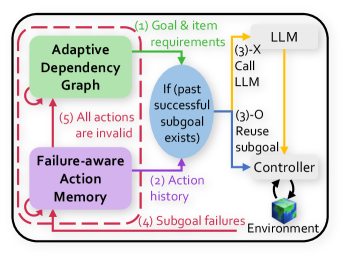

This image is a system architecture diagram of a control loop for an AI agent that uses a Large Language Model (LLM) for planning. It shows the flow of information and decisions between the core components, emphasizing goal processing, the subgoal-reuse check, action execution, and failure memory. The system handles subgoal failures by recording them in memory, and it avoids redundant planning by reusing subgoals that succeeded in the past.

### Components

The diagram consists of several labeled components connected by numbered, directional arrows indicating process flow. The components are spatially arranged as follows:

* **Top-Left (Green Box):** `Adaptive Dependency Graph`

* **Bottom-Left (Purple Box):** `Failure-aware Action Memory`

* **Center (Blue Oval):** `If (past successful subgoal exists)`

* **Top-Right (Grey Box):** `LLM`

* **Right (White Box):** `Controller`

* **Bottom-Right (3D Cube Icon):** `Environment`

A dashed red line encloses the `Adaptive Dependency Graph`, `Failure-aware Action Memory`, and the central decision oval, suggesting they form a core subsystem.

### Detailed Analysis: Process Flow and Components

The process flow is indicated by numbered arrows. The sequence and labels are as follows:

1. **(1) Goal & item requirements:** A green arrow flows from the `LLM` to the `Adaptive Dependency Graph`. This indicates the LLM provides high-level goals and requirements to the graph structure.

2. **(2) Action history:** A purple arrow flows from the `Failure-aware Action Memory` to the central decision oval (`If (past successful subgoal exists)`). This provides historical data on past actions and their outcomes.

3. **(3)-X Call LLM / (3)-O Reuse subgoal:** This is a decision point. Two arrows originate from the central oval:

   * **(3)-X Call LLM (Yellow Arrow):** If the condition ("past successful subgoal exists") is *not* met (marked 'X'), the flow goes to the `LLM` to generate a new plan or subgoal.

   * **(3)-O Reuse subgoal (Blue Arrow):** If the condition *is* met (marked 'O'), the flow goes directly to the `Controller`, reusing a previously successful subgoal.

4. **(4) Subgoal failures:** A red arrow flows from the `Environment` back to the `Failure-aware Action Memory`. This logs failures encountered during execution in the environment into the memory system.

5. **(5) All actions are invalid:** A red, dashed arrow flows from the `Failure-aware Action Memory` back to the `Adaptive Dependency Graph`. This indicates that when all candidate actions are found invalid (likely because each has a recorded failure), the dependency graph is updated accordingly.
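The five numbered arrows can be traced through a single control step. The sketch below is a minimal illustration under stated assumptions, not the system's implementation: the diagram specifies no interfaces, so `FailureAwareMemory`, `control_step`, and the callables passed to it (`llm_requirements`, `llm_subgoal`, `actions_for`, `execute`) are hypothetical names. It also assumes memory lookup is an exact match on the item name, and it models arrow (5) as discarding the item's requirements so the LLM is asked again.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Set


@dataclass
class FailureAwareMemory:
    """Hypothetical stand-in for the Failure-aware Action Memory."""
    successes: Dict[str, str] = field(default_factory=dict)      # item -> subgoal that worked
    failures: Dict[str, Set[str]] = field(default_factory=dict)  # item -> actions that failed


def control_step(
    item: str,
    graph: Dict[str, List[str]],                   # Adaptive Dependency Graph: item -> requirements
    memory: FailureAwareMemory,
    llm_requirements: Callable[[str], List[str]],  # hypothetical LLM call behind arrow (1)
    llm_subgoal: Callable[[str], str],             # hypothetical LLM call behind arrow (3)-X
    actions_for: Callable[[str], List[str]],       # Controller: subgoal -> candidate actions
    execute: Callable[[str], bool],                # Environment: True if the action succeeds
) -> str:
    # (1) Goal & item requirements: the LLM fills in the graph for unseen items.
    if item not in graph:
        graph[item] = llm_requirements(item)

    # (2) Action history: has a subgoal for this item succeeded before?
    subgoal: Optional[str] = memory.successes.get(item)
    if subgoal is None:
        subgoal = llm_subgoal(item)   # (3)-X Call LLM
    # otherwise (3)-O Reuse subgoal: no LLM call is made

    failed = memory.failures.setdefault(item, set())
    for action in actions_for(subgoal):
        if action in failed:
            continue                   # skip actions already recorded as failures
        if execute(action):            # Controller <-> Environment
            memory.successes[item] = subgoal
            return "success"
        failed.add(action)             # (4) Subgoal failures are logged in memory

    # (5) All actions are invalid: drop the item's requirements and any stored
    # subgoal, so the next attempt re-queries the LLM for both.
    graph.pop(item, None)
    memory.successes.pop(item, None)
    return "all_invalid"
```

Calling `control_step` twice for the same item exercises the reuse path: the second call makes no LLM calls and skips any action already recorded as a failure.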

The `Controller` has a bidirectional arrow connecting it to the `Environment`, representing the execution of actions and the reception of feedback or state changes from the environment.
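The bidirectional link between the `Controller` and the `Environment` can be illustrated with a toy environment. This is a sketch, not the system's actual interface: `ToyEnvironment`, its inventory-based `step` method, and `controller_execute` are hypothetical, and modeling actions as crafting items from an inventory is only an assumption suggested by the "item requirements" label. The controller sends actions one at a time, receives the new state plus a success flag after each, and stops at the first failure.

```python
from typing import Dict, List, Tuple

Action = Tuple[str, Dict[str, int]]  # (item to produce, items consumed); toy assumption


class ToyEnvironment:
    """Hypothetical environment whose state is an inventory of item counts."""

    def __init__(self, inventory: Dict[str, int]) -> None:
        self.inventory = dict(inventory)

    def step(self, action: Action) -> Tuple[Dict[str, int], bool]:
        product, cost = action
        if any(self.inventory.get(name, 0) < n for name, n in cost.items()):
            return dict(self.inventory), False  # feedback: failed, state unchanged
        for name, n in cost.items():
            self.inventory[name] -= n
        self.inventory[product] = self.inventory.get(product, 0) + 1
        return dict(self.inventory), True       # feedback: succeeded, new state


def controller_execute(
    env: ToyEnvironment, plan: List[Action]
) -> Tuple[List[Tuple[str, bool]], Dict[str, int]]:
    """Send actions to the environment in order; stop at the first failure."""
    feedback: List[Tuple[str, bool]] = []
    state = dict(env.inventory)
    for action in plan:
        state, ok = env.step(action)
        feedback.append((action[0], ok))
        if not ok:
            break  # a failure here is what arrow (4) would report to memory
    return feedback, state
```

With one `log` in the inventory, a plan to make `planks` and then a `stick` that needs two planks yields `[("planks", True), ("stick", False)]`, and the failed step is the kind of event the diagram routes back into memory.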

### Key Observations

* **Dual-Path Decision Logic:** The central design feature is the conditional check for past successful subgoals, which creates two distinct pathways: one for novel planning (calling the LLM) and one for efficient reuse of known-good strategies.

* **Closed-Loop Learning:** The system forms a closed loop: outcomes from the `Environment` are fed back into the `Failure-aware Action Memory`, which in turn shapes future decisions through the central condition and updates the `Adaptive Dependency Graph`. Only the failure path (arrow 4) is drawn explicitly; successes must also be recorded for the reuse check to have anything to find.

* **Explicit Failure Handling:** The diagram explicitly models failure propagation (arrows 4 and 5) as a critical input for system adaptation, not just an error state.

* **Spatial Grouping:** The dashed red box visually groups the memory, graph, and decision logic, highlighting them as the agent's internal cognitive core, separate from the LLM, the Controller, and the Environment.

### Interpretation

This diagram depicts an agent architecture designed for robustness and efficiency in sequential decision-making tasks. It aims to reduce reliance on costly or slow LLM calls by caching and reusing successful subgoals, a form of learning from experience.

The **Adaptive Dependency Graph** likely models the relationships between tasks, items, and goals, providing a structured representation of the problem space. The **Failure-aware Action Memory** acts as an episodic memory, storing not just actions but their contextual success/failure status.
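One plausible representation of these two structures is sketched below, assuming the graph maps each item to the items it requires and the memory is a flat log of (subgoal, action, outcome) episodes. The item names, `DEPENDENCIES`, `Episode`, `subgoal_order`, and `action_history` are all hypothetical. The sketch uses Python's standard `graphlib.TopologicalSorter` (Python 3.9+) to turn the graph into an order in which subgoals could be pursued, prerequisites first.

```python
from dataclasses import dataclass
from graphlib import TopologicalSorter
from typing import Dict, List, Set

# Hypothetical Adaptive Dependency Graph: item -> items it requires.
DEPENDENCIES: Dict[str, Set[str]] = {
    "wooden_pickaxe": {"planks", "stick"},
    "stick": {"planks"},
    "planks": {"log"},
    "log": set(),
}


def subgoal_order(graph: Dict[str, Set[str]], goal: str) -> List[str]:
    """Return the goal's prerequisites in dependency order, goal last."""
    needed: Dict[str, Set[str]] = {}
    stack = [goal]
    while stack:  # collect only the items the goal transitively depends on
        item = stack.pop()
        if item in needed:
            continue
        needed[item] = set(graph.get(item, set()))
        stack.extend(needed[item])
    # TopologicalSorter expects node -> predecessors, so requirements come first.
    return list(TopologicalSorter(needed).static_order())


@dataclass(frozen=True)
class Episode:
    """One hypothetical entry in the Failure-aware Action Memory."""
    subgoal: str
    action: str
    succeeded: bool


def action_history(memory: List[Episode], subgoal: str) -> List[Episode]:
    """Arrow (2): the episodes relevant to one subgoal, oldest first."""
    return [e for e in memory if e.subgoal == subgoal]
```

For the toy graph, `subgoal_order(DEPENDENCIES, "wooden_pickaxe")` returns `["log", "planks", "stick", "wooden_pickaxe"]`. Keeping success and failure in the same `Episode` record is what makes the memory "failure-aware" in this sketch.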

The central conditional (`If (past successful subgoal exists)`) is the key gating mechanism. It suggests the agent first tries to reuse a subgoal that has already succeeded before resorting to general reasoning (the LLM), much as a person falls back on a familiar procedure before working a problem out from scratch.
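The conditional behaves like a cache placed in front of the LLM. The sketch below assumes an exact-match lookup keyed on the item name (the diagram does not say how a "past successful subgoal" is matched; a similarity search would be an alternative) and caches only subgoals that are reported as successful. `SubgoalGate`, `next_subgoal`, `report`, and the `call_llm` callable are hypothetical.

```python
from typing import Callable, Dict


class SubgoalGate:
    """Hypothetical gate: reuse a past successful subgoal, else call the LLM."""

    def __init__(self, call_llm: Callable[[str], str]) -> None:
        self.call_llm = call_llm
        self.successful: Dict[str, str] = {}  # item -> subgoal that succeeded
        self.llm_calls = 0                    # counts the expensive path

    def next_subgoal(self, item: str) -> str:
        if item in self.successful:           # (3)-O Reuse subgoal
            return self.successful[item]
        self.llm_calls += 1                   # (3)-X Call LLM
        return self.call_llm(item)

    def report(self, item: str, subgoal: str, succeeded: bool) -> None:
        # Only successes are cached; after a failure the next request goes back to the LLM.
        if succeeded:
            self.successful[item] = subgoal


gate = SubgoalGate(lambda item: f"craft {item}")
for item in ["planks", "planks", "stick", "planks"]:
    subgoal = gate.next_subgoal(item)
    gate.report(item, subgoal, succeeded=True)
print(gate.llm_calls)  # 2
```

Four requests with every subgoal succeeding cost two LLM calls instead of four, which is the efficiency gain the reuse path is meant to deliver.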

The flow of "All actions are invalid" (5) is particularly noteworthy. It implies a mechanism for **negative learning**: when every action tried for a subgoal fails, the system does not merely record the failures; it updates its model of task dependencies (the graph) so that it avoids those invalid paths in the future. This is a deeper form of adaptation than simple action avoidance.
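The negative-learning step can be sketched as a graph that records which requirement sets have been proven invalid and refuses to reinstate them. This is an assumption about what arrow (5) does, not a documented algorithm: `AdaptiveDependencyGraph`, `on_all_actions_invalid`, and the `propose` callback (which in practice might be an LLM re-query) are hypothetical, and the item names are a toy example.

```python
from typing import Callable, Dict, FrozenSet, Set

Requirements = FrozenSet[str]


class AdaptiveDependencyGraph:
    """Hypothetical graph that revises requirements after total failure (arrow 5)."""

    def __init__(self, requirements: Dict[str, Requirements]) -> None:
        self.requirements: Dict[str, Requirements] = dict(requirements)
        # Requirement sets already proven invalid, per item.
        self.rejected: Dict[str, Set[Requirements]] = {}

    def on_all_actions_invalid(
        self,
        item: str,
        propose: Callable[[str, Set[Requirements]], Requirements],
    ) -> Requirements:
        # Every action for `item` failed, so treat its current requirements as wrong.
        current = self.requirements.get(item, frozenset())
        rejected = self.rejected.setdefault(item, set())
        rejected.add(current)
        # Ask for a replacement, passing the rejected sets so they can be avoided.
        proposal = propose(item, rejected)
        if proposal in rejected:
            raise ValueError(f"proposal for {item!r} repeats a rejected requirement set")
        self.requirements[item] = proposal
        return proposal


# Toy proposer: the first candidate that has not been rejected yet.
CANDIDATES = [frozenset({"stone", "stick"}), frozenset({"cobblestone", "stick"})]


def propose_next(item: str, rejected: Set[Requirements]) -> Requirements:
    return next(c for c in CANDIDATES if c not in rejected)


graph = AdaptiveDependencyGraph({"stone_pickaxe": frozenset({"stone", "stick"})})
print(sorted(graph.on_all_actions_invalid("stone_pickaxe", propose_next)))  # ['cobblestone', 'stick']
```

The `rejected` record is what distinguishes this from simple action avoidance: the invalid dependency itself is remembered, so no later proposal can restore it.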

Overall, the architecture balances the generality of an LLM with the efficiency and robustness of a structured memory and planning system, creating an agent that can learn from both its successes and its mistakes to improve its performance over time.