## System Architecture and Neural Network Models

### Overview

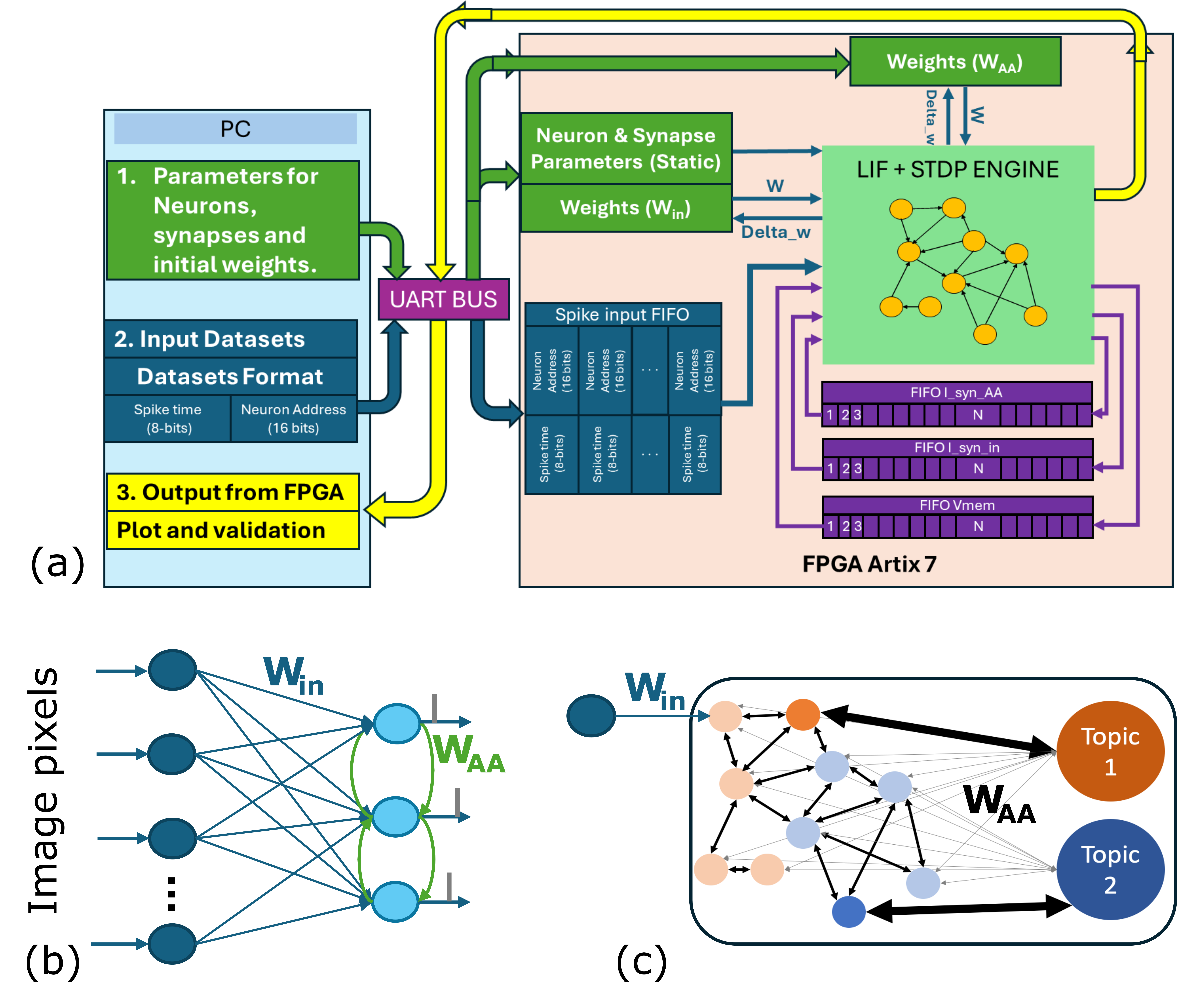

The image presents the architecture of a neuromorphic computing framework that combines FPGA-based spike processing with neural network models. It has three panels: (a) a high-level system diagram, (b) a feedforward neural network model, and (c) a topic modeling network. The design emphasizes real-time data processing, synaptic weight updates, and topic extraction.

---

### Components/Axes

#### (a) System Architecture

1. **PC Interface**:
   - **Parameters for Neurons, Synapses, and Initial Weights** (Green block).
   - **Input Datasets**:
     - **Spike time** (8 bits).
     - **Neuron Address** (16 bits).
   - **Output from FPGA**: Plot and validation (Yellow block).
2. **UART BUS**: Connects the PC to the FPGA (Purple block).
3. **Neuron & Synapse Parameters**:
   - **Static Parameters** (Green).
   - **Weights (W_in)** (Green).
4. **LIF + STDP Engine** (Light green):
   - **Spike Input FIFO**:
     - Spike time (8 bits).
     - Neuron Address (16 bits).
   - **FIFO Outputs**:
     - `FIFO_I_syn_AA` (N entries).
     - `FIFO_I_syn_in` (N entries).
     - `FIFO_Vmem` (N entries).
5. **FPGA Artix 7**: Hardware implementation (Light pink background).
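To make the 8-bit spike time and 16-bit neuron address concrete, the sketch below packs one spike event into a 24-bit word and sends it as three bytes over the UART link. The figure gives only the field widths; the field order, the big-endian byte order, and the helper names `pack_spike` and `unpack_spike` are hypothetical.

```python
# Hypothetical wire format (not specified in the figure): one spike event is a
# 24-bit word [8-bit spike time | 16-bit neuron address], sent as 3 big-endian bytes.
SPIKE_TIME_BITS = 8
ADDRESS_BITS = 16


def pack_spike(spike_time: int, address: int) -> bytes:
    """Pack one spike event into 3 bytes: [time][addr_hi][addr_lo]."""
    if not 0 <= spike_time < (1 << SPIKE_TIME_BITS):
        raise ValueError("spike time must fit in 8 bits (0-255)")
    if not 0 <= address < (1 << ADDRESS_BITS):
        raise ValueError("neuron address must fit in 16 bits (0-65535)")
    word = (spike_time << ADDRESS_BITS) | address
    return word.to_bytes(3, "big")


def unpack_spike(frame: bytes) -> tuple[int, int]:
    """Inverse of pack_spike: recover (spike_time, address) from a 3-byte frame."""
    if len(frame) != 3:
        raise ValueError("expected a 3-byte frame")
    word = int.from_bytes(frame, "big")
    return word >> ADDRESS_BITS, word & ((1 << ADDRESS_BITS) - 1)


frame = pack_spike(17, 1023)
print(frame.hex())          # 1103ff
print(unpack_spike(frame))  # (17, 1023)
```

Under this assumed format every event costs exactly three UART bytes, so the event rate the link can sustain follows directly from the configured baud rate.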

#### (b) Feedforward Neural Network

- **Input**: Image pixels (Blue nodes).
- **Weights**:
  - **W_in**: Connections from input to hidden layer (Blue arrows).
  - **W_AA**: Connections within hidden layer (Green arrows).
- **Output**: Hidden layer nodes (Blue and orange nodes).

#### (c) Topic Modeling Network

- **Input**: W_in (Blue node).
- **Hidden Layer**: Nodes with bidirectional connections (Black arrows).
- **Output Topics**:
  - **Topic 1** (Orange node).
  - **Topic 2** (Blue node).
- **Weights**: W_AA (Black arrows).

---

### Detailed Analysis

#### (a) System Architecture

- **Data Flow**:
  - Input datasets (spike times and neuron addresses) are transmitted over the UART BUS to the FPGA.
  - The LIF + STDP Engine processes the input spikes and updates the synaptic weights (W_AA) using delta_w (blue arrows).
  - The FPGA Artix 7 handles the FIFO operations for spike times and neuron addresses.
- **Key Parameters**:
  - Spike time: 8 bits.
  - Neuron Address: 16 bits.
  - State FIFOs (`FIFO_I_syn_AA`, `FIFO_I_syn_in`, `FIFO_Vmem`): N entries each, holding the synaptic currents and membrane potentials.
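A minimal sketch of one time step of the neuron update inside the LIF + STDP engine, assuming exponentially decaying synaptic currents and a forward-Euler membrane update. The three lists stand in for the N-entry `FIFO_Vmem`, `FIFO_I_syn_in`, and `FIFO_I_syn_AA` buffers; the class and function names, the time constants, and the threshold and reset values are illustrative assumptions, not values taken from the figure.

```python
import math


class LIFState:
    """Per-neuron state; each list mirrors one N-entry FIFO from panel (a)."""

    def __init__(self, n: int):
        self.n = n
        self.v_mem = [0.0] * n     # counterpart of FIFO_Vmem
        self.i_syn_in = [0.0] * n  # counterpart of FIFO_I_syn_in (input pathway)
        self.i_syn_aa = [0.0] * n  # counterpart of FIFO_I_syn_AA (recurrent pathway)


def lif_step(state, in_drive, aa_drive, dt=1.0, tau_m=20.0, tau_syn=5.0,
             v_rest=0.0, v_th=1.0, v_reset=0.0):
    """Advance every neuron by one time step and return the indices that spiked.

    in_drive / aa_drive are the weighted spike inputs arriving this step through
    W_in and W_AA. Currents are assumed pre-scaled to volts per time unit.
    """
    syn_decay = math.exp(-dt / tau_syn)
    spiked = []
    for k in range(state.n):
        # Exponentially decaying synaptic currents plus this step's new input.
        state.i_syn_in[k] = state.i_syn_in[k] * syn_decay + in_drive[k]
        state.i_syn_aa[k] = state.i_syn_aa[k] * syn_decay + aa_drive[k]
        i_total = state.i_syn_in[k] + state.i_syn_aa[k]
        # Forward-Euler step of dV/dt = (v_rest - V) / tau_m + I.
        state.v_mem[k] += dt * ((v_rest - state.v_mem[k]) / tau_m + i_total)
        # Threshold crossing: record a spike and reset the membrane potential.
        if state.v_mem[k] >= v_th:
            spiked.append(k)
            state.v_mem[k] = v_reset
    return spiked


state = LIFState(n=2)
first = lif_step(state, in_drive=[0.6, 0.0], aa_drive=[0.0, 0.0])
second = lif_step(state, in_drive=[0.0, 0.0], aa_drive=[0.0, 0.0])
print(first, second)  # [] [0]
```

Neuron 0 does not fire on the step its input arrives, but the decaying synaptic current keeps charging the membrane and pushes it over threshold one step later. Keeping the two synaptic currents in separate arrays, as the two I_syn FIFOs suggest, would let the input and recurrent pathways use different time constants if needed.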

#### (b) Feedforward Neural Network

- **Structure**:
  - Input layer: Image pixels.
  - Hidden layer: Fully connected through W_in (input to hidden) and W_AA (within the hidden layer).
  - Output layer: Not explicitly labeled; the hidden-layer nodes appear to serve as the output.
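The two weight matrices in (b) can be read as two separate drives into each hidden neuron: one from input-pixel spikes through W_in and one from hidden-layer spikes through W_AA. This event-driven sketch reads only the weight rows of neurons that spiked. The function name, the matrix sizes, and the weight values (including the negative, inhibitory W_AA entries) are made up for illustration.

```python
def synaptic_drive(weights, active):
    """Event-driven weighted sum: drive[j] = sum of weights[i][j] over spiking presynaptic i."""
    drive = [0.0] * len(weights[0])
    for i in active:  # only rows of presynaptic neurons that fired are read
        for j, w in enumerate(weights[i]):
            drive[j] += w
    return drive


# Hypothetical toy sizes and values: 3 input pixels, 2 hidden neurons.
W_in = [[0.25, 0.0],
        [0.125, 0.5],
        [0.5, 0.25]]
W_AA = [[0.0, -0.5],   # zero diagonal: no self-connections (assumption)
        [-0.5, 0.0]]

in_drive = synaptic_drive(W_in, active=[0, 2])  # pixels 0 and 2 spiked this step
aa_drive = synaptic_drive(W_AA, active=[1])     # hidden neuron 1 spiked on the previous step
print(in_drive, aa_drive)  # [0.75, 0.25] [-0.5, 0.0]
```

Reading only active rows is the usual motivation for event-driven processing: the work per step scales with the number of spikes rather than with the full size of the weight matrix.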

#### (c) Topic Modeling Network

- **Connections**:
  - Input W_in connects to the hidden nodes.
  - Hidden nodes connect to Topic 1 and Topic 2 via W_AA.
  - Bidirectional arrows suggest recurrent or associative processing.
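One plausible way to read out the topic network in (c), assuming each hidden neuron belongs to the population of one topic node, is to count spikes per population over a time window and pick the larger count. The neuron-to-topic assignment, the helper name `dominant_topic`, and the spike events are hypothetical; the figure does not specify a readout rule.

```python
from collections import Counter


def dominant_topic(events, topic_of):
    """Count spikes per topic population and return (winning topic, counts).

    events: iterable of (spike_time, neuron_address) pairs.
    topic_of: mapping from hidden-neuron address to its topic label.
    Neurons without a topic label are ignored.
    """
    counts = Counter(topic_of[addr] for _, addr in events if addr in topic_of)
    if not counts:
        return None, counts
    winner, _ = counts.most_common(1)[0]
    return winner, counts


# Hypothetical assignment of four hidden neurons to the two topic nodes in (c).
topic_of = {0: "Topic 1", 1: "Topic 1", 2: "Topic 2", 3: "Topic 2"}
events = [(1, 0), (2, 2), (3, 1), (5, 0), (6, 3)]
winner, counts = dominant_topic(events, topic_of)
print(winner, counts["Topic 1"], counts["Topic 2"])  # Topic 1 3 2
```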

---

### Key Observations

1. **Color-Coded Data Flow**:
   - Green: Synaptic weights (the W_in block in (a), the W_AA arrows in (b)) and static parameters.
   - Blue: Input data (spike times, neuron addresses).
   - Yellow: Plotting and validation of the FPGA output on the PC.
   - Purple: UART BUS communication.
2. **FPGA Integration**:
   - The LIF + STDP Engine uses FIFO buffers to manage real-time spike data.
   - The FPGA Artix 7 is the hardware backbone for parallel processing.
3. **Neural Network Design**:
   - W_in and W_AA govern the input-to-hidden and intra-hidden-layer connections, respectively.
   - The topic modeling network (c) reuses W_AA to connect the hidden nodes to the topic nodes, so updates to W_AA presumably determine which hidden units align with each topic.
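The spike input FIFO can be modeled in software as a bounded queue that releases, at each time step, the events whose spike time has come due. This sketch assumes events arrive in non-decreasing spike-time order and that a full FIFO drops new events; the class name `SpikeInputFIFO`, the capacity, and the drop policy are assumptions for illustration.

```python
from collections import deque


class SpikeInputFIFO:
    """Bounded FIFO of (spike_time, neuron_address) events.

    Assumes events are pushed in non-decreasing spike_time order.
    """

    def __init__(self, capacity=64):
        self.capacity = capacity
        self._queue = deque()

    def push(self, spike_time, address):
        """Enqueue an event; return False and drop it when the FIFO is full (assumed policy)."""
        if len(self._queue) >= self.capacity:
            return False
        self._queue.append((spike_time, address))
        return True

    def pop_due(self, t):
        """Dequeue every head event with spike_time <= t and return their addresses."""
        due = []
        while self._queue and self._queue[0][0] <= t:
            due.append(self._queue.popleft()[1])
        return due

    def __len__(self):
        return len(self._queue)


fifo = SpikeInputFIFO(capacity=3)
for event in [(0, 5), (0, 7), (2, 5)]:
    fifo.push(*event)
print(fifo.push(3, 1))  # False: the FIFO already holds 3 events
print(fifo.pop_due(0), fifo.pop_due(1), fifo.pop_due(2))  # [5, 7] [] [5]
```

Because events are time-ordered, only the head of the queue needs to be inspected each step, which is what makes a plain FIFO sufficient here instead of a priority queue.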

---

### Interpretation

1. **System Purpose**:
   - The architecture combines neuromorphic computing (LIF neurons, STDP plasticity) with an FPGA implementation for efficient real-time processing.
   - The UART BUS links the PC and the FPGA: the PC supplies parameters and input spikes and then plots and validates the FPGA output, which suggests a hybrid software-hardware design.
2. **Neural Network Functionality**:
   - The feedforward network (b) processes image data through weighted synaptic connections.
   - The topic modeling network (c) appears to extract latent topics from the input data, likely for classification or clustering.
3. **Biological Plausibility**:
   - LIF (leaky integrate-and-fire) neurons and STDP (spike-timing-dependent plasticity) mimic biological neural dynamics, enabling adaptive learning.
4. **Hardware Optimization**:
   - The FIFO buffers and the parallelism of the FPGA Artix 7 are likely what allow the system to keep up with high-rate spike data.

---

### Notable Trends

- **Weight Updates**: delta_w (blue arrows) indicates dynamic synaptic weight adjustment via STDP.

- **Topic Separation**: Topic 1 and Topic 2 in (c) suggest binary classification or disentangled representation learning.

- **Data Granularity**: An 8-bit spike time allows 256 distinct time stamps, and a 16-bit neuron address can index up to 65,536 neurons; these widths trade precision against the cost of storing and transmitting each event.
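The delta_w arrows correspond to an STDP weight update. The sketch below uses the common pair-based, additive form with exponential timing windows and clips the result to a bounded range. The figure does not say which STDP variant the engine implements, so the rule itself, the amplitudes (`a_plus`, `a_minus`), the time constants, and the weight bounds are all assumptions.

```python
import math


def stdp_delta_w(pre_times, post_times, a_plus=0.01, a_minus=0.012,
                 tau_plus=20.0, tau_minus=20.0):
    """All-pairs additive STDP.

    Pre-before-post (dt > 0) potentiates and post-before-pre (dt < 0) depresses,
    each scaled by an exponential window in |dt|. Coincident spikes contribute 0.
    """
    dw = 0.0
    for t_pre in pre_times:
        for t_post in post_times:
            dt = t_post - t_pre
            if dt > 0:
                dw += a_plus * math.exp(-dt / tau_plus)
            elif dt < 0:
                dw -= a_minus * math.exp(dt / tau_minus)
    return dw


def apply_delta_w(w, dw, w_min=0.0, w_max=1.0):
    """Add delta_w and clip the weight to [w_min, w_max] (bounds are assumptions)."""
    return min(w_max, max(w_min, w + dw))


print(round(stdp_delta_w([10], [15]), 6))  # 0.007788 (pre fires 5 steps before post)
print(stdp_delta_w([15], [10]) < 0)        # True (post before pre: depression)
print(apply_delta_w(0.995, 0.01))          # 1.0 (clipped at w_max)
```

Setting `a_minus` slightly larger than `a_plus` is a common choice to keep uncorrelated firing from steadily inflating the weights; it is a tuning assumption here, not a detail from the figure.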

---

### Conclusion

This system demonstrates a neuromorphic computing pipeline for real-time data processing, combining FPGA hardware acceleration with biologically inspired neural models. The integration of STDP for synaptic learning and topic modeling highlights its potential for applications in edge AI, sensory processing, and adaptive systems.