TECHNICAL ASSET FINGERPRINT

53787a11b2563884b7119388

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

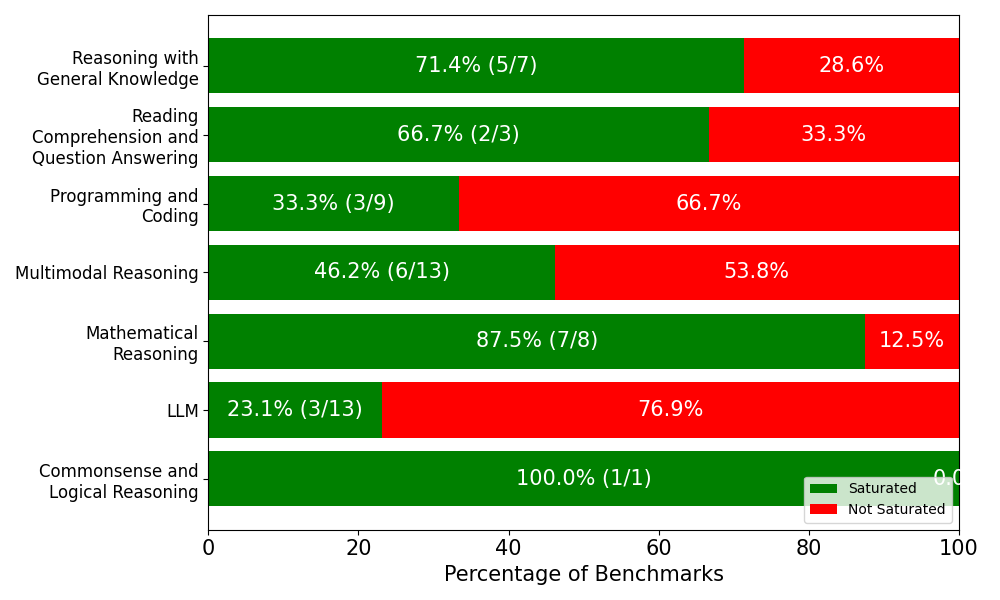

## Horizontal Bar Chart: Benchmark Saturation

### Overview

The image is a horizontal bar chart comparing the performance of a system across different reasoning and knowledge domains. Each bar represents a specific category, and the bar is divided into two colored segments: green ("Saturated") and red ("Not Saturated"). The chart displays the percentage and the fraction of benchmarks achieved for each category.

### Components/Axes

* **Y-axis:** Lists the categories being evaluated. From top to bottom:

* Reasoning with General Knowledge

* Reading Comprehension and Question Answering

* Programming and Coding

* Multimodal Reasoning

* Mathematical Reasoning

* LLM

* Commonsense and Logical Reasoning

* **X-axis:** Represents the "Percentage of Benchmarks," ranging from 0% to 100% in increments of 20%.

* **Legend:** Located in the bottom-right corner, indicating:

* Green: "Saturated"

* Red: "Not Saturated"

### Detailed Analysis

Here's a breakdown of each category's performance, including the trend and specific values:

* **Reasoning with General Knowledge:**

* Trend: Predominantly Saturated.

* Saturated (Green): 71.4% (5/7)

* Not Saturated (Red): 28.6%

* **Reading Comprehension and Question Answering:**

* Trend: Predominantly Saturated.

* Saturated (Green): 66.7% (2/3)

* Not Saturated (Red): 33.3%

* **Programming and Coding:**

* Trend: Predominantly Not Saturated.

* Saturated (Green): 33.3% (3/9)

* Not Saturated (Red): 66.7%

* **Multimodal Reasoning:**

* Trend: Slightly more Not Saturated than Saturated.

* Saturated (Green): 46.2% (6/13)

* Not Saturated (Red): 53.8%

* **Mathematical Reasoning:**

* Trend: Highly Saturated.

* Saturated (Green): 87.5% (7/8)

* Not Saturated (Red): 12.5%

* **LLM:**

* Trend: Overwhelmingly Not Saturated.

* Saturated (Green): 23.1% (3/13)

* Not Saturated (Red): 76.9%

* **Commonsense and Logical Reasoning:**

* Trend: Completely Saturated.

* Saturated (Green): 100.0% (1/1)

* Not Saturated (Red): 0.0%
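The percentages listed above follow directly from the fractions printed in the green segments. A minimal sketch of that arithmetic, assuming the saturated/total counts as transcribed from the chart (the names `COUNTS` and `saturation_split` are illustrative, not part of the source):

```python
# Saturated/total counts as transcribed from the green segments of the chart.
COUNTS = {
    "Reasoning with General Knowledge": (5, 7),
    "Reading Comprehension and Question Answering": (2, 3),
    "Programming and Coding": (3, 9),
    "Multimodal Reasoning": (6, 13),
    "Mathematical Reasoning": (7, 8),
    "LLM": (3, 13),
    "Commonsense and Logical Reasoning": (1, 1),
}


def saturation_split(saturated: int, total: int) -> tuple[float, float]:
    """Return (saturated %, not-saturated %), each rounded to one decimal."""
    sat_pct = round(100 * saturated / total, 1)
    return sat_pct, round(100 - sat_pct, 1)


for category, (saturated, total) in COUNTS.items():
    sat_pct, not_pct = saturation_split(saturated, total)
    print(f"{category}: {sat_pct}% saturated ({saturated}/{total}), "
          f"{not_pct}% not saturated")
```

The red percentage is computed as the remainder to 100, which matches how the chart labels it.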

### Key Observations

* Commonsense and Logical Reasoning is the only category with 100% saturation.

* LLM has the lowest saturation rate, with only 23.1% of benchmarks saturated.

* Mathematical Reasoning shows a high saturation rate of 87.5%.

* Programming and Coding and Multimodal Reasoning have more benchmarks not saturated than saturated.

### Interpretation

The chart provides a performance overview across different reasoning and knowledge areas. The "Saturated" vs. "Not Saturated" distinction likely indicates whether the system met a certain performance threshold or achieved a desired outcome for each benchmark.

The high saturation in Commonsense and Logical Reasoning suggests strong performance in this area. Conversely, the low saturation in LLM indicates a potential weakness or area for improvement. The varying degrees of saturation across the other categories highlight the system's strengths and weaknesses in different domains. The data suggests that the system performs well in areas requiring established logical rules and mathematical principles, but struggles with more complex tasks like programming and coding, and especially LLM tasks.


EXPERT: gemini-2.5-flash-lite-free VERSION 1

RUNTIME: google-free/gemini-2.5-flash-lite

INTEL_VERIFIED

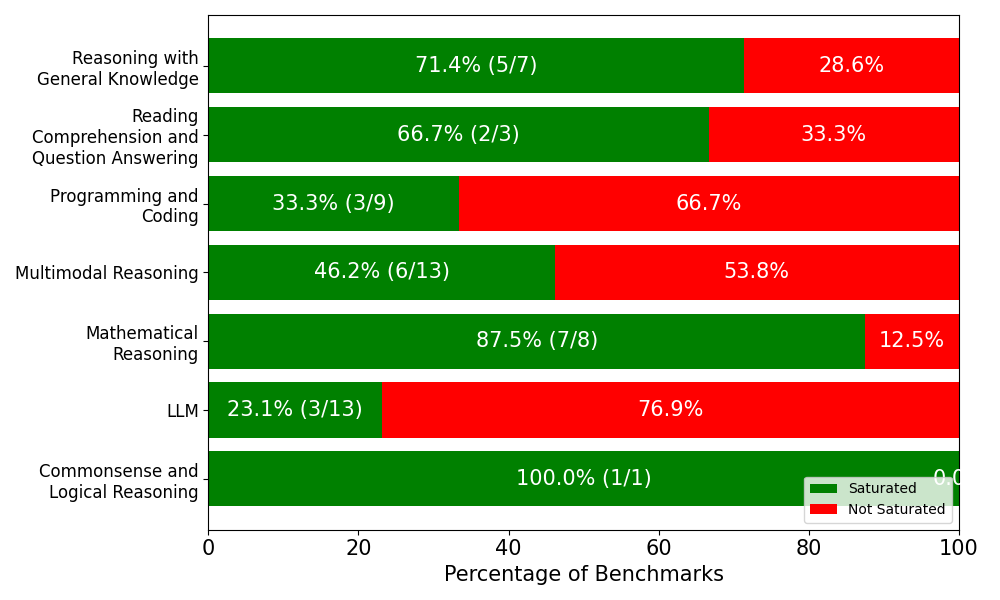

## Bar Chart: Performance Breakdown by Reasoning Category

### Overview

This image displays a horizontal stacked bar chart that visualizes the performance of a system or model across various reasoning categories. Each category is represented by a bar, segmented into two parts: "Saturated" (green) and "Not Saturated" (red). The length of each segment indicates the percentage of benchmarks within that category that fall into either the "Saturated" or "Not Saturated" state. The "Saturated" segment also shows the raw benchmark count in the format (saturated/total); the "Not Saturated" segment shows only a percentage.

### Components/Axes

* **Y-axis Labels**: The y-axis lists the different reasoning categories:

* Reasoning with General Knowledge

* Reading Comprehension and Question Answering

* Programming and Coding

* Multimodal Reasoning

* Mathematical Reasoning

* LLM

* Commonsense and Logical Reasoning

* **X-axis Label**: The x-axis is labeled "Percentage of Benchmarks" and ranges from 0 to 100.

* **Legend**: Located in the bottom-right corner of the chart, the legend indicates:

* **Saturated**: Represented by a green color.

* **Not Saturated**: Represented by a red color.

### Detailed Analysis

The chart presents the following data for each category:

1. **Reasoning with General Knowledge**:

* **Saturated**: 71.4% (5/7) - This segment extends from 0 to approximately 71.4 on the x-axis.

* **Not Saturated**: 28.6% - This segment extends from approximately 71.4 to 100 on the x-axis.

2. **Reading Comprehension and Question Answering**:

* **Saturated**: 66.7% (2/3) - This segment extends from 0 to approximately 66.7 on the x-axis.

* **Not Saturated**: 33.3% - This segment extends from approximately 66.7 to 100 on the x-axis.

3. **Programming and Coding**:

* **Saturated**: 33.3% (3/9) - This segment extends from 0 to approximately 33.3 on the x-axis.

* **Not Saturated**: 66.7% - This segment extends from approximately 33.3 to 100 on the x-axis.

4. **Multimodal Reasoning**:

* **Saturated**: 46.2% (6/13) - This segment extends from 0 to approximately 46.2 on the x-axis.

* **Not Saturated**: 53.8% - This segment extends from approximately 46.2 to 100 on the x-axis.

5. **Mathematical Reasoning**:

* **Saturated**: 87.5% (7/8) - This segment extends from 0 to approximately 87.5 on the x-axis.

* **Not Saturated**: 12.5% - This segment extends from approximately 87.5 to 100 on the x-axis.

6. **LLM**:

* **Saturated**: 23.1% (3/13) - This segment extends from 0 to approximately 23.1 on the x-axis.

* **Not Saturated**: 76.9% - This segment extends from approximately 23.1 to 100 on the x-axis.

7. **Commonsense and Logical Reasoning**:

* **Saturated**: 100.0% (1/1) - This segment extends from 0 to 100 on the x-axis.

* **Not Saturated**: 0.0% - This segment has a length of 0.
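The segment extents described above can be reproduced from the counts alone. The sketch below is a hypothetical helper, not anything shown in the chart: it computes where each bar splits on the 0 to 100 axis and renders a rough text version of the stacked bar.

```python
# (category, saturated, total) as transcribed from the chart labels.
COUNTS = [
    ("Reasoning with General Knowledge", 5, 7),
    ("Reading Comprehension and Question Answering", 2, 3),
    ("Programming and Coding", 3, 9),
    ("Multimodal Reasoning", 6, 13),
    ("Mathematical Reasoning", 7, 8),
    ("LLM", 3, 13),
    ("Commonsense and Logical Reasoning", 1, 1),
]


def segment_extents(saturated: int, total: int):
    """Return ((green start, green end), (red start, red end)) on a 0-100 axis."""
    split = 100 * saturated / total
    return (0.0, split), (split, 100.0)


def stacked_bar(saturated: int, total: int, width: int = 40) -> str:
    """Render one bar: '#' for the saturated share, '.' for the remainder."""
    green = round(width * saturated / total)
    return "#" * green + "." * (width - green)


for name, saturated, total in COUNTS:
    print(f"{stacked_bar(saturated, total)}  {name}")
```

The bar width of 40 characters is an arbitrary choice; any width works, at the cost of coarser or finer rounding of the split point.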

### Key Observations

* **High Saturated Performance**: "Commonsense and Logical Reasoning" shows perfect "Saturated" performance (100.0%). "Mathematical Reasoning" also demonstrates very high "Saturated" performance (87.5%).

* **Low Saturated Performance**: "LLM" exhibits the lowest "Saturated" performance (23.1%), with a significantly larger portion of benchmarks being "Not Saturated". "Programming and Coding" also shows a majority of "Not Saturated" benchmarks (66.7%).

* **Mixed Performance**: "Reasoning with General Knowledge", "Reading Comprehension and Question Answering", and "Multimodal Reasoning" show a more balanced distribution between "Saturated" and "Not Saturated" benchmarks, with "Saturated" being the majority in the first two and "Not Saturated" being the majority in the third.

* **Small Sample Sizes**: Some categories have very small total benchmark counts (e.g., Reading Comprehension and Question Answering with 3 benchmarks, Commonsense and Logical Reasoning with 1 benchmark). This means the percentages might be less statistically robust for these categories.
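One way to make the small-sample caveat concrete is to attach an interval to each proportion. The sketch below uses the Wilson score interval, which is a choice made here for illustration and not something the chart or its source reports. It also assumes each benchmark can be treated as an independent yes/no trial, which is a strong simplification.

```python
import math


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z = 1.96 is roughly 95%)."""
    p = successes / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    # Clamp to [0, 1] to guard against floating-point overshoot.
    return max(0.0, centre - half), min(1.0, centre + half)


# Counts transcribed from the chart: the smallest and one of the largest categories.
for name, saturated, total in [
    ("Commonsense and Logical Reasoning", 1, 1),
    ("Reading Comprehension and Question Answering", 2, 3),
    ("LLM", 3, 13),
]:
    low, high = wilson_interval(saturated, total)
    print(f"{name}: {saturated}/{total}, interval {100 * low:.1f}% to {100 * high:.1f}%")
```

Under these assumptions the interval for a single-benchmark category is very wide, which is the point of the caveat: a 100% figure from one benchmark says little about the category as a whole.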

### Interpretation

This chart suggests a performance evaluation of a system or model across different cognitive or reasoning tasks. The "Saturated" state likely represents successful or desired outcomes, while "Not Saturated" indicates failures or areas where the system did not meet expectations.

The data indicates that the system excels in areas requiring logical deduction and mathematical problem-solving. It performs reasonably well in general knowledge and reading comprehension. However, it struggles with programming tasks (33.3% "Saturated"), sits close to an even split in multimodal reasoning (46.2%), and fares worst on LLM-specific benchmarks, where a large majority (76.9%) are not "Saturated". The perfect score in "Commonsense and Logical Reasoning" is notable, though it is based on a single benchmark, which limits its generalizability.

The varying sample sizes across categories are important to consider. While "Mathematical Reasoning" has a high success rate, it's based on 8 benchmarks. "LLM" has a low success rate across 13 benchmarks, and "Programming and Coding" has a low success rate across 9 benchmarks. These larger sample sizes for weaker performing categories might indicate more systemic issues. The chart effectively highlights the strengths and weaknesses of the evaluated system, pointing towards areas that require further development or investigation.


EXPERT: gemini-3.1-pro-preview VERSION 1

RUNTIME: gemini/gemini-3.1-pro-preview

INTEL_VERIFIED

## Horizontal Stacked Bar Chart: Benchmark Saturation by Category

### Overview

This image is a horizontal stacked bar chart illustrating the percentage of "Saturated" versus "Not Saturated" benchmarks across seven distinct cognitive and technical evaluation categories. The chart uses a green and red color scheme to visually represent the proportion of saturation within each category. The language used throughout the chart is entirely English.

### Components/Axes

**Spatial Grounding & Layout:**

* **Y-axis (Left):** Contains seven categorical labels describing different types of reasoning or tasks. The labels are right-aligned against the axis line.

* **X-axis (Bottom):** Represents the "Percentage of Benchmarks". It is scaled from 0 to 100 with major tick marks and labels at intervals of 20 (0, 20, 40, 60, 80, 100).

* **Main Chart Area (Center):** Contains seven horizontal bars corresponding to the Y-axis categories. Each bar spans the full 100% width of the X-axis, divided into green (left) and red (right) segments. Text is embedded directly inside the colored segments.

* **Legend (Bottom-Right):** Located inside the chart area, just above the 80-100 mark on the X-axis.

* **Green Square:** Labeled "Saturated"

* **Red Square:** Labeled "Not Saturated"

### Detailed Analysis

**Trend Verification & Visual Proportions:**

For every category, the visual length of the green bar (starting from 0 on the left) and the red bar (filling the remainder to 100 on the right) perfectly matches the embedded percentage text.

Below is the reconstructed data table based on the embedded text and visual proportions. The green sections include both a percentage and a fraction (representing the number of saturated benchmarks over the total benchmarks in that category). The red sections only display the remaining percentage.

| Category (Y-Axis Label) | Saturated (Green Bar) | Not Saturated (Red Bar) | Total Benchmarks (Derived) |
| :--- | :--- | :--- | :--- |
| Reasoning with General Knowledge | 71.4% (5/7) | 28.6% | 7 |
| Reading Comprehension and Question Answering | 66.7% (2/3) | 33.3% | 3 |
| Programming and Coding | 33.3% (3/9) | 66.7% | 9 |
| Multimodal Reasoning | 46.2% (6/13) | 53.8% | 13 |
| Mathematical Reasoning | 87.5% (7/8) | 12.5% | 8 |
| LLM | 23.1% (3/13) | 76.9% | 13 |
| Commonsense and Logical Reasoning | 100.0% (1/1) | 0.0%* | 1 |

*\*Note: The "0.0" text in the bottom right red section is partially cut off by the edge of the image, but is clearly inferable based on the 100.0% green section and the visible "0.0".*

### Key Observations

* **Highest Saturation:** "Commonsense and Logical Reasoning" is visually entirely green, indicating 100% saturation. However, the fraction (1/1) shows this is based on a single benchmark. "Mathematical Reasoning" follows closely at 87.5% (7/8).

* **Lowest Saturation:** The "LLM" category has the shortest green bar, showing only 23.1% saturation (3/13). "Programming and Coding" is the second lowest at 33.3% (3/9).

* **Sample Size Variance:** The fractions embedded in the green bars reveal significant variance in the number of benchmarks per category. "Multimodal Reasoning" and "LLM" have the highest number of total benchmarks (13 each), while "Commonsense and Logical Reasoning" has only 1.

* **Total Benchmarks:** By summing the denominators of the fractions, we can determine that this chart represents a total of 54 individual benchmarks across all categories.
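The total can be checked by summing the denominators of the fractions shown in the green segments. A minimal sketch, assuming each benchmark is counted in exactly one category (the chart itself does not say whether categories overlap):

```python
# Fractions exactly as printed in the green segments, top to bottom.
FRACTIONS = ["5/7", "2/3", "3/9", "6/13", "7/8", "3/13", "1/1"]

pairs = [tuple(int(part) for part in fraction.split("/")) for fraction in FRACTIONS]

total_saturated = sum(saturated for saturated, _ in pairs)
total_benchmarks = sum(total for _, total in pairs)

print(total_saturated, total_benchmarks)  # prints: 27 54
```

Summing the numerators the same way gives 27 saturated benchmarks out of the 54.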

### Interpretation

**What the data suggests:**

In the context of AI and machine learning evaluation, a "saturated" benchmark typically refers to a test where current models have achieved near-perfect or human-level performance, rendering the benchmark obsolete for measuring future progress.

This chart demonstrates that traditional, well-defined domains like "Mathematical Reasoning" (87.5%) and "Reasoning with General Knowledge" (71.4%) are highly saturated. The AI community has largely "solved" the specific tests currently used in these categories.

Conversely, categories like "LLM" (23.1%), "Programming and Coding" (33.3%), and "Multimodal Reasoning" (46.2%) are largely *not* saturated. This indicates that these are the current frontiers of AI research; the existing benchmarks in these categories are still challenging enough to effectively measure and differentiate the capabilities of newer models.

**Reading between the lines (Peircean Analysis):**

The most critical piece of information in this chart is not the percentages, but the *fractions*. Presenting "Commonsense and Logical Reasoning" as 100% saturated is statistically misleading without the context of the (1/1) fraction. It doesn't mean AI has mastered all commonsense; it means AI has mastered the *single* benchmark this specific study chose to include.

Furthermore, the two categories with the most benchmarks (LLM and Multimodal Reasoning, with 13 each) are both majority unsaturated, at 23.1% and 46.2% saturated respectively. LLM is the least saturated category overall, and Programming and Coding (33.3%, 9 benchmarks) falls between the two. This suggests a possible correlation: as a field becomes more complex and less saturated, researchers create a higher volume of diverse benchmarks to try to capture the nuances of model performance. The chart effectively highlights the urgent need for the AI community to develop new, harder benchmarks for Mathematics and General Knowledge, as the current ones are no longer useful discriminators of model capability.
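A quick way to see how benchmark count lines up with saturation is to sort the categories by saturation rate and print the counts alongside. This is only a sketch over the seven transcribed data points; with so few categories it can suggest a pattern but cannot establish one.

```python
# Saturated/total counts as transcribed from the chart.
COUNTS = {
    "Reasoning with General Knowledge": (5, 7),
    "Reading Comprehension and Question Answering": (2, 3),
    "Programming and Coding": (3, 9),
    "Multimodal Reasoning": (6, 13),
    "Mathematical Reasoning": (7, 8),
    "LLM": (3, 13),
    "Commonsense and Logical Reasoning": (1, 1),
}

# Sort ascending by saturation rate (saturated / total).
ranked = sorted(COUNTS.items(), key=lambda item: item[1][0] / item[1][1])

for name, (saturated, total) in ranked:
    print(f"{100 * saturated / total:5.1f}%  n={total:2d}  {name}")
```

The three least saturated categories are also the three with the most benchmarks (13, 9, and 13), while the single-benchmark category sits at the top.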


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Horizontal Bar Chart: LLM Benchmark Saturation

### Overview

This is a horizontal bar chart visualizing the percentage of benchmarks saturated by a Large Language Model (LLM) across various reasoning categories. Each bar represents a category, with the green portion indicating the percentage of saturated benchmarks and the red portion indicating the percentage of non-saturated benchmarks. The number of saturated benchmarks out of the total number of benchmarks for each category is also displayed.

### Components/Axes

* **Y-axis (Vertical):** Represents the different reasoning categories:

* Reasoning with General Knowledge

* Reading Comprehension and Question Answering

* Programming and Coding

* Multimodal Reasoning

* Mathematical Reasoning

* LLM

* Commonsense and Logical Reasoning

* **X-axis (Horizontal):** Represents the "Percentage of Benchmarks", ranging from 0 to 100.

* **Legend (Bottom-Right):**

* Green: "Saturated"

* Red: "Not Saturated"

* **Data Labels:** Each bar includes a percentage value (e.g., 71.4%, 28.6%) and a fraction representing (Saturated Benchmarks / Total Benchmarks) (e.g., (5/7), (2/3)).

### Detailed Analysis

Here's a breakdown of each category's saturation levels:

1. **Reasoning with General Knowledge:** 71.4% Saturated (5/7). The bar is predominantly green, with a smaller red portion.

2. **Reading Comprehension and Question Answering:** 66.7% Saturated (2/3). Approximately two-thirds of the bar is green.

3. **Programming and Coding:** 33.3% Saturated (3/9). The bar is predominantly red, with a smaller green portion.

4. **Multimodal Reasoning:** 46.2% Saturated (6/13). The bar is roughly half green and half red.

5. **Mathematical Reasoning:** 87.5% Saturated (7/8). The bar is overwhelmingly green, with a very small red portion.

6. **LLM:** 23.1% Saturated (3/13). The bar is predominantly red, with a small green portion.

7. **Commonsense and Logical Reasoning:** 100.0% Saturated (1/1). The bar is entirely green.

### Key Observations

* **Highest Saturation:** Commonsense and Logical Reasoning shows 100% saturation, indicating the LLM performs very well on this type of benchmark.

* **Lowest Saturation:** LLM category shows the lowest saturation at 23.1%.

* **Significant Variation:** There's a wide range in saturation levels across different reasoning categories, from 23.1% to 100%.

* **Mathematical Reasoning is High:** Mathematical Reasoning is also highly saturated at 87.5%.

* **Programming and Coding is Low:** Programming and Coding is relatively low at 33.3%.

### Interpretation

The chart demonstrates the varying capabilities of the LLM across different reasoning tasks. The LLM excels at Commonsense and Logical Reasoning and Mathematical Reasoning, achieving near-complete saturation of benchmarks in these areas. However, it struggles with Programming and Coding and the LLM category itself, indicating areas where further development is needed. The saturation percentages provide a quantitative measure of the LLM's performance on each type of benchmark, and the (Saturated/Total) ratios offer insight into the sample size used for each category. The differences in saturation levels suggest that the LLM's architecture or training data may be better suited for certain types of reasoning than others. The relatively low saturation in Programming and Coding could indicate a need for more specialized training data or architectural modifications to improve performance in this domain. The low saturation in the LLM category itself is curious and may indicate the benchmarks used to evaluate the LLM are particularly challenging or are designed to expose weaknesses in the model's core capabilities.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Horizontal Stacked Bar Chart: Benchmark Saturation by Category

### Overview

This image is a horizontal stacked bar chart that visualizes the percentage of benchmarks that are "Saturated" versus "Not Saturated" across seven distinct capability categories for an AI model or system. The chart uses a two-color scheme (green for Saturated, red for Not Saturated) to show the proportional split within each category. The overall purpose is to illustrate performance or evaluation results, highlighting areas of strength and weakness.

### Components/Axes

* **Chart Type:** Horizontal Stacked Bar Chart.

* **Y-Axis (Vertical):** Lists seven capability categories. From top to bottom:

1. Reasoning with General Knowledge

2. Reading Comprehension and Question Answering

3. Programming and Coding

4. Multimodal Reasoning

5. Mathematical Reasoning

6. LLM

7. Commonsense and Logical Reasoning

* **X-Axis (Horizontal):** Labeled "Percentage of Benchmarks". The scale runs from 0 to 100, with major tick marks at 0, 20, 40, 60, 80, and 100.

* **Legend:** Located in the bottom-right corner of the chart area. It defines the two data series:

* **Green Square:** "Saturated"

* **Red Square:** "Not Saturated"

* **Data Labels:** Each bar segment contains a percentage value. The green "Saturated" segments also include a fraction in parentheses (e.g., "5/7"), indicating the number of saturated benchmarks out of the total benchmarks in that category.

### Detailed Analysis

The chart presents the following data for each category, listed from top to bottom:

1. **Reasoning with General Knowledge**

* **Saturated (Green):** 71.4% (5/7). The green bar extends from 0% to approximately 71.4% on the x-axis.

* **Not Saturated (Red):** 28.6%. The red bar occupies the remainder, from ~71.4% to 100%.

2. **Reading Comprehension and Question Answering**

* **Saturated (Green):** 66.7% (2/3). The green bar extends from 0% to approximately 66.7%.

* **Not Saturated (Red):** 33.3%. The red bar occupies the remainder.

3. **Programming and Coding**

* **Saturated (Green):** 33.3% (3/9). The green bar extends from 0% to approximately 33.3%.

* **Not Saturated (Red):** 66.7%. The red bar is the dominant segment, occupying the majority of the bar.

4. **Multimodal Reasoning**

* **Saturated (Green):** 46.2% (6/13). The green bar extends from 0% to approximately 46.2%.

* **Not Saturated (Red):** 53.8%. The red bar is slightly larger than the green segment.

5. **Mathematical Reasoning**

* **Saturated (Green):** 87.5% (7/8). The green bar is very long, extending from 0% to 87.5%.

* **Not Saturated (Red):** 12.5%. The red segment is a small portion at the end of the bar.

6. **LLM**

* **Saturated (Green):** 23.1% (3/13). The green bar is short, extending from 0% to approximately 23.1%.

* **Not Saturated (Red):** 76.9%. The red bar is the dominant segment, occupying most of the bar.

7. **Commonsense and Logical Reasoning**

* **Saturated (Green):** 100.0% (1/1). The entire bar is green, extending from 0% to 100%.

* **Not Saturated (Red):** 0.0%. No red segment is visible.

### Key Observations

* **Highest Saturation:** "Commonsense and Logical Reasoning" shows 100% saturation, though it is based on only one benchmark (1/1).

* **Lowest Saturation:** "LLM" has the lowest saturation rate at 23.1%.

* **Strong Performance:** "Mathematical Reasoning" (87.5%) and "Reasoning with General Knowledge" (71.4%) also show high saturation rates.

* **Areas for Improvement:** "Programming and Coding" (33.3%) and "LLM" (23.1%) have the lowest saturation rates, indicating these are the most challenging categories where most benchmarks are not yet saturated.

* **Benchmark Count Variation:** The total number of benchmarks per category varies significantly, from 1 ("Commonsense and Logical Reasoning") to 13 ("Multimodal Reasoning" and "LLM"). This affects the statistical weight of each percentage.
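The effect of uneven benchmark counts shows up when the per-category percentages are aggregated. The sketch below compares a pooled rate (every benchmark weighted equally) with an unweighted mean of the category rates (every category weighted equally). Both aggregates are illustrations computed from the transcribed counts; neither appears in the chart.

```python
from statistics import mean

# (saturated, total) per category, as transcribed from the chart.
COUNTS = [(5, 7), (2, 3), (3, 9), (6, 13), (7, 8), (3, 13), (1, 1)]

# Pooled: all benchmarks in one pot, so large categories dominate.
pooled = sum(s for s, _ in COUNTS) / sum(t for _, t in COUNTS)

# Unweighted: average of the per-category rates, so a 1-benchmark
# category counts as much as a 13-benchmark one.
unweighted = mean(s / t for s, t in COUNTS)

print(f"pooled: {100 * pooled:.1f}%  unweighted mean: {100 * unweighted:.1f}%")
```

The unweighted mean comes out noticeably higher than the pooled rate, largely because the single-benchmark category at 100% pulls it up.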

### Interpretation

This chart provides a diagnostic snapshot of an AI system's capabilities relative to established benchmarks. "Saturated" likely means the system has reached a performance ceiling or solved the benchmark tasks.

The data suggests the system excels in structured, logical domains like **Commonsense/Logical Reasoning** and **Mathematical Reasoning**, where it has nearly or completely mastered the available tests. It also performs well in **General Knowledge Reasoning**.

Conversely, the system shows significant room for growth in **Programming/Coding** and general **LLM** benchmarks, where two-thirds or more of the benchmarks remain unsaturated (66.7% and 76.9%, respectively). The **Multimodal Reasoning** category sits in the middle, with a near-even split.

The stark contrast between categories highlights the uneven nature of AI capability development. The system's strength in formal logic and math does not directly translate to proficiency in code generation or broad language modeling tasks as measured by these specific benchmarks. The very low benchmark count for "Commonsense and Logical Reasoning" (1/1) is a critical caveat; its 100% score, while positive, is less statistically robust than the high scores in categories with more benchmarks (e.g., Mathematical Reasoning at 7/8). This chart would be essential for guiding future research and development priorities.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

# Technical Document Extraction: Benchmark Performance Analysis

## Chart Overview

Horizontal bar chart comparing performance metrics across multiple reasoning domains. Color-coded representation of saturated vs. non-saturated benchmarks.

### Legend

- **Green**: Saturated (✓)

- **Red**: Not Saturated (✗)

- **Placement**: Bottom-right quadrant

### Axis Configuration

- **X-axis**: Percentage of Benchmarks (0-100%)

- Increment markers: 0, 20, 40, 60, 80, 100

- **Y-axis**: Categorical domains (7 total)

- Ordered top-to-bottom:

1. Reasoning with General Knowledge

2. Reading Comprehension and Question Answering

3. Programming and Coding

4. Multimodal Reasoning

5. Mathematical Reasoning

6. LLM

7. Commonsense and Logical Reasoning

## Data Analysis

### Key Trends

1. **Mathematical Reasoning** shows the highest saturation among categories with more than one benchmark (87.5%)

2. **LLM** demonstrates lowest saturation (23.1%)

3. **Commonsense and Logical Reasoning** achieves perfect saturation (100%)

### Category Breakdown

| Category | Saturated (%) | Saturated (Count) | Not Saturated (%) | Not Saturated (Count) |
|----------|---------------|-------------------|-------------------|-----------------------|
| Reasoning with General Knowledge | 71.4 | 5/7 | 28.6 | - |
| Reading Comprehension and Question Answering | 66.7 | 2/3 | 33.3 | - |
| Programming and Coding | 33.3 | 3/9 | 66.7 | - |
| Multimodal Reasoning | 46.2 | 6/13 | 53.8 | - |
| Mathematical Reasoning | 87.5 | 7/8 | 12.5 | - |
| LLM | 23.1 | 3/13 | 76.9 | - |
| Commonsense and Logical Reasoning | 100.0 | 1/1 | 0.0 | - |
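The "Not Saturated (Count)" column is empty because the chart prints no fraction in the red segments. The counts can still be derived from the saturated fractions as total minus saturated. A minimal sketch, with `not_saturated_count` as a hypothetical helper name:

```python
# (category, saturated fraction) as transcribed from the chart labels.
ROWS = [
    ("Reasoning with General Knowledge", "5/7"),
    ("Reading Comprehension and Question Answering", "2/3"),
    ("Programming and Coding", "3/9"),
    ("Multimodal Reasoning", "6/13"),
    ("Mathematical Reasoning", "7/8"),
    ("LLM", "3/13"),
    ("Commonsense and Logical Reasoning", "1/1"),
]


def not_saturated_count(fraction: str) -> int:
    """Derive the not-saturated count from a 'saturated/total' string."""
    saturated, total = (int(part) for part in fraction.split("/"))
    return total - saturated


for category, fraction in ROWS:
    total = fraction.split("/")[1]
    print(f"{category}: {not_saturated_count(fraction)}/{total} not saturated")
```

These values are derived rather than read off the image, so they are only as reliable as the transcribed fractions.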

## Spatial Grounding

- **Legend Position**: [x: 85-100, y: 0.9] (bottom-right)

- **Bar Orientation**: Horizontal (left-to-right growth)

- **Color Consistency**:

- Green bars always represent saturated benchmarks

- Red bars always represent non-saturated benchmarks

## Trend Verification

1. **Mathematical Reasoning**: Green bar dominates (87.5%) vs red (12.5%)

2. **LLM**: Red bar significantly longer (76.9%) vs green (23.1%)

3. **Commonsense**: Perfect green saturation (100%)

## Component Isolation

1. **Header**: Chart title (implied by axis labels)

2. **Main Chart**:

- 7 horizontal bars with dual-color segmentation

- Percentage markers at 20% intervals

3. **Footer**:

- Legend with color coding

- X-axis percentage scale

## Critical Observations

1. **Highest Performance Among Multi-Benchmark Categories**: Mathematical Reasoning (87.5% saturation)

2. **Lowest Performance**: LLM (23.1% saturation)

3. **Perfect Score**: Commonsense and Logical Reasoning (100% saturation)

4. **Balanced Performance**: Reading Comprehension and Question Answering (66.7% saturation)

## Language Note

All textual content is in English. No non-English elements detected.
