TECHNICAL ASSET FINGERPRINT

53b24cec96231476088188f1

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

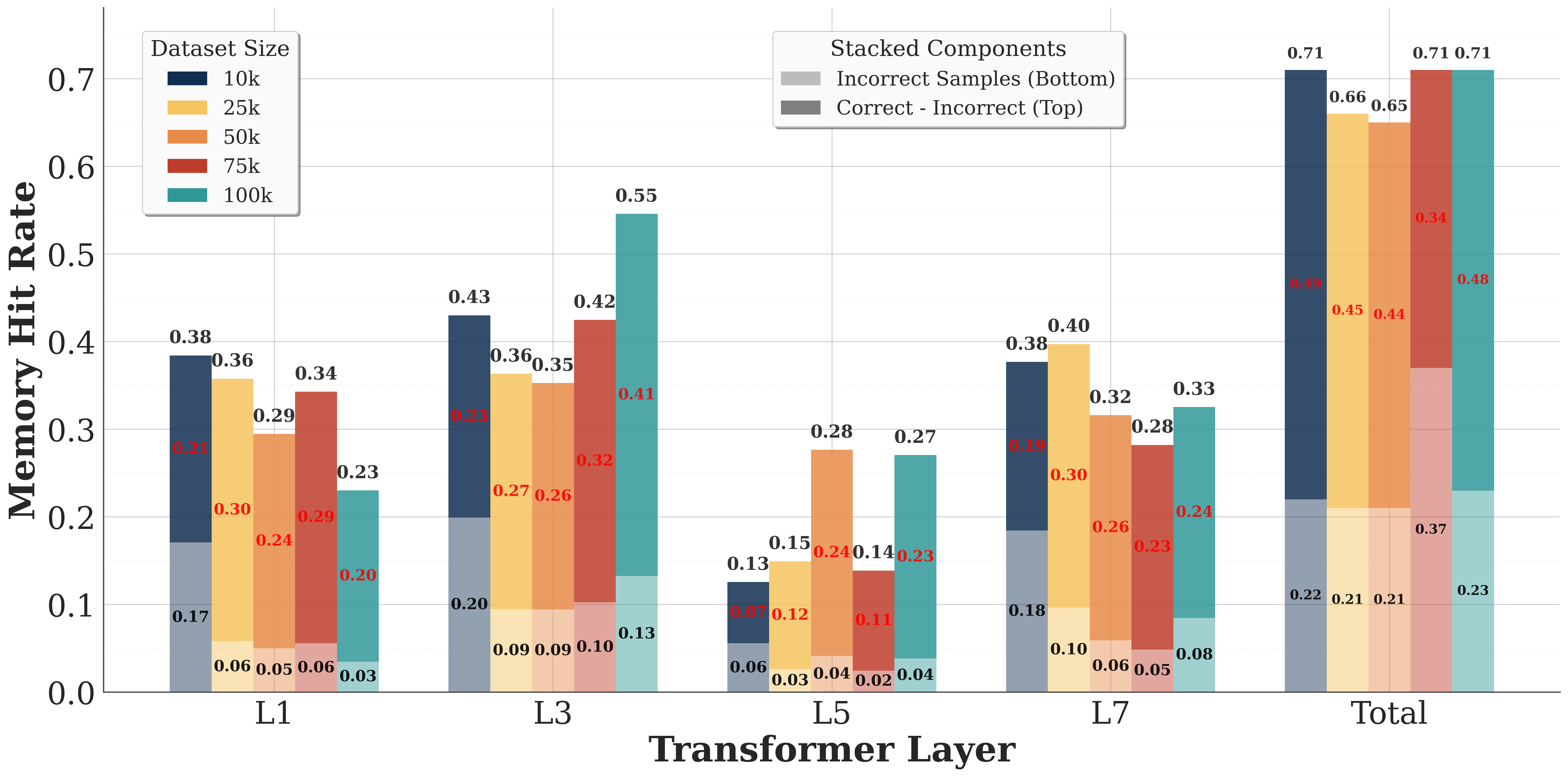

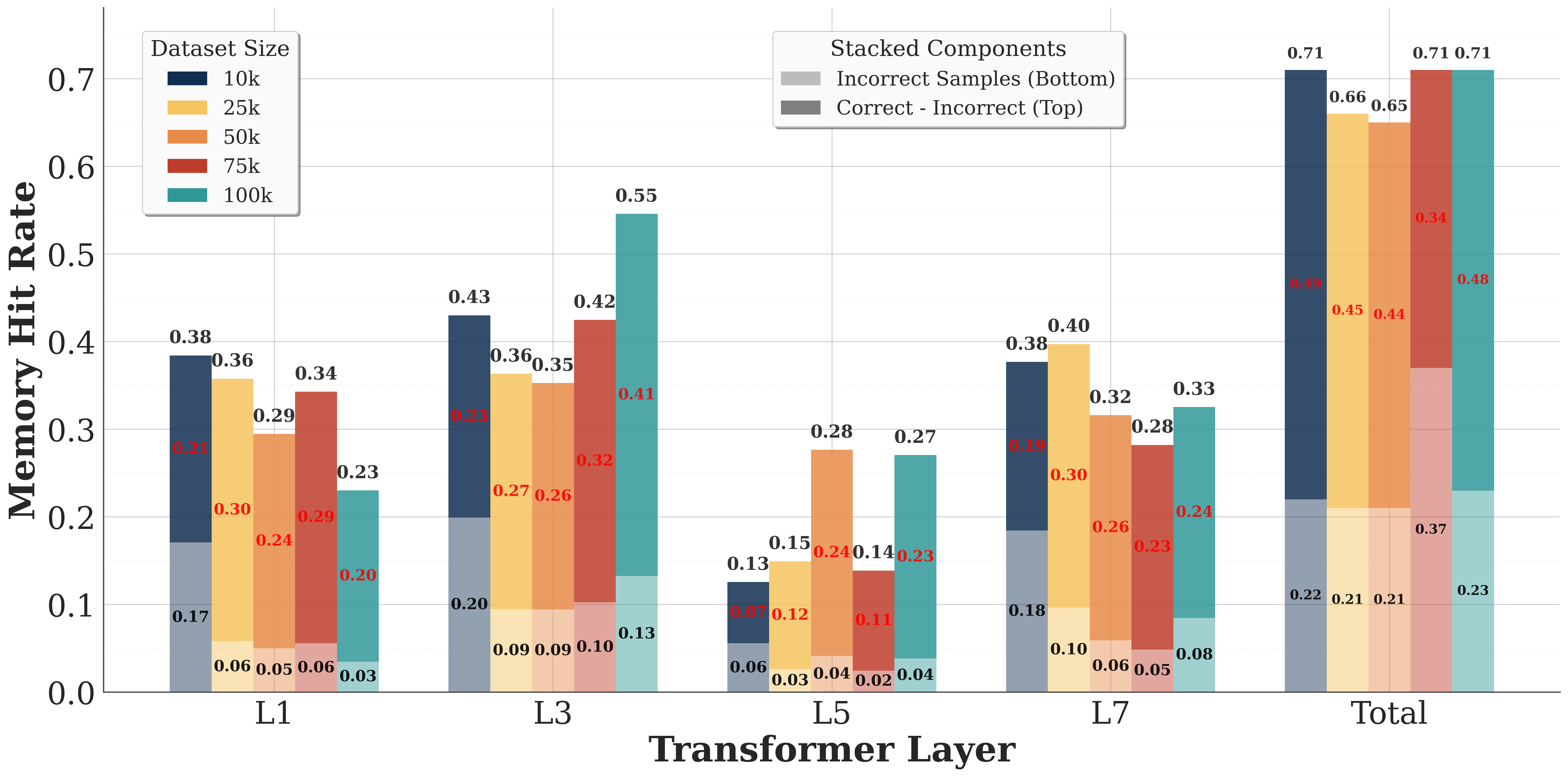

## Bar Chart: Memory Hit Rate vs. Transformer Layer

### Overview

The image is a bar chart comparing the memory hit rate across different transformer layers (L1, L3, L5, L7, and Total) for various dataset sizes (10k, 25k, 50k, 75k, and 100k). The chart also breaks down the memory hit rate into "Incorrect Samples (Bottom)" and "Correct - Incorrect (Top)" components.

### Components/Axes

* **X-axis:** Transformer Layer (L1, L3, L5, L7, Total)

* **Y-axis:** Memory Hit Rate (ranging from 0.0 to 0.7)

* **Legend (Dataset Size):** Located at the top-left of the chart.

    * Dark Blue: 10k

    * Yellow: 25k

    * Orange: 50k

    * Red: 75k

    * Teal: 100k

* **Legend (Stacked Components):** Located at the top-center of the chart.

    * Light Gray: Incorrect Samples (Bottom)

    * Dark Gray: Correct - Incorrect (Top)

### Detailed Analysis

**L1 Layer:**

* 10k (Dark Blue): 0.38. Stacked components: Incorrect Samples (Bottom) = 0.17, Correct - Incorrect (Top) = 0.21

* 25k (Yellow): 0.36. Stacked components: Incorrect Samples (Bottom) = 0.06, Correct - Incorrect (Top) = 0.30

* 50k (Orange): 0.29. Stacked components: Incorrect Samples (Bottom) = 0.05, Correct - Incorrect (Top) = 0.24

* 75k (Red): 0.34. Stacked components: Incorrect Samples (Bottom) = 0.06, Correct - Incorrect (Top) = 0.29

* 100k (Teal): 0.23. Stacked components: Incorrect Samples (Bottom) = 0.03, Correct - Incorrect (Top) = 0.20

**L3 Layer:**

* 10k (Dark Blue): 0.43. Stacked components: Incorrect Samples (Bottom) = 0.20, Correct - Incorrect (Top) = 0.23

* 25k (Yellow): 0.36. Stacked components: Incorrect Samples (Bottom) = 0.09, Correct - Incorrect (Top) = 0.27

* 50k (Orange): 0.35. Stacked components: Incorrect Samples (Bottom) = 0.09, Correct - Incorrect (Top) = 0.26

* 75k (Red): 0.42. Stacked components: Incorrect Samples (Bottom) = 0.10, Correct - Incorrect (Top) = 0.32

* 100k (Teal): 0.55. Stacked components: Incorrect Samples (Bottom) = 0.13, Correct - Incorrect (Top) = 0.41

**L5 Layer:**

* 10k (Dark Blue): 0.13. Stacked components: Incorrect Samples (Bottom) = 0.06, Correct - Incorrect (Top) = 0.07

* 25k (Yellow): 0.15. Stacked components: Incorrect Samples (Bottom) = 0.03, Correct - Incorrect (Top) = 0.12

* 50k (Orange): 0.28. Stacked components: Incorrect Samples (Bottom) = 0.04, Correct - Incorrect (Top) = 0.24

* 75k (Red): 0.27. Stacked components: Incorrect Samples (Bottom) = 0.02, Correct - Incorrect (Top) = 0.25

* 100k (Teal): 0.27. Stacked components: Incorrect Samples (Bottom) = 0.04, Correct - Incorrect (Top) = 0.23

**L7 Layer:**

* 10k (Dark Blue): 0.38. Stacked components: Incorrect Samples (Bottom) = 0.18, Correct - Incorrect (Top) = 0.19

* 25k (Yellow): 0.40. Stacked components: Incorrect Samples (Bottom) = 0.10, Correct - Incorrect (Top) = 0.30

* 50k (Orange): 0.32. Stacked components: Incorrect Samples (Bottom) = 0.06, Correct - Incorrect (Top) = 0.26

* 75k (Red): 0.28. Stacked components: Incorrect Samples (Bottom) = 0.05, Correct - Incorrect (Top) = 0.23

* 100k (Teal): 0.33. Stacked components: Incorrect Samples (Bottom) = 0.08, Correct - Incorrect (Top) = 0.24

**Total:**

* 10k (Dark Blue): 0.71. Stacked components: Incorrect Samples (Bottom) = 0.22, Correct - Incorrect (Top) = 0.49

* 25k (Yellow): 0.66. Stacked components: Incorrect Samples (Bottom) = 0.21, Correct - Incorrect (Top) = 0.45

* 50k (Orange): 0.65. Stacked components: Incorrect Samples (Bottom) = 0.21, Correct - Incorrect (Top) = 0.44

* 75k (Red): 0.71. Stacked components: Incorrect Samples (Bottom) = 0.37, Correct - Incorrect (Top) = 0.34

* 100k (Teal): 0.71. Stacked components: Incorrect Samples (Bottom) = 0.23, Correct - Incorrect (Top) = 0.48
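Because each bar is described as a stack of two segments, the two segment values should add up to the bar total, allowing for two-decimal rounding of the printed labels. The sketch below checks that for the values listed above; the names `READINGS`, `TOLERANCE`, and `segment_gaps` are illustrative, and the tolerance of 0.015 is an assumption about rounding, not something stated on the chart.

```python
# Values transcribed from the listing above, in dataset-size order.
SIZES = ["10k", "25k", "50k", "75k", "100k"]

# Each entry is (total, bottom, top) for one bar.
READINGS = {
    "L1": [(0.38, 0.17, 0.21), (0.36, 0.06, 0.30), (0.29, 0.05, 0.24),
           (0.34, 0.06, 0.29), (0.23, 0.03, 0.20)],
    "L3": [(0.43, 0.20, 0.23), (0.36, 0.09, 0.27), (0.35, 0.09, 0.26),
           (0.42, 0.10, 0.32), (0.55, 0.13, 0.41)],
    "L5": [(0.13, 0.06, 0.07), (0.15, 0.03, 0.12), (0.28, 0.04, 0.24),
           (0.27, 0.02, 0.25), (0.27, 0.04, 0.23)],
    "L7": [(0.38, 0.18, 0.19), (0.40, 0.10, 0.30), (0.32, 0.06, 0.26),
           (0.28, 0.05, 0.23), (0.33, 0.08, 0.24)],
    "Total": [(0.71, 0.22, 0.49), (0.66, 0.21, 0.45), (0.65, 0.21, 0.44),
              (0.71, 0.37, 0.34), (0.71, 0.23, 0.48)],
}

# Assumed allowance for labels rounded to two decimals.
TOLERANCE = 0.015


def segment_gaps(readings, sizes):
    """Return {(group, size): |bottom + top - total|} for every bar."""
    gaps = {}
    for group, bars in readings.items():
        for size, (total, bottom, top) in zip(sizes, bars):
            gaps[(group, size)] = abs(bottom + top - total)
    return gaps


gaps = segment_gaps(READINGS, SIZES)
worst = max(gaps.values())
inconsistent = [bar for bar, gap in gaps.items() if gap > TOLERANCE]
print(f"largest gap: {worst:.2f}; bars outside tolerance: {inconsistent}")
```

With these readings every bar falls inside the assumed tolerance, and the largest gap is about 0.01, which is what two-decimal rounding alone would produce.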

### Key Observations

* The "Total" group has the highest memory hit rate for every dataset size (0.65 to 0.71).

* The 100k dataset has the highest hit rate in L3 (0.55) and ties for the highest in Total (0.71), but it has the lowest hit rate in L1 (0.23).

* The L5 layer has the lowest memory hit rate for every dataset size except 100k, where L1 is lower (0.23 vs. 0.27).

* The "Correct - Incorrect (Top)" component contributes more to the overall memory hit rate than the "Incorrect Samples (Bottom)" component in every bar except Total at 75k (0.34 vs. 0.37).
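Per-layer claims of this kind can be checked mechanically against the bar totals in the Detailed Analysis. The following is a minimal sketch under that assumption; `TOTALS`, `LAYERS`, and `extreme_layers` are hypothetical names chosen for illustration.

```python
# Bar totals transcribed from the Detailed Analysis listing.
SIZES = ["10k", "25k", "50k", "75k", "100k"]
LAYERS = ["L1", "L3", "L5", "L7"]  # individual layers, excluding "Total"

TOTALS = {
    "L1": dict(zip(SIZES, [0.38, 0.36, 0.29, 0.34, 0.23])),
    "L3": dict(zip(SIZES, [0.43, 0.36, 0.35, 0.42, 0.55])),
    "L5": dict(zip(SIZES, [0.13, 0.15, 0.28, 0.27, 0.27])),
    "L7": dict(zip(SIZES, [0.38, 0.40, 0.32, 0.28, 0.33])),
    "Total": dict(zip(SIZES, [0.71, 0.66, 0.65, 0.71, 0.71])),
}


def extreme_layers(totals, layers, sizes):
    """Map each dataset size to its (lowest, highest) individual layer."""
    result = {}
    for size in sizes:
        lowest = min(layers, key=lambda layer: totals[layer][size])
        highest = max(layers, key=lambda layer: totals[layer][size])
        result[size] = (lowest, highest)
    return result


extremes = extreme_layers(TOTALS, LAYERS, SIZES)
for size, (lowest, highest) in extremes.items():
    print(f"{size:>4}: lowest={lowest} highest={highest}")

# True when the "Total" bar is taller than every individual layer's bar.
total_always_highest = all(
    TOTALS["Total"][size] > max(TOTALS[layer][size] for layer in LAYERS)
    for size in SIZES
)
print("Total highest for every size:", total_always_highest)
```

Comparing the printed layer names with the bullets above shows which statements hold for every dataset size and which hold only for some.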

### Interpretation

The chart suggests that the memory hit rate varies considerably with both the transformer layer and the dataset size. "Total" likely represents an aggregate over the individual layers (L1, L3, L5, L7) rather than a layer of its own, which would explain why its hit rate is higher than that of any single layer. The lower hit rate in L5 could indicate a bottleneck or a less efficient memory access pattern in that layer. The high hit rate for the 100k dataset in L3 might be due to better generalization or more effective caching with larger datasets, although the same dataset has the lowest hit rate in L1, so the effect is not uniform across layers. The stacked components distinguish hits on incorrect samples from the difference between hits on correct and incorrect samples. This distinction can be valuable for optimizing memory access patterns and improving the overall performance of the transformer model.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Stacked Bar Chart: Memory Hit Rate vs. Transformer Layer & Dataset Size

### Overview

This is a stacked bar chart visualizing the Memory Hit Rate across different Transformer Layers (L1, L3, L5, L7, and Total) for varying Dataset Sizes (10k, 25k, 50k, 75k, and 100k). The bars are stacked to represent the contribution of "Correct - Incorrect" samples (top portion, grey/red) and "Incorrect Samples" (bottom portion, blue/green). The Y-axis represents the Memory Hit Rate, ranging from 0.0 to 0.7, while the X-axis represents the Transformer Layer.

### Components/Axes

* **X-axis:** Transformer Layer (L1, L3, L5, L7, Total)

* **Y-axis:** Memory Hit Rate (0.0 to 0.7)

* **Legend (Top-Left):** Dataset Size

    * 10k (Dark Grey)

    * 25k (Light Grey)

    * 50k (Orange)

    * 75k (Brown)

    * 100k (Green)

* **Stacked Components Legend (Top-Center):**

    * Incorrect Samples (Bottom - Blue/Green shades)

    * Correct - Incorrect (Top - Grey/Red shades)

### Detailed Analysis

The chart presents a series of stacked bars, one for each Transformer Layer and each Dataset Size combination. The height of each bar represents the total Memory Hit Rate. The stacked segments show the proportion of the hit rate attributable to correct samples minus incorrect samples (top) and incorrect samples alone (bottom).

**L1 Layer:**

* 10k: Hit Rate ≈ 0.06 (Incorrect), 0.32 (Correct-Incorrect) - Total ≈ 0.38

* 25k: Hit Rate ≈ 0.06 (Incorrect), 0.29 (Correct-Incorrect) - Total ≈ 0.35

* 50k: Hit Rate ≈ 0.03 (Incorrect), 0.24 (Correct-Incorrect) - Total ≈ 0.27

* 75k: Hit Rate ≈ 0.05 (Incorrect), 0.20 (Correct-Incorrect) - Total ≈ 0.25

* 100k: Hit Rate ≈ 0.06 (Incorrect), 0.17 (Correct-Incorrect) - Total ≈ 0.23

**L3 Layer:**

* 10k: Hit Rate ≈ 0.09 (Incorrect), 0.34 (Correct-Incorrect) - Total ≈ 0.43

* 25k: Hit Rate ≈ 0.09 (Incorrect), 0.35 (Correct-Incorrect) - Total ≈ 0.44

* 50k: Hit Rate ≈ 0.09 (Incorrect), 0.27 (Correct-Incorrect) - Total ≈ 0.36

* 75k: Hit Rate ≈ 0.10 (Incorrect), 0.26 (Correct-Incorrect) - Total ≈ 0.36

* 100k: Hit Rate ≈ 0.13 (Incorrect), 0.20 (Correct-Incorrect) - Total ≈ 0.33

**L5 Layer:**

* 10k: Hit Rate ≈ 0.06 (Incorrect), 0.28 (Correct-Incorrect) - Total ≈ 0.34

* 25k: Hit Rate ≈ 0.04 (Incorrect), 0.27 (Correct-Incorrect) - Total ≈ 0.31

* 50k: Hit Rate ≈ 0.06 (Incorrect), 0.15 (Correct-Incorrect) - Total ≈ 0.21

* 75k: Hit Rate ≈ 0.04 (Incorrect), 0.14 (Correct-Incorrect) - Total ≈ 0.18

* 100k: Hit Rate ≈ 0.04 (Incorrect), 0.11 (Correct-Incorrect) - Total ≈ 0.15

**L7 Layer:**

* 10k: Hit Rate ≈ 0.10 (Incorrect), 0.38 (Correct-Incorrect) - Total ≈ 0.48

* 25k: Hit Rate ≈ 0.08 (Incorrect), 0.32 (Correct-Incorrect) - Total ≈ 0.40

* 50k: Hit Rate ≈ 0.06 (Incorrect), 0.26 (Correct-Incorrect) - Total ≈ 0.32

* 75k: Hit Rate ≈ 0.05 (Incorrect), 0.23 (Correct-Incorrect) - Total ≈ 0.28

* 100k: Hit Rate ≈ 0.06 (Incorrect), 0.21 (Correct-Incorrect) - Total ≈ 0.27

**Total:**

* 10k: Hit Rate ≈ 0.22 (Incorrect), 0.52 (Correct-Incorrect) - Total ≈ 0.74 (appears to be 0.71 on the chart)

* 25k: Hit Rate ≈ 0.21 (Incorrect), 0.45 (Correct-Incorrect) - Total ≈ 0.66 (appears to be 0.71 on the chart)

* 50k: Hit Rate ≈ 0.21 (Incorrect), 0.23 (Correct-Incorrect) - Total ≈ 0.44

* 75k: Hit Rate ≈ 0.21 (Incorrect), 0.17 (Correct-Incorrect) - Total ≈ 0.38

* 100k: Hit Rate ≈ 0.23 (Incorrect), 0.24 (Correct-Incorrect) - Total ≈ 0.47 (appears to be 0.48 on the chart)

### Key Observations

* The Memory Hit Rate generally decreases as dataset size grows in every individual layer; for example, L1 falls from 0.38 (10k) to 0.23 (100k).

* The "Correct - Incorrect" component contributes more to the overall Hit Rate than the "Incorrect Samples" component in every bar except Total at 75k (0.17 vs. 0.21).

* The Total Hit Rate is highest for the 10k and 25k datasets, peaking around 0.71, and is lower for the larger datasets (0.38 to 0.47).

* L5 shows the lowest hit rate among the individual layers for every dataset size, while L7 is comparatively high, reaching 0.48 at 10k.

* There appears to be a discrepancy between the Total Hit Rate values and the chart's labels (0.71 vs. 0.74, 0.71 vs. 0.66, 0.48 vs. 0.47).
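The discrepancies noted in the last bullet can be made explicit by summing the two estimated segments for each affected "Total" bar and subtracting the label value quoted in the Detailed Analysis. This is a small arithmetic sketch; `TOTAL_BARS` and `label_gaps` are illustrative names, and the figures are the approximate readings given above rather than ground truth.

```python
# (incorrect, correct_minus_incorrect, label_on_chart) for the three
# "Total" bars whose summed segments differ from the quoted label.
TOTAL_BARS = {
    "10k": (0.22, 0.52, 0.71),
    "25k": (0.21, 0.45, 0.71),
    "100k": (0.23, 0.24, 0.48),
}


def label_gaps(bars):
    """Return {size: summed segments minus chart label}, rounded to 2 places."""
    return {
        size: round(bottom + top - label, 2)
        for size, (bottom, top, label) in bars.items()
    }


gaps = label_gaps(TOTAL_BARS)
for size, gap in gaps.items():
    print(f"{size:>4}: segments - label = {gap:+.2f}")
```

A positive gap means the estimated segments overshoot the label, and a negative gap means they fall short; gaps larger than about 0.01 are harder to attribute to rounding alone.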

### Interpretation

The data suggests that the model performs better (higher Memory Hit Rate) with smaller datasets, particularly in the earlier Transformer Layers. This could indicate that the model is overfitting to the smaller datasets or that the benefits of larger datasets are not fully realized in the later layers. The consistent positive contribution of the "Correct - Incorrect" component suggests that the model is generally able to distinguish between correct and incorrect samples, but the overall hit rate is limited by the number of incorrect samples. The decreasing hit rate for larger datasets in the "Total" category is a notable anomaly that warrants further investigation. It could be due to increased complexity in the data or limitations in the model's capacity to handle larger datasets effectively. The discrepancies in the Total Hit Rate labels suggest a potential error in the chart's presentation or data calculation.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Stacked Bar Chart: Memory Hit Rate by Transformer Layer and Dataset Size

### Overview

This is a stacked bar chart visualizing the "Memory Hit Rate" across different Transformer layers (L1, L3, L5, L7) and a "Total" aggregate. The data is further broken down by five different dataset sizes (10k, 25k, 50k, 75k, 100k). Each bar is composed of two stacked components: "Incorrect Samples" at the bottom and "Correct - Incorrect" on top.

### Components/Axes

* **X-Axis (Horizontal):** Labeled "Transformer Layer". It contains five categorical groups: `L1`, `L3`, `L5`, `L7`, and `Total`.

* **Y-Axis (Vertical):** Labeled "Memory Hit Rate". It is a linear scale ranging from `0.0` to `0.7`, with major gridlines at intervals of 0.1.

* **Legend 1 (Top-Left):** Titled "Dataset Size". It maps colors to dataset sizes:

    * Dark Blue: `10k`

    * Yellow: `25k`

    * Orange: `50k`

    * Red: `75k`

    * Teal: `100k`

* **Legend 2 (Top-Center):** Titled "Stacked Components". It explains the bar stacking:

    * Lighter shade (bottom segment): `Incorrect Samples (Bottom)`

    * Darker shade (top segment): `Correct - Incorrect (Top)`

* **Data Labels:** Numerical values are printed directly on the bars. Values for the bottom segment are in black, and values for the top segment are in red.

### Detailed Analysis

The chart presents data for five Transformer Layer groups. Below is the extracted data for each group, organized by dataset size (color). For each bar, the total height is the "Memory Hit Rate", composed of the bottom segment ("Incorrect Samples") and the top segment ("Correct - Incorrect").

**Group: L1**

* **10k (Dark Blue):** Total = `0.38`. Bottom = `0.17`, Top = `0.21`.

* **25k (Yellow):** Total = `0.36`. Bottom = `0.06`, Top = `0.30`.

* **50k (Orange):** Total = `0.29`. Bottom = `0.05`, Top = `0.24`.

* **75k (Red):** Total = `0.34`. Bottom = `0.06`, Top = `0.29`.

* **100k (Teal):** Total = `0.23`. Bottom = `0.03`, Top = `0.20`.

**Group: L3**

* **10k (Dark Blue):** Total = `0.43`. Bottom = `0.20`, Top = `0.23`.

* **25k (Yellow):** Total = `0.36`. Bottom = `0.09`, Top = `0.27`.

* **50k (Orange):** Total = `0.35`. Bottom = `0.09`, Top = `0.26`.

* **75k (Red):** Total = `0.42`. Bottom = `0.10`, Top = `0.32`.

* **100k (Teal):** Total = `0.55`. Bottom = `0.13`, Top = `0.41`.

**Group: L5**

* **10k (Dark Blue):** Total = `0.13`. Bottom = `0.06`, Top = `0.07`.

* **25k (Yellow):** Total = `0.15`. Bottom = `0.03`, Top = `0.12`.

* **50k (Orange):** Total = `0.28`. Bottom = `0.04`, Top = `0.24`.

* **75k (Red):** Total = `0.14`. Bottom = `0.02`, Top = `0.11`.

* **100k (Teal):** Total = `0.27`. Bottom = `0.04`, Top = `0.23`.

**Group: L7**

* **10k (Dark Blue):** Total = `0.38`. Bottom = `0.18`, Top = `0.19`.

* **25k (Yellow):** Total = `0.40`. Bottom = `0.10`, Top = `0.30`.

* **50k (Orange):** Total = `0.32`. Bottom = `0.06`, Top = `0.26`.

* **75k (Red):** Total = `0.28`. Bottom = `0.05`, Top = `0.23`.

* **100k (Teal):** Total = `0.33`. Bottom = `0.08`, Top = `0.24`.

**Group: Total**

* **10k (Dark Blue):** Total = `0.71`. Bottom = `0.22`, Top = `0.49`.

* **25k (Yellow):** Total = `0.66`. Bottom = `0.21`, Top = `0.45`.

* **50k (Orange):** Total = `0.65`. Bottom = `0.21`, Top = `0.44`.

* **75k (Red):** Total = `0.71`. Bottom = `0.37`, Top = `0.34`.

* **100k (Teal):** Total = `0.71`. Bottom = `0.23`, Top = `0.48`.

### Key Observations

1. **Layer Performance Variability:** Memory Hit Rate is not uniform across layers. L5 shows the lowest overall performance (all totals ≤ 0.28), while the "Total" aggregate shows the highest (all totals ≥ 0.65).

2. **Dataset Size Impact:** The relationship between dataset size and hit rate is non-linear and layer-dependent.

    * In **L3**, the hit rate increases significantly with the largest dataset (100k: 0.55).

    * In **L1** and **L7**, the trend is less clear, with mid-sized datasets sometimes outperforming larger ones.

    * In the **Total** group, the 10k, 75k, and 100k datasets all achieve the highest observed hit rate of 0.71.

3. **Component Contribution:** The "Correct - Incorrect" (top, red label) component is generally the larger contributor to the total hit rate, except in the "Total" group for the 75k dataset, where the "Incorrect Samples" (bottom) component is larger (0.37 vs. 0.34).

4. **Notable Outlier:** The 100k dataset in **L3** (0.55) is a clear outlier, performing substantially better than other dataset sizes within that layer and better than the 100k dataset in any other individual layer.
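The component comparison in observation 3 can be reproduced by scanning every bar for cases where the bottom segment exceeds the top segment. The sketch below uses the segment values from the Detailed Analysis; `SEGMENTS` and `bottom_dominant` are hypothetical names introduced for this example.

```python
# (bottom, top) segment values per bar, in dataset-size order,
# transcribed from the Detailed Analysis listing.
SIZES = ["10k", "25k", "50k", "75k", "100k"]

SEGMENTS = {
    "L1": [(0.17, 0.21), (0.06, 0.30), (0.05, 0.24), (0.06, 0.29), (0.03, 0.20)],
    "L3": [(0.20, 0.23), (0.09, 0.27), (0.09, 0.26), (0.10, 0.32), (0.13, 0.41)],
    "L5": [(0.06, 0.07), (0.03, 0.12), (0.04, 0.24), (0.02, 0.11), (0.04, 0.23)],
    "L7": [(0.18, 0.19), (0.10, 0.30), (0.06, 0.26), (0.05, 0.23), (0.08, 0.24)],
    "Total": [(0.22, 0.49), (0.21, 0.45), (0.21, 0.44), (0.37, 0.34), (0.23, 0.48)],
}


def bottom_dominant(segments, sizes):
    """List (group, size) pairs where "Incorrect Samples" exceeds the top segment."""
    return [
        (group, size)
        for group, bars in segments.items()
        for size, (bottom, top) in zip(sizes, bars)
        if bottom > top
    ]


exceptions = bottom_dominant(SEGMENTS, SIZES)
print(exceptions)
```

For these readings the list contains a single bar, the 75k dataset in the "Total" group, which matches the exception named in observation 3.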

### Interpretation

This chart analyzes how a model's ability to "hit" or recall information from memory (Memory Hit Rate) is affected by the depth of the transformer layer and the amount of training data (Dataset Size). The "Total" column likely represents an aggregate across all layers rather than an average, since every Total value exceeds the highest per-layer value for the same dataset size. It shows the model's overall memory performance.
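One way to probe what the "Total" column might represent is to compare it with simple statistics of the per-layer totals: a plain average can never exceed the largest per-layer value, and a plain sum can never be smaller than it. The sketch below makes that comparison; `LAYER_TOTALS`, `TOTAL_COLUMN`, and `compare_total` are illustrative names, and the values are the totals transcribed from the Detailed Analysis.

```python
from statistics import mean

SIZES = ["10k", "25k", "50k", "75k", "100k"]

# Per-layer bar totals in dataset-size order.
LAYER_TOTALS = {
    "L1": [0.38, 0.36, 0.29, 0.34, 0.23],
    "L3": [0.43, 0.36, 0.35, 0.42, 0.55],
    "L5": [0.13, 0.15, 0.28, 0.14, 0.27],
    "L7": [0.38, 0.40, 0.32, 0.28, 0.33],
}
TOTAL_COLUMN = [0.71, 0.66, 0.65, 0.71, 0.71]


def compare_total(layer_totals, total_column, sizes):
    """Return (size, mean, max, sum, total) rows for each dataset size."""
    rows = []
    for i, size in enumerate(sizes):
        per_layer = [values[i] for values in layer_totals.values()]
        rows.append((size, mean(per_layer), max(per_layer),
                     sum(per_layer), total_column[i]))
    return rows


rows = compare_total(LAYER_TOTALS, TOTAL_COLUMN, SIZES)
for size, avg, peak, total_sum, total in rows:
    print(f"{size:>4}: mean={avg:.2f} max={peak:.2f} "
          f"sum={total_sum:.2f} total={total:.2f}")
```

For every dataset size the "Total" value lies above the per-layer maximum and below the per-layer sum. That would be consistent with a reading in which a sample counts once if it hits memory in any layer, though the chart itself does not confirm this definition.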

The data suggests that memory utilization is highly layer-specific. Middle layers like L5 appear to be bottlenecks for memory recall, regardless of dataset size. The exceptional performance of the 100k dataset in L3 indicates that this specific layer may benefit disproportionately from larger training data, perhaps becoming a specialized hub for memory retrieval.

The decomposition into "Incorrect Samples" and "Correct - Incorrect" provides insight into the *quality* of the memory hits. A high "Correct - Incorrect" value suggests the model is not just accessing memory but doing so accurately for correct predictions. The anomaly in the "Total" group for 75k, where "Incorrect Samples" dominate, could indicate that with this specific data size, the model's memory access becomes noisier or less precise, even if the overall hit rate remains high.

In summary, the chart demonstrates that optimizing memory in transformer models requires a nuanced, layer-aware approach, and that simply increasing dataset size does not uniformly improve memory performance across all parts of the model.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Bar Chart: Memory Hit Rate by Transformer Layer and Dataset Size

### Overview

The chart visualizes memory hit rates across transformer layers (L1, L3, L5, L7, Total) for five dataset sizes (10k, 25k, 50k, 75k, 100k). Each bar is stacked to show two components: "Correct - Incorrect" (top) and "Incorrect Samples" (bottom). The y-axis ranges from 0 to 0.7, with values labeled on top of each bar segment.

### Components/Axes

- **X-axis (Transformer Layer)**: Labeled "Transformer Layer" with categories: L1, L3, L5, L7, Total.

- **Y-axis (Memory Hit Rate)**: Labeled "Memory Hit Rate" with a scale from 0 to 0.7.

- **Legend**: Located in the top-right corner. Colors represent:

    - **Dataset Sizes**: Dark blue (10k), yellow (25k), orange (50k), red (75k), teal (100k).

    - **Stacked Components**: Gray (Correct - Incorrect, top) and dark gray (Incorrect Samples, bottom).

### Detailed Analysis

#### Dataset Size Trends

- **10k (Dark Blue)**:

    - L1: 0.38 (Correct - Incorrect), 0.21 (Incorrect Samples)

    - L3: 0.43, 0.23

    - L5: 0.13, 0.07

    - L7: 0.38, 0.18

    - Total: 0.71, 0.23

- **25k (Yellow)**:

    - L1: 0.36, 0.30

    - L3: 0.36, 0.27

    - L5: 0.12, 0.09

    - L7: 0.40, 0.30

    - Total: 0.66, 0.21

- **50k (Orange)**:

    - L1: 0.34, 0.29

    - L3: 0.35, 0.26

    - L5: 0.28, 0.24

    - L7: 0.32, 0.26

    - Total: 0.65, 0.21

- **75k (Red)**:

    - L1: 0.34, 0.29

    - L3: 0.42, 0.32

    - L5: 0.14, 0.11

    - L7: 0.23, 0.23

    - Total: 0.71, 0.37

- **100k (Teal)**:

    - L1: 0.23, 0.20

    - L3: 0.55, 0.41

    - L5: 0.27, 0.23

    - L7: 0.33, 0.24

    - Total: 0.71, 0.48

#### Stacked Component Trends

- **Correct - Incorrect (Gray)**:

    - Does not increase uniformly with dataset size: in L1 the listed value falls from 0.38 (10k) to 0.23 (100k), while in L3 it is highest at 100k (0.55).

    - Among the individual layers, peaks in L3 for the 75k and 100k datasets (0.42 and 0.55).

- **Incorrect Samples (Dark Gray)**:

    - Larger datasets show higher values mainly in L3 (0.32 at 75k and 0.41 at 100k, against 0.23 to 0.27 for the smaller datasets).

    - In L7 the listed values stay between 0.18 and 0.30 with no clear trend.

### Key Observations

1. **Total Layer Dominance**: The "Total" category shows the highest memory hit rates for every dataset size, with the 10k, 75k, and 100k datasets each reaching 0.71.

2. **Layer-Specific Peaks**:

    - L3 shows the largest per-layer value for the 100k dataset (0.55, against 0.23 in L1); L7 shows no comparable increase (0.33 at 100k).

    - L5 generally has the lowest values (0.12 to 0.28), suggesting reduced memory efficiency in mid-layers.

3. **Incorrect Samples Contribution**: The larger datasets (75k, 100k) show the highest "Incorrect Samples" values in L3 (0.32 and 0.41); L7 shows no such increase.

### Interpretation

The data suggests that:

- **Dataset Size Impacts Memory Efficiency**: The effect of dataset size is not uniform: the 100k dataset has the highest listed hit rate in L3 (0.55) but the lowest in L1 (0.23).

- **Layer-Specific Behavior**: L3 shows the sharpest increase for the largest dataset, L1 declines as dataset size grows, and the mid-layer L5 stays comparatively low, possibly due to architectural bottlenecks.

- **Error Propagation**: The "Incorrect Samples" component is largest for the 75k and 100k datasets in L3, which may suggest that larger datasets introduce more noise or edge cases that the model struggles to handle. The listed values do not show the same growth in the deeper L7 layer.

This pattern may be consistent with transformer models' tendency to rely on early layers for basic feature extraction and later layers for complex reasoning, where larger datasets may exacerbate inefficiencies.
