## Flow Diagram: Energy-Aware Optimization Engine

### Overview

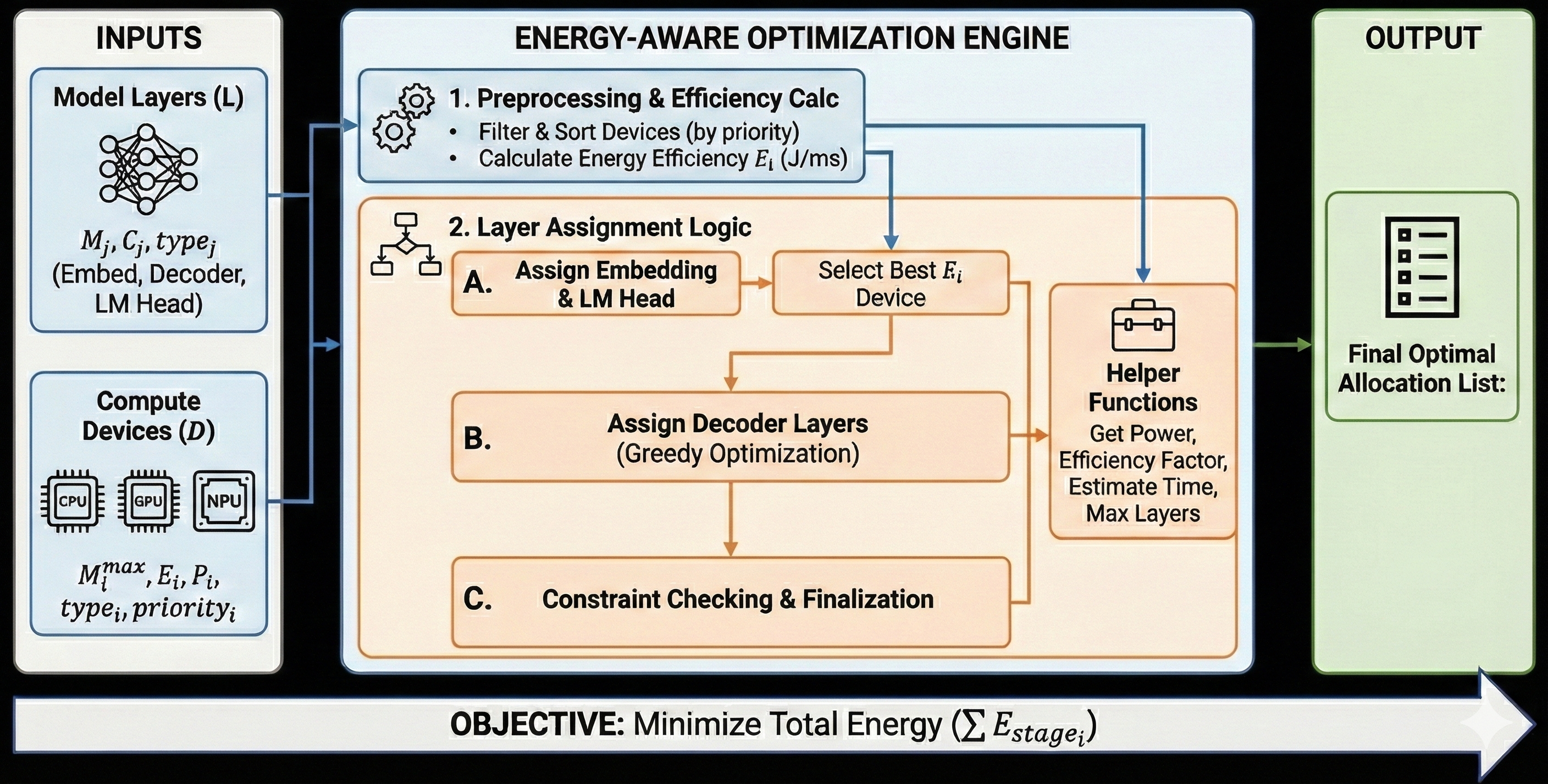

The image is a flow diagram of an energy-aware optimization engine that assigns model layers to compute devices. It shows the engine's inputs, processing steps, and output, all directed at minimizing total energy consumption.

### Components

The diagram is divided into three main sections: INPUTS (left), ENERGY-AWARE OPTIMIZATION ENGINE (center), and OUTPUT (right).

**INPUTS (Left)**

* **Model Layers ($L$):** Represented by a neural network icon. Each layer $j$ has parameters $M_j$, $C_j$, and $\text{type}_j$, where $\text{type}_j$ is Embed, Decoder, or LM Head.

* **Compute Devices ($D$):** Represented by CPU, GPU, and NPU icons. Each device $i$ has parameters $M^{\max}_i$, $E_i$, $P_i$, $\text{type}_i$, and $\text{priority}_i$.
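As a minimal sketch, the two inputs might be modeled as the Python dataclasses below. The field names, the units (MB, watts, abstract work units), the `build_layers` helper, and the reading of $M_j$ as memory footprint and $C_j$ as compute cost are all assumptions; the diagram gives only the symbols.

```python
from dataclasses import dataclass
from typing import List, Literal

# Hypothetical field names and units; the diagram only names the symbols.
LayerType = Literal["Embed", "Decoder", "LM Head"]
DeviceType = Literal["CPU", "GPU", "NPU"]


@dataclass(frozen=True)
class Layer:
    mem: float        # M_j: memory footprint (assumed MB)
    compute: float    # C_j: compute cost (assumed abstract work units)
    type: LayerType   # type_j


@dataclass(frozen=True)
class Device:
    mem_max: float    # M_i^max: memory capacity (assumed MB)
    energy: float     # E_i: energy per ms of busy time (J/ms)
    power: float      # P_i: power draw (assumed watts)
    type: DeviceType  # type_i
    priority: int     # priority_i (assumed: lower value = preferred)


def build_layers(n_decoders: int, embed: Layer, decoder: Layer, head: Layer) -> List[Layer]:
    """Hypothetical helper: embedding, then n decoder layers, then the LM head."""
    return [embed] + [decoder] * n_decoders + [head]


# Illustrative values only (15 W = 0.015 J/ms, and so on).
layers = build_layers(
    4,
    Layer(200, 5, "Embed"),
    Layer(300, 20, "Decoder"),
    Layer(200, 5, "LM Head"),
)
devices = [
    Device(8192, 0.015, 15.0, "CPU", 2),
    Device(4096, 0.060, 60.0, "GPU", 1),
    Device(1024, 0.005, 5.0, "NPU", 0),
]
```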

**ENERGY-AWARE OPTIMIZATION ENGINE (Center)**

This section is the core of the diagram and is further divided into two main stages:

* **1. Preprocessing & Efficiency Calc:**

  * Filter & Sort Devices (by priority)

  * Calculate Energy Efficiency $E_i$ (J/ms)

* **2. Layer Assignment Logic:**

  * **A. Assign Embedding & LM Head:** Flows to "Select Best $E_i$ Device".

  * **Select Best $E_i$ Device:** Flows to "Helper Functions" and "Assign Decoder Layers".

  * **B. Assign Decoder Layers (Greedy Optimization):** Flows to "Helper Functions" and "Constraint Checking & Finalization".

  * **C. Constraint Checking & Finalization:** Flows to "Helper Functions".

* **Helper Functions:** Includes the Get Power, Efficiency Factor, Estimate Time, and Max Layers functions.
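The preprocessing stage and the four helper functions can be sketched as follows. This is a hypothetical interpretation: it assumes $P_i$ is power in watts and derives $E_i$ in J/ms as $P_i / 1000$, introduces an invented per-device `throughput` (work units per ms) to estimate time, reads "Efficiency Factor" as joules per work unit, and reads "Max Layers" as how many equally sized layers fit in $M^{\max}_i$. The `available` flag stands in for whatever the "Filter" step actually checks.

```python
import math
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Device:
    name: str
    type: str               # type_i: "CPU", "GPU", or "NPU"
    mem_max: float          # M_i^max (assumed MB)
    power: float            # P_i (assumed watts)
    throughput: float       # hypothetical: work units processed per ms
    priority: int           # priority_i (assumed: lower value = preferred)
    available: bool = True  # hypothetical availability flag


def get_power(dev: Device) -> float:
    """Power draw in watts (J/s)."""
    return dev.power


def energy_per_ms(dev: Device) -> float:
    """E_i in J/ms: watts divided by 1000 ms per second."""
    return get_power(dev) / 1000.0


def estimate_time(compute: float, dev: Device) -> float:
    """Estimated busy time in ms for `compute` work units."""
    return compute / dev.throughput


def efficiency_factor(dev: Device) -> float:
    """Joules per work unit (lower is better): E_i times ms per work unit."""
    return energy_per_ms(dev) * estimate_time(1.0, dev)


def max_layers(dev: Device, layer_mem: float) -> int:
    """Number of layers of `layer_mem` MB that fit in the device's memory."""
    return math.floor(dev.mem_max / layer_mem)


def preprocess(devices: List[Device]) -> List[Tuple[Device, float]]:
    """Stage 1: drop unusable devices, sort by priority, attach E_i (J/ms)."""
    usable = [d for d in devices if d.available and d.mem_max > 0]
    usable.sort(key=lambda d: d.priority)
    return [(d, energy_per_ms(d)) for d in usable]


# Illustrative devices; npu1 is filtered out because it is unavailable.
devices = [
    Device("cpu0", "CPU", 8192, 15.0, 2.0, priority=2),
    Device("gpu0", "GPU", 4096, 60.0, 20.0, priority=1),
    Device("npu0", "NPU", 1024, 5.0, 4.0, priority=0),
    Device("npu1", "NPU", 1024, 5.0, 4.0, priority=3, available=False),
]
ranked = preprocess(devices)
for dev, e in ranked:
    print(dev.name, e, efficiency_factor(dev), max_layers(dev, 300))
```

Note that sorting by priority and ranking by efficiency are separate here: with these made-up numbers the NPU happens to lead on both, but a high-priority device is not necessarily the most energy-efficient one.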

**OUTPUT (Right)**

* **Final Optimal Allocation List:** Represented by a list icon.

**Objective (Bottom)**

* **OBJECTIVE:** Minimize Total Energy ($\sum_i E_{\text{stage}_i}$)
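The diagram states only the sum. One way to write the full problem, assuming a binary assignment variable $x_{ij}$ (1 if layer $j$ runs on device $i$), a hypothetical device throughput $s_i$ so that the estimated time is $t_{ij} = C_j / s_i$ in ms, and that $M_j$ and $M^{\max}_i$ are memory sizes, is:

```latex
\begin{aligned}
\min_{x}\; & \sum_{i \in D} E_{\text{stage}_i},
  \qquad E_{\text{stage}_i} = E_i \sum_{j \in L} x_{ij}\, t_{ij},
  \qquad t_{ij} = \frac{C_j}{s_i} \\
\text{s.t.}\; & \sum_{i \in D} x_{ij} = 1 \quad \forall j \in L,
  \qquad \sum_{j \in L} x_{ij} M_j \le M^{\max}_i \quad \forall i \in D,
  \qquad x_{ij} \in \{0, 1\}.
\end{aligned}
```

With $E_i$ in J/ms and $t_{ij}$ in ms, each stage energy comes out in joules. Under these assumptions the problem has the structure of a generalized assignment problem (an integer linear program), which is why a greedy pass is an attractive, if not guaranteed-optimal, way to solve it.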

### Detailed Analysis

* **INPUTS:**

  * Model Layers ($L$) are characterized by $M_j$, $C_j$, and $\text{type}_j$, where $\text{type}_j$ is Embed, Decoder, or LM Head.

  * Compute Devices ($D$) are characterized by $M^{\max}_i$, $E_i$, $P_i$, $\text{type}_i$, and $\text{priority}_i$. The device types are CPU, GPU, and NPU.

* **ENERGY-AWARE OPTIMIZATION ENGINE:**

  * The engine starts with preprocessing: devices are filtered and sorted by priority, and each device's energy efficiency $E_i$ is calculated in J/ms.

  * The layer assignment logic then assigns the embedding and LM head layers, selects the device with the best $E_i$, assigns the decoder layers by greedy optimization, and finally checks constraints and finalizes the allocation.

  * Helper functions, called from each assignment step, supply power, an efficiency factor, time estimates, and the maximum number of layers (Max Layers).

* **OUTPUT:**

  * The output is the final allocation list, labeled "optimal" in the diagram.

* **FLOW:**

  * The flow starts from the INPUTS, passes through the ENERGY-AWARE OPTIMIZATION ENGINE, and ends at the OUTPUT.

  * The objective is to minimize total energy, $\sum_i E_{\text{stage}_i}$.
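Stages A to C can be sketched as a single greedy pass. This is an illustration under stated assumptions, not the engine's actual code: $E_i$ is taken as $P_i / 1000$ J/ms, a hypothetical `throughput` field drives the time estimate, lower `priority` values are preferred, "best $E_i$ device" is read as the device with the lowest estimated energy for the layer being placed, and constraint checking is reduced to memory capacity.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Layer:
    name: str
    type: str       # type_j: "Embed", "Decoder", or "LM Head"
    mem: float      # M_j (assumed MB)
    compute: float  # C_j (assumed work units)


@dataclass(frozen=True)
class Device:
    name: str
    mem_max: float     # M_i^max (assumed MB)
    power: float       # P_i (assumed watts)
    throughput: float  # hypothetical: work units per ms
    priority: int      # priority_i (assumed: lower value = preferred)


def layer_energy(layer: Layer, dev: Device) -> float:
    """Joules for `layer` on `dev`: E_i (J/ms) times estimated time (ms)."""
    return (dev.power / 1000.0) * (layer.compute / dev.throughput)


def allocate(layers: List[Layer], devices: List[Device]) -> List[Tuple[str, str]]:
    # Stage 1: sort by priority so that min() below breaks energy ties by priority.
    devs = sorted(devices, key=lambda d: d.priority)
    free: Dict[str, float] = {d.name: d.mem_max for d in devs}
    plan: Dict[str, str] = {}

    def place(layer: Layer) -> None:
        fits = [d for d in devs if free[d.name] >= layer.mem]
        if not fits:
            raise ValueError(f"no device has room for {layer.name}")
        best = min(fits, key=lambda d: layer_energy(layer, d))
        free[best.name] -= layer.mem
        plan[layer.name] = best.name

    # A: place the embedding and LM head first, on the lowest-energy device with room.
    for layer in layers:
        if layer.type in ("Embed", "LM Head"):
            place(layer)
    # B: greedily place each decoder layer on the cheapest device that still fits.
    for layer in layers:
        if layer.type == "Decoder":
            place(layer)
    # C: constraint check and finalization, returned in the original layer order.
    if len(plan) != len(layers):
        raise ValueError("some layers were not assigned")
    if any(v < 0 for v in free.values()):
        raise ValueError("a device exceeded its memory capacity")
    return [(layer.name, plan[layer.name]) for layer in layers]


layers = (
    [Layer("embed", "Embed", 200, 5)]
    + [Layer(f"dec{k}", "Decoder", 300, 20) for k in range(4)]
    + [Layer("lm_head", "LM Head", 200, 5)]
)
devices = [
    Device("cpu0", 8000, 15.0, 2.0, priority=2),
    Device("gpu0", 4000, 60.0, 20.0, priority=1),
    Device("npu0", 1000, 5.0, 4.0, priority=0),
]
print(allocate(layers, devices))
# [('embed', 'npu0'), ('dec0', 'npu0'), ('dec1', 'npu0'), ('dec2', 'gpu0'), ('dec3', 'gpu0'), ('lm_head', 'npu0')]
```

With these made-up numbers the NPU is cheapest per unit of work, so it receives the embedding, the LM head, and two decoder layers until its memory runs out; the remaining decoders spill onto the next-cheapest device, the GPU.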

### Key Observations

* The engine takes two inputs: the model's layers and the available compute devices.

* Devices are filtered, sorted by priority, and scored for energy efficiency before any layer is assigned.

* Embedding and LM head layers are assigned first; decoder layers are then placed greedily, and the result passes through constraint checking before it is output.

* The stated objective is to minimize total energy consumption, summed over stages.

### Interpretation

The diagram gives a high-level view of an energy-aware optimization engine that allocates model layers to compute devices so as to minimize energy consumption. Devices are first filtered and sorted, layers are then assigned according to energy efficiency, and helper functions supply power and time estimates along the way. The output is an allocation list intended to keep total energy low while satisfying the checked constraints. Using greedy optimization for the decoder layers suggests a heuristic: it finds a good allocation quickly but does not guarantee a global optimum, even though the output is labeled "optimal". The objective function states the goal explicitly as minimizing total energy, a critical consideration in resource-constrained environments.
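Because greedy choices are made one layer at a time, they can miss the global optimum. The toy comparison below uses invented numbers and a simplified cost model (each device has a fixed cost in joules per work unit) to check a greedy placement against exhaustive search over every assignment. It is a constructed example showing that a gap can exist, not a measurement of how large the gap is for real models.

```python
from itertools import product
from typing import Optional, Tuple

# Hypothetical toy instance (illustrative numbers only):
# layers as (memory MB, work units); devices as (capacity MB, joules per work unit).
LAYERS = [(300, 5), (500, 30), (200, 10)]
DEVICES = [(700, 0.001), (2000, 0.004)]


def energy(assign: Tuple[int, ...]) -> Optional[float]:
    """Total energy of an assignment, or None if any device overflows."""
    used = [0] * len(DEVICES)
    total = 0.0
    for (mem, work), i in zip(LAYERS, assign):
        used[i] += mem
        total += work * DEVICES[i][1]
    if any(u > DEVICES[i][0] for i, u in enumerate(used)):
        return None
    return total


def greedy() -> Tuple[int, ...]:
    """Place layers in order, each on the cheapest device that still has room."""
    free = [cap for cap, _ in DEVICES]
    order = sorted(range(len(DEVICES)), key=lambda i: DEVICES[i][1])
    assign = []
    for mem, _ in LAYERS:
        i = next((i for i in order if free[i] >= mem), None)
        if i is None:
            raise ValueError("no device has room for this layer")
        free[i] -= mem
        assign.append(i)
    return tuple(assign)


def exhaustive() -> Tuple[float, Tuple[int, ...]]:
    """Try every |D|^|L| assignment; only practical for tiny instances."""
    feasible = []
    for assign in product(range(len(DEVICES)), repeat=len(LAYERS)):
        e = energy(assign)
        if e is not None:
            feasible.append((e, assign))
    return min(feasible)


g = greedy()
best_e, best_a = exhaustive()
print("greedy:", g, energy(g))
print("optimal:", best_a, best_e)
```

Here greedy puts the small first layer on the cheap device, which then lacks room for the large, compute-heavy second layer; exhaustive search instead reserves the cheap device for the second and third layers and uses less energy overall.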