
## Diagram: Energy-Aware Optimization Engine

### Overview

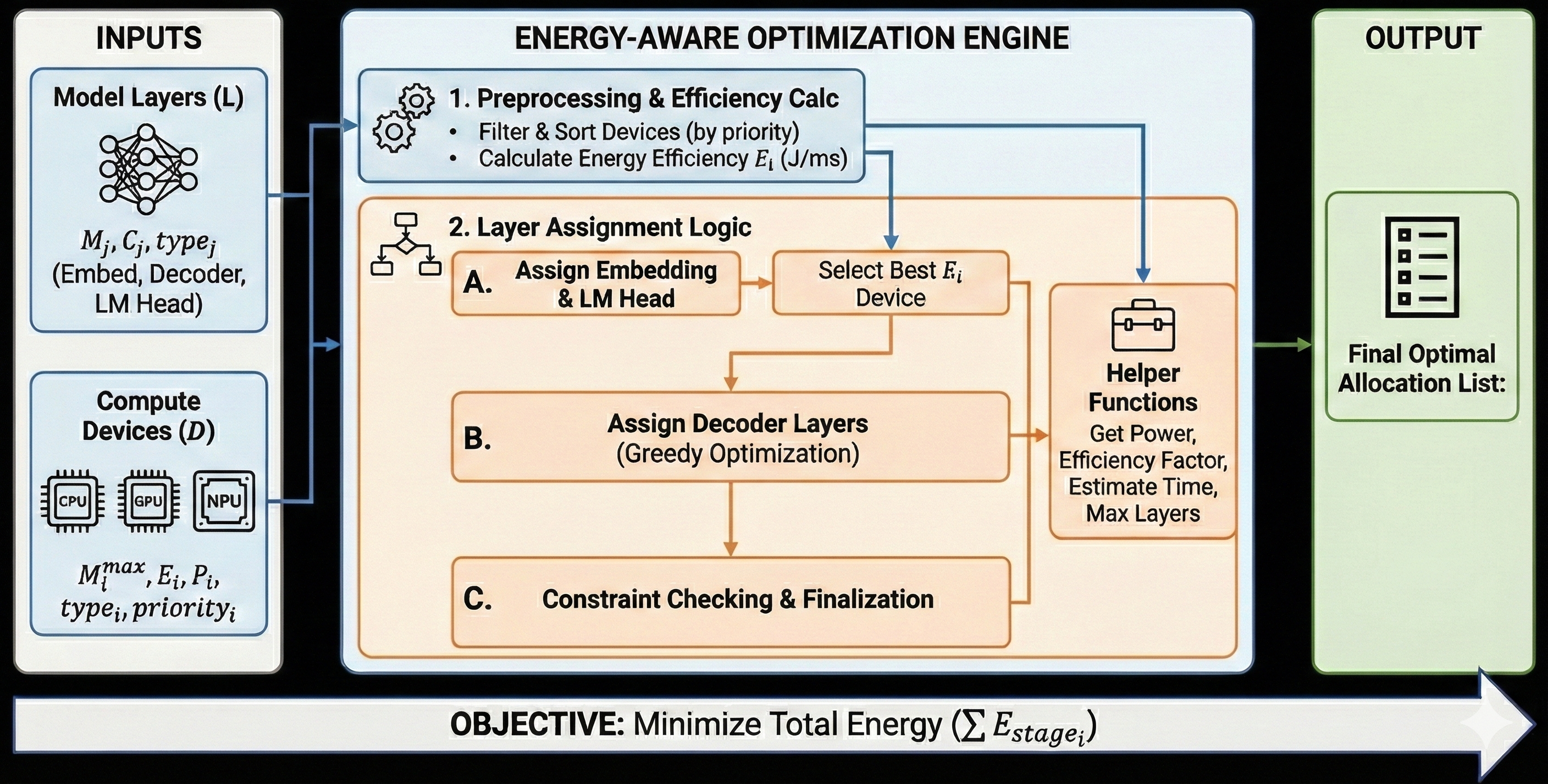

This diagram illustrates the flow of an energy-aware optimization engine that takes model layers and compute devices as inputs and produces a final optimal allocation list as output. The engine has two numbered stages: preprocessing & efficiency calculation, and layer assignment logic, which ends with a constraint checking & finalization step.

### Components

The diagram is segmented into three main sections: "INPUTS" (left), "ENERGY-AWARE OPTIMIZATION ENGINE" (center), and "OUTPUT" (right).

**Inputs:**

* **Model Layers (L):** Represented by a neural network graphic. Parameters include: `Mᵢ`, `Cᵢ`, `type` (Embed, Decoder, LM Head).

* **Compute Devices (D):** Represented by icons for CPU, GPU, and NPU. Parameters include: `Mᵢᵐᵃˣ`, `Eᵢ`, `Pᵢ`, `type`, `priorityᵢ`.
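The two inputs map naturally onto small record types. The sketch below is one possible representation, not something the diagram specifies: the field names, the units, the reading of `Cᵢ` as a compute cost, and the `build_model` helper are all assumptions made for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class LayerType(Enum):
    EMBED = "embed"
    DECODER = "decoder"
    LM_HEAD = "lm_head"


@dataclass(frozen=True)
class Layer:
    memory: float      # M_i; unit is an assumption (e.g. MB)
    cost: float        # C_i; read here as a compute cost, which the diagram does not confirm
    type: LayerType


@dataclass(frozen=True)
class Device:
    name: str          # hypothetical label, e.g. "npu0"
    kind: str          # "cpu", "gpu", or "npu"
    max_memory: float  # M_i^max, same unit as Layer.memory
    efficiency: float  # E_i, labeled J/ms in the diagram
    power: float       # P_i
    priority: int      # priority_i; "lower value is preferred" is an assumption


def build_model(n_decoder: int, memory: float, cost: float) -> list[Layer]:
    """Toy model: one embedding layer, n decoder layers, one LM head, all equally sized."""
    return (
        [Layer(memory, cost, LayerType.EMBED)]
        + [Layer(memory, cost, LayerType.DECODER) for _ in range(n_decoder)]
        + [Layer(memory, cost, LayerType.LM_HEAD)]
    )
```

Frozen dataclasses keep the inputs immutable, so an allocation routine can pass them around without accidentally modifying a device's capacity.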

**Energy-Aware Optimization Engine:**

* **1. Preprocessing & Efficiency Calc:** Includes bullet points: "Filter & Sort Devices (by priority)" and "Calculate Energy Efficiency Eᵢ (J/ms)".

* **2. Layer Assignment Logic:** Subdivided into:

    * **A. Assign Embedding & LM Head:** An arrow points to "Select Best Eᵢ Device".

    * **B. Assign Decoder Layers (Greedy Optimization):** An arrow points to "Helper Functions".

    * **C. Constraint Checking & Finalization.**

* **Helper Functions:** Includes bullet points: "Get Power", "Efficiency Factor", "Estimate Time", "Max Layers".

**Output:**

* **Final Optimal Allocation List:** Represented by a list icon.

**Overall Objective:** "Minimize Total Energy (Σ Estageᵢ)" is stated at the bottom.

### Detailed Analysis

The diagram depicts a sequential process.

1. **Inputs:** The process begins with two inputs: Model Layers (L) and Compute Devices (D). Each model layer carries a memory size (Mᵢ), a second parameter Cᵢ that the diagram does not define (most plausibly a compute cost), and a type (Embed, Decoder, or LM Head). Each compute device carries a maximum memory (Mᵢᵐᵃˣ), an energy efficiency (Eᵢ), a power figure (Pᵢ), a type, and a priority (priorityᵢ).

2. **Preprocessing & Efficiency Calculation:** The first stage filters and sorts the compute devices based on their priority and calculates the energy efficiency (Eᵢ) in Joules per millisecond (J/ms).

3. **Layer Assignment Logic:** This stage is divided into three sub-stages:

    * **Embedding & LM Head Assignment (A):** The embedding and language model head layers are assigned to the compute device with the best energy efficiency (Eᵢ).

    * **Decoder Layer Assignment (B):** Decoder layers are assigned greedily, with the helper functions supplying the per-device estimates used to choose a device for each layer.

    * **Constraint Checking & Finalization (C):** The allocation is checked against constraints and finalized. The diagram does not name the constraints; the device memory limit (Mᵢᵐᵃˣ) is the most likely candidate given the inputs.

4. **Helper Functions:** These functions provide supporting information for the layer assignment process, including power consumption, efficiency factor, estimated time, and maximum layer capacity.

5. **Output:** The final output is a list representing the optimal allocation of model layers to compute devices.
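The steps above can be sketched end to end in a few dozen lines. This is a minimal illustration under stated assumptions, not the engine's actual algorithm, which the diagram does not specify: the linear time model (`ms_per_cost`), the "energy = power × time" estimate, the filter rule, the tie-breaking by priority, and every name below are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Layer:
    kind: str      # "embed", "decoder", or "lm_head"
    memory: float  # M_i (unit assumed: MB)
    cost: float    # C_i (assumed: abstract compute cost)


@dataclass(frozen=True)
class Device:
    name: str           # hypothetical label
    max_memory: float   # M_i^max (MB)
    power_w: float      # P_i, assumed to be in watts
    ms_per_cost: float  # hypothetical: milliseconds per unit of layer cost
    priority: int       # assumed: lower value = higher priority


def estimate_time(layer: Layer, dev: Device) -> float:
    """'Estimate Time' helper: milliseconds for one layer (assumed linear model)."""
    return layer.cost * dev.ms_per_cost


def stage_energy(layer: Layer, dev: Device) -> float:
    """Energy in joules: watts (J/s) times seconds."""
    return dev.power_w * estimate_time(layer, dev) / 1000.0


def allocate(layers: list[Layer], devices: list[Device]) -> list[tuple[int, str]]:
    # 1. Preprocessing: drop devices with no memory, then sort by priority.
    usable = sorted((d for d in devices if d.max_memory > 0), key=lambda d: d.priority)
    free = {d.name: d.max_memory for d in usable}

    def pick(layer: Layer) -> Device:
        # Lowest-energy device that still has room; min() returns the first
        # minimum, so ties fall to the higher-priority device.
        fits = [d for d in usable if free[d.name] >= layer.memory]
        if not fits:
            raise ValueError(f"no device can hold a {layer.kind} layer")
        return min(fits, key=lambda d: stage_energy(layer, d))

    # 2A. Embedding and LM head first, then 2B. decoder layers, one at a time.
    order = [i for i, l in enumerate(layers) if l.kind != "decoder"]
    order += [i for i, l in enumerate(layers) if l.kind == "decoder"]
    allocation: dict[int, str] = {}
    for i in order:
        dev = pick(layers[i])
        free[dev.name] -= layers[i].memory
        allocation[i] = dev.name

    # 2C. Constraint check: every layer placed, no device over capacity.
    if len(allocation) != len(layers) or any(v < 0 for v in free.values()):
        raise RuntimeError("allocation violates a constraint")
    return sorted(allocation.items())


def total_energy(layers: list[Layer], devices: list[Device],
                 allocation: list[tuple[int, str]]) -> float:
    """Sum of per-layer energy estimates for a finished allocation."""
    by_name = {d.name: d for d in devices}
    return sum(stage_energy(layers[i], by_name[name]) for i, name in allocation)
```

Calling `allocate(layers, devices)` returns a list of `(layer_index, device_name)` pairs. Because embedding and LM head layers claim memory first, a pass like this can push later decoder layers onto a less efficient device; it is cheap to run but not guaranteed to reach the minimum-energy allocation.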

The overall objective of the engine is to minimize the total energy consumption (Σ Estageᵢ).
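Written as an optimization problem, the objective might look like the following. Only the sum $\sum_i E_{\text{stage}_i}$ comes from the diagram; the device index $j$, the assignment $a(i)$, the time estimate $t_{i,a(i)}$, the "power times time" form of the stage energy, and the memory constraint are assumptions added to make the expression concrete.

$$
\min_{a}\; E_{\text{total}} = \sum_{i} E_{\text{stage}_i},
\qquad
E_{\text{stage}_i} = P_{a(i)}\, t_{i,a(i)}
$$

$$
\text{subject to}\quad \sum_{i \,:\, a(i) = j} M_i \;\le\; M_j^{\max} \quad \text{for every device } j
$$

Here $a(i)$ denotes the device assigned to layer $i$, and $t_{i,a(i)}$ is the estimated time for that layer on that device.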

### Key Observations

The diagram emphasizes priority-based device selection and a greedy strategy for decoder layer assignment. Grouping the power, efficiency, time, and capacity estimates into separate helper functions suggests a modular design in which each estimate can be refined independently. Every stage serves the single stated objective of minimizing total energy.

### Interpretation

This diagram represents a system that reduces the energy cost of running a machine learning model by allocating its layers across heterogeneous compute devices (CPU, GPU, NPU) according to each device's energy efficiency and priority. Placement depends on both hardware characteristics (memory, power, efficiency) and layer type (embedding, decoder, LM head): embedding and LM head layers go to the single most efficient device, while decoder layers are distributed greedily. A greedy pass is cheap to compute but is not guaranteed to find the minimum-energy allocation, so the design trades optimality for low planning cost. Making total energy the sole objective points to deployments where energy is the limiting resource, such as resource-constrained environments.

The diagram is a high-level overview; it does not specify the algorithms used for filtering, sorting, or greedy assignment.