## Flowchart: ENERGY-AWARE OPTIMIZATION ENGINE

### Overview

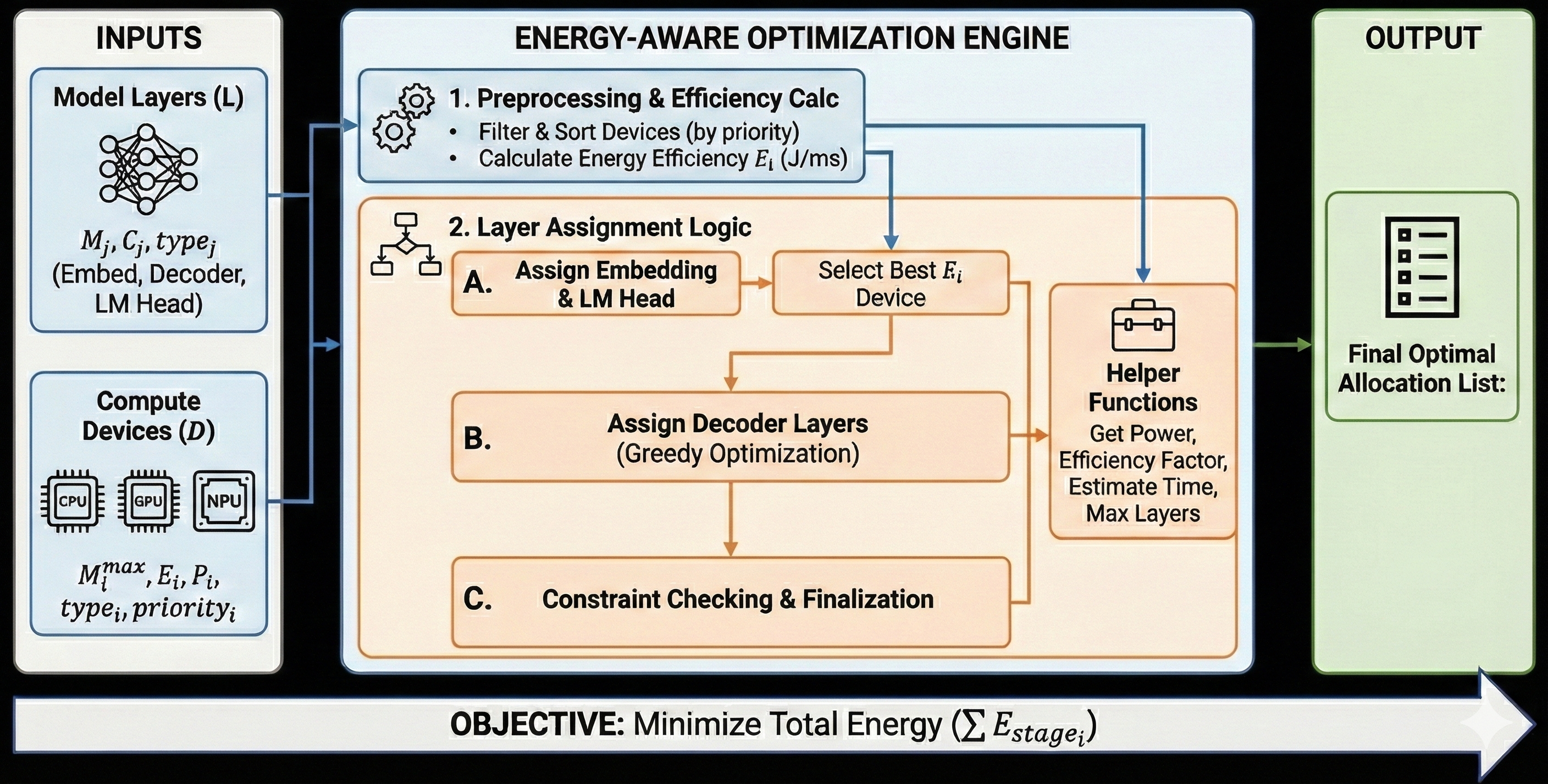

The diagram illustrates a multi-stage optimization engine that minimizes total energy consumption (`ΣE_stage_i`) when deploying machine learning models. It takes model layers and compute devices as inputs, applies energy-aware layer assignment logic, and outputs a final allocation list.

### Components/Axes

#### INPUTS

1. **Model Layers (L)**

- Parameters: `M_j`, `C_j`, `type_j` (Embed, Decoder, LM Head)

- Visualized as interconnected nodes.

2. **Compute Devices (D)**

- Types: CPU, GPU, NPU

- Attributes: `M_i^max` (max memory), `E_i` (energy efficiency, J/ms), `P_i` (power), `type_i`, `priority_i`

- Visualized as hardware icons.
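As a minimal sketch, the two inputs could be modeled as plain records. The field names, the `name` label, and the reading of `M_j` as memory footprint and `C_j` as compute cost are assumptions; the diagram gives only the symbols. All numeric values are placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Layer:
    memory: float   # M_j (assumed: memory footprint of layer j)
    compute: float  # C_j (assumed: compute cost of layer j)
    type: str       # type_j: "embed", "decoder", or "lm_head"


@dataclass(frozen=True)
class Device:
    name: str          # hypothetical label, not shown in the diagram
    max_memory: float  # M_i^max
    energy: float      # E_i
    power: float       # P_i
    type: str          # type_i: "cpu", "gpu", or "npu"
    priority: int      # priority_i


# Placeholder values chosen only to make the example run.
layers = (
    [Layer(memory=1.0, compute=0.5, type="embed")]
    + [Layer(memory=2.0, compute=4.0, type="decoder") for _ in range(3)]
    + [Layer(memory=1.0, compute=0.5, type="lm_head")]
)
devices = [
    Device("npu0", max_memory=4.0, energy=0.01, power=10.0, type="npu", priority=1),
    Device("gpu0", max_memory=16.0, energy=0.25, power=250.0, type="gpu", priority=2),
]
```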

#### ENGINE

1. **Preprocessing & Efficiency Calc**

- Tasks:

- Filter & Sort Devices (by priority)

- Calculate Energy Efficiency `E_i` (J/ms)

2. **Layer Assignment Logic**

- **A. Assign Embedding & LM Head**

- **B. Assign Decoder Layers** (Greedy Optimization)

- **C. Constraint Checking & Finalization**

3. **Helper Functions**

- Tasks:

- Get Power

- Efficiency Factor

- Estimate Time

- Max Layers
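The diagram names the four helpers but not their signatures or formulas. The sketch below is one plausible reading: every parameter name, the watt and millisecond units, the throughput-based time model, and the extra `stage_energy_j` function are assumptions made for illustration.

```python
from typing import Mapping


def get_power(device: Mapping[str, float]) -> float:
    """Return P_i, assumed to be stored in watts under a hypothetical key."""
    return device["power_w"]


def efficiency_factor(device_type: str, factors: Mapping[str, float]) -> float:
    """Look up a per-type multiplier; unknown types fall back to 1.0 (assumption)."""
    return factors.get(device_type, 1.0)


def estimate_time_ms(compute_cost: float, throughput_per_ms: float, factor: float) -> float:
    """Estimated run time: work divided by effective throughput (assumed model)."""
    return compute_cost / (throughput_per_ms * factor)


def max_layers(max_memory: float, layer_memory: float) -> int:
    """Largest number of equally sized layers that fit within M_i^max."""
    return int(max_memory // layer_memory)


def stage_energy_j(power_w: float, time_ms: float) -> float:
    """Energy in joules, using 1 W = 0.001 J/ms (not a helper named in the diagram)."""
    return power_w / 1000.0 * time_ms
```

Under these assumptions, a layer's energy on a device would be `stage_energy_j(get_power(device), estimate_time_ms(...))`, and `max_layers` bounds how many decoder layers a device can take before the memory constraint is hit.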

#### OUTPUT

- **Final Optimal Allocation List** (checklist icon)

### Detailed Analysis

- **Flow Direction**: Inputs → Preprocessing → Layer Assignment → Helper Functions → Output.

- **Key Relationships**:

- Energy efficiency (`E_i`) directly influences device selection.

- Greedy optimization is applied to decoder layers, prioritizing immediate energy savings.

- Constraints ensure feasibility (e.g., memory, power limits).
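The objective and the memory constraint can be written as an optimization problem. This is a sketch, not a formulation taken from the diagram: the binary variable $x_{ij}$ (1 when layer $j$ is placed on device $i$) is notation introduced here, and `E_stage_i` is left unexpanded because the diagram does not say how it is computed.

$$
\begin{aligned}
\min_{x}\quad & \sum_{i \in D} E_{\text{stage}_i} \\
\text{s.t.}\quad & \sum_{j \in L} x_{ij}\, M_j \le M_i^{\max} && \forall i \in D \\
& \sum_{i \in D} x_{ij} = 1 && \forall j \in L \\
& x_{ij} \in \{0, 1\}
\end{aligned}
$$

The second constraint states that every layer is assigned to exactly one device.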

### Key Observations

- **Energy-Centric Design**: All stages prioritize minimizing `ΣE_stage_i`.

- **Hierarchical Optimization**:

- Preprocessing filters devices by priority before efficiency calculations.

- Layer assignment balances greedy optimization (decoder layers) with constraint adherence.

- **Modularity**: Helper functions abstract power, efficiency, and time estimation.
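The preprocessing order described above (filter, sort by priority, then compute efficiency) could look like the following sketch. The filter rule, the convention that a lower `priority` value is considered first, and the derivation of `E_i` from power in watts are all assumptions; the diagram specifies only the step names and the J/ms unit.

```python
from dataclasses import dataclass, replace
from typing import List


@dataclass(frozen=True)
class Device:
    name: str                     # hypothetical label
    max_memory: float             # M_i^max
    power_w: float                # P_i, assumed to be in watts
    priority: int                 # priority_i, assumed: lower value = considered first
    energy_j_per_ms: float = 0.0  # E_i, filled in by preprocess()


def preprocess(devices: List[Device]) -> List[Device]:
    # Filter: drop devices that cannot hold anything (assumed filter rule).
    usable = [d for d in devices if d.max_memory > 0]
    # Sort by priority; sorted() is stable, so ties keep their input order.
    ordered = sorted(usable, key=lambda d: d.priority)
    # Efficiency in J/ms derived from power: 1 W = 1 J/s = 0.001 J/ms.
    return [replace(d, energy_j_per_ms=d.power_w / 1000.0) for d in ordered]
```

Because `Device` is frozen, `preprocess` returns new records and leaves the input list untouched.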

### Interpretation

The engine demonstrates a systematic approach to energy-aware resource allocation:

1. **Priority Filtering**: Ensures high-priority devices are considered first, potentially overriding raw efficiency metrics.

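2. **Greedy Optimization**: Assigns each decoder layer to the option with the lowest immediate energy cost. Decoder layers likely account for most of the model's computation, which would explain why they are the focus of this step.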

3. **Constraint Enforcement**: Prevents over-allocation (e.g., exceeding device memory).

4. **Final Allocation**: Balances energy efficiency with operational constraints, producing a practical deployment plan.
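The steps above can be sketched as a single function that mirrors stages A, B, and C of the layer assignment logic. This is an illustration under stated assumptions, not the engine's actual algorithm: the dictionary keys, the fixed `energy_per_layer` cost, and the rule that the embedding and LM head go to the first device in priority order are all hypothetical.

```python
from typing import Dict, List, Tuple

Layer = Tuple[str, float]  # (type_j, M_j)


def assign_layers(layers: List[Layer], devices: List[dict]) -> List[Tuple[int, str]]:
    """devices must already be in priority order; dict keys are hypothetical."""
    free: Dict[str, float] = {d["name"]: d["max_memory"] for d in devices}
    cost: Dict[str, float] = {d["name"]: d["energy_per_layer"] for d in devices}
    allocation: Dict[int, str] = {}

    def fitting(memory: float) -> List[str]:
        # Devices with enough free memory, in priority order.
        return [name for name in free if free[name] >= memory]

    def place(index: int, candidates: List[str]) -> None:
        if not candidates:
            raise ValueError(f"no device has room for layer {index}")
        chosen = candidates[0]
        allocation[index] = chosen
        free[chosen] -= layers[index][1]

    # A. Embedding and LM head go to the first device in priority order that fits.
    for index, (kind, memory) in enumerate(layers):
        if kind in ("embed", "lm_head"):
            place(index, fitting(memory))

    # B. Greedy: each decoder layer goes to the cheapest device that still fits.
    for index, (kind, memory) in enumerate(layers):
        if kind == "decoder":
            place(index, sorted(fitting(memory), key=lambda name: cost[name]))

    # C. Final check: no device may end up over its memory budget.
    if any(remaining < 0 for remaining in free.values()):
        raise ValueError("memory constraint violated")
    return sorted(allocation.items())


devices = [
    {"name": "npu", "max_memory": 3.0, "energy_per_layer": 1.0},
    {"name": "gpu", "max_memory": 8.0, "energy_per_layer": 4.0},
]
layers = [("embed", 1.0), ("decoder", 1.0), ("decoder", 1.0), ("decoder", 1.0), ("lm_head", 1.0)]
print(assign_layers(layers, devices))
# [(0, 'npu'), (1, 'npu'), (2, 'gpu'), (3, 'gpu'), (4, 'npu')]
```

In this toy run the low-energy device fills up after one decoder layer, so the remaining decoder layers spill over to the more expensive one.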

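The diagram contains no numerical values, which suggests it is meant to document workflow logic rather than quantitative results. The use of greedy optimization implies a trade-off between optimality and computational simplicity: a greedy pass is cheap to run but is not guaranteed to reach the global minimum of `ΣE_stage_i`.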
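The trade-off can be seen on a toy instance. The sketch below compares a greedy pass with an exhaustive search under an assumed cost model (energy = layer compute × a per-device rate); the model, the tuple layouts, and the numbers are placeholders, not values from the diagram.

```python
from itertools import product
from typing import List, Tuple

Layer = Tuple[float, float]   # (memory, compute), placeholder units
Device = Tuple[float, float]  # (max_memory, energy per unit of compute)


def greedy_energy(layers: List[Layer], devices: List[Device]) -> float:
    """Place layers in order, each on the cheapest device that still has room."""
    free = [max_memory for max_memory, _ in devices]
    total = 0.0
    for memory, compute in layers:
        options = [i for i in range(len(devices)) if free[i] >= memory]
        if not options:
            raise ValueError("layer does not fit on any device")
        best = min(options, key=lambda i: compute * devices[i][1])
        free[best] -= memory
        total += compute * devices[best][1]
    return total


def optimal_energy(layers: List[Layer], devices: List[Device]) -> float:
    """Try every assignment and keep the cheapest one that respects memory."""
    best = float("inf")
    for choice in product(range(len(devices)), repeat=len(layers)):
        used = [0.0] * len(devices)
        for (memory, _), i in zip(layers, choice):
            used[i] += memory
        if all(u <= devices[i][0] for i, u in enumerate(used)):
            energy = sum(compute * devices[i][1] for (_, compute), i in zip(layers, choice))
            best = min(best, energy)
    return best


layers = [(2.0, 1.0), (2.0, 10.0)]
devices = [(2.0, 1.0), (10.0, 2.0)]
print(greedy_energy(layers, devices))   # 21.0
print(optimal_energy(layers, devices))  # 12.0
```

Greedy spends the small, efficient device on the light first layer, forcing the heavy second layer onto the expensive device. The exhaustive search avoids this but its cost grows exponentially with the number of layers, which is the simplicity side of the trade-off.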