## Line Charts: Comparison of Reinforcement Learning Baselines

### Overview

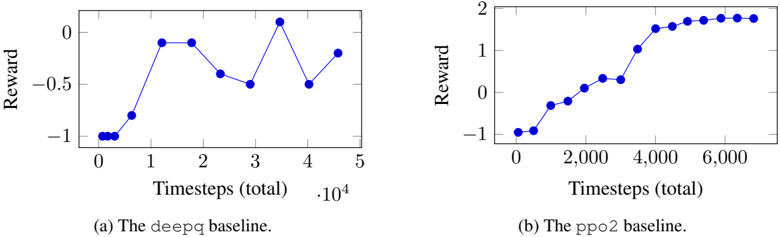

The image contains two side-by-side line charts comparing the learning performance of two reinforcement learning algorithms. The left chart (a) shows the performance of a "deepq" baseline, and the right chart (b) shows the performance of a "ppo2" baseline. Both plot "Reward" against "Timesteps (total)".

### Components/Axes

**Common Elements:**

* **Chart Type:** Line chart with circular data point markers.

* **Line/Marker Color:** Blue for both charts.

* **X-Axis Title:** "Timesteps (total)" for both.

* **Y-Axis Title:** "Reward" for both.

* **Captions:** Located directly below each chart, serving as the legend/title for each data series.

**Chart (a) - Left:**

* **Caption/Label:** "(a) The `deepq` baseline."

* **X-Axis Scale:** Linear scale from 0 to 5, with a multiplier of `·10^4` (i.e., values represent 0 to 50,000 timesteps). Major ticks at 0, 1, 2, 3, 4, 5.

* **Y-Axis Scale:** Linear scale from -1.0 to 0.5. Major ticks at -1.0, -0.5, 0.0, 0.5.

**Chart (b) - Right:**

* **Caption/Label:** "(b) The `ppo2` baseline."

* **X-Axis Scale:** Linear scale from 0 to 6000. Major ticks at 0, 2000, 4000, 6000.

* **Y-Axis Scale:** Linear scale from -1 to 2. Major ticks at -1, 0, 1, 2.

### Detailed Analysis

**Chart (a): The `deepq` baseline.**

* **Trend Verification:** The line shows high volatility. It starts at a low reward, rises sharply, then enters a phase of significant oscillation with peaks and troughs.

* **Data Point Extraction (Approximate):**

  * (0, -1.0)
  * (~0.2 × 10^4, -1.0)
  * (~0.5 × 10^4, -0.8)
  * (~1.2 × 10^4, -0.1)
  * (~1.8 × 10^4, -0.1)
  * (~2.3 × 10^4, -0.4)
  * (~2.8 × 10^4, -0.5)
  * (~3.5 × 10^4, +0.1) **(Peak)**
  * (~4.0 × 10^4, -0.5)
  * (~4.5 × 10^4, -0.2)

**Chart (b): The `ppo2` baseline.**

* **Trend Verification:** The line shows a smooth, generally upward trend that plateaus. It starts low, increases steadily, and then levels off at a high reward value.

* **Data Point Extraction (Approximate):**

  * (0, -1.0)
  * (~500, -0.9)
  * (~1000, -0.3)
  * (~1500, -0.2)
  * (~2000, +0.3)
  * (~2500, +0.3)
  * (~3000, +1.0)
  * (~3500, +1.5)
  * (~4000, +1.6)
  * (~4500, +1.7)
  * (~5000, +1.7)
  * (~5500, +1.8)
  * (~6000, +1.8) **(Plateau)**
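The extracted points can be checked mechanically rather than by eye. The following is a minimal sketch, assuming the approximate values listed above are accurate enough for a qualitative comparison; the names `DEEPQ`, `PPO2`, and `summarize` are illustrative and do not come from the figure or its source.

```python
# Approximate (timestep, reward) pairs transcribed from the lists above.
# Chart (a) x-values are already multiplied by the 10^4 axis factor.
DEEPQ = [
    (0, -1.0), (2_000, -1.0), (5_000, -0.8), (12_000, -0.1), (18_000, -0.1),
    (23_000, -0.4), (28_000, -0.5), (35_000, 0.1), (40_000, -0.5), (45_000, -0.2),
]
PPO2 = [
    (0, -1.0), (500, -0.9), (1_000, -0.3), (1_500, -0.2), (2_000, 0.3),
    (2_500, 0.3), (3_000, 1.0), (3_500, 1.5), (4_000, 1.6), (4_500, 1.7),
    (5_000, 1.7), (5_500, 1.8), (6_000, 1.8),
]


def summarize(points):
    """Return simple shape statistics for one learning curve."""
    rewards = [reward for _, reward in points]
    # Change in reward between consecutive extracted points.
    deltas = [b - a for a, b in zip(rewards, rewards[1:])]
    return {
        "final": rewards[-1],
        "best": max(rewards),
        # Number of consecutive-point steps where the reward decreased.
        "drops": sum(1 for d in deltas if d < 0),
        # Most negative single step (0.0 if the curve never decreases).
        "largest_drop": min(deltas + [0.0]),
    }


for name, points in (("deepq", DEEPQ), ("ppo2", PPO2)):
    print(name, summarize(points))
```

On these approximate points, the `deepq` curve decreases on three steps (the largest drop is about 0.6, right after its peak), while the `ppo2` curve never decreases.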

### Key Observations

1. **Performance Stability:** The `deepq` baseline (a) exhibits unstable learning: after an initial improvement, the reward swings between roughly -0.5 and +0.1. The `ppo2` baseline (b) improves steadily, with no visible drop between extracted points, until it plateaus near 1.8.

2. **Final Performance:** The `ppo2` algorithm reaches a much higher final reward (~1.8) than the `deepq` algorithm, which is still negative (~-0.2) at its last measured point (~45,000 timesteps).

3. **Learning Speed:** `ppo2` shows consistent progress over its 6000 timesteps. `deepq` shows rapid initial learning within the first ~12,000 timesteps but fails to maintain or build upon that progress.

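4. **Scale Difference:** The x-axis ranges differ by roughly a factor of eight (`deepq` up to 50,000 timesteps, `ppo2` up to 6,000 timesteps). Because `ppo2` reaches a higher reward within the shorter range, it may be more sample-efficient in this context, provided both axes count timesteps the same way.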
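One way to make the sample-efficiency observation concrete is to ask how many timesteps each curve needs to first reach a given reward. The sketch below linearly interpolates between the approximate points extracted earlier; the helper `first_crossing` and the chosen thresholds are illustrative assumptions, and the comparison only holds if both x-axes count timesteps the same way, which the figure does not confirm.

```python
# Approximate (timestep, reward) pairs read from charts (a) and (b).
DEEPQ = [
    (0, -1.0), (2_000, -1.0), (5_000, -0.8), (12_000, -0.1), (18_000, -0.1),
    (23_000, -0.4), (28_000, -0.5), (35_000, 0.1), (40_000, -0.5), (45_000, -0.2),
]
PPO2 = [
    (0, -1.0), (500, -0.9), (1_000, -0.3), (1_500, -0.2), (2_000, 0.3),
    (2_500, 0.3), (3_000, 1.0), (3_500, 1.5), (4_000, 1.6), (4_500, 1.7),
    (5_000, 1.7), (5_500, 1.8), (6_000, 1.8),
]


def first_crossing(points, threshold):
    """Interpolated timestep at which the reward first reaches `threshold`.

    Returns None if the curve never reaches the threshold.
    """
    if points[0][1] >= threshold:
        return float(points[0][0])
    for (t0, r0), (t1, r1) in zip(points, points[1:]):
        if r0 < threshold <= r1:
            # Linear interpolation inside the segment that crosses the threshold.
            return t0 + (t1 - t0) * (threshold - r0) / (r1 - r0)
    return None


for threshold in (-0.1, 0.5):
    print(threshold, first_crossing(DEEPQ, threshold), first_crossing(PPO2, threshold))
```

With these points, `deepq` first reaches a reward of -0.1 at about 12,000 timesteps and `ppo2` at about 1,600; `deepq` never reaches 0.5 within the plotted range, so `first_crossing` returns `None` for it.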

### Interpretation

This visual comparison benchmarks two reinforcement learning algorithms. The labels `deepq` and `ppo2` match the module names of the Deep Q-Network and Proximal Policy Optimization implementations in OpenAI Baselines, so the runs were likely produced with that library. The data suggests that, for the given task, `ppo2` outperforms `deepq` in two respects: **stability** and **asymptotic performance**.

The `deepq` chart's volatility could stem from poorly tuned hyperparameters, overestimation bias in Q-learning, or instability in the learning process itself; the figure alone cannot distinguish between these causes. The `ppo2` chart's smooth curve is consistent with how PPO works: it is a policy gradient method that optimizes a clipped surrogate objective, which limits how far the policy can move in a single update and tends to make updates more reliable.
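For reference, the surrogate objective mentioned above is usually written in the clipped form below. This is the standard formulation of PPO, not something read from the figure; the source does not state which variant or which clipping parameter was used in these runs, so the symbols are the conventional ones rather than values from this experiment.

```latex
L^{\mathrm{CLIP}}(\theta)
  = \hat{\mathbb{E}}_t\!\left[
      \min\!\Big( r_t(\theta)\,\hat{A}_t,\;
                  \operatorname{clip}\big(r_t(\theta),\, 1-\epsilon,\, 1+\epsilon\big)\,\hat{A}_t \Big)
    \right],
\qquad
r_t(\theta) = \frac{\pi_\theta(a_t \mid s_t)}{\pi_{\theta_{\mathrm{old}}}(a_t \mid s_t)}
```

Here $\hat{A}_t$ is an advantage estimate and $\epsilon$ is the clipping parameter. Taking the minimum removes the incentive to push the probability ratio $r_t(\theta)$ outside $[1-\epsilon,\, 1+\epsilon]$, which is the mechanism behind the bounded policy updates.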

The contrast suggests that `ppo2` is the more robust and effective choice for this specific problem. Each chart shows only a single curve, so the comparison is indicative rather than conclusive. A reasonable next step is either to focus further development on `ppo2` or to investigate the causes of `deepq`'s instability before relying on it as a baseline. Beyond the final numbers, the charts show how each algorithm learns: one oscillates, the other converges.